ELK: collecting Redis (error and warning) logs with Filebeat

2019-04-15 14:27


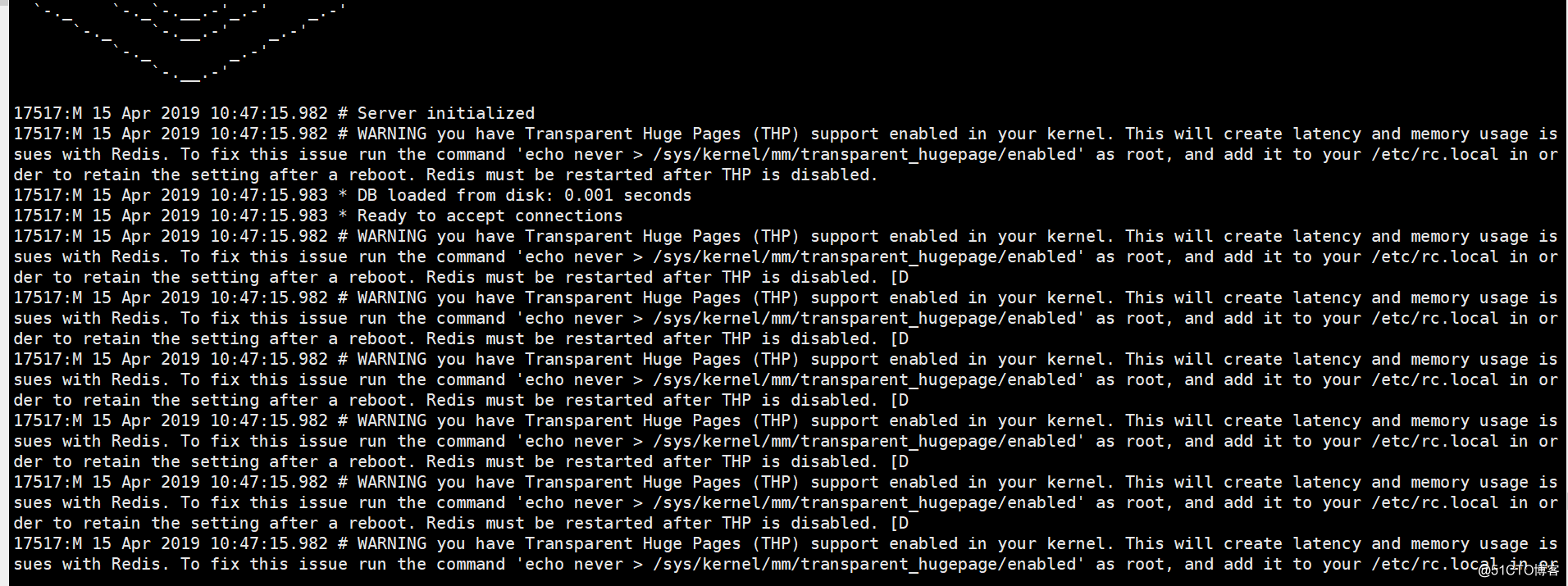

Processing and displaying Redis logs

Only the Redis warning and error logs need to be collected.

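Filebeat setting: include_lines: ["WARNING","ERROR"]. include_lines is a list of regular expressions that match the lines you want Filebeat to include. Filebeat exports only the lines that match a regular expression in the list. By default, all lines are exported. Reference: https://www.elastic.co/guide/en/beats/filebeat/current/configuration-filebeat-options.html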
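The effect of include_lines can be illustrated outside Filebeat with a small Python sketch. It is only an approximation: Filebeat evaluates Go regular expressions, and the helper name `include_lines` as well as the sample log lines below are made up for illustration.

```python
import re


def include_lines(lines, patterns):
    """Keep only the lines that match at least one regular expression.

    An empty pattern list keeps every line, mirroring Filebeat's default.
    """
    if not patterns:
        return list(lines)
    compiled = [re.compile(p) for p in patterns]
    return [line for line in lines if any(rx.search(line) for rx in compiled)]


# Hypothetical Redis log lines, for illustration only.
sample = [
    "1234:M 15 Apr 14:27:01.123 * Ready to accept connections",
    "1234:M 15 Apr 14:27:01.124 # WARNING overcommit_memory is set to 0!",
    "1234:M 15 Apr 14:27:05.000 # ERROR example failure message",
]

kept = include_lines(sample, ["WARNING", "ERROR"])
for line in kept:
    print(line)
```

Matching is case-sensitive here, so a line containing only "Warning" would be dropped by these two patterns.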

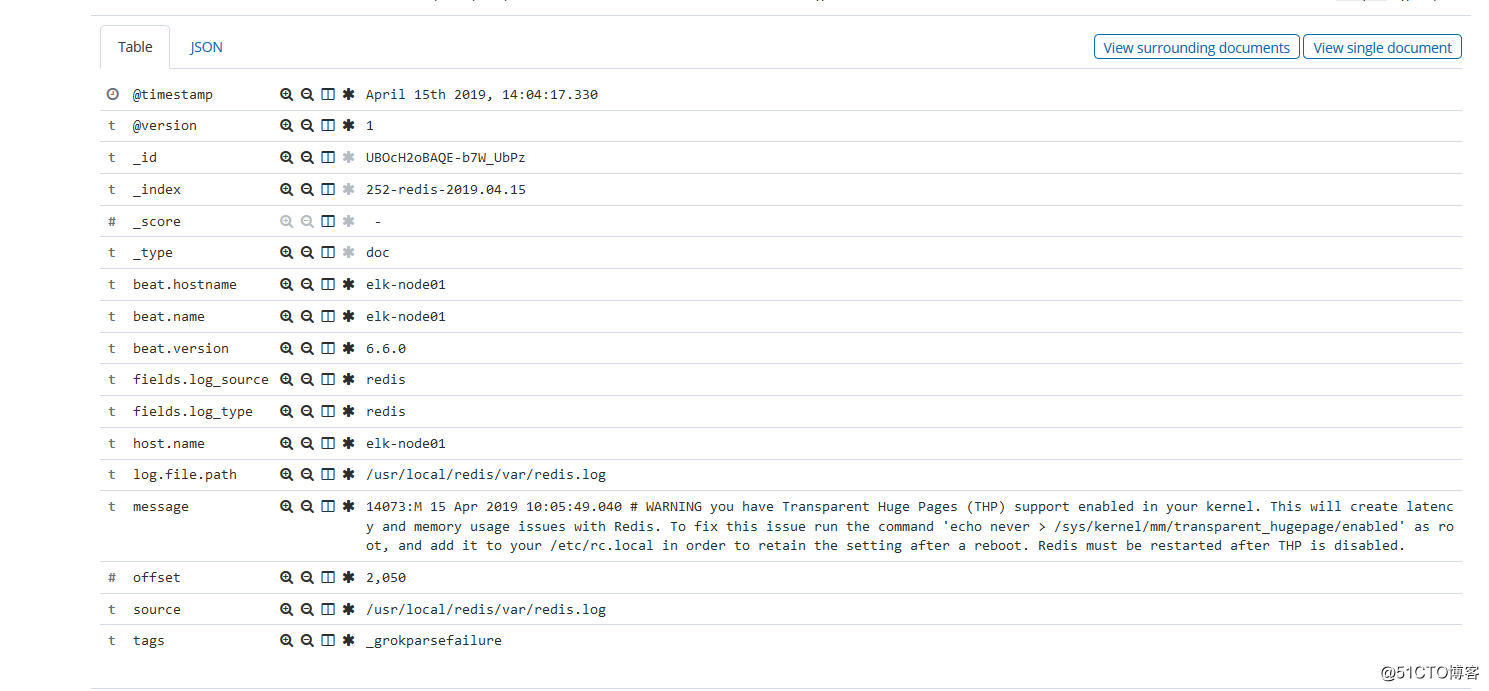

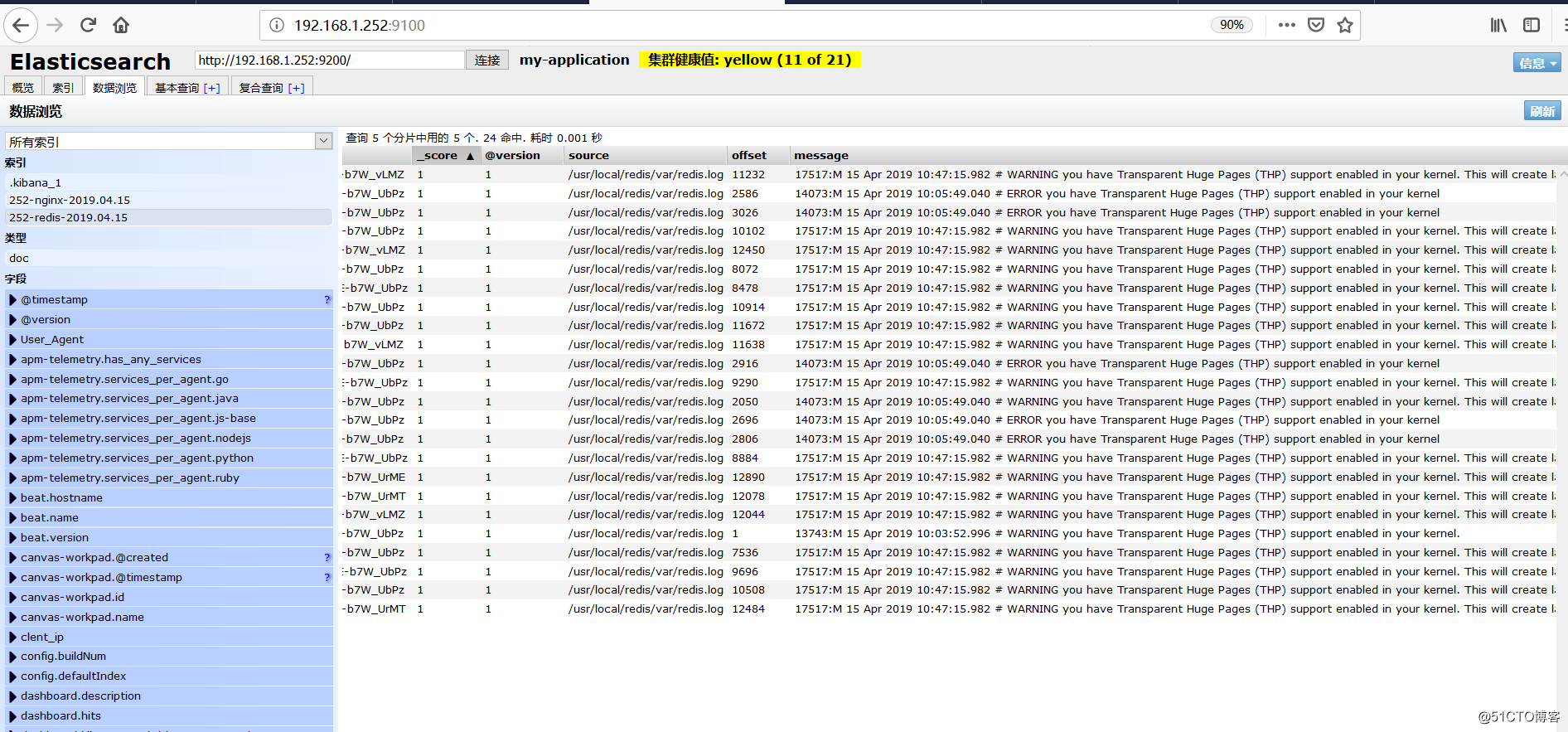

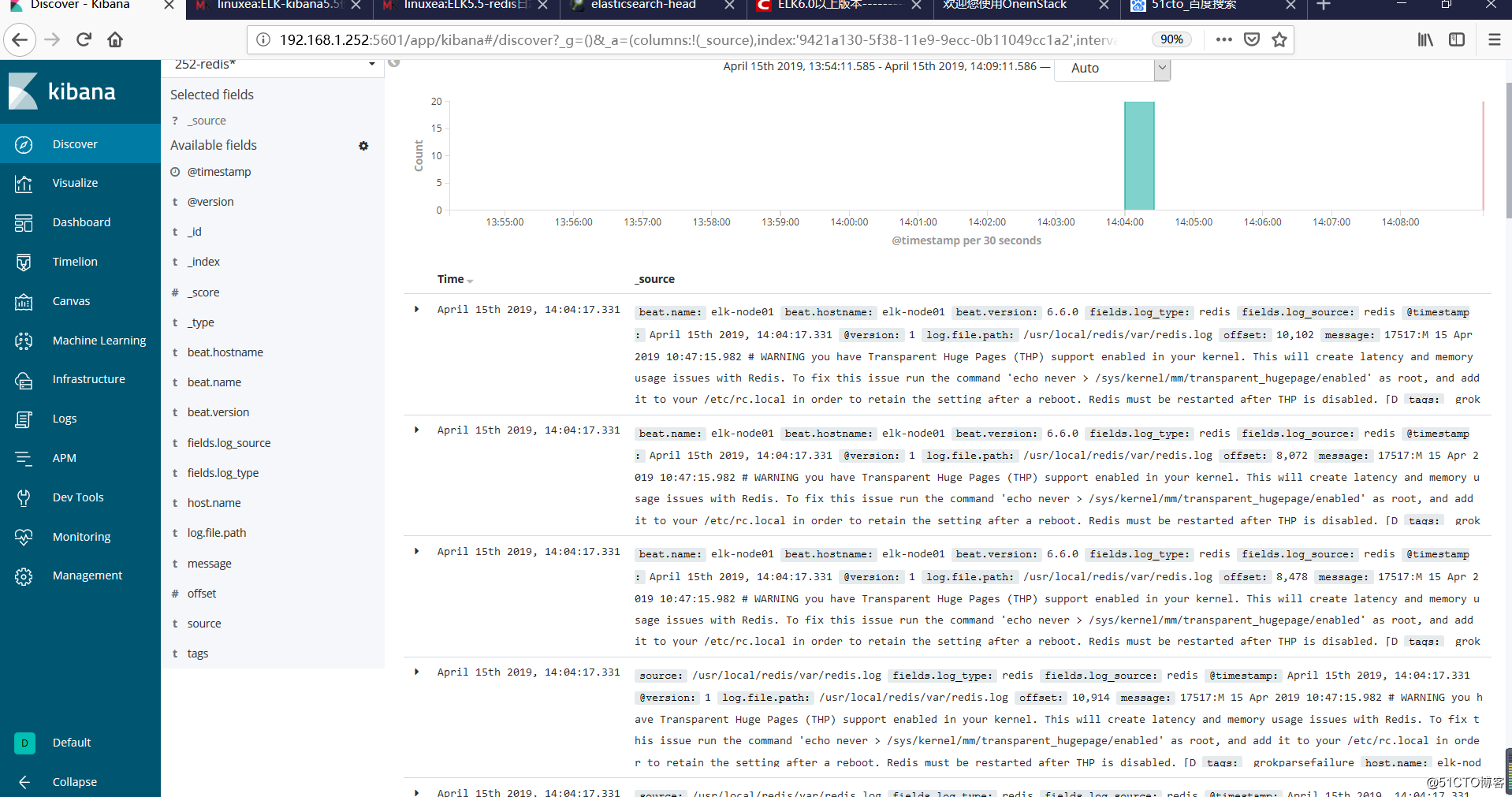

Results as displayed in Kibana

Installing and configuring Filebeat

    [root@elk-node01 var]# wget https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-6.6.0-x86_64.rpm
    [root@elk-node01 var]# yum install filebeat-6.6.0-x86_64.rpm
    [root@elk-node01 var]# cat /etc/filebeat/filebeat.yml
    # log_type is the main field used to tell the sources apart
    # Note: filebeat.prospectors and input_type still work in 6.x but are
    # deprecated in favor of filebeat.inputs and type
    filebeat.prospectors:
    - input_type: log
      paths:
        - /data/wwwlogs/access_nginx.log
      fields:
        log_source: nginx
        log_type: nginx
    - input_type: log
      paths:
        - /var/log/messages
      fields:
        log_source: messages
        log_type: messages
    - input_type: log
      paths:
        - /usr/local/redis/var/redis.log
      include_lines: ["WARNING","ERROR"]
      fields:
        log_source: redis
        log_type: redis
    output.redis:
      hosts: ["127.0.0.1:6379"]
      key: "defaul_list"
      db: 5
      timeout: 5
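Because every input attaches its own fields.log_type, a consumer of the Redis list can tell the sources apart once the JSON is decoded. The sketch below assumes a heavily simplified event (real Filebeat events carry more metadata) and a hypothetical helper `log_type_of`. It only mirrors the kind of lookup that a `[fields][log_type]` condition performs.

```python
import json


def log_type_of(raw_event):
    """Return fields.log_type from a JSON-encoded event, or None if absent."""
    event = json.loads(raw_event)
    return event.get("fields", {}).get("log_type")


# Simplified, hypothetical event as it might sit in the Redis list.
raw = json.dumps({
    "message": "1234:M 15 Apr 14:27:01.124 # WARNING overcommit_memory is set to 0!",
    "fields": {"log_source": "redis", "log_type": "redis"},
})

print(log_type_of(raw))
```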

Logstash configuration

    input {
      redis {
        key => "defaul_list"
        data_type => "list"
        db => "5"
        host => "127.0.0.1"
        port => "6379"
        threads => "5"
        codec => "json"
      }
    }

    filter {
      if [fields][log_type] == "redis" {
        grok {
          patterns_dir => "/data/elk-services/logstash/patterns.d"
          match => { "message" => "%{REDISLOG}" }
        }
        mutate {
          # Replace the Redis level symbols and role letters with readable names
          gsub => [
            "loglevel", "\.", "debug",
            "loglevel", "\-", "verbose",
            "loglevel", "\*", "notice",
            "loglevel", "\#", "warning",
            "role", "X", "sentinel",
            "role", "C", "RDB/AOF writing child",
            "role", "S", "slave",
            "role", "M", "master"
          ]
        }
        date {
          match => [ "timestamp" , "dd MMM HH:mm:ss.SSS" ]
          target => "@timestamp"
          remove_field => [ "timestamp" ]
        }
      }
      if [fields][log_type] == "nginx" {
        grok {
          patterns_dir => [ "/data/elk-services/logstash/patterns.d" ]
          match => { "message" => "%{NGINXACCESS}" }
          overwrite => [ "message" ]
        }
        geoip {
          source => "clent_ip"
          target => "geoip"
          database => "/data/soft/GeoLite2-City_20190409/GeoLite2-City.mmdb"
        }
        useragent {
          source => "User_Agent"
          target => "userAgent"
        }
        urldecode {
          all_fields => true
        }
        mutate {
          gsub => ["User_Agent","[\"]",""] # strip the double quotes from User_Agent
          convert => {
            "response" => "integer"
            "body_bytes_sent" => "integer"
            "bytes_sent" => "integer"
            "upstream_response_time" => "float"
            "upstream_status" => "integer"
            "request_time" => "float"
            "port" => "integer"
          }
        }
        date {
          # The NGINXACCESS pattern shown later stores the request time in log_date,
          # so this match only takes effect if a timestamp field exists
          match => [ "timestamp" , "dd/MMM/YYYY:HH:mm:ss Z" ]
        }
      }
    }

    output {
      if [fields][log_type] == "redis" {
        elasticsearch {
          hosts => ["192.168.1.252:9200"]
          index => "252-redis-%{+YYYY.MM.dd}"
          action => "index"
        }
      }
      if [fields][log_type] == "nginx" {
        elasticsearch {
          hosts => ["192.168.1.252:9200"]
          index => "252-nginx-%{+YYYY.MM.dd}"
          action => "index"
        }
      }
    }
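The %{+YYYY.MM.dd} part of each index name is filled in from the event's @timestamp (in UTC), so every log type gets one index per day. Events with any other log_type, such as messages, match neither condition and are not written anywhere. A rough Python sketch of that naming rule, where `index_for` and `INDEX_PREFIXES` are hypothetical names used only for illustration:

```python
from datetime import datetime, timezone

# Prefixes copied from the two elasticsearch outputs in the configuration.
INDEX_PREFIXES = {"redis": "252-redis-", "nginx": "252-nginx-"}


def index_for(log_type, timestamp):
    """Return the daily index name for an event, or None if it has no output."""
    prefix = INDEX_PREFIXES.get(log_type)
    if prefix is None:
        return None
    # The date suffix is always computed in UTC.
    return prefix + timestamp.astimezone(timezone.utc).strftime("%Y.%m.%d")


ts = datetime(2019, 4, 15, 14, 27, tzinfo=timezone.utc)
print(index_for("redis", ts))     # 252-redis-2019.04.15
print(index_for("messages", ts))  # None
```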

Viewing the nginx and Redis patterns

    [root@elk-node01 var]# cat /data/elk-services/logstash/patterns.d/redis
    REDISTIMESTAMP %{MONTHDAY} %{MONTH} %{TIME}
    REDISLOG %{POSINT:pid}\:%{WORD:role} %{REDISTIMESTAMP:timestamp} %{DATA:loglevel} %{GREEDYDATA:msg}
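To see roughly what the REDISLOG pattern and the gsub step in the Logstash filter do to a line, here is an approximate Python equivalent. The regular expression is a hand-written stand-in for the grok pattern (not an exact translation), the level names follow the Redis symbols (. debug, - verbose, * notice, # warning), and the sample line and the `parse_redis_line` helper are assumptions for illustration.

```python
import re

# Hand-written approximation of REDISLOG: pid:role, timestamp, level symbol, message.
REDIS_LINE = re.compile(
    r"(?P<pid>\d+):(?P<role>\w+) "
    r"(?P<timestamp>\d{1,2} [A-Za-z]{3} \d{2}:\d{2}:\d{2}\.\d{3}) "
    r"(?P<loglevel>\S+) "
    r"(?P<msg>.*)"
)

LEVELS = {".": "debug", "-": "verbose", "*": "notice", "#": "warning"}
ROLES = {"X": "sentinel", "C": "RDB/AOF writing child", "S": "slave", "M": "master"}


def parse_redis_line(line):
    """Split a Redis log line into fields and expand the symbols, or return None."""
    match = REDIS_LINE.match(line)
    if match is None:
        return None
    event = match.groupdict()
    # Unknown symbols or letters are left unchanged.
    event["loglevel"] = LEVELS.get(event["loglevel"], event["loglevel"])
    event["role"] = ROLES.get(event["role"], event["role"])
    return event


# Hypothetical sample line in the year-less timestamp format the pattern expects.
event = parse_redis_line(
    "1234:M 15 Apr 14:27:01.124 # WARNING overcommit_memory is set to 0!"
)
print(event)
```

Lines that do not fit the layout return None here. In Logstash, the corresponding events would instead be tagged with _grokparsefailure.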

    [root@elk-node01 var]# cat /data/elk-services/logstash/patterns.d/nginx
    NGUSERNAME [a-zA-Z\.\@\-\+_%]+
    NGUSER %{NGUSERNAME}
    NGINXACCESS %{IP:clent_ip} (?:-|%{USER:ident}) \[%{HTTPDATE:log_date}\] \"%{WORD:http_verb} (?:%{PATH:baseurl}\?%{NOTSPACE:params}(?: HTTP/%{NUMBER:http_version})?|%{DATA:raw_http_request})\" (%{IPORHOST:url_domain}|%{URIHOST:ur_domain}|-)\[(%{BASE16FLOAT:request_time}|-)\] %{NOTSPACE:request_body} %{QS:referrer_rul} %{GREEDYDATA:User_Agent} \[%{GREEDYDATA:ssl_protocol}\] \[(?:%{GREEDYDATA:ssl_cipher}|-)\]\[%{NUMBER:time_duration}\] \[%{NUMBER:http_status_code}\] \[(%{BASE10NUM:upstream_status}|-)\] \[(%{NUMBER:upstream_response_time}|-)\] \[(%{URIHOST:upstream_addr}|-)\]

For the nginx configuration, see: https://blog.51cto.com/9025736/2377352

Starting the services and checking the data

    [root@elk-node01 config]# /etc/init.d/filebeat restart
    Restarting filebeat (via systemctl): [ OK ]
    [root@elk-node01 config]# ../bin/logstash -f filebeat-nginx-redis.yml
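Besides looking in Kibana, one way to confirm that the daily indices are being created is to ask Elasticsearch's _cat/indices API directly. The sketch below is an assumption-laden example: it reuses the host from the Logstash output, assumes plain HTTP without authentication, and the helper names `cat_indices_url` and `list_indices` are hypothetical.

```python
from urllib.parse import quote
from urllib.request import urlopen

ES_HOST = "http://192.168.1.252:9200"  # host taken from the Logstash output section


def cat_indices_url(pattern, host=ES_HOST):
    """Build the _cat/indices URL for an index pattern such as 252-redis-*."""
    return f"{host}/_cat/indices/{quote(pattern, safe='*-')}?v"


def list_indices(pattern, host=ES_HOST, timeout=5):
    """Fetch the plain-text index listing (needs network access to the cluster)."""
    with urlopen(cat_indices_url(pattern, host), timeout=timeout) as resp:
        return resp.read().decode("utf-8")


print(cat_indices_url("252-redis-*"))
```

Calling list_indices("252-redis-*") from a machine that can reach the cluster should list one index per day once Redis warnings or errors have been shipped.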
