Syncing Oracle Data to Hive with Sqoop

2019-03-19 10:33


Copyright notice: this is an original article by the blogger and may not be reproduced without the blogger's permission. https://blog.csdn.net/qq_28356739/article/details/88656516

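I. Introduction

Data in an Oracle relational database can be imported into HDFS with either Sqoop or OGG (Oracle GoldenGate). Compared with Oracle GoldenGate, Sqoop needs no complex installation or configuration, transfers data efficiently, and also supports incremental synchronization.

Note: this test runs on a single-node, pseudo-distributed Hadoop environment, the one set up in my two earlier articles:

1. Hadoop+Hive+HBase+Kylin Pseudo-Distributed Installation Guide

2. Installing and Using sqoop1.4.7 (hadoop2.7 environment)

This document builds on those two articles and is a simple usage test of the environment from the second one. The errors hit along the way also expose problems left over from that installation. For incremental synchronization from Sqoop to Hive, see the test document I wrote after this one:

Incrementally Syncing Oracle Data to Hive with Sqoop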

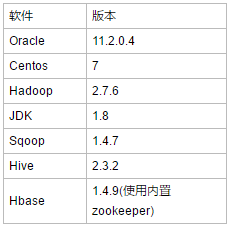

II. Environment Configuration

III. Procedure

1. Create and populate the test table on the Oracle source

-- create this table as the scott user

create table ora_hive(

empno number primary key,

ename varchar2(30),

hiredate date

);

-- populate the table with one row per day for roughly the last 1000 days

insert into ora_hive

select level, dbms_random.string('u', 20), sysdate - level

from dual

connect by level <= 1000;

commit;

-- the table now holds data from four years: 2019, 2018, 2017 and 2016
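The claim about four years of data can be checked with a per-year count. The sketch below is not from the original article: it only prints a query, and the function name `year_count_sql` is made up for illustration.

```shell
# Print a query that counts ORA_HIVE rows per hire year.
# Nothing is executed here; the function only emits SQL text.
year_count_sql() {
  cat <<'SQL'
select to_char(hiredate, 'YYYY') as hire_year, count(*) as row_count
from ora_hive
group by to_char(hiredate, 'YYYY')
order by hire_year;
SQL
}
```

To run it, pipe the output into a SQL client, for example `year_count_sql | sqlplus -s scott/tiger@orcl`. The connect identifier `orcl` is an assumption here and must resolve on the machine where the client runs.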

2. Create the target table in Hive

Switch to the Hive directory:

[root@hadoop ~]# cd /hadoop/hive/

[root@hadoop hive]# cd bin

[root@hadoop bin]# ./hive

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/hadoop/hive/lib/log4j-slf4j-impl-2.6.2.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

Logging initialized using configuration in jar:file:/hadoop/hive/lib/hive-common-2.3.2.jar!/hive-log4j2.properties Async: true

Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

hive> show databases;

OK

default

sbux

Time taken: 1.508 seconds, Fetched: 2 row(s)

hive> create database oracle;

OK

Time taken: 1.729 seconds

hive> show databases;

OK

default

oracle

sbux

Time taken: 0.026 seconds, Fetched: 3 row(s)

hive> use oracle;

OK

Time taken: 0.094 seconds

hive> create table ora_hive(

> empno int,

> ename string,

> hiredate date

> );

OK

Time taken: 0.744 seconds

hive> show tables;
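The same statements can be kept in a script so the setup is repeatable. The following is only a sketch: the function name `target_table_hql` is hypothetical, and the `if not exists` clauses are an addition that the interactive session above does not use.

```shell
# Print the HiveQL from the interactive session as a rerunnable script.
# "if not exists" is added so a second run does not fail on existing objects.
target_table_hql() {
  cat <<'HQL'
create database if not exists oracle;
use oracle;
create table if not exists ora_hive (
  empno int,
  ename string,
  hiredate date
);
show tables;
HQL
}
```

One way to use it is `target_table_hql > create_ora_hive.hql` followed by `/hadoop/hive/bin/hive -f create_ora_hive.hql`; the file name is arbitrary.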

3. Import the data:

[root@hadoop bin]# sqoop import --connect jdbc:oracle:thin:@192.168.1.6:1521:orcl --username scott --password tiger --table ORA_HIVE -m 1 --hive-import --hive-database oracle

Warning: /hadoop/sqoop/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

Warning: /hadoop/sqoop/../zookeeper does not exist! Accumulo imports will fail.

Please set $ZOOKEEPER_HOME to the root of your Zookeeper installation.

19/03/12 14:38:11 INFO sqoop.Sqoop: Running Sqoop version: 1.4.7

19/03/12 14:38:11 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.

19/03/12 14:38:11 INFO tool.BaseSqoopTool: Using Hive-specific delimiters for output. You can override

19/03/12 14:38:11 INFO tool.BaseSqoopTool: delimiters with --fields-terminated-by, etc.

19/03/12 14:38:12 INFO oracle.OraOopManagerFactory: Data Connector for Oracle and Hadoop is disabled.

19/03/12 14:38:12 INFO manager.SqlManager: Using default fetchSize of 1000

19/03/12 14:38:12 INFO tool.CodeGenTool: Beginning code generation

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/hadoop/hbase/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/hadoop/hive/lib/log4j-slf4j-impl-2.6.2.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

19/03/12 14:38:12 INFO manager.OracleManager: Time zone has been set to GMT

19/03/12 14:38:12 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM ORA_HIVE t WHERE 1=0

19/03/12 14:38:12 INFO orm.CompilationManager: HADOOP_MAPRED_HOME is /hadoop

Note: /tmp/sqoop-root/compile/e198fef468abdfcbc531a36035dd6646/ORA_HIVE.java uses or overrides a deprecated API.

Note: Recompile with -Xlint:deprecation for details.

19/03/12 14:38:16 INFO orm.CompilationManager: Writing jar file: /tmp/sqoop-root/compile/e198fef468abdfcbc531a36035dd6646/ORA_HIVE.jar

19/03/12 14:38:16 INFO manager.OracleManager: Time zone has been set to GMT

19/03/12 14:38:16 INFO manager.OracleManager: Time zone has been set to GMT

19/03/12 14:38:16 INFO mapreduce.ImportJobBase: Beginning import of ORA_HIVE

19/03/12 14:38:17 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar

19/03/12 14:38:17 INFO manager.OracleManager: Time zone has been set to GMT

19/03/12 14:38:18 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps

19/03/12 14:38:18 INFO client.RMProxy: Connecting to ResourceManager at /192.168.1.66:8032

19/03/12 14:38:21 INFO db.DBInputFormat: Using read commited transaction isolation

19/03/12 14:38:21 INFO mapreduce.JobSubmitter: number of splits:1

19/03/12 14:38:22 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1552371714699_0001

19/03/12 14:38:23 INFO impl.YarnClientImpl: Submitted application application_1552371714699_0001

19/03/12 14:38:23 INFO mapreduce.Job: The url to track the job: http://hadoop:8088/proxy/application_1552371714699_0001/

19/03/12 14:38:23 INFO mapreduce.Job: Running job: job_1552371714699_0001

19/03/12 14:38:35 INFO mapreduce.Job: Job job_1552371714699_0001 running in uber mode : false

19/03/12 14:38:35 INFO mapreduce.Job: map 0% reduce 0%

19/03/12 14:38:42 INFO mapreduce.Job: map 100% reduce 0%

19/03/12 14:38:43 INFO mapreduce.Job: Job job_1552371714699_0001 completed successfully

19/03/12 14:38:44 INFO mapreduce.Job: Counters: 30

File System Counters

FILE: Number of bytes read=0

FILE: Number of bytes written=140340

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=87

HDFS: Number of bytes written=46893

HDFS: Number of read operations=4

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Other local map tasks=1

Total time spent by all maps in occupied slots (ms)=4625

Total time spent by all reduces in occupied slots (ms)=0

Total time spent by all map tasks (ms)=4625

Total vcore-milliseconds taken by all map tasks=4625

Total megabyte-milliseconds taken by all map tasks=4736000

Map-Reduce Framework

Map input records=1000

Map output records=1000

Input split bytes=87

Spilled Records=0

Failed Shuffles=0

Merged Map outputs=0

GC time elapsed (ms)=101

CPU time spent (ms)=2730

Physical memory (bytes) snapshot=203558912

Virtual memory (bytes) snapshot=2137956352

Total committed heap usage (bytes)=100139008

File Input Format Counters

Bytes Read=0

File Output Format Counters

Bytes Written=46893

19/03/12 14:38:44 INFO mapreduce.ImportJobBase: Transferred 45.7939 KB in 25.921 seconds (1.7667 KB/sec)

19/03/12 14:38:44 INFO mapreduce.ImportJobBase: Retrieved 1000 records.

19/03/12 14:38:44 INFO mapreduce.ImportJobBase: Publishing Hive/Hcat import job data to Listeners for table ORA_HIVE

19/03/12 14:38:44 INFO manager.OracleManager: Time zone has been set to GMT

19/03/12 14:38:44 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM ORA_HIVE t WHERE 1=0

19/03/12 14:38:44 WARN hive.TableDefWriter: Column EMPNO had to be cast to a less precise type in Hive

19/03/12 14:38:44 WARN hive.TableDefWriter: Column HIREDATE had to be cast to a less precise type in Hive

19/03/12 14:38:44 INFO hive.HiveImport: Loading uploaded data into Hive

19/03/12 14:38:44 INFO conf.HiveConf: Found configuration file file:/hadoop/hive/conf/hive-site.xml

2019-03-12 06:38:47,077 main ERROR Could not register mbeans java.security.AccessControlException: access denied ("javax.management.MBeanTrustPermission" "register")

at java.security.AccessControlContext.checkPermission(AccessControlContext.java:472)

at java.lang.SecurityManager.checkPermission(SecurityManager.java:585)

at com.sun.jmx.interceptor.DefaultMBeanServerInterceptor.checkMBeanTrustPermission(DefaultMBeanServerInterceptor.java:1848)

at com.sun.jmx.interceptor.DefaultMBeanServerInterceptor.registerMBean(DefaultMBeanServerInterceptor.java:322)

at com.sun.jmx.mbeanserver.JmxMBeanServer.registerMBean(JmxMBeanServer.java:522)

at org.apache.logging.log4j.core.jmx.Server.register(Server.java:380)

at org.apache.logging.log4j.core.jmx.Server.reregisterMBeansAfterReconfigure(Server.java:165)

at org.apache.logging.log4j.core.jmx.Server.reregisterMBeansAfterReconfigure(Server.java:138)

at org.apache.logging.log4j.core.LoggerContext.setConfiguration(LoggerContext.java:507)

at org.apache.logging.log4j.core.LoggerContext.start(LoggerContext.java:249)

at org.apache.logging.log4j.core.async.AsyncLoggerContext.start(AsyncLoggerContext.java:86)

at org.apache.logging.log4j.core.impl.Log4jContextFactory.getContext(Log4jContextFactory.java:239)

at org.apache.logging.log4j.core.config.Configurator.initialize(Configurator.java:157)

at org.apache.logging.log4j.core.config.Configurator.initialize(Configurator.java:130)

at org.apache.logging.log4j.core.config.Configurator.initialize(Configurator.java:100)

at org.apache.logging.log4j.core.config.Configurator.initialize(Configurator.java:187)

at org.apache.hadoop.hive.common.LogUtils.initHiveLog4jDefault(LogUtils.java:154)

at org.apache.hadoop.hive.common.LogUtils.initHiveLog4jCommon(LogUtils.java:90)

at org.apache.hadoop.hive.common.LogUtils.initHiveLog4jCommon(LogUtils.java:82)

at org.apache.hadoop.hive.common.LogUtils.initHiveLog4j(LogUtils.java:65)

at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:702)

at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:686)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.sqoop.hive.HiveImport.executeScript(HiveImport.java:331)

at org.apache.sqoop.hive.HiveImport.importTable(HiveImport.java:241)

at org.apache.sqoop.tool.ImportTool.importTable(ImportTool.java:537)

at org.apache.sqoop.tool.ImportTool.run(ImportTool.java:628)

at org.apache.sqoop.Sqoop.run(Sqoop.java:147)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:70)

at org.apache.sqoop.Sqoop.runSqoop(Sqoop.java:183)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:234)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:243)

at org.apache.sqoop.Sqoop.main(Sqoop.java:252)

Logging initialized using configuration in jar:file:/hadoop/hive/lib/hive-common-2.3.2.jar!/hive-log4j2.properties Async: true

19/03/12 14:38:47 INFO SessionState:

Logging initialized using configuration in jar:file:/hadoop/hive/lib/hive-common-2.3.2.jar!/hive-log4j2.properties Async: true

19/03/12 14:38:47 INFO session.SessionState: Created HDFS directory: /tmp/hive/root/0a864287-81f1-47f6-80fb-8ea3cf0b2faf

19/03/12 14:38:47 INFO session.SessionState: Created local directory: /hadoop/hive/tmp/root/0a864287-81f1-47f6-80fb-8ea3cf0b2faf

19/03/12 14:38:47 INFO session.SessionState: Created HDFS directory: /tmp/hive/root/0a864287-81f1-47f6-80fb-8ea3cf0b2faf/_tmp_space.db

19/03/12 14:38:47 INFO conf.HiveConf: Using the default value passed in for log id: 0a864287-81f1-47f6-80fb-8ea3cf0b2faf

19/03/12 14:38:47 INFO session.SessionState: Updating thread name to 0a864287-81f1-47f6-80fb-8ea3cf0b2faf main

19/03/12 14:38:47 INFO conf.HiveConf: Using the default value passed in for log id: 0a864287-81f1-47f6-80fb-8ea3cf0b2faf

19/03/12 14:38:47 INFO ql.Driver: Compiling command(queryId=root_20190312063847_496038e3-f0ee-4f95-b35b-bfd1746cfd09): CREATE TABLE IF NOT EXISTS `oracle`.`ORA_HIVE` ( `EMPNO` DOUBLE, `ENAME` STRING, `HIREDATE` STRING) COMMENT 'Imported by sqoop on 2019/03/12 06:38:44' ROW FORMAT DELIMITED FIELDS TERMINATED BY '\001' LINES TERMINATED BY '\012' STORED AS TEXTFILE

19/03/12 14:38:50 INFO hive.metastore: Trying to connect to metastore with URI thrift://192.168.1.66:9083

19/03/12 14:38:50 INFO hive.metastore: Opened a connection to metastore, current connections: 1

19/03/12 14:38:50 INFO hive.metastore: Connected to metastore.

19/03/12 14:38:50 INFO parse.CalcitePlanner: Starting Semantic Analysis

19/03/12 14:38:50 INFO parse.CalcitePlanner: Creating table oracle.ORA_HIVE position=27

19/03/12 14:38:51 INFO ql.Driver: Semantic Analysis Completed

19/03/12 14:38:51 INFO ql.Driver: Returning Hive schema: Schema(fieldSchemas:null, properties:null)

19/03/12 14:38:51 INFO ql.Driver: Completed compiling command(queryId=root_20190312063847_496038e3-f0ee-4f95-b35b-bfd1746cfd09); Time taken: 3.385 seconds

19/03/12 14:38:51 INFO ql.Driver: Concurrency mode is disabled, not creating a lock manager

19/03/12 14:38:51 INFO ql.Driver: Executing command(queryId=root_20190312063847_496038e3-f0ee-4f95-b35b-bfd1746cfd09): CREATE TABLE IF NOT EXISTS `oracle`.`ORA_HIVE` ( `EMPNO` DOUBLE, `ENAME` STRING, `HIREDATE` STRING) COMMENT 'Imported by sqoop on 2019/03/12 06:38:44' ROW FORMAT DELIMITED FIELDS TERMINATED BY '\001' LINES TERMINATED BY '\012' STORED AS TEXTFILE

19/03/12 14:38:51 INFO sqlstd.SQLStdHiveAccessController: Created SQLStdHiveAccessController for session context : HiveAuthzSessionContext [sessionString=0a864287-81f1-47f6-80fb-8ea3cf0b2faf, clientType=HIVECLI]

19/03/12 14:38:51 WARN session.SessionState: METASTORE_FILTER_HOOK will be ignored, since hive.security.authorization.manager is set to instance of HiveAuthorizerFactory.

19/03/12 14:38:51 INFO hive.metastore: Mestastore configuration hive.metastore.filter.hook changed from org.apache.hadoop.hive.metastore.DefaultMetaStoreFilterHookImpl to org.apache.hadoop.hive.ql.security.authorization.plugin.AuthorizationMetaStoreFilterHook

19/03/12 14:38:51 INFO hive.metastore: Closed a connection to metastore, current connections: 0

19/03/12 14:38:51 INFO hive.metastore: Trying to connect to metastore with URI thrift://192.168.1.66:9083

19/03/12 14:38:51 INFO hive.metastore: Opened a connection to metastore, current connections: 1

19/03/12 14:38:51 INFO hive.metastore: Connected to metastore.

19/03/12 14:38:51 INFO ql.Driver: Completed executing command(queryId=root_20190312063847_496038e3-f0ee-4f95-b35b-bfd1746cfd09); Time taken: 0.134 seconds

OK

19/03/12 14:38:51 INFO ql.Driver: OK

Time taken: 3.534 seconds

19/03/12 14:38:51 INFO CliDriver: Time taken: 3.534 seconds

19/03/12 14:38:51 INFO conf.HiveConf: Using the default value passed in for log id: 0a864287-81f1-47f6-80fb-8ea3cf0b2faf

19/03/12 14:38:51 INFO session.SessionState: Resetting thread name to main

19/03/12 14:38:51 INFO conf.HiveConf: Using the default value passed in for log id: 0a864287-81f1-47f6-80fb-8ea3cf0b2faf

19/03/12 14:38:51 INFO session.SessionState: Updating thread name to 0a864287-81f1-47f6-80fb-8ea3cf0b2faf main

19/03/12 14:38:51 INFO ql.Driver: Compiling command(queryId=root_20190312063851_88f08fef-b111-439a-ac51-62b91c507927):

LOAD DATA INPATH 'hdfs://192.168.1.66:9000/user/root/ORA_HIVE' INTO TABLE `oracle`.`ORA_HIVE`

19/03/12 14:38:51 INFO ql.Driver: Semantic Analysis Completed

19/03/12 14:38:51 INFO ql.Driver: Returning Hive schema: Schema(fieldSchemas:null, properties:null)

19/03/12 14:38:51 INFO ql.Driver: Completed compiling command(queryId=root_20190312063851_88f08fef-b111-439a-ac51-62b91c507927); Time taken: 0.451 seconds

19/03/12 14:38:51 INFO ql.Driver: Concurrency mode is disabled, not creating a lock manager

19/03/12 14:38:51 INFO ql.Driver: Executing command(queryId=root_20190312063851_88f08fef-b111-439a-ac51-62b91c507927):

LOAD DATA INPATH 'hdfs://192.168.1.66:9000/user/root/ORA_HIVE' INTO TABLE `oracle`.`ORA_HIVE`

19/03/12 14:38:51 INFO ql.Driver: Starting task [Stage-0:MOVE] in serial mode

19/03/12 14:38:51 INFO hive.metastore: Closed a connection to metastore, current connections: 0

Loading data to table oracle.ora_hive

19/03/12 14:38:51 INFO exec.Task: Loading data to table oracle.ora_hive from hdfs://192.168.1.66:9000/user/root/ORA_HIVE

19/03/12 14:38:51 INFO hive.metastore: Trying to connect to metastore with URI thrift://192.168.1.66:9083

19/03/12 14:38:51 INFO hive.metastore: Opened a connection to metastore, current connections: 1

19/03/12 14:38:51 INFO hive.metastore: Connected to metastore.

19/03/12 14:38:51 ERROR hdfs.KeyProviderCache: Could not find uri with key [dfs.encryption.key.provider.uri] to create a keyProvider !!

19/03/12 14:38:52 ERROR exec.TaskRunner: Error in executeTask

java.lang.NoSuchMethodError: com.fasterxml.jackson.databind.ObjectMapper.readerFor(Ljava/lang/Class;)Lcom/fasterxml/jackson/databind/ObjectReader;

at org.apache.hadoop.hive.common.StatsSetupConst$ColumnStatsAccurate.<clinit>(StatsSetupConst.java:165)

at org.apache.hadoop.hive.common.StatsSetupConst.parseStatsAcc(StatsSetupConst.java:300)

at org.apache.hadoop.hive.common.StatsSetupConst.clearColumnStatsState(StatsSetupConst.java:261)

at org.apache.hadoop.hive.ql.metadata.Hive.loadTable(Hive.java:2032)

at org.apache.hadoop.hive.ql.exec.MoveTask.execute(MoveTask.java:360)

at org.apache.hadoop.hive.ql.exec.Task.executeTask(Task.java:199)

at org.apache.hadoop.hive.ql.exec.TaskRunner.runSequential(TaskRunner.java:100)

at org.apache.hadoop.hive.ql.Driver.launchTask(Driver.java:2183)

at org.apache.hadoop.hive.ql.Driver.execute(Driver.java:1839)

at org.apache.hadoop.hive.ql.Driver.runInternal(Driver.java:1526)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1237)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1227)

at org.apache.hadoop.hive.cli.CliDriver.processLocalCmd(CliDriver.java:233)

at org.apache.hadoop.hive.cli.CliDriver.processCmd(CliDriver.java:184)

at org.apache.hadoop.hive.cli.CliDriver.processLine(CliDriver.java:403)

at org.apache.hadoop.hive.cli.CliDriver.processLine(CliDriver.java:336)

at org.apache.hadoop.hive.cli.CliDriver.processReader(CliDriver.java:474)

at org.apache.hadoop.hive.cli.CliDriver.processFile(CliDriver.java:490)

at org.apache.hadoop.hive.cli.CliDriver.executeDriver(CliDriver.java:793)

at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:759)

at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:686)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.sqoop.hive.HiveImport.executeScript(HiveImport.java:331)

at org.apache.sqoop.hive.HiveImport.importTable(HiveImport.java:241)

at org.apache.sqoop.tool.ImportTool.importTable(ImportTool.java:537)

at org.apache.sqoop.tool.ImportTool.run(ImportTool.java:628)

at org.apache.sqoop.Sqoop.run(Sqoop.java:147)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:70)

at org.apache.sqoop.Sqoop.runSqoop(Sqoop.java:183)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:234)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:243)

at org.apache.sqoop.Sqoop.main(Sqoop.java:252)

FAILED: Execution Error, return code -101 from org.apache.hadoop.hive.ql.exec.MoveTask. com.fasterxml.jackson.databind.ObjectMapper.readerFor(Ljava/lang/Class;)Lcom/fasterxml/jackson/databind/ObjectReader;

19/03/12 14:38:52 ERROR ql.Driver: FAILED: Execution Error, return code -101 from org.apache.hadoop.hive.ql.exec.MoveTask. com.fasterxml.jackson.databind.ObjectMapper.readerFor(Ljava/lang/Class;)Lcom/fasterxml/jackson/databind/ObjectReader;

19/03/12 14:38:52 INFO ql.Driver: Completed executing command(queryId=root_20190312063851_88f08fef-b111-439a-ac51-62b91c507927); Time taken: 0.497 seconds

19/03/12 14:38:52 INFO conf.HiveConf: Using the default value passed in for log id: 0a864287-81f1-47f6-80fb-8ea3cf0b2faf

19/03/12 14:38:52 INFO session.SessionState: Resetting thread name to main

19/03/12 14:38:52 INFO conf.HiveConf: Using the default value passed in for log id: 0a864287-81f1-47f6-80fb-8ea3cf0b2faf

19/03/12 14:38:52 INFO session.SessionState: Deleted directory: /tmp/hive/root/0a864287-81f1-47f6-80fb-8ea3cf0b2faf on fs with scheme hdfs

19/03/12 14:38:52 INFO session.SessionState: Deleted directory: /hadoop/hive/tmp/root/0a864287-81f1-47f6-80fb-8ea3cf0b2faf on fs with scheme file

19/03/12 14:38:52 INFO hive.metastore: Closed a connection to metastore, current connections: 0

19/03/12 14:38:52 ERROR tool.ImportTool: Import failed: java.io.IOException: Hive CliDriver exited with status=-101

at org.apache.sqoop.hive.HiveImport.executeScript(HiveImport.java:355)

at org.apache.sqoop.hive.HiveImport.importTable(HiveImport.java:241)

at org.apache.sqoop.tool.ImportTool.importTable(ImportTool.java:537)

at org.apache.sqoop.tool.ImportTool.run(ImportTool.java:628)

at org.apache.sqoop.Sqoop.run(Sqoop.java:147)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:70)

at org.apache.sqoop.Sqoop.runSqoop(Sqoop.java:183)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:234)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:243)

at org.apache.sqoop.Sqoop.main(Sqoop.java:252)
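In output this long the failing lines are easy to miss. A minimal sketch for pulling them out of a saved log follows; it assumes the console output was captured to a file, and the function name `failure_lines` and the pattern are illustrative choices rather than anything Sqoop provides.

```shell
# Read Sqoop/Hive console output on stdin and print only the lines that
# usually signal a failure, each prefixed with its line number.
# Matches: log lines at ERROR level, Hive "FAILED:" lines, and the first
# line of a Java error or exception (stack frames start with "at " and
# are skipped).
failure_lines() {
  grep -n -E '(^| )ERROR |^FAILED:|^[a-z.]+\.[A-Za-z]+(Error|Exception):'
}
```

For example, capture a run with `sqoop import ... 2>&1 | tee sqoop_import.log` (the file name is arbitrary) and then run `failure_lines < sqoop_import.log`. An ERROR line is not always fatal: the `hdfs.KeyProviderCache` message also appears in the later run that completes.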

The import failed. Look at the first error:

2019-03-12 06:38:47,077 main ERROR Could not register mbeans java.security.AccessControlException: access denied ("javax.management.MBeanTrustPermission" "register")

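This error does not come from the Oracle JDBC driver connecting to the database. The stack trace shows Log4j 2 failing to register its JMX MBeans while Hive initializes logging inside the Sqoop process. The fix is:

Edit $JAVA_HOME/jre/lib/security/java.policy and add one line inside the grant { ... } block:

permission javax.management.MBeanTrustPermission "register";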
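The edit can also be scripted. This is a sketch under assumptions: the policy file is expected to contain an unqualified `grant {` block, as the default JDK 8 java.policy does, and the function name `add_mbean_permission` and the `.bak` backup suffix are made up for illustration.

```shell
# Add the MBeanTrustPermission line to the first unqualified "grant {"
# block of a Java policy file. Safe to run twice: if the permission is
# already present the file is left untouched.
add_mbean_permission() {
  policy_file=$1
  perm_line='    permission javax.management.MBeanTrustPermission "register";'
  if grep -q 'javax.management.MBeanTrustPermission' "$policy_file"; then
    return 0
  fi
  # Keep a copy of the original next to it before rewriting.
  cp "$policy_file" "$policy_file.bak" || return 1
  awk -v perm="$perm_line" '
    { print }
    /^grant[ \t]*[{]/ && !inserted { print perm; inserted = 1 }
  ' "$policy_file.bak" > "$policy_file"
}
```

It would be called as `add_mbean_permission "$JAVA_HOME/jre/lib/security/java.policy"`, typically as root.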

Now the second error:

java.lang.NoSuchMethodError: com.fasterxml.jackson.databind.ObjectMapper.readerFor(Ljava/lang/Class;)Lcom/fasterxml/jackson/databind/ObjectReader;

This error is caused by Hive and Sqoop shipping different Jackson versions. The fix is:

Back up the $SQOOP_HOME/lib/jackson*.jar files, then copy $HIVE_HOME/lib/jackson*.jar into the $SQOOP_HOME/lib directory to replace them.

[root@hadoop bin]# cd /hadoop/sqoop/lib/

[root@hadoop lib]# mkdir /hadoop/bak

[root@hadoop lib]# mv jackson*.jar /hadoop/bak/

[root@hadoop lib]# cp $HIVE_HOME/lib/jackson*.jar .
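The same steps can be wrapped in functions so the jar names can be compared before and after the swap. This is a sketch, not the author's script: `list_jackson_jars` and `swap_jackson_jars` are hypothetical names, and the directories are passed in as arguments rather than assumed.

```shell
# List the Jackson jars in a lib directory, one file name per line.
list_jackson_jars() {
  for jar in "$1"/jackson*.jar; do
    if [ -e "$jar" ]; then
      basename "$jar"
    fi
  done
}

# Move Sqoop's Jackson jars to a backup directory, then copy Hive's in.
swap_jackson_jars() {
  sqoop_lib=$1
  hive_lib=$2
  backup_dir=$3
  # Refuse to touch anything if Hive has no Jackson jars to copy.
  if [ -z "$(list_jackson_jars "$hive_lib")" ]; then
    echo "no jackson*.jar found in $hive_lib" >&2
    return 1
  fi
  mkdir -p "$backup_dir" || return 1
  for jar in "$sqoop_lib"/jackson*.jar; do
    if [ -e "$jar" ]; then
      mv "$jar" "$backup_dir"/ || return 1
    fi
  done
  cp "$hive_lib"/jackson*.jar "$sqoop_lib"/
}
```

With the paths from this article the call would be `swap_jackson_jars /hadoop/sqoop/lib "$HIVE_HOME/lib" /hadoop/bak`. Running `list_jackson_jars` on both lib directories first shows the version numbers embedded in the jar names, which is a quick way to confirm the mismatch.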

Now delete the ORA_HIVE directory that the failed run left in HDFS, then run the import again:

[root@hadoop ~]# hadoop fs -rmr ORA_HIVE rmr: DEPRECATED: Please use 'rm -r' instead. 19/03/12 14:54:21 INFO fs.TrashPolicyDefault: Namenode trash configuration: Deletion interval = 0 minut es, Emptier interval = 0 minutes.Deleted ORA_HIVE [root@hadoop ~]# sqoop import --connect jdbc:oracle:thin:@192.168.1.6:1521:orcl --username scott --password tiger --table ORA_HIVE -m 1 --hive-import --hive-database oracle Warning: /hadoop/sqoop/../accumulo does not exist! Accumulo imports will fail. Please set $ACCUMULO_HOME to the root of your Accumulo installation. Warning: /hadoop/sqoop/../zookeeper does not exist! Accumulo imports will fail. Please set $ZOOKEEPER_HOME to the root of your Zookeeper installation. 19/03/12 14:55:21 INFO sqoop.Sqoop: Running Sqoop version: 1.4.7 19/03/12 14:55:21 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead. 19/03/12 14:55:21 INFO tool.BaseSqoopTool: Using Hive-specific delimiters for output. You can override 19/03/12 14:55:21 INFO tool.BaseSqoopTool: delimiters with --fields-terminated-by, etc. 19/03/12 14:55:21 INFO oracle.OraOopManagerFactory: Data Connector for Oracle and Hadoop is disabled. 19/03/12 14:55:21 INFO manager.SqlManager: Using default fetchSize of 1000 19/03/12 14:55:21 INFO tool.CodeGenTool: Beginning code generation SLF4J: Class path contains multiple SLF4J bindings. SLF4J: Found binding in [jar:file:/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class] SLF4J: Found binding in [jar:file:/hadoop/hbase/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class] SLF4J: Found binding in [jar:file:/hadoop/hive/lib/log4j-slf4j-impl-2.6.2.jar!/org/slf4j/impl/StaticLoggerBinder.class] SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation. 
SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory] 19/03/12 14:55:22 INFO manager.OracleManager: Time zone has been set to GMT 19/03/12 14:55:22 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM ORA_HIVE t WHERE 1=0 19/03/12 14:55:22 INFO orm.CompilationManager: HADOOP_MAPRED_HOME is /hadoop Note: /tmp/sqoop-root/compile/a53ff4a6266b4f7f9f659e0a43fa9e7e/ORA_HIVE.java uses or overrides a deprecated API. Note: Recompile with -Xlint:deprecation for details. 19/03/12 14:55:24 INFO orm.CompilationManager: Writing jar file: /tmp/sqoop-root/compile/a53ff4a6266b4f7f9f659e0a43fa9e7e/ORA_HIVE.jar 19/03/12 14:55:24 INFO manager.OracleManager: Time zone has been set to GMT 19/03/12 14:55:24 INFO manager.OracleManager: Time zone has been set to GMT 19/03/12 14:55:24 INFO mapreduce.ImportJobBase: Beginning import of ORA_HIVE 19/03/12 14:55:25 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar 19/03/12 14:55:25 INFO manager.OracleManager: Time zone has been set to GMT 19/03/12 14:55:26 INFO Configuration.deprecation: mapred.map.tasks is deprecated. 
Instead, use mapreduce.job.maps 19/03/12 14:55:26 INFO client.RMProxy: Connecting to ResourceManager at /192.168.1.66:8032 19/03/12 14:55:29 INFO db.DBInputFormat: Using read commited transaction isolation 19/03/12 14:55:29 INFO mapreduce.JobSubmitter: number of splits:1 19/03/12 14:55:29 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1552371714699_0002 19/03/12 14:55:30 INFO impl.YarnClientImpl: Submitted application application_1552371714699_0002 19/03/12 14:55:30 INFO mapreduce.Job: The url to track the job: http://hadoop:8088/proxy/application_1552371714699_0002/ 19/03/12 14:55:30 INFO mapreduce.Job: Running job: job_1552371714699_0002 19/03/12 14:55:39 INFO mapreduce.Job: Job job_1552371714699_0002 running in uber mode : false 19/03/12 14:55:39 INFO mapreduce.Job: map 0% reduce 0% 19/03/12 14:55:46 INFO mapreduce.Job: map 100% reduce 0% 19/03/12 14:55:47 INFO mapreduce.Job: Job job_1552371714699_0002 completed successfully 19/03/12 14:55:47 INFO mapreduce.Job: Counters: 30 File System Counters FILE: Number of bytes read=0 FILE: Number of bytes written=143639 FILE: Number of read operations=0 FILE: Number of large read operations=0 FILE: Number of write operations=0 HDFS: Number of bytes read=87 HDFS: Number of bytes written=46893 HDFS: Number of read operations=4 HDFS: Number of large read operations=0 HDFS: Number of write operations=2 Job Counters Launched map tasks=1 Other local map tasks=1 Total time spent by all maps in occupied slots (ms)=4765 Total time spent by all reduces in occupied slots (ms)=0 Total time spent by all map tasks (ms)=4765 Total vcore-milliseconds taken by all map tasks=4765 Total megabyte-milliseconds taken by all map tasks=4879360 Map-Reduce Framework Map input records=1000 Map output records=1000 Input split bytes=87 Spilled Records=0 Failed Shuffles=0 Merged Map outputs=0 GC time elapsed (ms)=152 CPU time spent (ms)=2670 Physical memory (bytes) snapshot=201306112 Virtual memory (bytes) snapshot=2138767360 Total 
committed heap usage (bytes)=101187584 File Input Format Counters Bytes Read=0 File Output Format Counters Bytes Written=46893 19/03/12 14:55:47 INFO mapreduce.ImportJobBase: Transferred 45.7939 KB in 21.7019 seconds (2.1101 KB/sec) 19/03/12 14:55:47 INFO mapreduce.ImportJobBase: Retrieved 1000 records. 19/03/12 14:55:47 INFO mapreduce.ImportJobBase: Publishing Hive/Hcat import job data to Listeners for table ORA_HIVE 19/03/12 14:55:47 INFO manager.OracleManager: Time zone has been set to GMT 19/03/12 14:55:47 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM ORA_HIVE t WHERE 1=0 19/03/12 14:55:47 WARN hive.TableDefWriter: Column EMPNO had to be cast to a less precise type in Hive 19/03/12 14:55:47 WARN hive.TableDefWriter: Column HIREDATE had to be cast to a less precise type in Hive 19/03/12 14:55:47 INFO hive.HiveImport: Loading uploaded data into Hive 19/03/12 14:55:47 INFO conf.HiveConf: Found configuration file file:/hadoop/hive/conf/hive-site.xml Logging initialized using configuration in jar:file:/hadoop/hive/lib/hive-common-2.3.2.jar!/hive-log4j2.properties Async: true 19/03/12 14:55:50 INFO SessionState: Logging initialized using configuration in jar:file:/hadoop/hive/lib/hive-common-2.3.2.jar!/hive-log4j2.properties Async: true 19/03/12 14:55:50 INFO session.SessionState: Created HDFS directory: /tmp/hive/root/4a5f4f66-31eb-4a12-95d2-bf22d45ecde4 19/03/12 14:55:50 INFO session.SessionState: Created local directory: /hadoop/hive/tmp/root/4a5f4f66-31eb-4a12-95d2-bf22d45ecde4 19/03/12 14:55:50 INFO session.SessionState: Created HDFS directory: /tmp/hive/root/4a5f4f66-31eb-4a12-95d2-bf22d45ecde4/_tmp_space.db 19/03/12 14:55:50 INFO conf.HiveConf: Using the default value passed in for log id: 4a5f4f66-31eb-4a12-95d2-bf22d45ecde4 19/03/12 14:55:50 INFO session.SessionState: Updating thread name to 4a5f4f66-31eb-4a12-95d2-bf22d45ecde4 main 19/03/12 14:55:50 INFO conf.HiveConf: Using the default value passed in for log id: 
4a5f4f66-31eb-4a12-95d2-bf22d45ecde4
19/03/12 14:55:51 INFO ql.Driver: Compiling command(queryId=root_20190312065551_050b64c3-f5a1-4073-addd-a838c3585502): CREATE TABLE IF NOT EXISTS `oracle`.`ORA_HIVE` ( `EMPNO` DOUBLE, `ENAME` STRING, `HIREDATE` STRING) COMMENT 'Imported by sqoop on 2019/03/12 06:55:47' ROW FORMAT DELIMITED FIELDS TERMINATED BY '\001' LINES TERMINATED BY '\012' STORED AS TEXTFILE
19/03/12 14:55:53 INFO hive.metastore: Trying to connect to metastore with URI thrift://192.168.1.66:9083
19/03/12 14:55:53 INFO hive.metastore: Opened a connection to metastore, current connections: 1
19/03/12 14:55:53 INFO hive.metastore: Connected to metastore.
19/03/12 14:55:53 INFO parse.CalcitePlanner: Starting Semantic Analysis
19/03/12 14:55:53 INFO parse.CalcitePlanner: Creating table oracle.ORA_HIVE position=27
19/03/12 14:55:53 INFO ql.Driver: Semantic Analysis Completed
19/03/12 14:55:53 INFO ql.Driver: Returning Hive schema: Schema(fieldSchemas:null, properties:null)
19/03/12 14:55:53 INFO ql.Driver: Completed compiling command(queryId=root_20190312065551_050b64c3-f5a1-4073-addd-a838c3585502); Time taken: 2.75 seconds
19/03/12 14:55:53 INFO ql.Driver: Concurrency mode is disabled, not creating a lock manager
19/03/12 14:55:53 INFO ql.Driver: Executing command(queryId=root_20190312065551_050b64c3-f5a1-4073-addd-a838c3585502): CREATE TABLE IF NOT EXISTS `oracle`.`ORA_HIVE` ( `EMPNO` DOUBLE, `ENAME` STRING, `HIREDATE` STRING) COMMENT 'Imported by sqoop on 2019/03/12 06:55:47' ROW FORMAT DELIMITED FIELDS TERMINATED BY '\001' LINES TERMINATED BY '\012' STORED AS TEXTFILE
19/03/12 14:55:53 INFO sqlstd.SQLStdHiveAccessController: Created SQLStdHiveAccessController for session context : HiveAuthzSessionContext [sessionString=4a5f4f66-31eb-4a12-95d2-bf22d45ecde4, clientType=HIVECLI]
19/03/12 14:55:53 WARN session.SessionState: METASTORE_FILTER_HOOK will be ignored, since hive.security.authorization.manager is set to instance of HiveAuthorizerFactory.
19/03/12 14:55:53 INFO hive.metastore: Mestastore configuration hive.metastore.filter.hook changed from org.apache.hadoop.hive.metastore.DefaultMetaStoreFilterHookImpl to org.apache.hadoop.hive.ql.security.authorization.plugin.AuthorizationMetaStoreFilterHook
19/03/12 14:55:53 INFO hive.metastore: Closed a connection to metastore, current connections: 0
19/03/12 14:55:53 INFO hive.metastore: Trying to connect to metastore with URI thrift://192.168.1.66:9083
19/03/12 14:55:53 INFO hive.metastore: Opened a connection to metastore, current connections: 1
19/03/12 14:55:53 INFO hive.metastore: Connected to metastore.
19/03/12 14:55:53 INFO ql.Driver: Completed executing command(queryId=root_20190312065551_050b64c3-f5a1-4073-addd-a838c3585502); Time taken: 0.088 seconds
OK
19/03/12 14:55:53 INFO ql.Driver: OK
Time taken: 2.851 seconds
19/03/12 14:55:53 INFO CliDriver: Time taken: 2.851 seconds
19/03/12 14:55:53 INFO conf.HiveConf: Using the default value passed in for log id: 4a5f4f66-31eb-4a12-95d2-bf22d45ecde4
19/03/12 14:55:53 INFO session.SessionState: Resetting thread name to main
19/03/12 14:55:53 INFO conf.HiveConf: Using the default value passed in for log id: 4a5f4f66-31eb-4a12-95d2-bf22d45ecde4
19/03/12 14:55:53 INFO session.SessionState: Updating thread name to 4a5f4f66-31eb-4a12-95d2-bf22d45ecde4 main
19/03/12 14:55:53 INFO ql.Driver: Compiling command(queryId=root_20190312065553_81be5e55-9c13-4ad8-86e0-3ec286bea2e0): LOAD DATA INPATH 'hdfs://192.168.1.66:9000/user/root/ORA_HIVE' INTO TABLE `oracle`.`ORA_HIVE`
19/03/12 14:55:54 INFO ql.Driver: Semantic Analysis Completed
19/03/12 14:55:54 INFO ql.Driver: Returning Hive schema: Schema(fieldSchemas:null, properties:null)
19/03/12 14:55:54 INFO ql.Driver: Completed compiling command(queryId=root_20190312065553_81be5e55-9c13-4ad8-86e0-3ec286bea2e0); Time taken: 0.414 seconds
19/03/12 14:55:54 INFO ql.Driver: Concurrency mode is disabled, not creating a lock manager
19/03/12 14:55:54 INFO ql.Driver: Executing command(queryId=root_20190312065553_81be5e55-9c13-4ad8-86e0-3ec286bea2e0): LOAD DATA INPATH 'hdfs://192.168.1.66:9000/user/root/ORA_HIVE' INTO TABLE `oracle`.`ORA_HIVE`
19/03/12 14:55:54 INFO ql.Driver: Starting task [Stage-0:MOVE] in serial mode
19/03/12 14:55:54 INFO hive.metastore: Closed a connection to metastore, current connections: 0
Loading data to table oracle.ora_hive
19/03/12 14:55:54 INFO exec.Task: Loading data to table oracle.ora_hive from hdfs://192.168.1.66:9000/user/root/ORA_HIVE
19/03/12 14:55:54 INFO hive.metastore: Trying to connect to metastore with URI thrift://192.168.1.66:9083
19/03/12 14:55:54 INFO hive.metastore: Opened a connection to metastore, current connections: 1
19/03/12 14:55:54 INFO hive.metastore: Connected to metastore.
19/03/12 14:55:54 ERROR hdfs.KeyProviderCache: Could not find uri with key [dfs.encryption.key.provider.uri] to create a keyProvider !!
19/03/12 14:55:55 INFO ql.Driver: Starting task [Stage-1:STATS] in serial mode
19/03/12 14:55:55 INFO exec.StatsTask: Executing stats task
19/03/12 14:55:55 INFO hive.metastore: Closed a connection to metastore, current connections: 0
19/03/12 14:55:55 INFO hive.metastore: Trying to connect to metastore with URI thrift://192.168.1.66:9083
19/03/12 14:55:55 INFO hive.metastore: Opened a connection to metastore, current connections: 1
19/03/12 14:55:55 INFO hive.metastore: Connected to metastore.
19/03/12 14:55:55 INFO hive.metastore: Closed a connection to metastore, current connections: 0
19/03/12 14:55:55 INFO hive.metastore: Trying to connect to metastore with URI thrift://192.168.1.66:9083
19/03/12 14:55:55 INFO hive.metastore: Opened a connection to metastore, current connections: 1
19/03/12 14:55:55 INFO hive.metastore: Connected to metastore.
19/03/12 14:55:55 INFO exec.StatsTask: Table oracle.ora_hive stats: [numFiles=2, numRows=0, totalSize=93786, rawDataSize=0]
19/03/12 14:55:55 INFO ql.Driver: Completed executing command(queryId=root_20190312065553_81be5e55-9c13-4ad8-86e0-3ec286bea2e0); Time taken: 1.186 seconds
OK
19/03/12 14:55:55 INFO ql.Driver: OK
Time taken: 1.6 seconds
19/03/12 14:55:55 INFO CliDriver: Time taken: 1.6 seconds
19/03/12 14:55:55 INFO conf.HiveConf: Using the default value passed in for log id: 4a5f4f66-31eb-4a12-95d2-bf22d45ecde4
19/03/12 14:55:55 INFO session.SessionState: Resetting thread name to main
19/03/12 14:55:55 INFO conf.HiveConf: Using the default value passed in for log id: 4a5f4f66-31eb-4a12-95d2-bf22d45ecde4
19/03/12 14:55:55 INFO session.SessionState: Deleted directory: /tmp/hive/root/4a5f4f66-31eb-4a12-95d2-bf22d45ecde4 on fs with scheme hdfs
19/03/12 14:55:55 INFO session.SessionState: Deleted directory: /hadoop/hive/tmp/root/4a5f4f66-31eb-4a12-95d2-bf22d45ecde4 on fs with scheme file
19/03/12 14:55:55 INFO hive.metastore: Closed a connection to metastore, current connections: 0
19/03/12 14:55:55 INFO hive.HiveImport: Hive import complete.
19/03/12 14:55:55 INFO hive.HiveImport: Export directory is contains the _SUCCESS file only, removing the directory.
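Most of the log above is routine metastore traffic. The two lines that matter for judging the result are the `StatsTask` line and the final `Hive import complete.` message. The following is a minimal sketch for pulling those two lines out of a saved log. It assumes the Sqoop output was captured to a file; the function name `summarize_sqoop_log` and the file name `sqoop_import.log` are hypothetical and do not come from the original run.

```shell
#!/usr/bin/env bash
# Hypothetical helper: summarize a saved Sqoop-to-Hive import log.
# Assumption: the console output was captured to a file, for example by
# appending `2>&1 | tee sqoop_import.log` to the sqoop command.
summarize_sqoop_log() {
  local log_file="$1"

  # Last table statistics line written by Hive's StatsTask after LOAD DATA.
  grep -o 'stats: \[[^]]*]' "$log_file" | tail -n 1

  # This message appears in the log above once the Hive step has finished.
  if grep -q 'hive.HiveImport: Hive import complete\.' "$log_file"; then
    echo "import: complete"
  else
    echo "import: NOT confirmed"
    return 1
  fi
}

# Example call (file name is an assumption):
# summarize_sqoop_log sqoop_import.log
```

The non-zero return code on a missing completion message makes the helper usable in a wrapper script that should stop when the import did not finish.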

This time the import succeeded. Verify the result in Hive:

[root@hadoop bin]# ./hive
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/hadoop/hive/lib/log4j-slf4j-impl-2.6.2.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]
Logging initialized using configuration in jar:file:/hadoop/hive/lib/hive-common-2.3.2.jar!/hive-log4j2.properties Async: true
Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.
hive> use oracle;
OK
Time taken: 1.111 seconds
hive> select * from ORA_HIVE;
......
997 XAOOEHXITLWEFBZFCNAB NULL
998 IZVWHVGTEJHCJWJZTDXK NULL
999 YMBFLJTTWPENEBXEWVIJ NULL
1000 QFKDIGEYFWQBZBGTJPPD NULL
Time taken: 2.092 seconds, Fetched: 2000 row(s)

The data has been imported into Hive.
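The query output above reports 2000 fetched rows and shows NULL in the last column, while the Oracle source table was populated with 1000 rows. The source does not explain this. The `numFiles=2` statistic in the import log may indicate that data from an earlier attempt was still in the table, and the NULL values may be related to the Hive table declaring `hiredate` as `date` while Sqoop's own DDL in the log uses `STRING`; both are assumptions. A count query makes such differences easier to spot than scrolling through `select *`. Below is a minimal sketch, assuming the `hive` CLI is on the PATH; the function name `check_hive_import` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print total rows, distinct keys and NULL dates
# for an imported table with the columns used in this article.
# Assumption: `hive` is on PATH; -S reduces informational output,
# -e runs the query string passed on the command line.
check_hive_import() {
  local db="$1" table="$2"
  hive -S -e "
    SELECT COUNT(*)              AS total_rows,
           COUNT(DISTINCT empno) AS distinct_empno,
           SUM(CASE WHEN hiredate IS NULL THEN 1 ELSE 0 END) AS null_hiredate
    FROM ${db}.${table};"
}

# Example call for the table used in this article:
# check_hive_import oracle ora_hive
```

If `total_rows` is larger than `distinct_empno`, the table holds duplicate keys. Should leftover data from a previous run turn out to be the cause, Sqoop's `--hive-overwrite` import option replaces the existing table contents instead of appending to them.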
