Full Sync of MySQL/Oracle Data to Hive with Sqoop

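Earlier articles covered three things:

- deploying a pseudo-distributed Hadoop + Hive + HBase + Kylin environment;
- installing and configuring Sqoop in that environment;
- the errors (and their fixes) that came up when first using Sqoop after installation.

Those articles are:

Hadoop+Hive+HBase+Kylin pseudo-distributed installation guide

Installing and using Sqoop 1.4.7 (Hadoop 2.7 environment)

Syncing Oracle data to Hive with Sqoop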

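This article explains in detail how to fully synchronize Oracle/MySQL data into Hive with Sqoop. The experiments use an Oracle database as the example.

A follow-up article covers:

1. sqoop --incremental append: incremental sync to Hive in append mode

2. sqoop --incremental --merge-key: incremental sync to Hive in merge mode

That article is now finished and is linked below:

Sqoop incremental sync of MySQL/Oracle data to Hive (merge-key/append): test document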

I. Background

The sqoop import and export tools share a number of common options, shown in the table below:

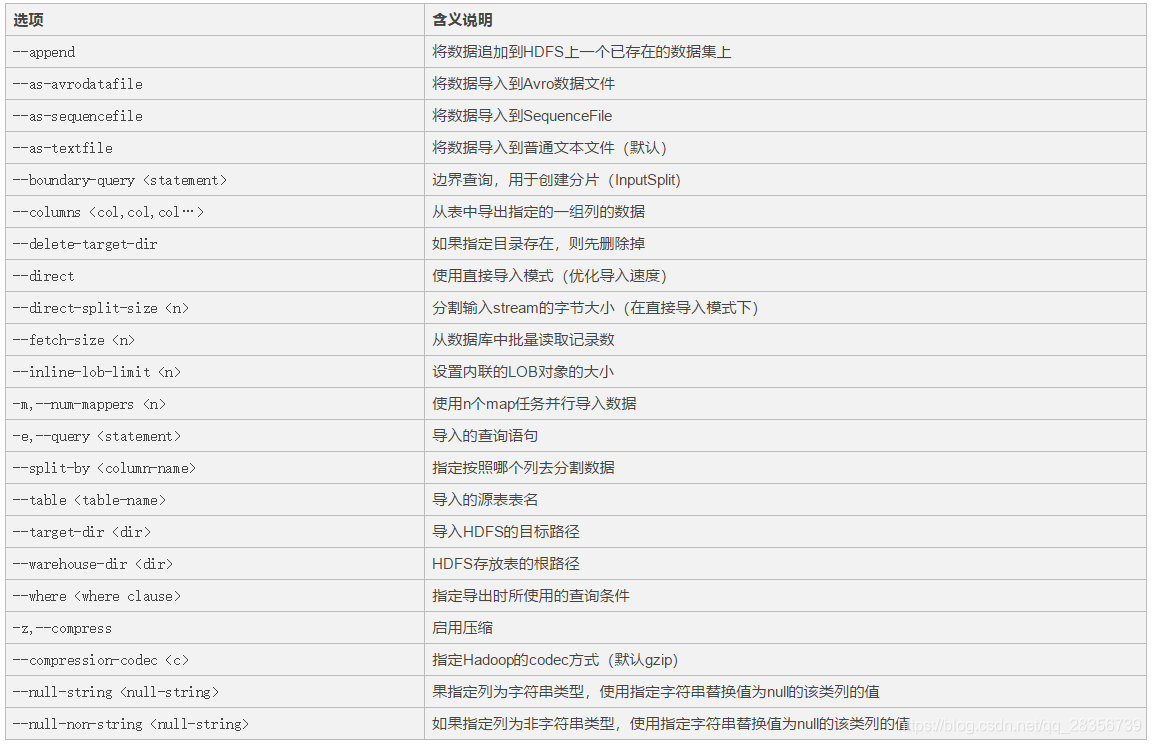

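The import tool:

The import tool loads data from structured storage systems outside HDFS into the Hadoop platform for later analysis. Its basic options and their meanings are shown in the following table:

The cases below test these features. I currently only use import, so this article tests only import-related features. The export options are not listed; readers can test them on their own.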
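Every import command in the experiments below follows the same pattern. The Python snippet below is a minimal sketch that only assembles the argument list for that pattern; it executes nothing. The helper name `build_sqoop_import` is hypothetical and not part of Sqoop.

The sketch uses only options that appear in this article, with one exception: it uses Sqoop's `-P` (interactive prompt) or `--password-file` instead of `--password`, because Sqoop's own log warns that a password on the command line is insecure. The resulting list could be passed to `subprocess.run` on a host where `sqoop` is on the PATH.

```python
import shlex
from typing import List, Optional, Sequence


def build_sqoop_import(
    jdbc_url: str,
    username: str,
    table: str,
    hive_database: str,
    password_file: Optional[str] = None,
    columns: Optional[Sequence[str]] = None,
    num_mappers: int = 1,
) -> List[str]:
    """Return the argv of a `sqoop import ... --hive-import` call (not executed)."""
    argv = ["sqoop", "import", "--connect", jdbc_url, "--username", username]
    if password_file:
        # Read the password from a file instead of the command line.
        argv += ["--password-file", password_file]
    else:
        # Let Sqoop prompt for the password interactively.
        argv.append("-P")
    argv += ["--table", table, "-m", str(num_mappers)]
    if columns:
        # Comma-separated list of source columns to import.
        argv += ["--columns", ",".join(columns)]
    argv += ["--hive-import", "--hive-database", hive_database]
    return argv


if __name__ == "__main__":
    # The full-table import from section 2, with -P instead of --password.
    cmd = build_sqoop_import(
        "jdbc:oracle:thin:@192.168.1.6:1521:orcl", "scott", "INR_EMP", "oracle")
    print(shlex.join(cmd))  # shlex.join needs Python 3.8+
```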

II. Import experiments

1. Create and populate the test table in Oracle, and create the Hive table

-- Connected as user scott
create table inr_emp as
select a.empno, a.ename, a.job, a.mgr, a.hiredate, a.sal, a.deptno, sysdate as etltime
  from emp a
 where job is not null;

select * from inr_emp;

EMPNO ENAME  JOB       MGR  HIREDATE   SAL     DEPTNO ETLTIME
7369  er     CLERK     7902 1980/12/17 800.00  20     2019/3/19 14:02:13
7499  ALLEN  SALESMAN  7698 1981/2/20  1600.00 30     2019/3/19 14:02:13
7521  WARD   SALESMAN  7698 1981/2/22  1250.00 30     2019/3/19 14:02:13
7566  JONES  MANAGER   7839 1981/4/2   2975.00 20     2019/3/19 14:02:13
7654  MARTIN SALESMAN  7698 1981/9/28  1250.00 30     2019/3/19 14:02:13
7698  BLAKE  MANAGER   7839 1981/5/1   2850.00 30     2019/3/19 14:02:13
7782  CLARK  MANAGER   7839 1981/6/9   2450.00 10     2019/3/19 14:02:13
7839  KING   PRESIDENT      1981/11/17 5000.00 10     2019/3/19 14:02:13
7844  TURNER SALESMAN  7698 1981/9/8   1500.00 30     2019/3/19 14:02:13
7876  ADAMS  CLERK     7788 1987/5/23  1100.00 20     2019/3/19 14:02:13
7900  JAMES  CLERK     7698 1981/12/3  950.00  30     2019/3/19 14:02:13
7902  FORD   ANALYST   7566 1981/12/3  3000.00 20     2019/3/19 14:02:13
7934  sdf    sdf       7782 1982/1/23  1300.00 10     2019/3/19 14:02:13

-- Create the table in Hive
[root@hadoop bin]# ./hive
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/hadoop/hive/lib/log4j-slf4j-impl-2.6.2.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

Logging initialized using configuration in jar:file:/hadoop/hive/lib/hive-common-2.3.2.jar!/hive-log4j2.properties Async: true
Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.
hive> use oracle;
OK
Time taken: 1.234 seconds
hive> create table INR_EMP
    > (
    > empno int,
    > ename string,
    > job string,
    > mgr int,
    > hiredate DATE,
    > sal float,
    > deptno int,
    > etltime DATE
    > );
OK
Time taken: 0.63 seconds

2. Full import of all columns

[root@hadoop ~]# sqoop import --connect jdbc:oracle:thin:@192.168.1.6:1521:orcl --username scott --password tiger --table INR_EMP -m 1 --hive-import --hive-database oracle
Warning: /hadoop/sqoop/../accumulo does not exist! Accumulo imports will fail.
Please set $ACCUMULO_HOME to the root of your Accumulo installation.
Warning: /hadoop/sqoop/../zookeeper does not exist! Accumulo imports will fail.
Please set $ZOOKEEPER_HOME to the root of your Zookeeper installation.
19/03/12 18:28:29 INFO sqoop.Sqoop: Running Sqoop version: 1.4.7
19/03/12 18:28:29 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.
19/03/12 18:28:29 INFO tool.BaseSqoopTool: Using Hive-specific delimiters for output. You can override
19/03/12 18:28:29 INFO tool.BaseSqoopTool: delimiters with --fields-terminated-by, etc.
...
19/03/12 18:28:30 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM INR_EMP t WHERE 1=0
...
19/03/12 18:28:34 INFO mapreduce.ImportJobBase: Beginning import of INR_EMP
...
19/03/12 18:28:40 INFO mapreduce.Job: Running job: job_1552371714699_0004
19/03/12 18:28:51 INFO mapreduce.Job: map 0% reduce 0%
19/03/12 18:29:00 INFO mapreduce.Job: map 100% reduce 0%
19/03/12 18:29:01 INFO mapreduce.Job: Job job_1552371714699_0004 completed successfully
...
19/03/12 18:29:01 INFO mapreduce.ImportJobBase: Transferred 976 bytes in 25.1105 seconds (38.8683 bytes/sec)
19/03/12 18:29:01 INFO mapreduce.ImportJobBase: Retrieved 13 records.
19/03/12 18:29:01 INFO mapreduce.ImportJobBase: Publishing Hive/Hcat import job data to Listeners for table INR_EMP
19/03/12 18:29:01 WARN hive.TableDefWriter: Column EMPNO had to be cast to a less precise type in Hive
19/03/12 18:29:01 WARN hive.TableDefWriter: Column MGR had to be cast to a less precise type in Hive
19/03/12 18:29:01 WARN hive.TableDefWriter: Column HIREDATE had to be cast to a less precise type in Hive
19/03/12 18:29:01 WARN hive.TableDefWriter: Column SAL had to be cast to a less precise type in Hive
19/03/12 18:29:01 WARN hive.TableDefWriter: Column DEPTNO had to be cast to a less precise type in Hive
19/03/12 18:29:01 WARN hive.TableDefWriter: Column ETLTIME had to be cast to a less precise type in Hive
19/03/12 18:29:01 INFO hive.HiveImport: Loading uploaded data into Hive
...
19/03/12 18:29:07 INFO ql.Driver: Compiling command(queryId=root_20190312102907_3fbb2f16-c52a-4c3c-843d-45c9ca918228): CREATE TABLE IF NOT EXISTS `oracle`.`INR_EMP` ( `EMPNO` DOUBLE, `ENAME` STRING, `JOB` STRING, `MGR` DOUBLE, `HIREDATE` STRING, `SAL` DOUBLE, `DEPTNO` DOUBLE, `ETLTIME` STRING) COMMENT 'Imported by sqoop on 2019/03/12 10:29:01' ROW FORMAT DELIMITED FIELDS TERMINATED BY '\001' LINES TERMINATED BY '\012' STORED AS TEXTFILE
...
19/03/12 18:29:10 INFO ql.Driver: Compiling command(queryId=root_20190312102910_d3ab56d4-1bcb-4063-aaab-badd4f8f13e2): LOAD DATA INPATH 'hdfs://192.168.1.66:9000/user/root/INR_EMP' INTO TABLE `oracle`.`INR_EMP`
...
19/03/12 18:29:11 INFO exec.Task: Loading data to table oracle.inr_emp from hdfs://192.168.1.66:9000/user/root/INR_EMP
19/03/12 18:29:11 ERROR hdfs.KeyProviderCache: Could not find uri with key [dfs.encryption.key.provider.uri] to create a keyProvider !!
...
19/03/12 18:29:12 INFO exec.StatsTask: Table oracle.inr_emp stats: [numFiles=1, numRows=0, totalSize=976, rawDataSize=0]
...
19/03/12 18:29:12 INFO hive.HiveImport: Hive import complete.
19/03/12 18:29:12 INFO hive.HiveImport: Export directory is contains the _SUCCESS file only, removing the directory.

Query the Hive table:

hive> select * from inr_emp;
OK
7369	er	CLERK	7902	NULL	800.0	20	NULL
7499	ALLEN	SALESMAN	7698	NULL	1600.0	30	NULL
7521	WARD	SALESMAN	7698	NULL	1250.0	30	NULL
7566	JONES	MANAGER	7839	NULL	2975.0	20	NULL
7654	MARTIN	SALESMAN	7698	NULL	1250.0	30	NULL
7698	BLAKE	MANAGER	7839	NULL	2850.0	30	NULL
7782	CLARK	MANAGER	7839	NULL	2450.0	10	NULL
7839	KING	PRESIDENT	NULL	NULL	5000.0	10	NULL
7844	TURNER	SALESMAN	7698	NULL	1500.0	30	NULL
7876	ADAMS	CLERK	7788	NULL	1100.0	20	NULL
7900	JAMES	CLERK	7698	NULL	950.0	30	NULL
7902	FORD	ANALYST	7566	NULL	3000.0	20	NULL
7934	sdf	sdf	7782	NULL	1300.0	10	NULL
Time taken: 3.103 seconds, Fetched: 13 row(s)

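Every date/time value in the Hive table came out as NULL. The cause is a format mismatch:

- Sqoop writes Oracle DATE values into the text files as strings like `1980-12-17 00:00:00.0`, as the result of the second import below shows.
- Hive's DATE type accepts only `yyyy-MM-dd`, so it reads those values as NULL.

Sqoop's own generated DDL in the log above does map the date columns to STRING. However, the table already existed, so that CREATE TABLE IF NOT EXISTS statement had no effect. We therefore change the Hive columns that correspond to the Oracle date columns to string and import again: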

hive> drop table inr_emp;
OK
Time taken: 2.483 seconds
hive> create table INR_EMP
    > (
    > empno int,
    > ename string,
    > job string,
    > mgr int,
    > hiredate string,
    > sal float,
    > deptno int,
    > etltime string
    > );
OK
Time taken: 0.109 seconds

Run the same import again and check the result:

hive> select * from inr_emp;
OK
7369	er	CLERK	7902	1980-12-17 00:00:00.0	800.0	20	2019-03-19 14:02:13.0
7499	ALLEN	SALESMAN	7698	1981-02-20 00:00:00.0	1600.0	30	2019-03-19 14:02:13.0
7521	WARD	SALESMAN	7698	1981-02-22 00:00:00.0	1250.0	30	2019-03-19 14:02:13.0
7566	JONES	MANAGER	7839	1981-04-02 00:00:00.0	2975.0	20	2019-03-19 14:02:13.0
7654	MARTIN	SALESMAN	7698	1981-09-28 00:00:00.0	1250.0	30	2019-03-19 14:02:13.0
7698	BLAKE	MANAGER	7839	1981-05-01 00:00:00.0	2850.0	30	2019-03-19 14:02:13.0
7782	CLARK	MANAGER	7839	1981-06-09 00:00:00.0	2450.0	10	2019-03-19 14:02:13.0
7839	KING	PRESIDENT	NULL	1981-11-17 00:00:00.0	5000.0	10	2019-03-19 14:02:13.0
7844	TURNER	SALESMAN	7698	1981-09-08 00:00:00.0	1500.0	30	2019-03-19 14:02:13.0
7876	ADAMS	CLERK	7788	1987-05-23 00:00:00.0	1100.0	20	2019-03-19 14:02:13.0
7900	JAMES	CLERK	7698	1981-12-03 00:00:00.0	950.0	30	2019-03-19 14:02:13.0
7902	FORD	ANALYST	7566	1981-12-03 00:00:00.0	3000.0	20	2019-03-19 14:02:13.0
7934	sdf	sdf	7782	1982-01-23 00:00:00.0	1300.0	10	2019-03-19 14:02:13.0
Time taken: 0.369 seconds, Fetched: 13 row(s)

This time the data is correct.
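The NULL dates can be illustrated with a small Python model. This is a sketch under one assumption: Hive's DATE type accepts only `yyyy-MM-dd` text and turns anything else into NULL instead of raising an error. `read_as_hive_date` and `read_as_hive_string` are hypothetical names, not Hive APIs. The sample value is the HIREDATE of EMPNO 7369 from the output above.

```python
from datetime import date, datetime
from typing import Optional


def read_as_hive_date(field: str) -> Optional[date]:
    """Model of reading a text field into a DATE column.

    Only the strict yyyy-MM-dd form parses; anything else becomes None,
    which plays the role of Hive's NULL.
    """
    try:
        return datetime.strptime(field, "%Y-%m-%d").date()
    except ValueError:
        # "unconverted data remains" for values carrying a time part
        return None


def read_as_hive_string(field: str) -> str:
    """A STRING column simply keeps the text exactly as Sqoop wrote it."""
    return field


if __name__ == "__main__":
    raw = "1980-12-17 00:00:00.0"       # format Sqoop wrote for Oracle DATE
    print(read_as_hive_date(raw))       # None, i.e. NULL in Hive
    print(read_as_hive_string(raw))     # the original text is preserved
    print(read_as_hive_date(raw[:10]))  # only the date part parses
```

Keeping the columns as string, as done above, loses nothing. If a real date is needed later, it can be derived at query time, for example with Hive's `to_date()` function, which extracts the date part from a timestamp string.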

3. Full import of selected columns

First drop the Hive table inr_emp, then recreate it:

hive> drop table inr_emp;
OK
Time taken: 0.205 seconds
hive> create table INR_EMP
    > (
    > empno int,
    > ename string,
    > job string,
    > mgr int,
    > hiredate string,
    > sal float,
    > deptno int,
    > etltime string
    > );
OK
Time taken: 0.102 seconds

Then, in another session, import only a few of the columns:

[root@hadoop ~]# sqoop import --connect jdbc:oracle:thin:@192.168.1.6:1521:orcl --username scott --password tiger --table INR_EMP -m 1 --columns 'EMPNO,ENAME,SAL,ETLTIME' --hive-import --hive-database oracle
Warning: /hadoop/sqoop/../accumulo does not exist! Accumulo imports will fail.
Please set $ACCUMULO_HOME to the root of your Accumulo installation.
Warning: /hadoop/sqoop/../zookeeper does not exist! Accumulo imports will fail.
Please set $ZOOKEEPER_HOME to the root of your Zookeeper installation.
19/03/12 18:44:23 INFO sqoop.Sqoop: Running Sqoop version: 1.4.7
19/03/12 18:44:23 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.
...
19/03/12 18:44:24 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM INR_EMP t WHERE 1=0
...
19/03/12 18:44:26 INFO mapreduce.ImportJobBase: Beginning import of INR_EMP
...
19/03/12 18:44:31 INFO mapreduce.Job: Running job: job_1552371714699_0007
19/03/12 18:44:40 INFO mapreduce.Job: map 0% reduce 0%
19/03/12 18:44:46 INFO mapreduce.Job: map 100% reduce 0%
19/03/12 18:44:47 INFO mapreduce.Job: Job job_1552371714699_0007 completed successfully
...
19/03/12 18:44:47 INFO mapreduce.ImportJobBase: Transferred 486 bytes in 20.0884 seconds (24.193 bytes/sec)
19/03/12 18:44:47 INFO mapreduce.ImportJobBase: Retrieved 13 records.
19/03/12 18:44:47 INFO mapreduce.ImportJobBase: Publishing Hive/Hcat import job data to Listeners for table INR_EMP
19/03/12 18:44:48 WARN hive.TableDefWriter: Column EMPNO had to be cast to a less precise type in Hive
19/03/12 18:44:48 WARN hive.TableDefWriter: Column SAL had to be cast to a less precise type in Hive
19/03/12 18:44:48 WARN hive.TableDefWriter: Column ETLTIME had to be cast to a less precise type in Hive
19/03/12 18:44:48 INFO hive.HiveImport: Loading uploaded data into Hive
...
19/03/12 18:44:51 INFO ql.Driver: Compiling command(queryId=root_20190312104451_88b6d963-af76-490c-8832-ccc07e0667a7): CREATE TABLE IF NOT EXISTS `oracle`.`INR_EMP` ( `EMPNO` DOUBLE, `ENAME` STRING, `SAL` DOUBLE, `ETLTIME` STRING) COMMENT 'Imported by sqoop on 2019/03/12 10:44:48' ROW FORMAT DELIMITED FIELDS TERMINATED BY '\001' LINES TERMINATED BY '\012' STORED AS TEXTFILE
...
19/03/12 18:44:54 INFO ql.Driver: Compiling command(queryId=root_20190312104454_13a6c093-1f23-4362-a95e-db15aef02c97): LOAD DATA INPATH 'hdfs://192.168.1.66:9000/user/root/INR_EMP' INTO TABLE `oracle`.`INR_EMP`
...
19/03/12 18:44:54 INFO exec.Task: Loading data to table oracle.inr_emp from hdfs://192.168.1.66:9000/user/root/INR_EMP
19/03/12 18:44:54 ERROR hdfs.KeyProviderCache: Could not find uri with key [dfs.encryption.key.provider.uri] to create a keyProvider !!
...
19/03/12 18:44:55 INFO exec.StatsTask: Table oracle.inr_emp stats: [numFiles=1, numRows=0, totalSize=486, rawDataSize=0]
...
19/03/12 18:44:55 INFO hive.HiveImport: Hive import complete.
19/03/12 18:44:55 INFO hive.HiveImport: Export directory is contains the _SUCCESS file only, removing the directory.

Query the Hive table:

hive> select * from inr_emp;
OK
7369	er	800	NULL	NULL	NULL	NULL	NULL
7499	ALLEN	1600	NULL	NULL	NULL	NULL	NULL
7521	WARD	1250	NULL	NULL	NULL	NULL	NULL
7566	JONES	2975	NULL	NULL	NULL	NULL	NULL
7654	MARTIN	1250	NULL	NULL	NULL	NULL	NULL
7698	BLAKE	2850	NULL	NULL	NULL	NULL	NULL
7782	CLARK	2450	NULL	NULL	NULL	NULL	NULL
7839	KING	5000	NULL	NULL	NULL	NULL	NULL
7844	TURNER	1500	NULL	NULL	NULL	NULL	NULL
7876	ADAMS	1100	NULL	NULL	NULL	NULL	NULL
7900	JAMES	950	NULL	NULL	NULL	NULL	NULL
7902	FORD	3000	NULL	NULL	NULL	NULL	NULL
7934	sdf	1300	NULL	NULL	NULL	NULL	NULL
Time taken: 0.188 seconds, Fetched: 13 row(s)

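At first sight only the selected columns were imported and the other columns are NULL. Look more closely, though: Hive reads the fields of a delimited text file by position, not by column name. The four fields EMPNO, ENAME, SAL and ETLTIME therefore landed in the first four columns, empno, ename, job and mgr:

- The salary shows up in job, which is why it prints as 800 rather than 800.0.
- The ETLTIME string cannot be parsed as the int column mgr, so it becomes NULL.
- The remaining columns have no field at all and are NULL.

So what happens if the Hive table contains only the source columns we actually need, and we import just those columns?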
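The positional mapping can be made concrete with a simplified Python model. It assumes the `\001` field delimiter from the Sqoop-generated DDL and mimics Hive's text reading only for the int/float/string types used here. `read_row` and `convert` are hypothetical names. Applied to the first row Sqoop wrote, it reproduces the first line of the query output above.

```python
from typing import Dict, List, Optional, Tuple, Union

FIELD_DELIM = "\001"  # default field delimiter in the Sqoop-generated Hive DDL

# The eight-column INR_EMP table used in this section: (column name, Hive type).
INR_EMP_8: List[Tuple[str, str]] = [
    ("empno", "int"), ("ename", "string"), ("job", "string"), ("mgr", "int"),
    ("hiredate", "string"), ("sal", "float"), ("deptno", "int"), ("etltime", "string"),
]

Value = Optional[Union[int, float, str]]


def convert(text: str, hive_type: str) -> Value:
    """Convert one text field; text that does not parse becomes None (NULL)."""
    try:
        if hive_type == "int":
            return int(text)
        if hive_type == "float":
            return float(text)
    except ValueError:
        return None
    return text  # string columns keep the raw text


def read_row(line: str, schema: List[Tuple[str, str]]) -> Dict[str, Value]:
    """Assign fields to columns strictly by position, never by name.

    Columns without a corresponding field become None.
    """
    fields = line.split(FIELD_DELIM)
    return {
        name: convert(fields[i], hive_type) if i < len(fields) else None
        for i, (name, hive_type) in enumerate(schema)
    }


if __name__ == "__main__":
    # One row as written with --columns 'EMPNO,ENAME,SAL,ETLTIME'.
    line = FIELD_DELIM.join(["7369", "er", "800", "2019-03-19 14:02:13.0"])
    # job receives the salary text; mgr cannot parse the timestamp -> None.
    for column, value in read_row(line, INR_EMP_8).items():
        print(column, value)
```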

Drop and recreate the Hive table:

hive> drop table inr_emp;
OK
Time taken: 0.152 seconds
hive> create table INR_EMP
    > (
    > empno int,
    > ename string,
    > sal float
    > );
OK
Time taken: 0.086 seconds

Import again:

[root@hadoop ~]# sqoop import --connect jdbc:oracle:thin:@192.168.1.6:1521:orcl --username scott --password tiger --table INR_EMP -m 1 --columns 'EMPNO,ENAME,SAL,ETLTIME' --hive-import --hive-database oracle
...

Query the Hive table:

hive> select * from inr_emp;
OK
7369	er	800.0
7499	ALLEN	1600.0
7521	WARD	1250.0
7566	JONES	2975.0
7654	MARTIN	1250.0
7698	BLAKE	2850.0
7782	CLARK	2450.0
7839	KING	5000.0
7844	TURNER	1500.0
7876	ADAMS	1100.0
7900	JAMES	950.0
7902	FORD	3000.0
7934	sdf	1300.0
Time taken: 0.18 seconds, Fetched: 13 row(s)

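The imported data is correct. Hive reads fields by position and ignores extra trailing fields, so the ETLTIME field, which was still listed in `--columns`, is simply invisible in this three-column table. It is still written to the HDFS files, though. To actually save space, the `--columns` list should name only the table's columns, in the same order.

With that in place, incremental builds in Kylin become cheaper. We can create the Hive table with only the columns needed for computation and load incremental data into it with Sqoop, reducing space usage. Incremental import is covered in the next article, linked at the beginning of this article.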
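The following minimal Python sketch derives a `--columns` value from the Hive table's column list and estimates how much each stored row shrinks when the unused trailing ETLTIME field is left out.

It makes three assumptions. First, Hive reads the leading fields by position and drops extras, as observed above. Second, the delimiter is the default `\001` from the Sqoop-generated DDL. Third, Oracle column names are upper case. `columns_arg` and `visible_fields` are hypothetical helpers.

```python
from typing import List

FIELD_DELIM = "\001"  # default field delimiter in the Sqoop-generated Hive DDL

# Columns of the three-column Hive table INR_EMP above, in table order.
HIVE_COLUMNS = ["empno", "ename", "sal"]


def columns_arg(hive_columns: List[str]) -> str:
    """Build a --columns value naming exactly the Hive table's columns, in order.

    Upper-casing assumes Oracle's default (upper-case) identifiers.
    """
    return ",".join(name.upper() for name in hive_columns)


def visible_fields(line: str, n_columns: int) -> List[str]:
    """Simplified model: Hive reads the first n_columns fields by position
    and ignores any extra trailing fields, which still occupy HDFS space."""
    return line.split(FIELD_DELIM)[:n_columns]


if __name__ == "__main__":
    etltime = "2019-03-19 14:02:13.0"
    # Row as written with --columns 'EMPNO,ENAME,SAL,ETLTIME' (four fields).
    with_extra = FIELD_DELIM.join(["7369", "er", "800", etltime])
    # Row as it would be written with --columns 'EMPNO,ENAME,SAL'.
    matching = FIELD_DELIM.join(["7369", "er", "800"])

    print(columns_arg(HIVE_COLUMNS))
    print(visible_fields(with_extra, len(HIVE_COLUMNS)))
    # Bytes saved per row: one delimiter plus the ETLTIME text.
    print(len(with_extra) - len(matching))
```

With the derived value, the import from this section would pass `--columns 'EMPNO,ENAME,SAL'` instead of also listing ETLTIME.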
