Scrapy Crawler Hands-On Project [001] - Scraping the Maoyan Movies TOP100

2018-08-25 17:24

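Scraping the Maoyan Movies TOP100

Reference: Jingmi (静觅), Cui Qingcai's personal blog, https://cuiqingcai.com/5534.html

Goal: use Scrapy to scrape the Maoyan Movies TOP100 board and save the results to a MongoDB database.

Target URL: http://maoyan.com/board/4?offset=0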

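Analysis / key points:

Scraping difficulty:

a. Beginner level: the page structure is simple, static HTML with a small amount of JS and no AJAX;

b. Handling pagination requires a regular expression.

Using the MongoDB update statement:

a. The update statement both deduplicates and inserts new data, using title as the deduplication key. With upsert=True, a document whose title already exists is updated in place; otherwise a new document is inserted.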

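    def process_item(self, item, spider):
        # upsert=True: update the document with a matching title, or insert it if none exists.
        # update_one() is used because Collection.update() was removed in PyMongo 4.
        self.db['movies'].update_one({'title': item['title']}, {'$set': dict(item)}, upsert=True)
        return item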
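To make the effect of upsert=True concrete, here is a minimal in-memory sketch. It is not pymongo: a plain dict keyed by title stands in for the movies collection, and the hypothetical helper upsert_by_title only mimics "update if the title exists, otherwise insert". The sample titles and scores are made up for illustration.

```python
def upsert_by_title(collection, item):
    """Mimic update_one({'title': ...}, {'$set': item}, upsert=True).

    `collection` is a plain dict keyed by title (a stand-in for MongoDB).
    Returns 'inserted' or 'updated' so the effect is visible.
    """
    title = item['title']
    if title in collection:
        # $set semantics: overwrite only the fields present in `item`
        collection[title].update(item)
        return 'updated'
    collection[title] = dict(item)
    return 'inserted'


movies = {}
first = upsert_by_title(movies, {'title': 'Movie A', 'score': '9.5'})
second = upsert_by_title(movies, {'title': 'Movie A', 'score': '9.6'})
third = upsert_by_title(movies, {'title': 'Movie B', 'score': '9.0'})
print(first, second, third, len(movies))
```

Running the crawl twice therefore leaves one document per title instead of duplicates. The trade-off of using title as the key is that two different movies sharing a title would overwrite each other.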

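Steps:

1) Create the Scrapy project (maoyan_movie) and the spider (maoyan)

    Terminal: > scrapy startproject maoyan_movie
    Terminal: > cd maoyan_movie
    Terminal: > scrapy genspider maoyan maoyan.com

Passing only the domain to genspider keeps the generated allowed_domains valid; the board URL belongs in start_urls.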

2) Configure settings.py

    # MongoDB configuration
    MONGO_URI = 'localhost'
    MONGO_DB = 'maoyan_movie'

    ...

    # Enable MongoPipeline
    ITEM_PIPELINES = {
        'maoyan_movie.pipelines.MongoPipeline': 300,
    }

3) Write items.py

    from scrapy import Item, Field


    class MovieItem(Item):
        title = Field()        # movie title
        actors = Field()       # cast
        releasetime = Field()  # release date
        cover_img = Field()    # thumbnail URL
        detail_page = Field()  # URL of the movie's detail page
        score = Field()        # movie rating

4) Write pipelines.py

Adapted from the official Scrapy documentation: https://doc.scrapy.org/en/latest/topics/item-pipeline.html?highlight=mongo

    import pymongo


    class MongoPipeline:

        def __init__(self, mongo_uri, mongo_db):
            self.mongo_uri = mongo_uri
            self.mongo_db = mongo_db

        @classmethod
        def from_crawler(cls, crawler):
            # Read the connection settings defined in settings.py
            return cls(
                mongo_uri=crawler.settings.get('MONGO_URI'),
                mongo_db=crawler.settings.get('MONGO_DB')
            )

        def open_spider(self, spider):
            self.client = pymongo.MongoClient(self.mongo_uri)
            self.db = self.client[self.mongo_db]

        def close_spider(self, spider):
            self.client.close()

        # Important: write to MongoDB with an upsert so that items are
        # deduplicated by the title field.
        # update_one() is used because Collection.update() was removed in PyMongo 4.
        def process_item(self, item, spider):
            self.db['movies'].update_one({'title': item['title']}, {'$set': dict(item)}, upsert=True)
            return item

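5) Write spiders > maoyan.py

Notes:

a) Use Scrapy's CSS selectors to parse the nodes;

b) When extracting the thumbnail URL, write the CSS selector against the raw page source, which differs from what Inspect Element shows;

    item['cover_img'] = movie.css('a.image-link img.board-img::attr(data-src)').extract_first()

c) The next-page node is hard to reach directly with XPath or CSS, so match it with a regular expression instead;

    next_href = response.xpath('.').re_first(r'href="(.*?)">下一页</a>')

d) The complete code is as follows: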

    from scrapy import Spider, Request

    from maoyan_movie.items import MovieItem


    class MaoyanSpider(Spider):
        name = 'maoyan'
        # allowed_domains takes bare domain names, not URLs
        allowed_domains = ['maoyan.com']
        start_urls = ['http://maoyan.com/board/4?offset=0']

        # Base URL prefix for each movie's detail page
        base_url = 'http://maoyan.com'
        # Base URL prefix for the next page
        next_base_url = 'http://maoyan.com/board/4'

        def parse(self, response):
            # Select the nodes of all movies on the page. Do NOT call extract() here:
            # the sub-selectors below need Selector objects, not strings.
            movies = response.css('dl.board-wrapper dd')
            for movie in movies:
                # Create a fresh item per movie so yielded items do not share state
                item = MovieItem()
                item['title'] = movie.css('p.name a::text').extract_first()
                item['actors'] = movie.css('p.star::text').extract_first(default='').strip()
                item['releasetime'] = movie.css('p.releasetime::text').extract_first(default='').strip()
                # The rating is split into an integer part and a fraction part
                item['score'] = (movie.css('i.integer::text').extract_first(default='')
                                 + movie.css('i.fraction::text').extract_first(default=''))
                item['detail_page'] = self.base_url + movie.css('p.name a::attr(href)').extract_first()
                # Note: write the CSS selector against the raw page source, which differs
                # from what Inspect Element shows (probably because of JS)
                item['cover_img'] = movie.css(
                    'a.image-link img.board-img::attr(data-src)').extract_first()
                yield item

            # Handle the next page (once per page, after all movies are yielded)
            next_href = response.xpath('.').re_first(r'href="(.*?)">下一页</a>')
            if next_href:
                next_url = self.next_base_url + next_href
                yield Request(url=next_url, callback=self.parse, dont_filter=True)
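The pagination step can be tried outside Scrapy. Below is a minimal standard-library sketch, assuming a pager fragment shaped like the hypothetical SAMPLE_PAGER string (the real Maoyan markup may differ). It applies the same regular expression as the spider and builds the next URL the same way, by appending the captured href to the board URL. The helper name next_page_url is made up for this illustration.

```python
import re

# Hypothetical pager fragment; the real page markup may differ.
SAMPLE_PAGER = '''
<ul class="list-pager">
<li><a href="?offset=0">上一页</a></li>
<li><a href="?offset=20">下一页</a></li>
</ul>
'''

NEXT_BASE_URL = 'http://maoyan.com/board/4'
NEXT_LINK_RE = re.compile(r'href="(.*?)">下一页</a>')


def next_page_url(html):
    """Return the absolute URL of the next page, or None on the last page."""
    match = NEXT_LINK_RE.search(html)
    if match is None:
        return None
    return NEXT_BASE_URL + match.group(1)


print(next_page_url(SAMPLE_PAGER))
print(next_page_url('<li><a href="?offset=80">上一页</a></li>'))
```

Because `.` does not match a newline, the lazy group stays within a single line. If several links ever shared a line with the 下一页 anchor, a stricter group such as `[^"]*` would avoid capturing across attributes.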

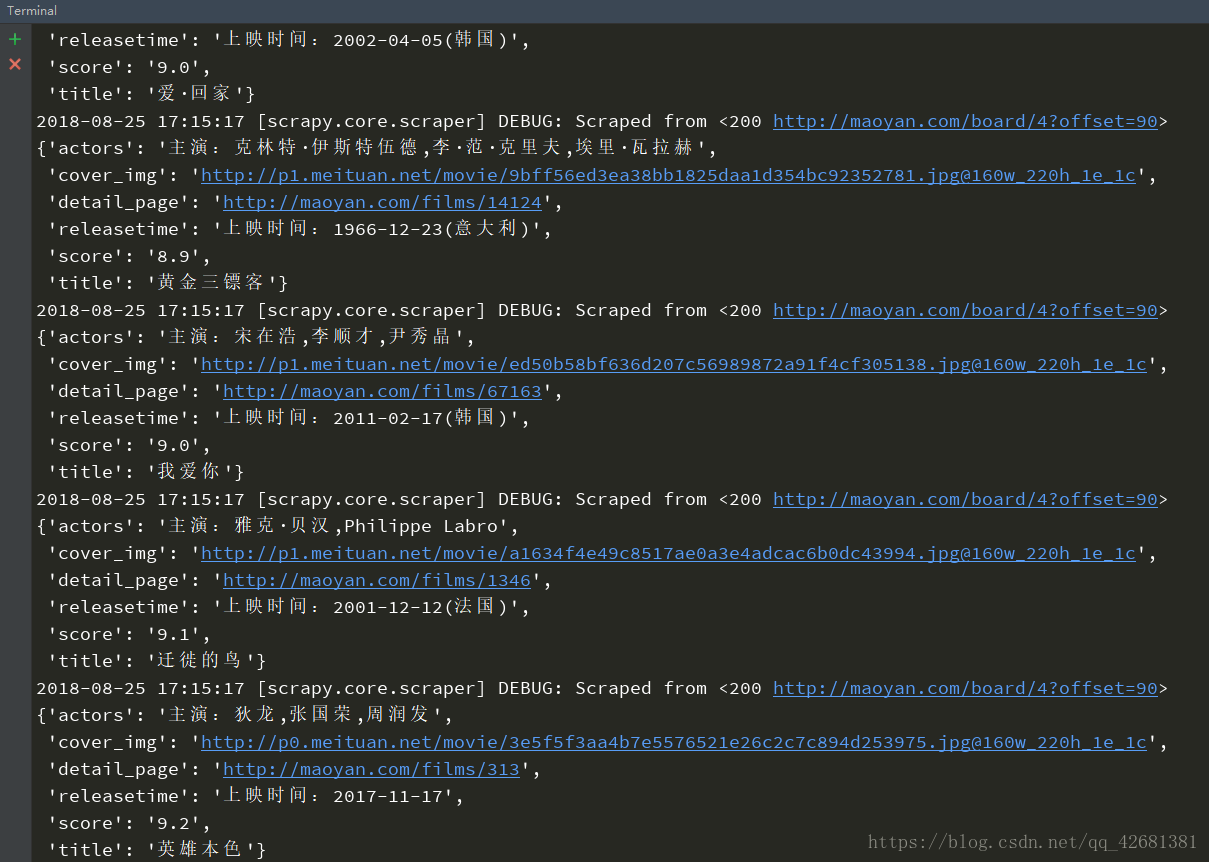

6) 运行结果

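Summary

- A beginner-level project that covers the basic Scrapy workflow;

- Scrapy's CSS/XPath selectors and regular-expression syntax need more practice;

- pymongo's update statement also needs more practice.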
