[Repost] Deep Reinforcement Learning Based Trading Application at JP Morgan Chase

https://medium.com/@ranko.mosic/reinforcement-learning-based-trading-application-at-jp-morgan-chase-f829b8ec54f2

FT released a story today about the new application that will optimize JP Morgan Chase trade execution (there is a Business Insider article on the same topic for readers who do not have an FT subscription). The intent is to reduce market impact and provide the best trade execution results for large orders.

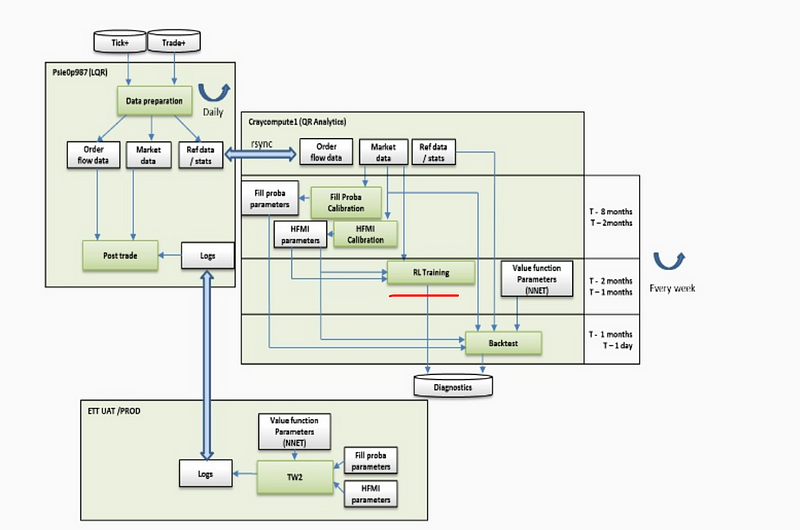

It is a complex application with many moving parts:

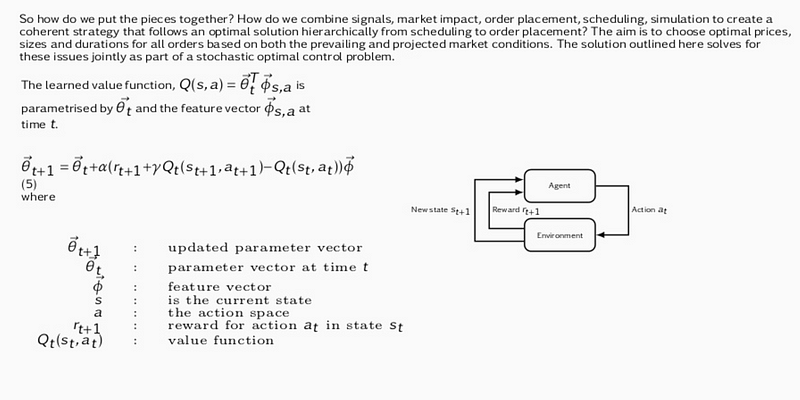

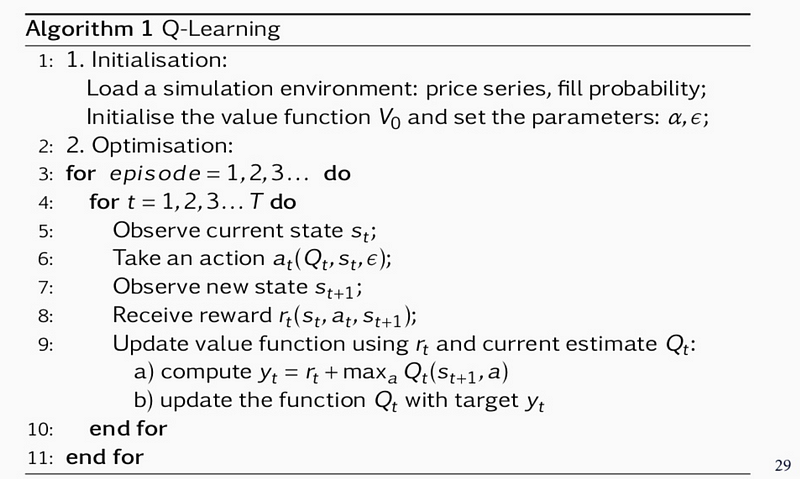

Its core is an RL algorithm that learns to perform the best action (choose the optimal price, duration and order size) based on market conditions. It is not clear whether it is Sarsa (on-policy TD control) or Q-learning (off-policy TD control), as both algorithms are present in the JP Morgan slides:

Sarsa

Q-learning

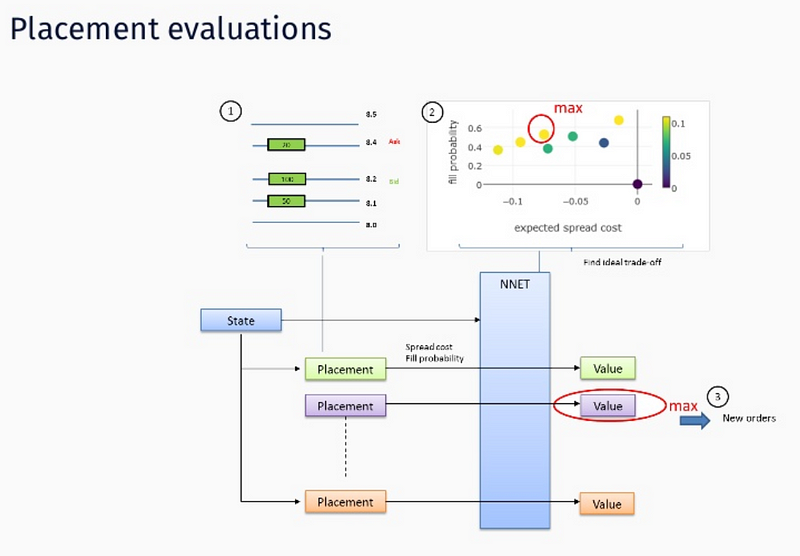
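The difference between the two candidates comes down to which next-state value the update bootstraps from. Below is a minimal tabular sketch of both update rules; the function names, the toy Q-table and the parameter values are hypothetical and are not taken from the JP Morgan slides.

```python
import numpy as np

def sarsa_update(Q, s, a, r, s_next, a_next, alpha=0.1, gamma=0.99):
    """On-policy TD control: bootstrap from the action actually taken next."""
    target = r + gamma * Q[s_next, a_next]
    Q[s, a] += alpha * (target - Q[s, a])
    return Q

def q_learning_update(Q, s, a, r, s_next, alpha=0.1, gamma=0.99):
    """Off-policy TD control: bootstrap from the greedy action in the next state."""
    target = r + gamma * np.max(Q[s_next])
    Q[s, a] += alpha * (target - Q[s, a])
    return Q

# Toy Q-table: 2 states x 2 actions (illustrative values only).
Q0 = np.array([[0.0, 0.0],
               [1.0, 3.0]])

# Same transition, but Sarsa follows the (non-greedy) action 0 in the next state.
q_sarsa = sarsa_update(Q0.copy(), s=0, a=0, r=1.0, s_next=1, a_next=0,
                       alpha=0.5, gamma=1.0)
q_ql = q_learning_update(Q0.copy(), s=0, a=0, r=1.0, s_next=1,
                         alpha=0.5, gamma=1.0)
```

On the same transition the two rules move `Q[0, 0]` by different amounts whenever the next action taken is not the greedy one, which is the whole on-policy versus off-policy distinction.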

The state consists of the price series, expected spread cost, fill probability and size placed, as well as elapsed time, % progress, etc. Rewards are split into immediate rewards (price spread) and terminal (end-of-episode) rewards such as completion, order duration and market penalties (those are negative rewards that punish the agent along these dimensions).

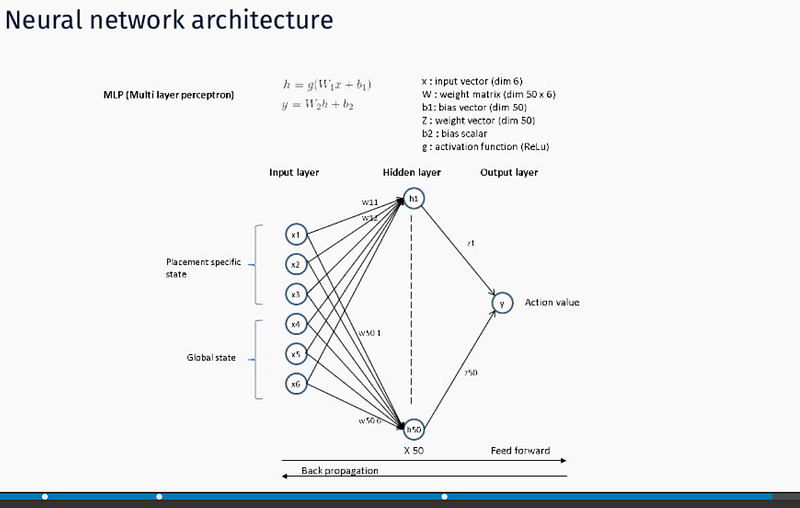
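To make the state and reward description concrete, here is a rough sketch of how such a state and the two kinds of reward might be represented. Every field name, the reward formulas and the penalty weights are assumptions made for illustration; the article does not disclose how JP Morgan actually defines them.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class ExecutionState:
    """Hypothetical state vector for an order-execution agent."""
    prices: List[float]          # recent price series
    expected_spread_cost: float
    fill_probability: float
    size_placed: float
    elapsed_time: float          # fraction of the allowed time used, 0..1
    progress: float              # fraction of the order already filled, 0..1

def immediate_reward(fill_price, reference_price, filled_qty, side=1):
    """Assumed price-spread reward: positive when a buy (side=+1) fills
    below the reference price, or a sell (side=-1) fills above it."""
    return side * (reference_price - fill_price) * filled_qty

def terminal_reward(state, completion_weight=1.0, duration_weight=0.1,
                    market_penalty=0.0):
    """Assumed end-of-episode reward: a negative number that grows with the
    unfilled fraction, the time used, and any market penalty."""
    unfilled = 1.0 - state.progress
    return -(completion_weight * unfilled
             + duration_weight * state.elapsed_time
             + market_penalty)

final_state = ExecutionState(prices=[100.0, 100.1, 99.9],
                             expected_spread_cost=0.02,
                             fill_probability=0.7,
                             size_placed=500.0,
                             elapsed_time=1.0,
                             progress=0.5)
step_r = immediate_reward(fill_price=99.0, reference_price=100.0, filled_qty=10)
end_r = terminal_reward(final_state)
```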

Action values are stored in the weights of a Deep Neural Network: function approximation via a NN is used since the state-action space is too big to be handled in tabular form. We assume the network is trained with stochastic gradient descent, with a feed-forward pass to compute the outputs and backpropagation to compute the gradients (the Deep designation refers to the multi-layer network).
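As an illustration of neural-network function approximation replacing a Q-table, the sketch below implements a one-hidden-layer Q-network in NumPy with a hand-written backprop and SGD step. The class name, layer sizes, learning rate and toy inputs are all hypothetical, and the TD target is computed once and held fixed (as with a frozen target network) to keep the example short; it is not a description of the actual LOXM model.

```python
import numpy as np

class TinyQNetwork:
    """One-hidden-layer network mapping a state vector to one Q-value per action."""

    def __init__(self, n_features, n_actions, n_hidden=16, seed=0):
        rng = np.random.default_rng(seed)
        self.W1 = rng.normal(0.0, 0.1, (n_features, n_hidden))
        self.b1 = np.zeros(n_hidden)
        self.W2 = rng.normal(0.0, 0.1, (n_hidden, n_actions))
        self.b2 = np.zeros(n_actions)

    def forward(self, x):
        h = np.maximum(0.0, x @ self.W1 + self.b1)   # ReLU hidden layer
        return h, h @ self.W2 + self.b2              # hidden activations, Q-values

    def q_values(self, x):
        return self.forward(x)[1]

    def sgd_step(self, x, a, target, lr=0.01):
        """One SGD step on 0.5 * (Q(x, a) - target)^2, gradients via backprop."""
        h, q = self.forward(x)
        td_error = q[a] - target
        grad_q = np.zeros_like(q)
        grad_q[a] = td_error                         # only the taken action gets a gradient
        grad_W2 = np.outer(h, grad_q)
        grad_h = self.W2 @ grad_q                    # uses W2 before it is updated
        grad_pre = grad_h * (h > 0)                  # ReLU derivative
        grad_W1 = np.outer(x, grad_pre)
        self.W2 -= lr * grad_W2
        self.b2 -= lr * grad_q
        self.W1 -= lr * grad_W1
        self.b1 -= lr * grad_pre
        return 0.5 * td_error ** 2

net = TinyQNetwork(n_features=3, n_actions=2)
x = np.array([0.5, -0.2, 1.0])        # toy state features
x_next = np.array([0.4, -0.1, 0.9])   # toy next-state features

# Q-learning style target: r + gamma * max_a' Q(x_next, a'), held fixed below.
target = 1.0 + 0.9 * np.max(net.q_values(x_next))
losses = [net.sgd_step(x, a=0, target=target, lr=0.05) for _ in range(200)]
```

Repeating the step on a single transition drives `Q(x, 0)` toward the fixed target; a real agent would instead sample many transitions and refresh the target periodically.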

JP Morgan is convinced this is the very first real-time trading AI/ML application on Wall Street. We assume this is not true, i.e. there are surely other players operating in this space, since the application of RL to order execution has been known for quite a while now (Kearns and Nevmyvaka 2006).

The latest LOXM developments will be presented at the QuantMinds Conference in Lisbon (May 2018).

Instinet is also using Q-learning, probably for the same purpose (market impact reduction).
