python_MNIST_CNN学习笔记

2018-04-13 10:37

169 查看

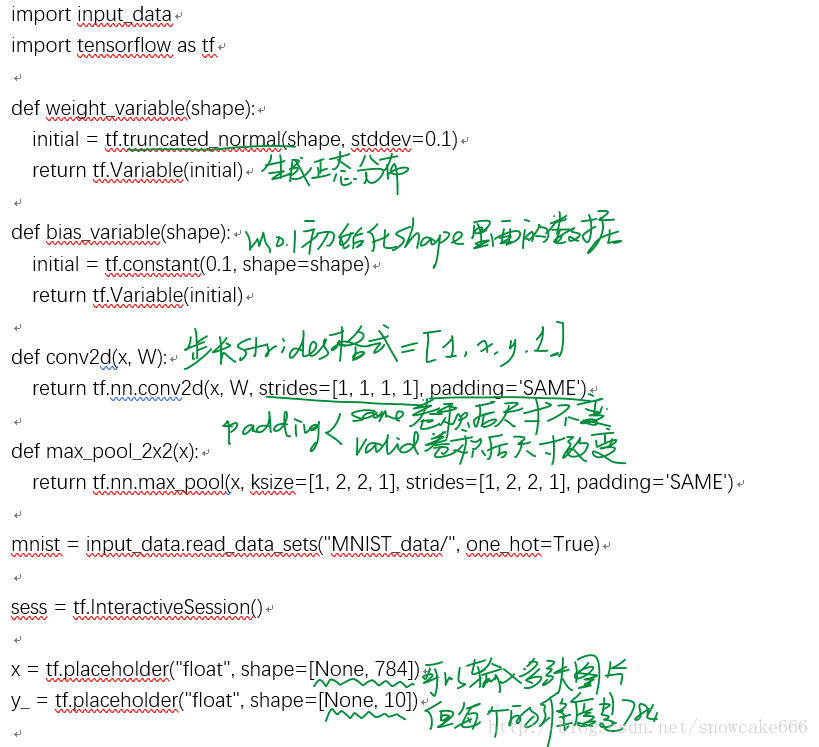

import input_data

import tensorflow as tf

def weight_variable(shape):

initial = tf.truncated_normal(shape, stddev=0.1)

return tf.Variable(initial)

def bias_variable(shape):

initial = tf.constant(0.1, shape=shape)

return tf.Variable(initial)

def conv2d(x, W):

return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

def max_pool_2x2(x):

return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

sess = tf.InteractiveSession()

x = tf.placeholder("float", shape=[None, 784])

y_ = tf.placeholder("float", shape=[None, 10])

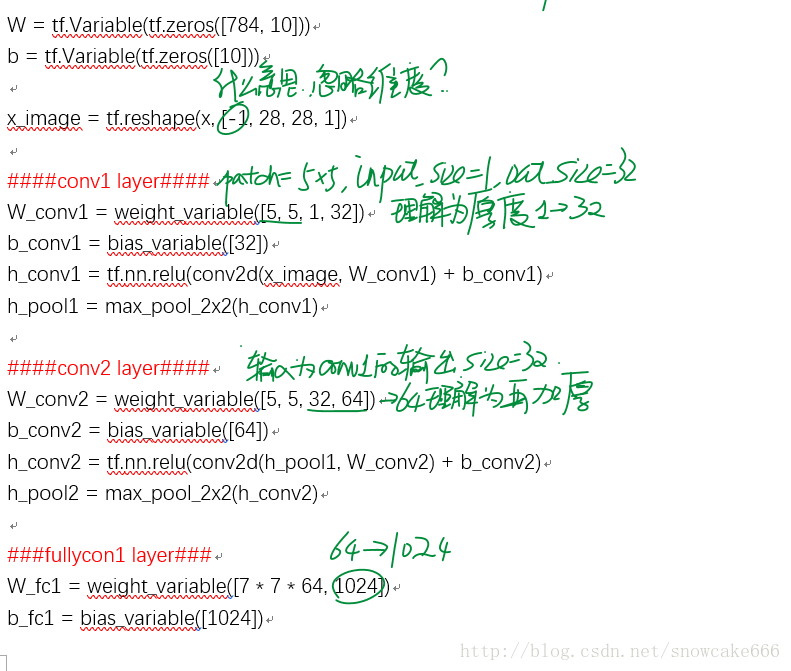

W = tf.Variable(tf.zeros([784, 10]))

b = tf.Variable(tf.zeros([10]))

x_image = tf.reshape(x, [-1, 28, 28, 1])

####conv1 layer####

W_conv1 = weight_variable([5, 5, 1, 32])

b_conv1 = bias_variable([32])

h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)

h_pool1 = max_pool_2x2(h_conv1)

####conv2 layer####

W_conv2 = weight_variable([5, 5, 32, 64])

b_conv2 = bias_variable([64])

h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)

h_pool2 = max_pool_2x2(h_conv2)

###fullycon1 layer###

W_fc1 = weight_variable([7 * 7 * 64, 1024])

b_fc1 = bias_variable([1024])

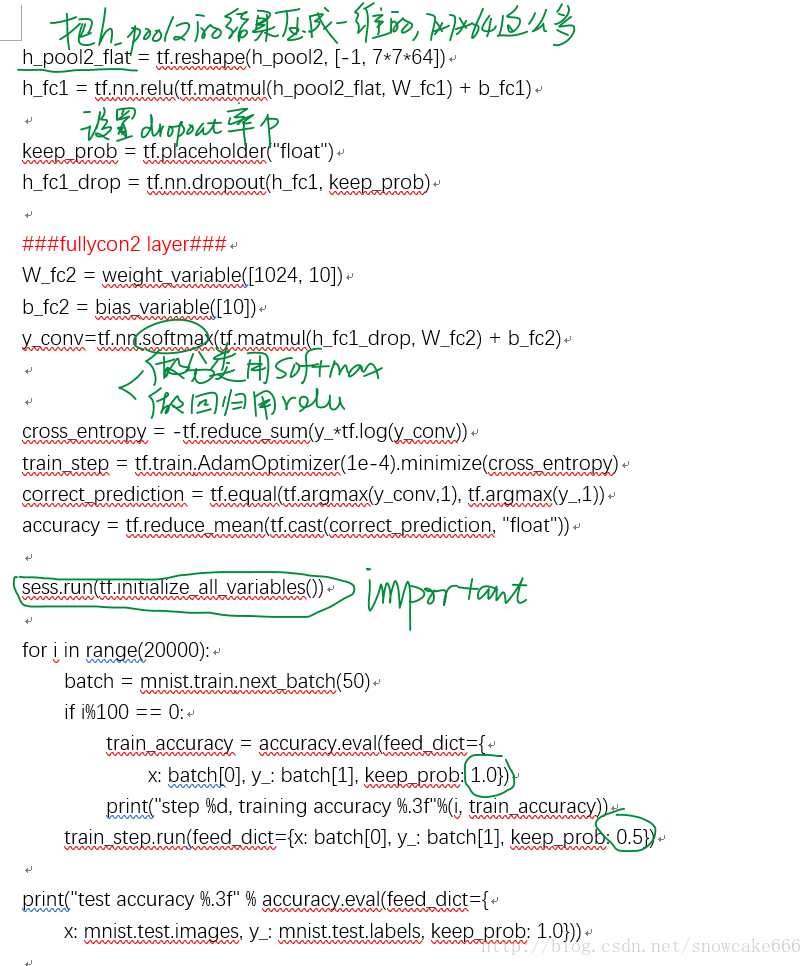

h_pool2_flat = tf.reshape(h_pool2, [-1, 7*7*64])

h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

keep_prob = tf.placeholder("float")

h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

###fullycon2 layer###

W_fc2 = weight_variable([1024, 10])

b_fc2 = bias_variable([10])

y_conv=tf.nn.softmax(tf.matmul(h_fc1_drop, W_fc2) + b_fc2)

cross_entropy = -tf.reduce_sum(y_*tf.log(y_conv))

train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

correct_prediction = tf.equal(tf.argmax(y_conv,1), tf.argmax(y_,1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

sess.run(tf.initialize_all_variables())

for i in range(20000):

batch = mnist.train.next_batch(50)

if i%100 == 0:

train_accuracy = accuracy.eval(feed_dict={

x: batch[0], y_: batch[1], keep_prob: 1.0})

print("step %d, training accuracy %.3f"%(i, train_accuracy))

train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})

print("test accuracy %.3f" % accuracy.eval(feed_dict={

x: mnist.test.images, y_: mnist.test.labels, keep_prob: 1.0}))

相关文章推荐

- 深度学习(DL)与卷积神经网络(CNN)学习笔记随笔-03-基于Python的LeNet之LR

- 深度学习(DL)与卷积神经网络(CNN)学习笔记随笔-02-基于Python的卷积运算

- 深度学习(DL)与卷积神经网络(CNN)学习笔记随笔-03-基于Python的LeNet之LR

- 深度学习(DL)与卷积神经网络(CNN)学习笔记随笔-04-基于Python的LeNet之MLP

- 【caffe学习笔记之7】caffe-matlab/python训练LeNet模型并应用于mnist数据集(2)

- 深度学习(DL)与卷积神经网络(CNN)学习笔记随笔-03-基于Python的LeNet之LR

- Keras 深度学习框架Python Example:CNN/mnist

- 深度学习(DL)与卷积神经网络(CNN)学习笔记随笔-02-基于Python的卷积运算

- DeepLearning&Tensorflow学习笔记2__mnist数据集CNN

- 深度学习(DL)与卷积神经网络(CNN)学习笔记随笔-02-基于Python的卷积运算

- 深度学习(DL)与卷积神经网络(CNN)学习笔记随笔-02-基于Python的卷积运算

- tensorflow学习笔记五:mnist实例--卷积神经网络(CNN)

- tensorflow 学习笔记9 卷积神经网络(CNN)实现mnist手写识别

- tensorflow 学习笔记(五) - mnist实例--卷积神经网络(CNN)

- 深度学习(DL)与卷积神经网络(CNN)学习笔记随笔-04-基于Python的LeNet之MLP

- 【caffe学习笔记之6】caffe-matlab/python训练LeNet模型并应用于mnist数据集(1)

- 深度学习(DL)与卷积神经网络(CNN)学习笔记随笔-03-基于Python的LeNet之LR

- tensorflow学习笔记五:mnist实例--卷积神经网络(CNN)(Deep MNIST for Experts)

- tensorflow学习笔记五:mnist实例--卷积神经网络(CNN)

- 深度学习(DL)与卷积神经网络(CNN)学习笔记随笔-04-基于Python的LeNet之MLP