TensorFlow Study Notes: Implementing a Deep Convolutional Classifier

2018-03-07 23:07


1 Building a deep convolutional classifier on MNIST with TensorFlow

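Dataset: MNIST, an entry-level computer vision dataset of handwritten digit images. Each image is a 28x28 grayscale picture of a single digit from 0 to 9.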

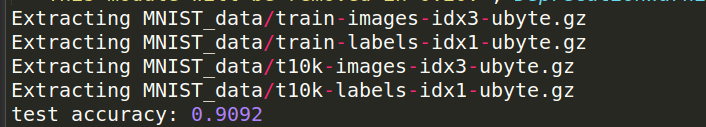

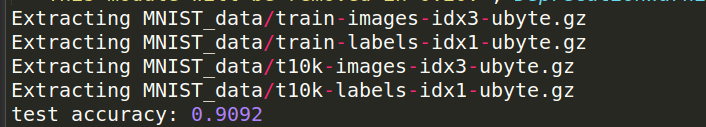
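To make the input format concrete: with `one_hot=True` each label becomes a length-10 vector, and each 28x28 image is fed to the models as a flat 784-element vector. The pure-Python sketch below shows both transformations; the helper names `one_hot` and `flatten` are illustrative (not TensorFlow functions), and row-major order is assumed, which is what NumPy's default `reshape` produces.

```python
def one_hot(label, num_classes=10):
    # e.g. label 3 -> 1.0 at index 3, 0.0 everywhere else
    vec = [0.0] * num_classes
    vec[label] = 1.0
    return vec


def flatten(image):
    # Row-major flattening: pixel (row, col) lands at index row * width + col
    return [pixel for row in image for pixel in row]


# A blank 28x28 "image" with a single lit pixel at row 14, column 7
image = [[0.0] * 28 for _ in range(28)]
image[14][7] = 1.0

vec = flatten(image)
print(len(vec))        # 784
print(vec.index(1.0))  # 399 = 14 * 28 + 7
print(one_hot(3))
```

In the convolutional part, `tf.reshape(x, [-1, 28, 28, 1])` undoes this flattening and recovers the 2D layout that the convolutions need.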

This article has two parts: (1) implementing a softmax regression model

(2) implementing a deep convolutional classifier

2 Experiment code and result screenshots

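```python
# coding: utf-8
# Part 1: softmax regression model (TensorFlow 1.x API)
from __future__ import print_function

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

# Download MNIST into ./MNIST_data on the first run; labels are loaded as one-hot vectors
mnist = input_data.read_data_sets('MNIST_data', one_hot=True)

# Interactive session, so that op.run() and tensor.eval() work without passing sess
sess = tf.InteractiveSession()

# Graph inputs: x holds flattened images (784 = 28*28 pixels), y_ holds the true labels
x = tf.placeholder(tf.float32, shape=[None, 784])
y_ = tf.placeholder(tf.float32, shape=[None, 10])

# Model weights and bias, initialized to zero
w = tf.Variable(tf.zeros([784, 10]))
b = tf.Variable(tf.zeros([10]))

# Predicted class probabilities
y = tf.nn.softmax(tf.matmul(x, w) + b)

# Loss to minimize: cross-entropy between labels and predictions, summed over the batch
cross_entropy = -tf.reduce_sum(y_ * tf.log(y))

# Train with gradient descent: 1000 steps on mini-batches of 50 images
train_step = tf.train.GradientDescentOptimizer(0.01).minimize(cross_entropy)
sess.run(tf.global_variables_initializer())
for i in range(1000):
    batch = mnist.train.next_batch(50)
    train_step.run(feed_dict={x: batch[0], y_: batch[1]})

# Evaluate: fraction of test images whose predicted class matches the label
correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
print("test accuracy:",
      accuracy.eval(feed_dict={x: mnist.test.images, y_: mnist.test.labels}))
```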
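The loss `cross_entropy` above is H(y_, y) = -Σ y_i log(y_i), summed over every example and class in the batch. The pure-Python sketch below (illustrative helper names, no TensorFlow) computes it for a single example and shows a known weakness of taking `log` of the softmax output: when a predicted probability underflows to exactly 0, its log is -inf, and in TensorFlow `0 * -inf` evaluates to NaN. Computing log-softmax directly from the logits with the log-sum-exp trick stays finite; TensorFlow 1.x packages this idea as `tf.nn.softmax_cross_entropy_with_logits`, which takes the pre-softmax logits rather than probabilities.

```python
import math


def softmax(logits):
    # Subtract the largest logit first so math.exp cannot overflow
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def log_softmax(logits):
    # log softmax(z)_i = z_i - log(sum_j exp(z_j)), via the log-sum-exp trick
    m = max(logits)
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - lse for z in logits]


def cross_entropy(one_hot_label, log_probs):
    # H(y_, y) = -sum_i y_i * log(p_i)
    return -sum(t * lp for t, lp in zip(one_hot_label, log_probs))


label = [0.0, 0.0, 1.0]  # true class is index 2
logits = [1.0, 2.0, 3.0]
probs = softmax(logits)
loss = cross_entropy(label, log_softmax(logits))
print(probs, loss)

# Extreme logits: the true class (index 0) gets probability exactly 0.0,
# so log(softmax(...)) is undefined (math.log raises; TensorFlow yields -inf),
# while log_softmax still returns a finite value
extreme_logits = [0.0, 1000.0, 0.0]
extreme_probs = softmax(extreme_logits)
extreme_loss = cross_entropy([1.0, 0.0, 0.0], log_softmax(extreme_logits))
print(extreme_probs[0], extreme_loss)  # 0.0 1000.0
```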

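```python
# Part 2: deep convolutional classifier
# Initializers: small random weights break the symmetry between units,
# and a slightly positive bias helps avoid "dead" ReLU units
def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)


def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)
```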
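`tf.truncated_normal` draws from a normal distribution but discards and re-draws any value more than two standard deviations from the mean, so with `stddev=0.1` every initial weight lies in [-0.2, 0.2]. Below is a rejection-sampling sketch of that documented behavior in plain Python; `truncated_normal_sample` is a hypothetical name, and this reproduces the distribution, not TensorFlow's actual sampling code.

```python
import random


def truncated_normal_sample(n, stddev, rng, mean=0.0):
    # Rejection sampling: keep a normal draw only if it lies within
    # 2 standard deviations of the mean, otherwise draw again
    values = []
    while len(values) < n:
        v = rng.gauss(mean, stddev)
        if abs(v - mean) <= 2 * stddev:
            values.append(v)
    return values


rng = random.Random(42)
# Same number of entries as w_conv1, whose shape is [5, 5, 1, 32]
weights = truncated_normal_sample(5 * 5 * 1 * 32, stddev=0.1, rng=rng)
print(min(weights), max(weights))
```

Cutting off the tails keeps any single initial weight from being unusually large, while the values stay random enough to break symmetry.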

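```python
# Convolution with stride 1 and zero padding ('SAME'): output height/width equal the input's
def conv2d(x, w):
    return tf.nn.conv2d(x, w, strides=[1, 1, 1, 1], padding='SAME')


# 2x2 max pooling with stride 2: halves height and width
def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
```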
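What `conv2d` and `max_pool_2x2` compute can be sketched for a single-channel 2D list in plain Python. `tf.nn.conv2d` actually computes a cross-correlation (the kernel is not flipped) and, for each output channel, also sums over all input channels; the sketch below handles one input and one output channel only, and the helper names `conv2d_same` and `max_pool_2x2_list` are hypothetical.

```python
def conv2d_same(image, kernel):
    # Cross-correlation with stride 1 and 'SAME' zero padding:
    # the output has the same height and width as the input
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    top, left = (kh - 1) // 2, (kw - 1) // 2  # padding before the first row/column
    out = []
    for i in range(h):
        row = []
        for j in range(w):
            acc = 0
            for u in range(kh):
                for v in range(kw):
                    r, c = i + u - top, j + v - left
                    if 0 <= r < h and 0 <= c < w:  # positions outside are zero padding
                        acc += image[r][c] * kernel[u][v]
            row.append(acc)
        out.append(row)
    return out


def max_pool_2x2_list(image):
    # Max over non-overlapping 2x2 windows (stride 2); with 'SAME' padding an
    # odd trailing edge is padded, so out-of-range positions are simply skipped
    h, w = len(image), len(image[0])
    out = []
    for i in range(0, h, 2):
        row = []
        for j in range(0, w, 2):
            window = [image[r][c]
                      for r in range(i, min(i + 2, h))
                      for c in range(j, min(j + 2, w))]
            row.append(max(window))
        out.append(row)
    return out


ones = [[1, 1, 1] for _ in range(3)]
print(conv2d_same(ones, ones))  # [[4, 6, 4], [6, 9, 6], [4, 6, 4]]

feature_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 5],
    [0, 1, 7, 2],
    [3, 6, 1, 1],
]
print(max_pool_2x2_list(feature_map))  # [[4, 5], [6, 7]]
```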

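```python
# First convolutional layer: 5x5 kernels, 1 input channel -> 32 feature maps
w_conv1 = weight_variable([5, 5, 1, 32])
b_conv1 = bias_variable([32])
# Reshape flat 784-vectors into images: [batch, height, width, channels]
x_image = tf.reshape(x, [-1, 28, 28, 1])
h_conv1 = tf.nn.relu(conv2d(x_image, w_conv1) + b_conv1)  # -> [batch, 28, 28, 32]
h_pool1 = max_pool_2x2(h_conv1)                           # -> [batch, 14, 14, 32]

# Second convolutional layer: 5x5 kernels, 32 -> 64 feature maps
w_conv2 = weight_variable([5, 5, 32, 64])
b_conv2 = bias_variable([64])
h_conv2 = tf.nn.relu(conv2d(h_pool1, w_conv2) + b_conv2)  # -> [batch, 14, 14, 64]
h_pool2 = max_pool_2x2(h_conv2)                           # -> [batch, 7, 7, 64]

# Densely connected layer: flatten the 7x7x64 feature maps into 1024 ReLU units
w_fc1 = weight_variable([7 * 7 * 64, 1024])
b_fc1 = bias_variable([1024])
h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, w_fc1) + b_fc1)
```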
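The size 7*7*64 in `w_fc1` follows from the layer shapes: with `padding='SAME'`, TensorFlow produces an output of size ceil(input / stride) along each spatial axis, so the stride-1 convolutions keep the size and each stride-2 pooling halves it (28 → 14 → 7). The sketch below traces this and counts each layer's trainable parameters (weights plus biases). It is plain arithmetic with a hypothetical helper name, and it shows that the densely connected layer holds roughly 98% of all parameters.

```python
import math


def same_output_size(in_size, stride):
    # TensorFlow 'SAME' padding: output size = ceil(in_size / stride)
    return int(math.ceil(in_size / float(stride)))


size = 28
size = same_output_size(size, 1)  # conv1 -> 28
size = same_output_size(size, 2)  # pool1 -> 14
size = same_output_size(size, 1)  # conv2 -> 14
size = same_output_size(size, 2)  # pool2 -> 7
flat = size * size * 64           # input width of w_fc1
print(size, flat)                 # 7 3136

# Trainable parameters per layer: weight tensor entries + bias entries
params = {
    "conv1": 5 * 5 * 1 * 32 + 32,
    "conv2": 5 * 5 * 32 * 64 + 64,
    "fc1": flat * 1024 + 1024,
    "fc2": 1024 * 10 + 10,
}
total = sum(params.values())
for name, count in params.items():
    print(name, count, "%.1f%%" % (100.0 * count / total))
print("total", total)             # total 3274634
```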

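```python
# Dropout to reduce overfitting; keep_prob is fed 0.5 for training and 1.0 for evaluation
keep_prob = tf.placeholder(tf.float32)
h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

# Output layer: 1024 units -> 10 class probabilities
w_fc2 = weight_variable([1024, 10])
b_fc2 = bias_variable([10])
y_conv = tf.nn.softmax(tf.matmul(h_fc1_drop, w_fc2) + b_fc2)
```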
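`tf.nn.dropout` keeps each element with probability `keep_prob`, scales the kept elements by `1 / keep_prob`, and zeroes the rest, so the expected value of every activation is unchanged. That is why the evaluation code can simply feed `keep_prob: 1.0` without rescaling any weights. A pure-Python sketch of this "inverted dropout" follows; the function name `dropout` is hypothetical.

```python
import random


def dropout(values, keep_prob, rng):
    # Keep each element with probability keep_prob and scale survivors by
    # 1 / keep_prob; dropped elements become 0.0
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]


rng = random.Random(0)
activations = [1.0] * 10000
dropped = dropout(activations, 0.5, rng)
# Each surviving value is 2.0, and about half survive, so the mean stays near 1.0
print(sum(dropped) / len(dropped))
```

With `keep_prob=1.0` every element is kept and divided by 1.0, so the layer passes its input through unchanged.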

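```python
# Training and evaluation
cross_entropy = -tf.reduce_sum(y_ * tf.log(y_conv))
# Adam optimizer with learning rate 1e-4
train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)
correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

# Initialize after the optimizer is built, so its internal variables are included
sess.run(tf.global_variables_initializer())
for i in range(20000):
    batch = mnist.train.next_batch(50)
    if i % 100 == 0:
        # Every 100 steps, report accuracy on the current batch with dropout disabled
        train_accuracy = accuracy.eval(
            feed_dict={x: batch[0], y_: batch[1], keep_prob: 1.0})
        print("step:", i, "train_accuracy:", train_accuracy)
    # Training step with dropout active (each fc1 unit kept with probability 0.5)
    train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})

# Final evaluation on the full test set, dropout disabled
print("test accuracy:", accuracy.eval(
    feed_dict={x: mnist.test.images, y_: mnist.test.labels, keep_prob: 1.0}))
```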

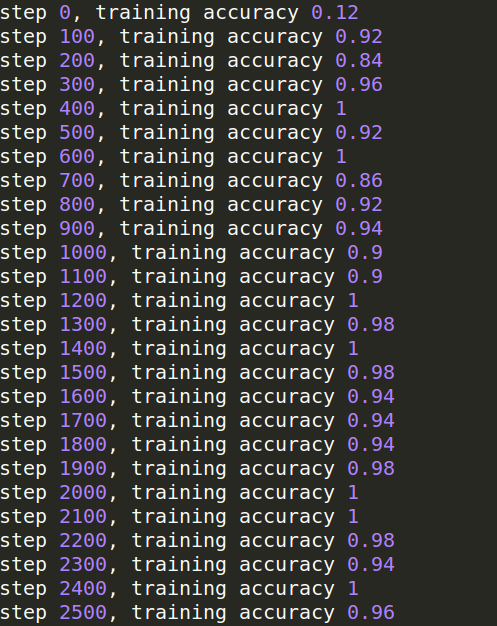

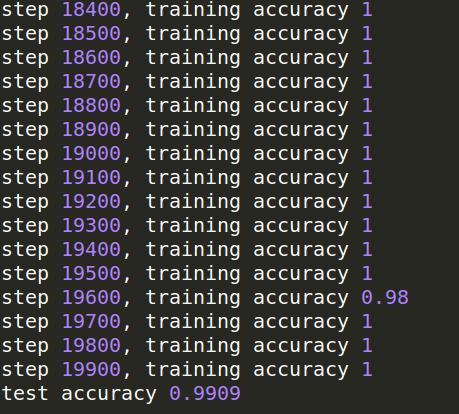

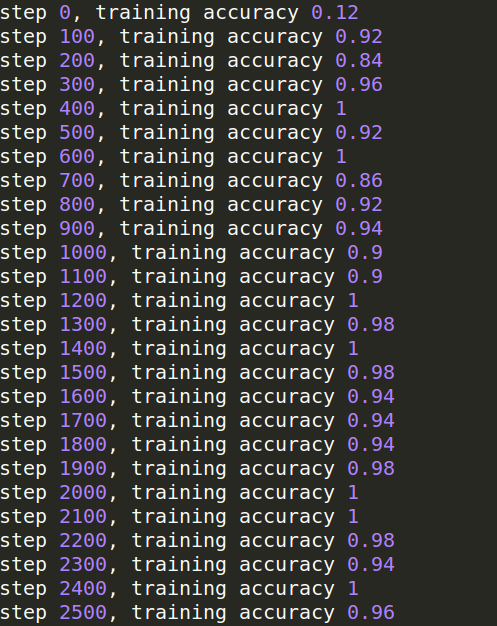

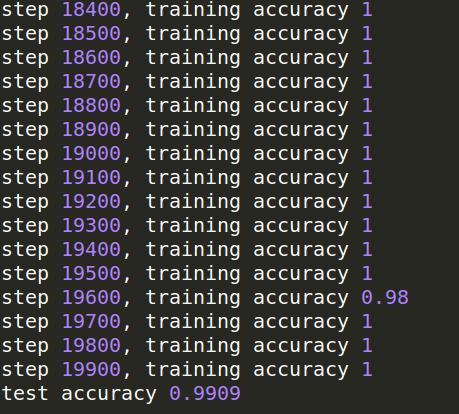

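Not every run is shown in the screenshots. Be warned that the second program (the convolutional network, trained for 20,000 steps) runs very slowly: it took nearly half an hour to finish.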
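The accuracy figures printed during these runs come from `tf.argmax` over the class axis followed by the mean of the matches. The same computation in plain Python is sketched below; the helper names are hypothetical, and this sketch resolves ties to the first index, whereas `tf.argmax` does not guarantee which index it returns on ties.

```python
def argmax(row):
    # Index of the largest value (first one on ties, in this sketch)
    return max(range(len(row)), key=lambda i: row[i])


def accuracy(predictions, one_hot_labels):
    # Fraction of rows whose predicted class equals the labelled class,
    # mirroring tf.reduce_mean(tf.cast(tf.equal(argmax(y), argmax(y_)), float))
    correct = [argmax(p) == argmax(t) for p, t in zip(predictions, one_hot_labels)]
    return sum(correct) / float(len(correct))


predictions = [
    [0.1, 0.7, 0.2],  # predicts class 1
    [0.8, 0.1, 0.1],  # predicts class 0
    [0.3, 0.3, 0.4],  # predicts class 2
    [0.2, 0.5, 0.3],  # predicts class 1
]
labels = [
    [0, 1, 0],
    [1, 0, 0],
    [0, 1, 0],
    [0, 0, 1],
]
print(accuracy(predictions, labels))  # 0.5
```

Note that the per-step `train_accuracy` is measured on a single 50-image batch, so it is noisy; the final test accuracy over all 10,000 test images is the number to compare between the two models.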

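3 Comparing the two results, the deep convolutional classifier reaches clearly higher test accuracy than the softmax model.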
