High availability for MFS (MooseFS) with Corosync, Pacemaker and DRBD

2017-10-28 16:43


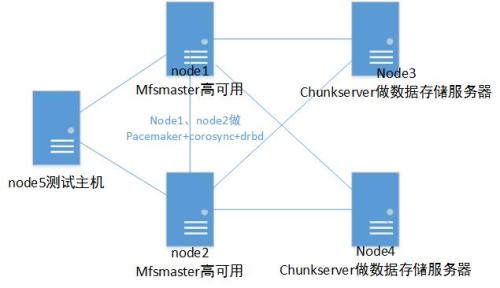

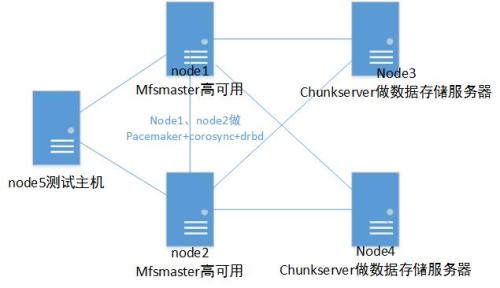

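I. Introduction to MooseFS

1. Overview

MooseFS is a distributed network file system with built-in redundancy and fault tolerance. It spreads data across separate disks or partitions on multiple physical servers and keeps several replicas of every piece of data, which makes it a good choice for distributed storage. Its master server, however, runs on a single node. That single point of failure makes a plain deployment unsuitable for most companies. In this article we use Corosync and Pacemaker together with DRBD to make the MFS master highly available, so that MFS no longer depends on one machine. The topology is shown in the diagram.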


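The key is to make the master highly available across node1 and node2. Once that is done, MooseFS as a whole is highly available. Now let's deploy. The environment (all hosts run CentOS 7):

- node1: 172.25.0.29 and node2: 172.25.0.30 (both mfsmaster, forming the HA pair)
- node3: 172.25.0.33 and node4: 172.25.0.34 (both mfschunkserver, storing the data)
- node5: 172.25.0.35 (test client)

MySQL and DRBD are already installed, so we only need to re-initialize the DRBD resource. DRBD setup reference: http://xiaozhagn.blog.51cto.com/13264135/1975397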

The Corosync and Pacemaker setup is not repeated here either. For details, see: http://xiaozhagn.blog.51cto.com/13264135/1976185

II. MFS master installation

1. On node1, download the source tarball:

    [root@node1 ~]# yum install zlib-devel -y        ## install the build dependency
    [root@node1 ~]# cd /usr/local/src/
    [root@node1 src]# wget https://github.com/moosefs/moosefs/archive/v3.0.96.tar.gz    ## download MooseFS

After the download, mount the /dev/drbd1 device we set up earlier on /usr/local/mfs.

2. Mount DRBD
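Before /dev/drbd1 is formatted and mounted below, this node has to be the DRBD Primary. In /proc/drbd, the `ro:` field lists the local role first and the peer role second. If you want a script to refuse to continue on the wrong node, here is a minimal sketch. The function name `drbd_local_role` is hypothetical, and the parsing assumes the DRBD 8.4 /proc/drbd layout shown in this article.

```bash
#!/usr/bin/env bash
# drbd_local_role MINOR
# Hypothetical helper: reads /proc/drbd-style text on stdin and prints the
# local role ("Primary" or "Secondary") of the given DRBD minor number.
# Assumes the DRBD 8.4 layout, e.g.
#   " 1: cs:Connected ro:Primary/Secondary ds:UpToDate/UpToDate C r-----"
drbd_local_role() {
    awk -v minor="$1:" '
        $1 == minor {
            for (i = 2; i <= NF; i++) {
                if ($i ~ /^ro:/) {
                    # roles[1] is the local role, roles[2] the peer role
                    split(substr($i, 4), roles, "/")
                    print roles[1]
                    exit
                }
            }
        }'
}
```

For example: `[ "$(drbd_local_role 1 < /proc/drbd)" = Primary ] || echo "not Primary, do not mount /dev/drbd1"`.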

    [root@node1 src]# mkdir /usr/local/mfs        ## create the mfs directory
    [root@node1 src]# cat /proc/drbd               ## check the DRBD state: node1 is Primary
    version: 8.4.10-1 (api:1/proto:86-101)
    GIT-hash: a4d5de01fffd7e4cde48a080e2c686f9e8cebf4c build by mockbuild@, 2017-09-15 14:23:22
     1: cs:Connected ro:Primary/Secondary ds:UpToDate/UpToDate C r-----
        ns:8192 nr:0 dw:0 dr:9120 al:0 bm:0 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:0
    [root@node1 src]# mkfs.xfs -f /dev/drbd1       ## re-format the device so we start clean
    meta-data=/dev/drbd1             isize=512    agcount=4, agsize=131066 blks
             =                       sectsz=512   attr=2, projid32bit=1
             =                       crc=1        finobt=0, sparse=0
    data     =                       bsize=4096   blocks=524263, imaxpct=25
             =                       sunit=0      swidth=0 blks
    naming   =version 2              bsize=4096   ascii-ci=0 ftype=1
    log      =internal log           bsize=4096   blocks=2560, version=2
             =                       sectsz=512   sunit=0 blks, lazy-count=1
    realtime =none                   extsz=4096   blocks=0, rtextents=0
    [root@node1 src]# useradd mfs
    [root@node1 src]# mount /dev/drbd1 /usr/local/mfs/    ## mount DRBD on the mfs directory
    [root@node1 src]# chown -R mfs:mfs /usr/local/mfs/    ## chown after mounting, so it applies to the DRBD filesystem and not the directory underneath
    [root@node1 src]# df -h
    Filesystem           Size  Used Avail Use% Mounted on
    /dev/mapper/cl-root   18G  2.5G   16G  14% /
    devtmpfs             226M     0  226M   0% /dev
    tmpfs                237M     0  237M   0% /dev/shm
    tmpfs                237M  4.6M  232M   2% /run
    tmpfs                237M     0  237M   0% /sys/fs/cgroup
    /dev/sda1           1014M  197M  818M  20% /boot
    tmpfs                 48M     0   48M   0% /run/user/0
    /dev/drbd1           2.0G   33M  2.0G   2% /usr/local/mfs

/dev/drbd1 is now mounted.

3. Install mfsmaster
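The `make install` step below writes into /usr/local/mfs, so it has to run while /dev/drbd1 is mounted there. Otherwise the binaries land on node1's local disk and node2 never sees them. A small guard can check this first. This sketch uses a hypothetical function name and reads /proc/mounts-format lines (device, mount point, type, options, ...) on stdin.

```bash
#!/usr/bin/env bash
# mounted_from DEVICE MOUNTPOINT
# Hypothetical guard: succeeds only if the /proc/mounts-format text on stdin
# contains a line whose first field is DEVICE and second field is MOUNTPOINT.
mounted_from() {
    awk -v dev="$1" -v dir="$2" '
        $1 == dev && $2 == dir { found = 1 }
        END { exit (found ? 0 : 1) }'
}
```

For example: `mounted_from /dev/drbd1 /usr/local/mfs < /proc/mounts && make install`. Note that /proc/mounts lists the mount point without a trailing slash.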

    [root@node1 src]# tar -xf v3.0.96.tar.gz
    [root@node1 src]# cd moosefs-3.0.96/
    [root@node1 moosefs-3.0.96]# ./configure --prefix=/usr/local/mfs --with-default-user=mfs --with-default-group=mfs --disable-mfschunkserver --disable-mfsmount
    [root@node1 moosefs-3.0.96]# make && make install    ## build and install
    [root@node1 moosefs-3.0.96]# cd /usr/local/mfs/etc/mfs/
    [root@node1 mfs]# ls
    mfsexports.cfg.sample  mfsmaster.cfg.sample  mfsmetalogger.cfg.sample  mfstopology.cfg.sample
    [root@node1 mfs]# mv mfsexports.cfg.sample mfsexports.cfg
    [root@node1 mfs]# mv mfsmaster.cfg.sample mfsmaster.cfg

Let's look at the default parameters:

    [root@node1 mfs]# vim mfsmaster.cfg
    # WORKING_USER = mfs                  # user that runs the master server
    # WORKING_GROUP = mfs                 # group that runs the master server
    # SYSLOG_IDENT = mfsmaster            # identifier of the master server in syslog, marking the messages it produces
    # LOCK_MEMORY = 0                     # whether to call mlockall() so the mfsmaster process is not swapped out (default 0)
    # NICE_LEVEL = -19                    # process priority (default -19 where possible; the process must be started as root)
    # EXPORTS_FILENAME = /usr/local/mfs/etc/mfs/mfsexports.cfg      # path of the exports (mount and access control) file
    # TOPOLOGY_FILENAME = /usr/local/mfs/etc/mfs/mfstopology.cfg    # path of mfstopology.cfg
    # DATA_PATH = /usr/local/mfs/var/mfs  # data directory; it mainly holds three kinds of files: changelog, sessions and stats
    # BACK_LOGS = 50                      # number of metadata change log files (default 50)
    # BACK_META_KEEP_PREVIOUS = 1         # number of previous metadata files to keep (default 1)
    # REPLICATIONS_DELAY_INIT = 300       # initial delay before replication starts (default 300 s)
    # REPLICATIONS_DELAY_DISCONNECT = 3600    # replication delay after a chunkserver disconnects (default 3600 s)
    # MATOML_LISTEN_HOST = *              # IP address to listen on for metalogger connections (default *, any address)
    # MATOML_LISTEN_PORT = 9419           # port to listen on for metalogger connections (default 9419)
    # MATOML_LOG_PRESERVE_SECONDS = 600
    # MATOCS_LISTEN_HOST = *              # IP address to listen on for chunkserver connections (default *, any address)
    # MATOCS_LISTEN_PORT = 9420           # port to listen on for chunkserver connections (default 9420)
    # MATOCL_LISTEN_HOST = *              # IP address to listen on for client (mount) connections (default *, any address)
    # MATOCL_LISTEN_PORT = 9421           # port to listen on for client (mount) connections (default 9421)
    # CHUNKS_LOOP_MAX_CPS = 100000        # maximum number of chunks the chunk loop checks per second (default 100000)
    # CHUNKS_LOOP_MIN_TIME = 300          # minimum time for one full chunk loop (default 300 s)
    # CHUNKS_SOFT_DEL_LIMIT = 10          # soft limit on chunks deleted on one chunkserver (10)
    # CHUNKS_HARD_DEL_LIMIT = 25          # hard limit on chunks deleted on one chunkserver (25)
    # CHUNKS_WRITE_REP_LIMIT = 2          # maximum number of chunks replicated to one chunkserver in one loop
    # CHUNKS_READ_REP_LIMIT = 10          # maximum number of chunks replicated from one chunkserver in one loop
    # ACCEPTABLE_DIFFERENCE = 0.1         # maximum allowed difference in space usage between chunkservers
    # SESSION_SUSTAIN_TIME = 86400        # client session timeout: 86400 s, i.e. one day
    # REJECT_OLD_CLIENTS = 0              # reject mounts from clients older than 1.6.0 (0 or 1, default 0)
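In the sample file every parameter line is commented out, so each one keeps its built-in default. A value only changes once its line is uncommented and edited. To check what a key is actually set to, a sketch along these lines can help. The helper name is hypothetical, and it assumes the `KEY = VALUE` syntax used in mfsmaster.cfg.

```bash
#!/usr/bin/env bash
# effective_setting KEY FILE
# Hypothetical helper: prints the value of the last uncommented "KEY = VALUE"
# line in FILE, or "default" when the key only appears commented out.
effective_setting() {
    local key="$1" file="$2" line
    line=$(grep -E "^[[:space:]]*${key}[[:space:]]*=" "$file" | tail -n 1)
    if [ -z "$line" ]; then
        echo "default"
        return 0
    fi
    line=${line#*=}                          # drop "KEY ="
    line=${line#"${line%%[![:space:]]*}"}    # trim leading whitespace
    line=${line%"${line##*[![:space:]]}"}    # trim trailing whitespace
    printf '%s\n' "$line"
}
```

Run against the untouched sample, `effective_setting DATA_PATH /usr/local/mfs/etc/mfs/mfsmaster.cfg` prints `default`.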

These are the official defaults, so the file can be used as-is. Next, edit the access control file, mfsexports.cfg.
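Each non-comment line of mfsexports.cfg is one rule with three whitespace-separated fields: the client address or range, the exported path, and a comma-separated option list. In the MooseFS sample, the path `.` refers to the MFSMETA meta filesystem rather than a directory. To review the rules a file actually contains, the sketch below lists them field by field. The function name is hypothetical, and it assumes comments only appear on lines of their own.

```bash
#!/usr/bin/env bash
# list_export_rules FILE
# Hypothetical helper: prints each rule of an mfsexports.cfg-style file as
# "client=<addr> path=<dir> options=<opts>", skipping blank and comment lines.
list_export_rules() {
    local addr dir opts
    while read -r addr dir opts; do
        case "$addr" in
            ''|'#'*) continue ;;   # blank line or comment line
        esac
        printf 'client=%s path=%s options=%s\n' "$addr" "$dir" "${opts:-(defaults)}"
    done < "$1"
}
```

For example: `list_export_rules /usr/local/mfs/etc/mfs/mfsexports.cfg`.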

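    [root@node1 mfs]# vim mfsexports.cfg
    *    /    rw,alldirs,mapall=mfs:mfs,password=xiaozhang
    *    .    rw

Each entry in mfsexports.cfg is one rule made of three parts. The first is the IP address or address range of the MFS clients. The second is the exported directory. The third sets the access rights granted to those clients.

Next, enable the metadata file. By default only an empty template (metadata.mfs.empty) exists, so it has to be put in place by hand: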

    [root@node1 mfs]# cp /usr/local/mfs/var/mfs/metadata.mfs.empty /usr/local/mfs/var/mfs/metadata.mfs

4. Start and test
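The `cp` above overwrites metadata.mfs unconditionally. That is only safe on the very first start: repeating it later would replace the real namespace with an empty one. Below is a guarded variant, sketched with a hypothetical function name, that copies the template only when no metadata exists yet. It also assumes, as I understand MooseFS behaviour, that a running or crashed master leaves its metadata as metadata.mfs.back, so that name is treated as "already initialised" too.

```bash
#!/usr/bin/env bash
# init_metadata_once DATA_DIR
# Hypothetical helper: copies metadata.mfs.empty to metadata.mfs only when
# neither metadata.mfs nor metadata.mfs.back exists in DATA_DIR.
init_metadata_once() {
    local dir="$1" f
    # Assumption: metadata.mfs.back is what a running (or crashed) master
    # leaves behind, so it also counts as existing metadata.
    for f in metadata.mfs metadata.mfs.back; do
        if [ -e "$dir/$f" ]; then
            echo "$dir/$f exists, not overwriting" >&2
            return 0
        fi
    done
    cp "$dir/metadata.mfs.empty" "$dir/metadata.mfs"
}
```

For example: `init_metadata_once /usr/local/mfs/var/mfs`.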

    [root@node1 mfs]# /usr/local/mfs/sbin/mfsmaster start

If it starts without problems, create a startup unit for it.

5. Startup script (systemd unit)

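    [root@node1 mfs]# cat /etc/systemd/system/mfsmaster.service
    [Unit]
    Description=mfs
    After=network.target

    [Service]
    Type=forking
    ExecStart=/usr/local/mfs/sbin/mfsmaster start
    ExecStop=/usr/local/mfs/sbin/mfsmaster stop
    PrivateTmp=true

    [Install]
    WantedBy=multi-user.target
    [root@node1 mfs]# chmod a+x /etc/systemd/system/mfsmaster.service    ## optional: unit files do not need to be executable
    [root@node1 mfs]# systemctl enable mfsmaster    ## enable at boot (for a Pacemaker-managed service it is usually better to leave it disabled and let the cluster start it)
    [root@node1 mfs]# /usr/local/mfs/sbin/mfsmaster stop    ## stop the master started by hand above; systemctl stop does not stop a process that systemd did not start
    [root@node1 ~]# umount -l /usr/local/mfs/    ## then unmount /dev/drbd1

6. Next, on node2: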

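node2 does not need its own MooseFS build. The binaries, configuration and metadata were installed onto the DRBD volume, which Pacemaker mounts on whichever node is active. node2 only needs the mount point and the mfs user (give the mfs user the same UID on both nodes, since files on the DRBD volume are owned by numeric ID):

    [root@node2 ~]# mkdir -p /usr/local/mfs
    [root@node2 ~]# useradd mfs
    [root@node2 ~]# chown -R mfs:mfs /usr/local/mfs/

Check the DRBD state: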

    [root@node2 ~]# cat /proc/drbd
    version: 8.4.10-1 (api:1/proto:86-101)
    GIT-hash: a4d5de01fffd7e4cde48a080e2c686f9e8cebf4c build by mockbuild@, 2017-09-15 14:23:22
     1: cs:Connected ro:Secondary/Primary ds:UpToDate/UpToDate C r-----
        ns:0 nr:25398 dw:25398 dr:0 al:0 bm:0 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:0

node2 is a healthy DRBD Secondary. Create the mfsmaster unit file:

    [root@node2 ~]# cat /etc/systemd/system/mfsmaster.service
    [Unit]
    Description=mfs
    After=network.target

    [Service]
    Type=forking
    ExecStart=/usr/local/mfs/sbin/mfsmaster start
    ExecStop=/usr/local/mfs/sbin/mfsmaster stop
    PrivateTmp=true

    [Install]
    WantedBy=multi-user.target
    [root@node2 ~]# chmod a+x /etc/systemd/system/mfsmaster.service

7. Configure mfsmaster high availability (on node1)

    [root@node1 ~]# crm
    crm(live)# status        ## check the cluster state
    Stack: corosync
    Current DC: node1 (version 1.1.16-12.el7_4.4-94ff4df) - partition with quorum
    Last updated: Sat Oct 28 12:36:03 2017
    Last change: Thu Oct 26 11:15:07 2017 by root via cibadmin on node1

    2 nodes configured
    0 resources configured

    Online: [ node1 node2 ]

    No resources

Pacemaker and Corosync are running normally.

8. Configure the DRBD resource

    crm(live)# configure
    crm(live)configure# primitive mfs_drbd ocf:linbit:drbd params drbd_resource=mysql op monitor role=Master interval=10 timeout=20 op monitor role=Slave interval=20 timeout=20 op start timeout=240 op stop timeout=100    ## DRBD resource
    crm(live)configure# verify
    crm(live)configure# ms ms_mfs_drbd mfs_drbd meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true"
    crm(live)configure# verify
    crm(live)configure# commit

Here drbd_resource=mysql is the name of the existing DRBD resource behind /dev/drbd1.

9. Configure the filesystem (mount) resource

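    crm(live)configure# primitive mystore ocf:heartbeat:Filesystem params device=/dev/drbd1 directory=/usr/local/mfs fstype=xfs op start timeout=60 op stop timeout=60
    crm(live)configure# verify
    crm(live)configure# colocation ms_mfs_drbd_with_mystore inf: mystore ms_mfs_drbd
    crm(live)configure# order ms_mfs_drbd_before_mystore Mandatory: ms_mfs_drbd:promote mystore:start

The order constraint mounts the filesystem only after DRBD has been promoted. In the colocation, the common form names the role explicitly (ms_mfs_drbd:Master). That ties mystore to the node where DRBD is Primary, rather than to any node running a DRBD instance.

10. Configure the mfs resource: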

    crm(live)configure# primitive mfs systemd:mfsmaster op monitor timeout=100 interval=30 op start timeout=30 interval=0 op stop timeout=30 interval=0
    crm(live)configure# colocation mfs_with_mystore inf: mfs mystore
    crm(live)configure# order mystor_befor_mfs Mandatory: mystore mfs
    crm(live)configure# verify
    WARNING: mfs: specified timeout 30 for start is smaller than the advised 100
    WARNING: mfs: specified timeout 30 for stop is smaller than the advised 100
    crm(live)configure# commit

verify only warns here, so the commit still goes through. Raising the start and stop timeouts to 100 would follow its advice.

11. Configure the VIP:

    crm(live)configure# primitive vip ocf:heartbeat:IPaddr params ip=172.25.0.100
    crm(live)configure# colocation vip_with_msf inf: vip mfs
    crm(live)configure# verify
    crm(live)configure# commit

12. Review the configuration with show

    crm(live)configure# show
    node 1: node1 \
            attributes standby=off
    node 2: node2
    primitive mfs systemd:mfsmaster \
            op monitor timeout=100 interval=30 \
            op start timeout=30 interval=0 \
            op stop timeout=30 interval=0
    primitive mfs_drbd ocf:linbit:drbd \
            params drbd_resource=mysql \
            op monitor role=Master interval=10 timeout=20 \
            op monitor role=Slave interval=20 timeout=20 \
            op start timeout=240 interval=0 \
            op stop timeout=100 interval=0
    primitive mystore Filesystem \
            params device="/dev/drbd1" directory="/usr/local/mfs" fstype=xfs \
            op start timeout=60 interval=0 \
            op stop timeout=60 interval=0
    primitive vip IPaddr \
            params ip=172.25.0.100
    ms ms_mfs_drbd mfs_drbd \
            meta master-max=1 master-node-max=1 clone-max=2 clone-node-max=1 notify=true
    colocation mfs_with_mystore inf: mfs mystore
    order ms_mfs_drbd_before_mystore Mandatory: ms_mfs_drbd:promote mystore:start
    colocation ms_mfs_drbd_with_mystore inf: mystore ms_mfs_drbd
    order mystor_befor_mfs Mandatory: mystore mfs
    colocation vip_with_msf inf: vip mfs
    property cib-bootstrap-options: \
            have-watchdog=false \
            dc-version=1.1.16-12.el7_4.4-94ff4df \
            cluster-infrastructure=corosync \
            cluster-name=mycluster \
            stonith-enabled=false \
            migration-limit=1

Check the status of each service:

    crm(live)# status
    Stack: corosync
    Current DC: node1 (version 1.1.16-12.el7_4.4-94ff4df) - partition with quorum
    Last updated: Sat Oct 28 15:24:26 2017
    Last change: Sat Oct 28 15:24:20 2017 by root via cibadmin on node1

    2 nodes configured
    5 resources configured

    Online: [ node1 node2 ]

    Full list of resources:

     Master/Slave Set: ms_mfs_drbd [mfs_drbd]
         Masters: [ node1 ]
         Slaves: [ node2 ]
     mystore    (ocf::heartbeat:Filesystem):    Started node1
     mfs        (systemd:mfsmaster):            Started node1
     vip        (ocf::heartbeat:IPaddr):        Started node1

All services are running on node1.
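The colocation constraints chain mystore, mfs and vip together, so whenever the cluster settles they should all report the same node. To check this without reading the output by eye, the sketch below parses the text printed by `crm status` or `crm_mon -1`. The function names are hypothetical, and the parsing assumes the `name (class:type): Started node` line format shown above.

```bash
#!/usr/bin/env bash
# resource_node NAME  : prints the node NAME is "Started" on (status text on stdin)
# same_node NAME...   : prints the common node, or fails if any resource is
#                       not started or they run on different nodes
# Hypothetical helpers; they assume lines like
#   " mystore    (ocf::heartbeat:Filesystem):    Started node1"
resource_node() {
    awk -v r="$1" '
        {
            line = $0
            sub(/^[ \t]+/, "", line)
            if (line ~ ("^" r "[ \t]*\\(")) {
                n = split(line, f, /[ \t:]+/)
                for (i = 1; i < n; i++)
                    if (f[i] == "Started") { print f[i + 1]; exit }
            }
        }'
}

same_node() {
    local status r node first=""
    status=$(cat)
    for r in "$@"; do
        node=$(printf '%s\n' "$status" | resource_node "$r")
        if [ -z "$node" ]; then
            echo "$r is not started" >&2
            return 1
        fi
        if [ -z "$first" ]; then
            first=$node
        elif [ "$node" != "$first" ]; then
            echo "$r runs on $node, expected $first" >&2
            return 1
        fi
    done
    printf '%s\n' "$first"
}
```

For the state above, `crm_mon -1 | same_node mystore mfs vip` prints `node1`. It fails if any of the three is stopped or running on a different node.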

III. Chunk server installation and configuration

1. Perform the same steps on node3 and node4 (only node3 is shown).
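The only interactive step on each chunk server is setting MASTER_HOST in mfschunkserver.cfg with vim. Because it has to be repeated identically on node3 and node4, it can also be done non-interactively. The sketch below assumes the `KEY = VALUE` format with commented-out defaults used by the MooseFS sample files. The function name is hypothetical, and `sed -i` is the GNU sed option available on CentOS 7.

```bash
#!/usr/bin/env bash
# set_cfg KEY VALUE FILE
# Hypothetical helper: sets "KEY = VALUE" in a MooseFS-style config file.
# An existing or commented-out KEY line is replaced in place; otherwise the
# setting is appended. VALUE must not contain "|" or "&" (special to sed here).
set_cfg() {
    local key="$1" value="$2" file="$3"
    if grep -Eq "^[#[:space:]]*${key}[[:space:]]*=" "$file"; then
        sed -i -E "s|^[#[:space:]]*${key}[[:space:]]*=.*|${key} = ${value}|" "$file"
    else
        printf '%s = %s\n' "$key" "$value" >> "$file"
    fi
}
```

For example: `set_cfg MASTER_HOST 172.25.0.100 /usr/local/mfs/etc/mfs/mfschunkserver.cfg`.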

    [root@node3 ~]# yum install zlib-devel -y        ## install the build dependency
    [root@node3 ~]# cd /usr/local/src/
    [root@node3 src]# wget https://github.com/moosefs/moosefs/archive/v3.0.96.tar.gz    ## download MooseFS
    [root@node3 src]# useradd mfs
    [root@node3 src]# tar zxvf v3.0.96.tar.gz
    [root@node3 src]# cd moosefs-3.0.96/
    [root@node3 moosefs-3.0.96]# ./configure --prefix=/usr/local/mfs --with-default-user=mfs --with-default-group=mfs --disable-mfsmaster --disable-mfsmount
    [root@node3 moosefs-3.0.96]# make && make install
    [root@node3 moosefs-3.0.96]# cd /usr/local/mfs/etc/mfs/
    [root@node3 mfs]# cp mfschunkserver.cfg.sample mfschunkserver.cfg
    [root@node3 mfs]# vim mfschunkserver.cfg
    MASTER_HOST = 172.25.0.100        ## point the chunk server at the mfsmaster virtual IP

2. Configure mfshdd.cfg

mfshdd.cfg specifies which directories on the chunk server are handed over to the master server for management. Although the entry is a directory, it is best backed by a dedicated partition.
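The chunk server stores data only in the directories listed in mfshdd.cfg, so each listed path is worth checking before the service starts. It must exist and be owned by the user the chunk server runs as (mfs here). Per the advice above, it should ideally also be a separate mount point. Below is a sketch with a hypothetical function name. It takes the first field of each non-comment line as the path and ignores anything after it.

```bash
#!/usr/bin/env bash
# check_hdd_cfg FILE OWNER
# Hypothetical pre-start check for an mfshdd.cfg-style file. For each listed
# directory it reports (as errors) a missing path or a wrong owner, and
# (as a non-fatal note) a path that is not a separate mount point.
check_hdd_cfg() {
    local cfg="$1" owner="$2" dir rest rc=0
    while read -r dir rest; do   # rest: extra fields on the line, ignored
        case "$dir" in
            ''|'#'*) continue ;;
        esac
        if [ ! -d "$dir" ]; then
            echo "missing: $dir" >&2
            rc=1
            continue
        fi
        if [ "$(stat -c %U "$dir")" != "$owner" ]; then
            echo "not owned by $owner: $dir" >&2
            rc=1
        fi
        if ! mountpoint -q "$dir"; then
            echo "note: $dir is not a separate mount point" >&2
        fi
    done < "$cfg"
    return "$rc"
}
```

For example, right before starting the chunk server: `check_hdd_cfg /usr/local/mfs/etc/mfs/mfshdd.cfg mfs`.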

    [root@node3 mfs]# cp /usr/local/mfs/etc/mfs/mfshdd.cfg.sample /usr/local/mfs/etc/mfs/mfshdd.cfg
    [root@node3 mfs]# vim /usr/local/mfs/etc/mfs/mfshdd.cfg
    /mfsdata                          ## add the directory that will store data

3. Start the chunk server:

    [root@node3 mfs]# mkdir /mfsdata
    [root@node3 mfs]# chown mfs:mfs /mfsdata/
    [root@node3 mfs]# /usr/local/mfs/sbin/mfschunkserver start

IV. Client installation (on node5)

1. Install FUSE:

    [root@node5 mfs]# yum install fuse fuse-devel -y
    [root@node5 ~]# modprobe fuse
    [root@node5 ~]# lsmod | grep fuse
    fuse                   91874  0

2. Install the mount client

    [root@node5 ~]# yum install zlib-devel -y
    [root@node5 ~]# useradd mfs
    [root@node5 ~]# cd /usr/local/src/
    [root@node5 src]# wget https://github.com/moosefs/moosefs/archive/v3.0.96.tar.gz    ## same tarball as on the other nodes
    [root@node5 src]# tar -zxvf v3.0.96.tar.gz
    [root@node5 src]# cd moosefs-3.0.96/
    [root@node5 moosefs-3.0.96]# ./configure --prefix=/usr/local/mfs --with-default-user=mfs --with-default-group=mfs --disable-mfsmaster --disable-mfschunkserver --enable-mfsmount
    [root@node5 moosefs-3.0.96]# make && make install

3. Mount the file system on the client. First create the mount point:

    [root@node5 moosefs-3.0.96]# mkdir /mfsdata
    [root@node5 moosefs-3.0.96]# chown -R mfs:mfs /mfsdata/

V. Testing

1. Test the VIP

On node1, check whether the VIP is present.
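Besides reading the `ip addr` output by eye, you can script the VIP check, which is handy when the failover test is repeated. Below is a sketch with a hypothetical function name. It looks for an `inet ADDRESS/prefix` entry in `ip addr` text on stdin. The trailing `/` in the search string keeps, for example, 172.25.0.10 from matching 172.25.0.100.

```bash
#!/usr/bin/env bash
# has_ipv4 ADDRESS
# Hypothetical helper: succeeds if the `ip addr`-style text on stdin contains
# an "inet ADDRESS/<prefix>" entry (fixed-string match, no regex).
has_ipv4() {
    grep -Fq "inet $1/"
}
```

For example: `ip addr show ens33 | has_ipv4 172.25.0.100 && echo "VIP is on this node"`.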

    [root@node1 ~]# ip addr
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
        link/ether 00:0c:29:49:e9:da brd ff:ff:ff:ff:ff:ff
        inet 172.25.0.29/24 brd 172.25.0.255 scope global ens33
           valid_lft forever preferred_lft forever
        inet 172.25.0.100/24 brd 172.25.0.255 scope global secondary ens33
           valid_lft forever preferred_lft forever
        inet6 fe80::20c:29ff:fe49:e9da/64 scope link

The VIP is up on node1. Now test mounting and writing from node5:

    [root@node5 ~]# /usr/local/mfs/bin/mfsmount /mfsdata -H 172.25.0.100 -p
    MFS Password:        ## enter the password set on the mfsmaster (the password= option in mfsexports.cfg)
    mfsmaster accepted connection with parameters: read-write,restricted_ip,map_all ; root mapped to 1004:1004 ; users mapped to 1004:1004
    [root@node5 ~]# df -h
    Filesystem           Size  Used Avail Use% Mounted on
    /dev/mapper/cl-root   18G  1.9G   17G  11% /
    devtmpfs             226M     0  226M   0% /dev
    tmpfs                237M     0  237M   0% /dev/shm
    tmpfs                237M  4.6M  232M   2% /run
    tmpfs                237M     0  237M   0% /sys/fs/cgroup
    /dev/sda1           1014M  139M  876M  14% /boot
    tmpfs                 48M     0   48M   0% /run/user/0
    172.25.0.100:9421     36G  4.1G   32G  12% /mfsdata

MooseFS is now mounted on /mfsdata through the virtual IP:

    [root@node5 ~]# cd /mfsdata/
    [root@node5 mfsdata]# touch xiaozhang.txt
    [root@node5 mfsdata]# echo xiaozhang > xiaozhang.txt
    [root@node5 mfsdata]# cat xiaozhang.txt
    xiaozhang

The data was written successfully, so the goal is achieved.
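The touch/echo/cat sequence above is a manual read-back test. The scripted equivalent below uses a hypothetical function name. It writes a unique token to a temporary file in the mounted directory, reads it back and removes the file, so you can rerun it after every failover without leaving test files behind.

```bash
#!/usr/bin/env bash
# rw_check DIR
# Hypothetical helper: writes a unique token to a temporary file in DIR,
# reads it back and removes the file. Succeeds only if the token round-trips.
rw_check() {
    local dir="$1" file token got
    token="rw-check-$$-$RANDOM"
    file=$(mktemp "$dir/.rw_check.XXXXXX") || return 1
    printf '%s\n' "$token" > "$file" || { rm -f "$file"; return 1; }
    got=$(cat "$file")
    rm -f "$file"
    [ "$got" = "$token" ]
}
```

For example: `rw_check /mfsdata && echo "/mfsdata is writable"`.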

2. High-availability test

First, unmount the file system on node5:

    [root@node5 ~]# umount -l /mfsdata/
    [root@node5 ~]# df -h
    Filesystem           Size  Used Avail Use% Mounted on
    /dev/mapper/cl-root   18G  1.9G   17G  11% /
    devtmpfs             226M     0  226M   0% /dev
    tmpfs                237M     0  237M   0% /dev/shm
    tmpfs                237M  4.6M  232M   2% /run
    tmpfs                237M     0  237M   0% /sys/fs/cgroup
    /dev/sda1           1014M  139M  876M  14% /boot
    tmpfs                 48M     0   48M   0% /run/user/0

Then go to node1 and put it into standby:

    [root@node1 ~]# crm
    crm(live)# node standby
    crm(live)# status
    Stack: corosync
    Current DC: node1 (version 1.1.16-12.el7_4.4-94ff4df) - partition with quorum
    Last updated: Sat Oct 28 16:10:18 2017
    Last change: Sat Oct 28 16:10:04 2017 by root via crm_attribute on node1

    2 nodes configured
    5 resources configured

    Node node1: standby
    Online: [ node2 ]

    Full list of resources:

     Master/Slave Set: ms_mfs_drbd [mfs_drbd]
         Masters: [ node2 ]
         Stopped: [ node1 ]
     mystore    (ocf::heartbeat:Filesystem):    Started node2
     mfs        (systemd:mfsmaster):            Started node2
     vip        (ocf::heartbeat:IPaddr):        Started node2

All services have switched over to node2. On node2, check the VIP resource:

    [root@node2 system]# ip addr
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
        link/ether 00:0c:29:64:00:b1 brd ff:ff:ff:ff:ff:ff
        inet 172.25.0.30/24 brd 172.25.0.255 scope global ens33
           valid_lft forever preferred_lft forever
        inet 172.25.0.100/24 brd 172.25.0.255 scope global secondary ens33
           valid_lft forever preferred_lft forever
        inet6 fe80::20c:29ff:fe64:b1/64 scope link
           valid_lft forever preferred_lft forever

Disk mounts:

    [root@node2 system]# df -h
    Filesystem           Size  Used Avail Use% Mounted on
    /dev/mapper/cl-root   18G  2.5G   16G  14% /
    devtmpfs             226M     0  226M   0% /dev
    tmpfs                237M   39M  198M  17% /dev/shm
    tmpfs                237M  8.6M  228M   4% /run
    tmpfs                237M     0  237M   0% /sys/fs/cgroup
    /dev/sda1           1014M  197M  818M  20% /boot
    tmpfs                 48M     0   48M   0% /run/user/0
    /dev/drbd1           2.0G   40M  2.0G   2% /usr/local/mfs

DRBD is now mounted on node2. Status of the mfs service:

    [root@node2 system]# systemctl status mfsmaster
    mfsmaster.service - Cluster Controlled mfsmaster
       Loaded: loaded (/etc/systemd/system/mfsmaster.service; disabled; vendor preset: disabled)
      Drop-In: /run/systemd/system/mfsmaster.service.d
               └─50-pacemaker.conf
       Active: active (running) since Sat 2017-10-28 16:10:17 CST; 6min ago
      Process: 12283 ExecStart=/usr/local/mfs/sbin/mfsmaster start (code=exited, status=0/SUCCESS)
     Main PID: 12285 (mfsmaster)
       CGroup: /system.slice/mfsmaster.service
               └─12285 /usr/local/mfs/sbin/mfsmaster start

The service is running. Now go to node5 and mount the file system again:

    [root@node5 ~]# /usr/local/mfs/bin/mfsmount /mfsdata -H 172.25.0.100 -p
    MFS Password:
    mfsmaster accepted connection with parameters: read-write,restricted_ip,map_all ; root mapped to 1004:1004 ; users mapped to 1004:1004
    [root@node5 ~]# df -h
    Filesystem           Size  Used Avail Use% Mounted on
    /dev/mapper/cl-root   18G  1.9G   17G  11% /
    devtmpfs             226M     0  226M   0% /dev
    tmpfs                237M     0  237M   0% /dev/shm
    tmpfs                237M  4.6M  232M   2% /run
    tmpfs                237M     0  237M   0% /sys/fs/cgroup
    /dev/sda1           1014M  139M  876M  14% /boot
    tmpfs                 48M     0   48M   0% /run/user/0
    172.25.0.100:9421     36G  4.2G   32G  12% /mfsdata
    [root@node5 ~]# cat /mfsdata/xiaozhang.txt
    xiaozhang

The data is still there, which shows that MFS high availability based on Corosync, Pacemaker and DRBD works.

In my view, building MFS high availability on Corosync, Pacemaker and DRBD is a good way to remove the MFS single point of failure. An HA master does more than cover a company's data storage needs. When the master fails, its role can also be moved to the other host quickly: in this test, node2 was running mfsmaster about 13 seconds after node1 was put into standby (16:10:04 to 16:10:17). So when using MFS, I recommend deploying the master in an HA configuration.
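When this failover test is run from a script, calling `status` once right after `node standby` can catch the resources while they are still moving. Polling for the expected state avoids that. Below is a generic sketch with a hypothetical function name (it is not part of crmsh).

```bash
#!/usr/bin/env bash
# wait_until TIMEOUT INTERVAL COMMAND [ARGS...]
# Hypothetical helper: runs COMMAND every INTERVAL seconds (INTERVAL >= 1)
# until it succeeds (returns 0) or about TIMEOUT seconds pass (returns 1).
wait_until() {
    local timeout="$1" interval="$2" waited=0
    shift 2
    [ "$interval" -ge 1 ] || return 2   # avoid an endless busy loop
    until "$@"; do
        if [ "$waited" -ge "$timeout" ]; then
            return 1
        fi
        sleep "$interval"
        waited=$((waited + interval))
    done
    return 0
}
```

For example, `wait_until 120 5 sh -c 'crm_mon -1 | grep -q "mfsmaster.*Started node2"'` waits up to about two minutes for the mfs resource to report Started on node2.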
