Keepalived High-Availability Clusters: Application Scenarios and Configuration

2017-03-07 20:21


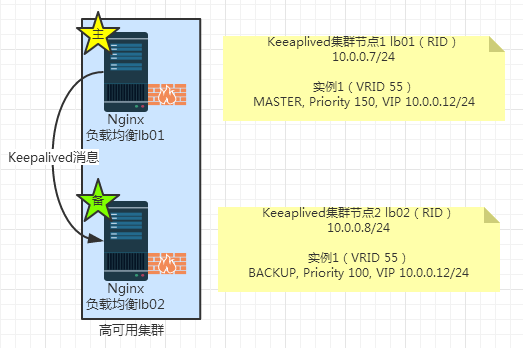

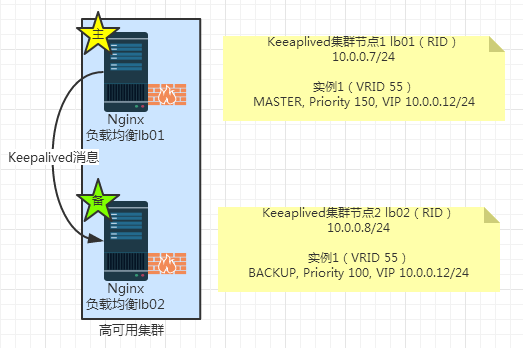

1.Keepalived单实例主备模式集群方案

这是最简单的模式,不只考虑高可用集群,先不考虑后方的Nginx负载均衡集群,即后端的服务器集群,参考下面的图示:

其对应的Keepalived核心配置如下:

lb01

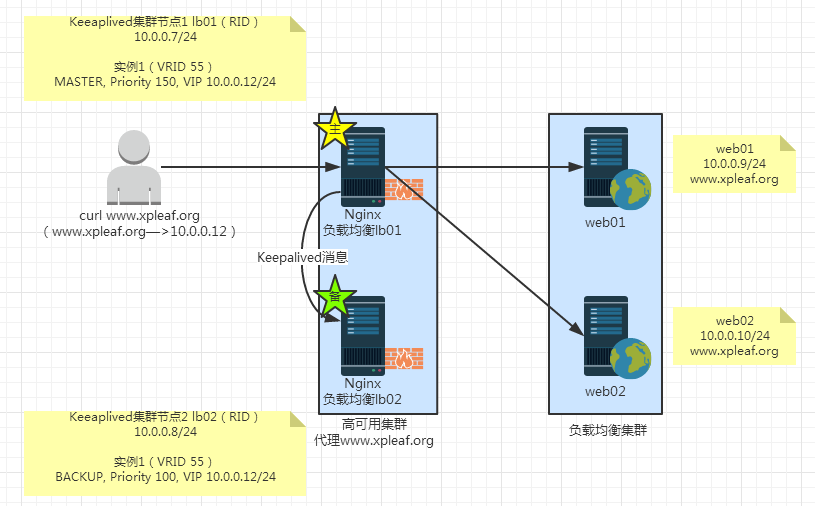

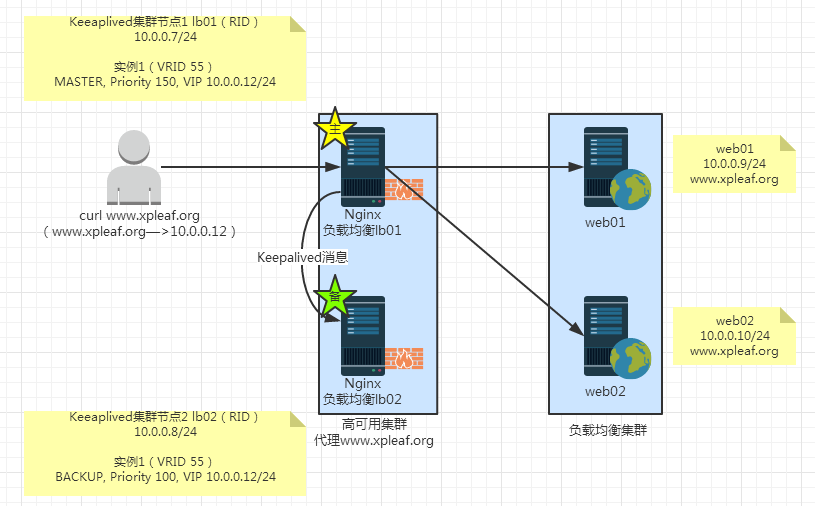

2.Nginx负载均衡集群配合Keepalived单实例主备模式集群方案

在1的基础上,同时考虑后端的Nginx负载均衡集群,参考下面的图示:

其对应的Keepalived和Nginx配置如下:

lb01

Keepalive配置:

Keepalived配置:

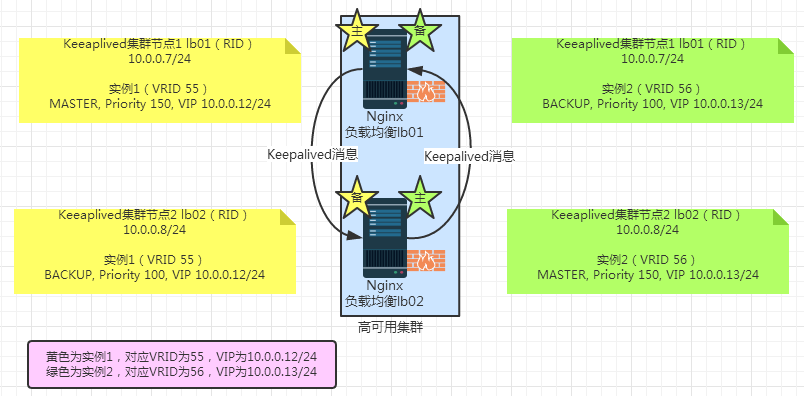

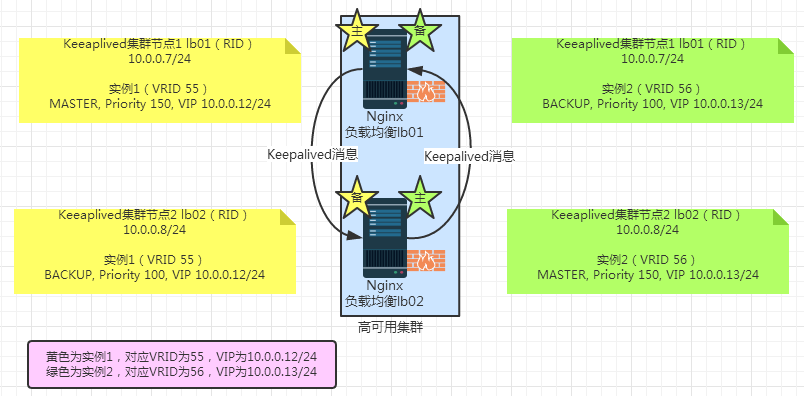

3.Keepalived双实例双主模式集群方案

参考下面图示:

其对应的Keepalive核心配置如下:

lb01

然后在每个高可用集群节点中,为两个不同的业务分别配置两个不同的upstream服务器池,从而实现前端反向代理高可用和负载均衡,高可用集群后端的服务器池在不同的业务中也能提供负载均衡。

结合上面的分析,就可以得到Nginx负载均衡配合Keepalived双实例双主模式的场景了。

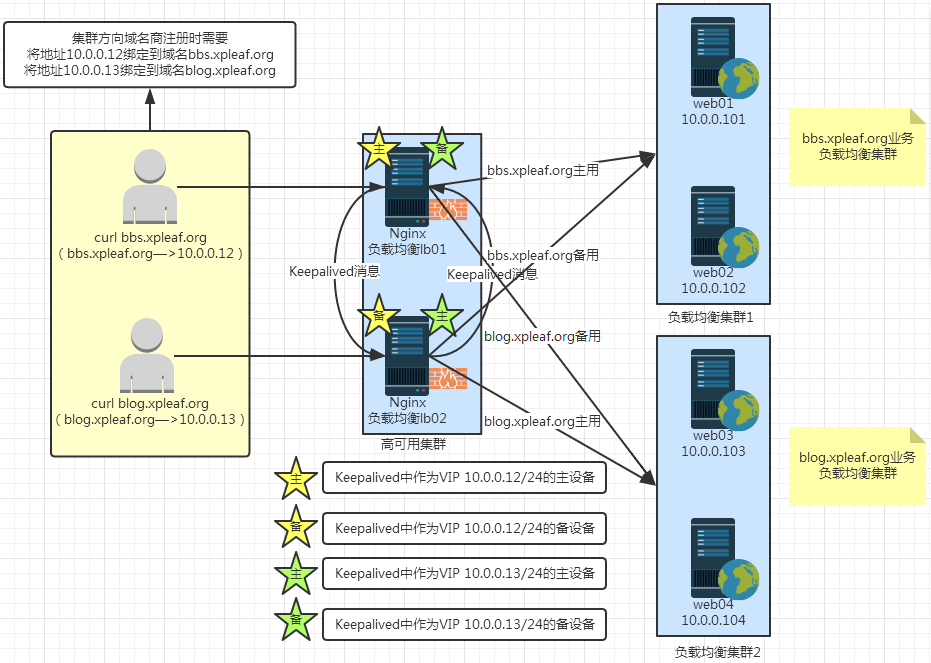

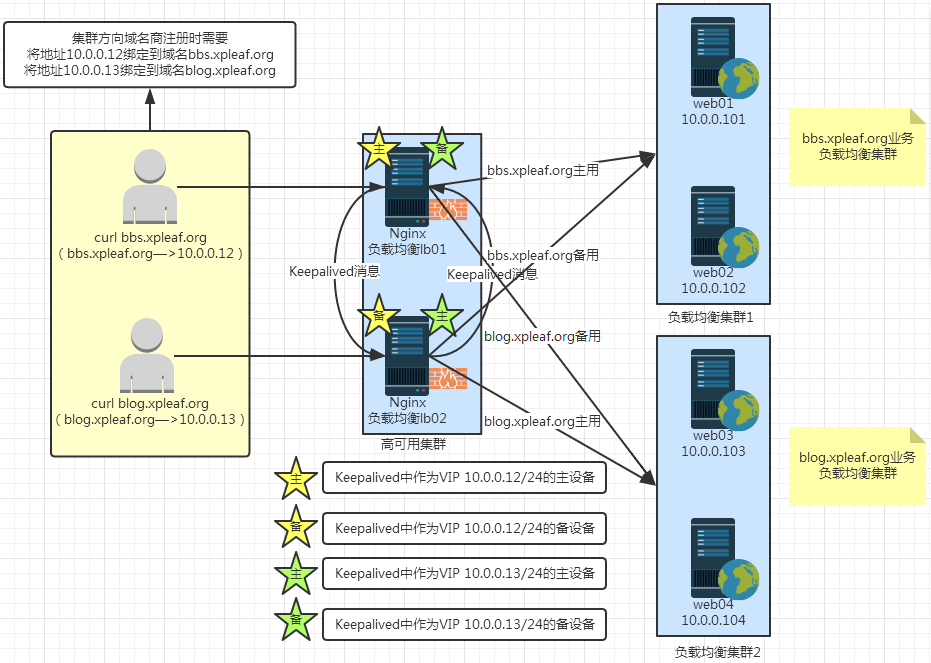

4.Nginx负载均衡集群配合Keepalived双实例双主模式集群方案

根据3的分析结果,参考下面的图示,注意下面这个图中的Keepalive配置与3的是一样的:

对应Nginx的配置如下:

lb01

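This is the simplest mode. It considers only the high-availability cluster itself and, for now, leaves aside the Nginx load-balancing cluster behind it (that is, the backend server cluster). See the corresponding diagram.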
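The master/backup behaviour of this mode can be illustrated with a simplified model of VRRP election: among the nodes that are still alive, the one with the highest `priority` holds the VIP. The Python below is only a sketch under that assumption. It uses the priorities from the configuration in this section (lb01 = 150, lb02 = 100), assumes Keepalived's default preemption, and ignores advertisement timers, authentication and equal-priority tie-breaking. `vip_holder` and `PRIORITIES` are hypothetical names, not part of Keepalived.

```python
from typing import Dict, Optional, Set

# Hypothetical model (not Keepalived code): priorities copied from the
# vrrp_instance VI_1 blocks of lb01 and lb02 in this section.
PRIORITIES: Dict[str, int] = {"lb01": 150, "lb02": 100}
VIP = "10.0.0.12"


def vip_holder(priorities: Dict[str, int], alive: Set[str]) -> Optional[str]:
    """Return the node that holds the VIP in this simplified VRRP model.

    Assumptions: the live node with the highest priority wins, preemption is
    enabled (Keepalived's default), and advertisement timers, authentication
    and equal-priority tie-breaking are ignored.
    """
    candidates = [node for node in priorities if node in alive]
    if not candidates:
        return None  # no live node: the VIP is unavailable
    return max(candidates, key=lambda node: priorities[node])


if __name__ == "__main__":
    scenarios = [
        ("both nodes up", {"lb01", "lb02"}),
        ("lb01 fails", {"lb02"}),
        ("lb01 recovers", {"lb01", "lb02"}),
        ("both nodes down", set()),
    ]
    for label, alive in scenarios:
        print(f"{label}: {VIP} -> {vip_holder(PRIORITIES, alive)}")
```

Run as a script, it reports lb01, lb02, lb01 and None as the VIP holder for the four scenarios: failover to the backup, then failback to the higher-priority master once it returns.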

The corresponding core Keepalived configuration is as follows:

lb01

```
global_defs {
    notification_email {
        acassen@firewall.loc
        failover@firewall.loc
        sysadmin@firewall.loc
    }
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 127.0.0.1
    smtp_connect_timeout 30
    router_id lb01    # identifies this node in the Keepalived HA cluster, so it must be unique
}

vrrp_instance VI_1 {
    state MASTER            # master
    interface eth0
    virtual_router_id 55    # must be the same on the master and the backup
    priority 150
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.12/24 dev eth0 label eth0:1
    }
}
```

lb02

```
global_defs {
    notification_email {
        acassen@firewall.loc
        failover@firewall.loc
        sysadmin@firewall.loc
    }
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 192.168.200.1
    smtp_connect_timeout 30
    router_id lb02
}

vrrp_instance VI_1 {
    state BACKUP
    interface eth0
    virtual_router_id 55
    priority 100
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.12/24 dev eth0 label eth0:1
    }
}
```

2. Nginx load-balancing cluster with Keepalived in single-instance master/backup mode

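Building on scheme 1, this setup also takes the Nginx load balancing across the backend server cluster into account. See the corresponding diagram.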
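The Nginx layer in this setup spreads requests over the `upstream` pool. When no balancing method is specified, Nginx uses weighted round-robin, which its source implements as a "smooth" weighted round-robin: on each pick, every peer's current weight grows by its configured weight, the peer with the largest current weight is chosen, and the total weight is subtracted from that peer. The Python below is a minimal sketch of that selection step only; it ignores failure handling (`max_fails`, `fail_timeout`, effective-weight adjustments), and the class name `SmoothWeightedRR` is hypothetical.

```python
from typing import Dict, List


class SmoothWeightedRR:
    """Hypothetical sketch of smooth weighted round-robin (no failure handling)."""

    def __init__(self, weights: Dict[str, int]) -> None:
        if not weights or any(w <= 0 for w in weights.values()):
            raise ValueError("need at least one peer and only positive weights")
        self.weights = dict(weights)
        self.current = {peer: 0 for peer in weights}
        self.total = sum(weights.values())

    def pick(self) -> str:
        # 1. raise every peer's current weight by its configured weight
        for peer, weight in self.weights.items():
            self.current[peer] += weight
        # 2. choose the peer with the largest current weight (first one wins ties)
        best = max(self.current, key=lambda p: self.current[p])
        # 3. lower the chosen peer by the total weight so the others catch up
        self.current[best] -= self.total
        return best

    def sequence(self, n: int) -> List[str]:
        return [self.pick() for _ in range(n)]


if __name__ == "__main__":
    # www_server_pools from the Nginx configuration in this section (weight=1 each)
    pool = SmoothWeightedRR({"10.0.0.9:80": 1, "10.0.0.10:80": 1})
    print(pool.sequence(4))
    # ['10.0.0.9:80', '10.0.0.10:80', '10.0.0.9:80', '10.0.0.10:80']
```

With the equal weights used in this article (`weight=1`), the result is plain alternation between 10.0.0.9 and 10.0.0.10. The smoothing only matters for unequal weights: weights 5/1/1 for peers a/b/c yield a a b a c a a rather than a burst of five a's.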

The corresponding Keepalived and Nginx configurations are as follows:

lb01

Keepalived configuration:

```
global_defs {
    notification_email {
        acassen@firewall.loc
        failover@firewall.loc
        sysadmin@firewall.loc
    }
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 127.0.0.1
    smtp_connect_timeout 30
    router_id lb01
}

vrrp_instance VI_1 {
    state MASTER
    interface eth0
    virtual_router_id 55
    priority 150
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.12/24 dev eth0 label eth0:1
    }
}
```

Nginx configuration (`[root@lb01 conf]# cat nginx.conf`):

```nginx
worker_processes 1;

events {
    worker_connections 1024;
}

http {
    include mime.types;
    default_type application/octet-stream;
    sendfile on;
    keepalive_timeout 65;

    upstream www_server_pools {
        server 10.0.0.9:80 weight=1;
        server 10.0.0.10:80 weight=1;
    }

    server {
        listen 10.0.0.12:80;
        server_name www.xpleaf.org;

        location / {
            proxy_pass http://www_server_pools;
            proxy_set_header Host $host;
            proxy_set_header X-Forwarded-For $remote_addr;
        }
    }
}
```

lb02

Keepalived configuration:

```
global_defs {
    notification_email {
        acassen@firewall.loc
        failover@firewall.loc
        sysadmin@firewall.loc
    }
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 127.0.0.1
    smtp_connect_timeout 30
    router_id lb02
}

vrrp_instance VI_1 {
    state BACKUP
    interface eth0
    virtual_router_id 55
    priority 100
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.12/24 dev eth0 label eth0:1
    }
}
```

Nginx configuration (`[root@lb02 conf]# cat nginx.conf`):

```nginx
worker_processes 1;

events {
    worker_connections 1024;
}

http {
    include mime.types;
    default_type application/octet-stream;
    sendfile on;
    keepalive_timeout 65;

    upstream www_server_pools {
        server 10.0.0.9:80 weight=1;
        server 10.0.0.10:80 weight=1;
    }

    server {
        listen 10.0.0.12:80;
        server_name www.xpleaf.org;

        location / {
            proxy_pass http://www_server_pools;
            proxy_set_header Host $host;
            proxy_set_header X-Forwarded-For $remote_addr;
        }
    }
}
```

3. Keepalived cluster in dual-instance dual-master mode

See the corresponding diagram.

The corresponding core Keepalived configuration is as follows:

lb01

```
global_defs {
    notification_email {
        acassen@firewall.loc
        failover@firewall.loc
        sysadmin@firewall.loc
    }
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 127.0.0.1
    smtp_connect_timeout 30
    router_id lb01
}

vrrp_instance VI_1 {
    state MASTER
    interface eth0
    virtual_router_id 55
    priority 150
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.12/24 dev eth0 label eth0:1
    }
}

vrrp_instance VI_2 {
    state BACKUP
    interface eth0
    virtual_router_id 56    # must differ from VI_1 and match VI_2 on lb02
    priority 100
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.13/24 dev eth0 label eth0:2
    }
}
```

lb02

```
global_defs {
    notification_email {
        acassen@firewall.loc
        failover@firewall.loc
        sysadmin@firewall.loc
    }
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 192.168.200.1
    smtp_connect_timeout 30
    router_id lb02
}

vrrp_instance VI_1 {
    state BACKUP
    interface eth0
    virtual_router_id 55
    priority 100
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.12/24 dev eth0 label eth0:1
    }
}

vrrp_instance VI_2 {
    state MASTER
    interface eth0
    virtual_router_id 56
    priority 150
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.13/24 dev eth0 label eth0:2
    }
}
```

This way, the resources of both Keepalived nodes are fully used, and the two instances can serve different applications. For example, instance 1 (VIP 10.0.0.12, master on lb01) can be the primary for bbs.xpleaf.org, and instance 2 (VIP 10.0.0.13, master on lb02) the primary for blog.xpleaf.org. On each HA node, a separate upstream server pool is then configured for each of the two applications. The front-end reverse proxy is thus both highly available and load balanced, and the server pools behind the HA cluster also provide load balancing for each application.
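Dual-master mode only works if both nodes agree on each instance. The sketch below encodes a few consistency checks: an instance uses the same `virtual_router_id` and VIP on both nodes, each instance on a node has its own `virtual_router_id`, the two priorities differ, and the node declared `state MASTER` is also the one with the higher priority (the one that actually wins the election). It works on hand-copied Python dictionaries rather than parsing `keepalived.conf`, uses 56 as VI_2's ID on both nodes as lb02's configuration does, and `check_pair` and the data layout are hypothetical.

```python
import copy
from typing import Any, Dict, List

# Hypothetical data layout: node -> vrrp_instance name -> selected settings,
# copied by hand from the keepalived.conf files of the dual-master setup.
Config = Dict[str, Dict[str, Dict[str, Any]]]

CONFIG: Config = {
    "lb01": {
        "VI_1": {"state": "MASTER", "vrid": 55, "priority": 150, "vip": "10.0.0.12/24"},
        "VI_2": {"state": "BACKUP", "vrid": 56, "priority": 100, "vip": "10.0.0.13/24"},
    },
    "lb02": {
        "VI_1": {"state": "BACKUP", "vrid": 55, "priority": 100, "vip": "10.0.0.12/24"},
        "VI_2": {"state": "MASTER", "vrid": 56, "priority": 150, "vip": "10.0.0.13/24"},
    },
}


def check_pair(config: Config) -> List[str]:
    """Return a list of consistency problems for a two-node VRRP setup."""
    nodes = sorted(config)
    if len(nodes) != 2:
        return [f"expected two nodes, got {len(nodes)}"]
    problems: List[str] = []
    # Each instance on a node needs its own virtual_router_id.
    for node in nodes:
        vrids = [inst["vrid"] for inst in config[node].values()]
        if len(vrids) != len(set(vrids)):
            problems.append(f"{node}: virtual_router_id reused: {sorted(vrids)}")
    a, b = nodes
    for name in sorted(set(config[a]) | set(config[b])):
        if name not in config[a] or name not in config[b]:
            problems.append(f"{name}: not defined on both nodes")
            continue
        ia, ib = config[a][name], config[b][name]
        # The same instance must use the same router ID and VIP on both nodes.
        for key in ("vrid", "vip"):
            if ia[key] != ib[key]:
                problems.append(f"{name}: {key} differs ({ia[key]} vs {ib[key]})")
        if ia["priority"] == ib["priority"]:
            problems.append(f"{name}: equal priority {ia['priority']} on both nodes")
        # Exactly one node should be declared MASTER, and it should also hold
        # the higher priority, since priority is what decides the election.
        masters = [n for n in nodes if config[n][name]["state"] == "MASTER"]
        if len(masters) != 1:
            problems.append(f"{name}: expected one MASTER, found {masters}")
        elif config[masters[0]][name]["priority"] < max(ia["priority"], ib["priority"]):
            problems.append(f"{name}: MASTER {masters[0]} has the lower priority")
    return problems


if __name__ == "__main__":
    print(check_pair(CONFIG))  # []
    reused = copy.deepcopy(CONFIG)
    reused["lb01"]["VI_2"]["vrid"] = 55  # VI_2 on lb01 reusing VI_1's ID
    for problem in check_pair(reused):
        print(problem)
```

For the variant where lb01's VI_2 reuses ID 55, the sketch reports two problems: the ID is reused on lb01, and VI_2's ID differs between the nodes (55 vs 56).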

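Combining the analysis above gives the scenario of Nginx load balancing combined with Keepalived in dual-instance dual-master mode.

4. Nginx load-balancing cluster with Keepalived in dual-instance dual-master mode

This scenario builds on the analysis in scheme 3 (see the corresponding diagram); its Keepalived configuration is the same as in scheme 3.

The corresponding Nginx configuration is as follows:

lb01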

```nginx
worker_processes 1;

events {
    worker_connections 1024;
}

http {
    include mime.types;
    default_type application/octet-stream;
    sendfile on;
    keepalive_timeout 65;

    upstream bbs_server_pools {    # server pool for the bbs application
        server 10.0.0.101:80 weight=1;
        server 10.0.0.102:80 weight=1;
        # assume 10.0.0.101 and 10.0.0.102 are the two cluster nodes of the bbs application
    }

    upstream blog_server_pools {   # server pool for the blog application
        server 10.0.0.103:80 weight=1;
        server 10.0.0.104:80 weight=1;
        # assume 10.0.0.103 and 10.0.0.104 are the two cluster nodes of the blog application
    }

    server {
        listen 10.0.0.12:80;
        server_name bbs.xpleaf.org;

        location / {
            proxy_pass http://bbs_server_pools;
            proxy_set_header X-Forwarded-For $remote_addr;
            proxy_set_header Host $host;
        }
    }

    server {
        listen 10.0.0.13:80;
        server_name blog.xpleaf.org;

        location / {
            proxy_pass http://blog_server_pools;
            proxy_set_header X-Forwarded-For $remote_addr;
            proxy_set_header Host $host;
        }
    }
}
```

lb02

```nginx
worker_processes 1;

events {
    worker_connections 1024;
}

http {
    include mime.types;
    default_type application/octet-stream;
    sendfile on;
    keepalive_timeout 65;

    upstream bbs_server_pools {    # server pool for the bbs application
        server 10.0.0.101:80 weight=1;
        server 10.0.0.102:80 weight=1;
        # assume 10.0.0.101 and 10.0.0.102 are the two cluster nodes of the bbs application
    }

    upstream blog_server_pools {   # server pool for the blog application
        server 10.0.0.103:80 weight=1;
        server 10.0.0.104:80 weight=1;
        # assume 10.0.0.103 and 10.0.0.104 are the two cluster nodes of the blog application
    }

    server {
        listen 10.0.0.12:80;
        server_name bbs.xpleaf.org;

        location / {
            proxy_pass http://bbs_server_pools;
            proxy_set_header X-Forwarded-For $remote_addr;
            proxy_set_header Host $host;
        }
    }

    server {
        listen 10.0.0.13:80;
        server_name blog.xpleaf.org;

        location / {
            proxy_pass http://blog_server_pools;
            proxy_set_header X-Forwarded-For $remote_addr;
            proxy_set_header Host $host;
        }
    }
}
```

As you can see, the Nginx configuration is identical on both load balancers. Note that each node's Nginx also listens on a VIP the node does not currently hold (the backup's VIP in scheme 2, the peer's VIP here). On Linux such a `listen` only succeeds if non-local binds are allowed (`net.ipv4.ip_nonlocal_bind = 1`); otherwise Nginx fails to start with "Cannot assign requested address".
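Since the Nginx configuration must match on both load balancers, one way to keep the two copies in sync is to render it from a single description and deploy the same output to lb01 and lb02. The Python below is a minimal sketch of that idea, not part of the original setup: the `SITES` mapping, the `Site` tuple and `render_nginx_conf` are hypothetical, and the output mirrors the `upstream` and `server` blocks of this section (with the backend comments omitted).

```python
from typing import Dict, List, NamedTuple


class Site(NamedTuple):
    vip: str             # address Nginx listens on (a Keepalived VIP)
    server_name: str
    backends: List[str]  # "ip:port" of the upstream pool members


# Hypothetical single source of truth for both lb01 and lb02.
SITES: Dict[str, Site] = {
    "bbs": Site("10.0.0.12", "bbs.xpleaf.org", ["10.0.0.101:80", "10.0.0.102:80"]),
    "blog": Site("10.0.0.13", "blog.xpleaf.org", ["10.0.0.103:80", "10.0.0.104:80"]),
}


def render_nginx_conf(sites: Dict[str, Site]) -> str:
    """Render one nginx.conf text; the same text is deployed to every node."""
    lines = [
        "worker_processes 1;",
        "events {",
        "    worker_connections 1024;",
        "}",
        "http {",
        "    include mime.types;",
        "    default_type application/octet-stream;",
        "    sendfile on;",
        "    keepalive_timeout 65;",
    ]
    # One upstream pool per application, named <app>_server_pools.
    for name, site in sites.items():
        lines.append(f"    upstream {name}_server_pools {{")
        lines += [f"        server {backend} weight=1;" for backend in site.backends]
        lines.append("    }")
    # One server block per application, bound to that application's VIP.
    for name, site in sites.items():
        lines += [
            "    server {",
            f"        listen {site.vip}:80;",
            f"        server_name {site.server_name};",
            "        location / {",
            f"            proxy_pass http://{name}_server_pools;",
            "            proxy_set_header X-Forwarded-For $remote_addr;",
            "            proxy_set_header Host $host;",
            "        }",
            "    }",
        ]
    lines.append("}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # The same text would be written to nginx.conf on both lb01 and lb02.
    print(render_nginx_conf(SITES))
```

Adding a third application then means adding one `SITES` entry (plus a matching VRRP instance), rather than editing two files by hand.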
