Installing Spark 2.2.1 and Configuring PyCharm to Connect to Spark

2018-03-07 22:30

I. Installing Spark in Standalone (Single-Machine) Mode

Installing Spark 2.2.1 on Windows 10

1. Prerequisites

JDK 8u161 with NetBeans 8.2:

http://www.oracle.com/technetwork/java/javase/downloads/jdk-netbeans-jsp-142931.html

Spark: spark-2.2.1-bin-hadoop2.7:

https://spark.apache.org/downloads.html

winutils.exe: download the 64-bit winutils.exe built for hadoop-2.7:

https://github.com/rucyang/hadoop.dll-and-winutils.exe-for-hadoop2.7.3-on-windows_X64/tree/master/bin

hadoop-2.7.3:

https://archive.apache.org/dist/hadoop/common/

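scala-2.11.8: download it from the official Scala website.

2. Install Java by double-clicking the installer.

3. Extract Spark and Hadoop to a directory of your choice. While extracting Hadoop, a prompt may appear saying that administrator privileges are required. (After downloading the archive, extract it and rename the folder to hadoop; handle Spark the same way.) For a workaround, see: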

https://jingyan.baidu.com/article/6079ad0e92cc8d28ff86dbc0.html?st=2&net_type=&bd_page_type=1&os=0&rst=&word=win7%E6%80%8E%E6%A0%B7%E8%A7%A3%E5%8E%8B%E6%96%87%E4%BB%B6

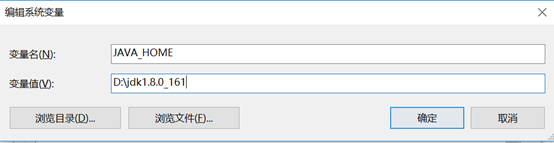

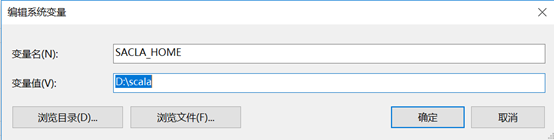

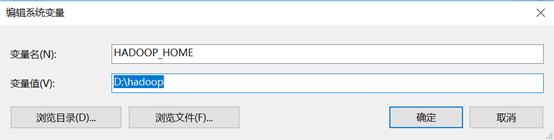

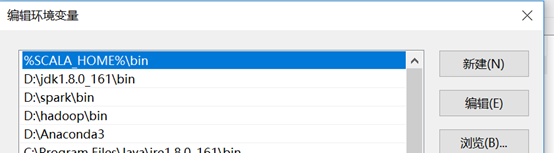

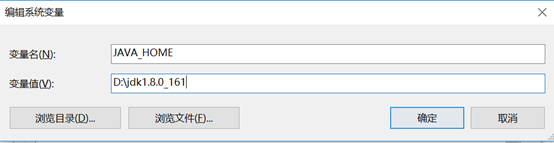

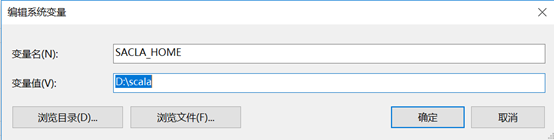

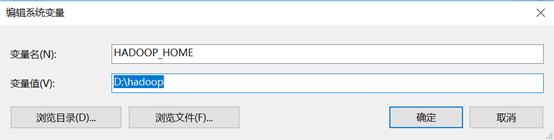

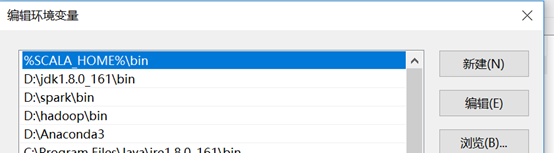

4. Set the environment variables

Edit the system variable PATH and add the bin directories of Java, Spark, Hadoop, and Scala to it.

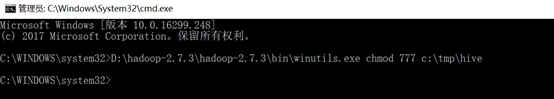

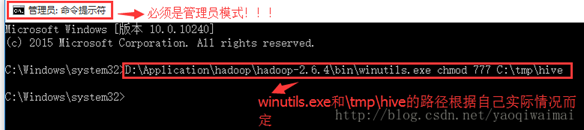

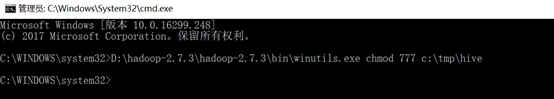

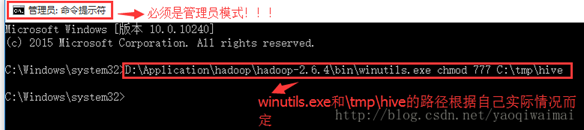
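To confirm that the PATH changes took effect, a small check like the following can be run from a newly opened terminal. This is only a sketch: the helper name `find_missing_tools` and the launcher names (`java`, `scala`, `spark-submit`, `hadoop`) are assumptions for illustration, so adjust them to match what is actually in your bin directories.

```python
import os
import shutil


def find_missing_tools(tools, path=None):
    """Return the tools from `tools` that cannot be found on `path`.

    `path` defaults to the current PATH environment variable.
    """
    search_path = os.environ.get("PATH", "") if path is None else path
    return [tool for tool in tools if shutil.which(tool, path=search_path) is None]


# Launcher names are assumptions; on Windows, shutil.which also tries the
# extensions listed in PATHEXT (for example .exe and .cmd).
missing = find_missing_tools(["java", "scala", "spark-submit", "hadoop"])
print("Not found on PATH:", missing if missing else "none")
```

Environment variable edits only apply to processes started afterwards, so reopen the terminal (or restart PyCharm) before running the check.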

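5. Copy winutils.exe into the bin directory of the extracted Hadoop folder. Then open the C:\Windows\System32 directory, find cmd.exe, right-click it, and choose "Run as administrator". In the administrator cmd window, run the following (adjust the path to your own Hadoop directory):

D:\hadoop-2.7.5\hadoop-2.7.5\bin\winutils.exe chmod 777 /tmp/hive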
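Because the winutils.exe path depends on where Hadoop was extracted, it can help to build the command from the Hadoop directory rather than typing it by hand. The sketch below assumes a hypothetical install location `D:\hadoop` and a hypothetical helper name `build_winutils_chmod`; it only assembles and prints the command.

```python
import ntpath


def build_winutils_chmod(hadoop_home, target="/tmp/hive", mode="777"):
    """Build the argument list for `winutils.exe chmod <mode> <target>`."""
    # ntpath joins with Windows separators regardless of the host OS.
    winutils = ntpath.join(hadoop_home, "bin", "winutils.exe")
    return [winutils, "chmod", mode, target]


# Hypothetical install location; substitute your own Hadoop directory.
command = build_winutils_chmod(r"D:\hadoop")
print(" ".join(command))
```

On Windows, from an administrator session, the resulting list could be passed to `subprocess.run(command, check=True)` instead of being pasted into cmd.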

II. Configuring PyCharm to Connect to Spark

pip install pyspark

http://blog.csdn.net/clhugh/article/details/74590929
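Once pyspark is installed in the interpreter that PyCharm uses, a short smoke test can show whether the connection works. The following is a minimal sketch, not the method from the linked article: the helper names and the paths passed to them are hypothetical, while `HADOOP_HOME` and `SPARK_HOME` are the standard variables that Hadoop and Spark read.

```python
import os


def configure_spark_env(hadoop_home, spark_home=None, env=None):
    """Set HADOOP_HOME (and optionally SPARK_HOME) in `env`.

    `env` defaults to os.environ, so the settings apply to this process.
    """
    env = os.environ if env is None else env
    env["HADOOP_HOME"] = hadoop_home
    if spark_home is not None:
        env["SPARK_HOME"] = spark_home
    return env


def run_smoke_test():
    """Start a local Spark session, count a 5-row range, and stop the session."""
    # Imported here so the helper above works even where pyspark is absent.
    from pyspark.sql import SparkSession

    spark = (
        SparkSession.builder
        .master("local[*]")
        .appName("pycharm-smoke-test")
        .getOrCreate()
    )
    try:
        return spark.range(5).count()
    finally:
        spark.stop()
```

In a PyCharm script, calling `configure_spark_env(r"D:\hadoop")` (a hypothetical path) followed by `print(run_smoke_test())` should print 5 if Java, Hadoop's winutils.exe, and pyspark are all set up correctly.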

III. Setting Up a Scala Development Environment with IDEA

I mainly followed these two pages:

https://www.cnblogs.com/wcwen1990/p/7860716.html

https://www.jianshu.com/p/a5258f2821fc

Bug 1:

It took a long time to track down the cause: http://blog.csdn.net/fransis/article/details/51810926

Bug 2:

Solution: http://blog.csdn.net/shenlanzifa/article/details/42679577
