Hadoop 0.20.2 Fully Distributed Installation and Configuration

2018-03-01 21:51

When reposting, please credit the source:

http://blog.csdn.net/gane_cheng/article/details/52922372

http://www.ganecheng.tech/blog/52922372.html (better reading experience)

Virtualization software: VMware Workstation 12 Pro, 12.1.0 build-3272444

Host operating system: Windows 7 Ultimate, 64-bit 6.1.7601, Service Pack 1

Guest Linux operating system: Deepin 15.3, 64-bit

Hadoop version: hadoop-0.20.2.tar.gz

JDK version: jdk-8u101-linux-x64.tar.gz

Preparation

Setting up a distributed Hadoop environment requires some preparation first.

Hadoop cluster planning

| IP address | Hostname | Roles |
|---|---|---|
| 192.168.0.31 | hadoop-1 | namenode, secondary namenode, jobtracker |
| 192.168.0.32 | hadoop-2 | datanode, tasktracker |
| 192.168.0.33 | hadoop-3 | datanode, tasktracker |
| 192.168.0.34 | hadoop-4 | datanode, tasktracker |

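Set a static IP address (on all four machines)

Deepin has a well-designed GUI, similar to Windows, so the static IP address can be set directly in the system settings.

Enable the root account and remote SSH access (on all four machines)

All later steps are performed as root, which avoids many permission problems. The root account and remote SSH access are enabled as follows.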

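① Activate the root account

    sudo passwd root

After setting the password, switch to the root account.

    su root

After entering the password, you are logged in as root.

② Install the SSH service

    apt-get install ssh

Once the installation finishes, start the SSH service with either of the following two commands.

    service sshd start

or

    /etc/init.d/sshd start

On Debian-based systems such as Deepin, the init script is usually named ssh rather than sshd, so use service ssh start or /etc/init.d/ssh start if sshd is not recognized.

③ Enable remote root login over SSH

Connecting as root from Xshell fails with "SSH server rejected the password", because sshd by default does not allow the root user to log in remotely with a password.

Enable remote root login now.

    vi /etc/ssh/sshd_config

Find

    # Authentication:
    LoginGraceTime 120
    PermitRootLogin prohibit-password
    StrictModes yes

and change it to

    # Authentication:
    LoginGraceTime 120
    PermitRootLogin yes
    StrictModes yes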
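The same edit can be scripted instead of made by hand in vi. The following is a minimal sketch, assuming GNU sed and a file in sshd_config format; the function name set_permit_root_login is hypothetical and not part of OpenSSH. Running it on a copy of the file first makes the result easy to review.

```shell
# Hypothetical helper: force "PermitRootLogin yes" in an sshd_config-style file.
# $1 = path to the config file (ideally a copy of /etc/ssh/sshd_config).
set_permit_root_login() {
    cfg="$1"
    # Rewrite an existing PermitRootLogin line, whether commented out or not.
    sed -i -E 's/^#?[[:space:]]*PermitRootLogin[[:space:]].*/PermitRootLogin yes/' "$cfg"
    # Append the directive if the file contained no such line at all.
    grep -q '^PermitRootLogin yes$' "$cfg" || echo 'PermitRootLogin yes' >> "$cfg"
}

# Example (assumed workflow):
#   cp /etc/ssh/sshd_config /tmp/sshd_config.new
#   set_permit_root_login /tmp/sshd_config.new
```

The SSH service still has to be restarted afterwards for the change to take effect.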

Now restart the SSH service

    service sshd restart

or

    /etc/init.d/sshd restart

Of course, rebooting the machine is even better.

Install the JDK (on all four machines)

Switch to the root account.

    deepin@hadoop-1:~$ su root
    密码:

Create a directory. The software is installed under /opt/softwares.

    root@hadoop-1:/home/deepin# cd /opt/
    root@hadoop-1:/opt# mkdir softwares
    root@hadoop-1:/opt# ls
    cxoffice deepinwine google samsung smfp-common softwares

Copy jdk-8u101-linux-x64.tar.gz to /opt/softwares, then extract it.

    root@hadoop-1:/opt/softwares# ls
    jdk-8u101-linux-x64.tar.gz
    root@hadoop-1:/opt/softwares# tar -zxvf jdk-8u101-linux-x64.tar.gz
    root@hadoop-1:/opt/softwares# ls
    jdk-8u101-linux-x64.tar.gz jdk1.8.0_101
    root@hadoop-1:/opt/softwares# rm -rf jdk-8u101-linux-x64.tar.gz
    root@hadoop-1:/opt/softwares# ls
    jdk1.8.0_101

Set the Java environment variables in /etc/profile.

    root@hadoop-1:/opt/softwares# vi /etc/profile

Append the following to the end of the file.

    ## JAVA
    export JAVA_HOME=/opt/softwares/jdk1.8.0_101
    export JRE_HOME=$JAVA_HOME/jre
    export PATH=$PATH:$JAVA_HOME/bin
    export CLASSPATH=./:$JAVA_HOME/lib:$JAVA_HOME/jre/lib
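Because this step is repeated on all four machines, it may help to append the block with a small function that does nothing when the variables are already present. This is only a sketch: append_java_env is a hypothetical name, and the check assumes the profile contains at most one line starting with export JAVA_HOME=.

```shell
# Hypothetical helper: append the Java variables to a profile file exactly once.
# $1 = profile file (for example /etc/profile), $2 = JDK installation directory.
append_java_env() {
    profile="$1"
    java_home="$2"
    # Do nothing if JAVA_HOME is already exported in this file.
    if grep -q '^export JAVA_HOME=' "$profile" 2>/dev/null; then
        return 0
    fi
    # \$ keeps the variable references literal so they expand at login time.
    cat >> "$profile" <<EOF
## JAVA
export JAVA_HOME=$java_home
export JRE_HOME=\$JAVA_HOME/jre
export PATH=\$PATH:\$JAVA_HOME/bin
export CLASSPATH=./:\$JAVA_HOME/lib:\$JAVA_HOME/jre/lib
EOF
}

# Example (assumed usage):
#   append_java_env /etc/profile /opt/softwares/jdk1.8.0_101
```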

After adding the lines, reload the file so the changes take effect.

    root@hadoop-1:/opt/softwares# source /etc/profile

Test the Java environment.

    root@hadoop-1:/opt/softwares# java -version
    Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
    java version "1.8.0_101"
    Java(TM) SE Runtime Environment (build 1.8.0_101-b13)
    Java HotSpot(TM) 64-Bit Server VM (build 25.101-b13, mixed mode)

Setting up the fully distributed environment

This section covers setting up the Hadoop 0.20.2 fully distributed environment.

Edit /etc/hosts (on all four machines)

    127.0.0.1 localhost
    192.168.0.31 hadoop-1
    192.168.0.32 hadoop-2
    192.168.0.33 hadoop-3
    192.168.0.34 hadoop-4
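The four host entries follow a regular pattern, so they can be generated rather than typed on each machine. A minimal sketch, assuming the addressing scheme from the cluster plan (consecutive addresses starting at 192.168.0.31, hostnames hadoop-1 to hadoop-4); print_hosts_entries is a hypothetical name.

```shell
# Hypothetical helper: print the /etc/hosts lines for the cluster nodes.
# The base network, first host number and node count mirror the cluster plan.
print_hosts_entries() {
    base="192.168.0"
    first=31
    count=4
    i=0
    while [ "$i" -lt "$count" ]; do
        echo "$base.$((first + i)) hadoop-$((i + 1))"
        i=$((i + 1))
    done
}

# Example (assumed usage, as root):
#   print_hosts_entries >> /etc/hosts
```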

Configure passwordless login for the root user

A distributed Hadoop cluster requires every machine to be able to connect to every other machine without a password. The steps are described below.

hadoop-1 node

    root@hadoop-1:~# hostname
    hadoop-1
    root@hadoop-1:~# mkdir ~/.ssh
    root@hadoop-1:~# ssh-keygen -t rsa
    Generating public/private rsa key pair.
    Enter file in which to save the key (/root/.ssh/id_rsa):
    Enter passphrase (empty for no passphrase):
    Enter same passphrase again:
    Your identification has been saved in /root/.ssh/id_rsa.
    Your public key has been saved in /root/.ssh/id_rsa.pub.
    The key fingerprint is:
    SHA256:yDfgKdrMikVM702FPU0MA5lcdj2bX/EzzeJ3iDWJMzU root@hadoop-1
    The key's randomart image is:
    +---[RSA 2048]----+
    | ..==oo. |
    | ++ =. o E. |
    | . o + . * ++|
    | o . o = . * =o=|
    | o o * S B =o|
    | . * + . . . + o|
    | o = . ..|
    | o . |
    |. . |
    +----[SHA256]-----+
    root@hadoop-1:~# ssh-keygen -t dsa
    Generating public/private dsa key pair.
    Enter file in which to save the key (/root/.ssh/id_dsa):
    Enter passphrase (empty for no passphrase):
    Enter same passphrase again:
    Your identification has been saved in /root/.ssh/id_dsa.
    Your public key has been saved in /root/.ssh/id_dsa.pub.
    The key fingerprint is:
    SHA256:giTYQlpNTIThLBLN3VtwFhzaPl+WCdweiKzoR/XG0AA root@hadoop-1
    The key's randomart image is:
    +---[DSA 1024]----+
    |.+oX+.E+*o |
    |oB+ + .*o= o |
    |* = . .o* = o |
    |.o o o.+ + o + |
    | o + S + * |
    | . . . + o |
    | . . . |
    | . |
    | |
    +----[SHA256]-----+

hadoop-2 node

    root@hadoop-2:~# hostname
    hadoop-2
    root@hadoop-2:~# mkdir ~/.ssh
    root@hadoop-2:~# ssh-keygen -t rsa
    Generating public/private rsa key pair.
    Enter file in which to save the key (/root/.ssh/id_rsa):
    Enter passphrase (empty for no passphrase):
    Enter same passphrase again:
    Your identification has been saved in /root/.ssh/id_rsa.
    Your public key has been saved in /root/.ssh/id_rsa.pub.
    The key fingerprint is:
    SHA256:Gj9hFxYYaCyevF39fDDSFrGxMmYEoI7wcNw3ORqLRp4 root@hadoop-2
    The key's randomart image is:
    +---[RSA 2048]----+
    | ..oo+o o. |
    | . ...+... ..+ |
    |o +o++= .B.o. |
    | B =+= o.+o+= |
    | E +o..S .= o |
    | . . .= o o . |
    | . o . |
    | . |
    | |
    +----[SHA256]-----+
    root@hadoop-2:~# ssh-keygen -t dsa
    Generating public/private dsa key pair.
    Enter file in which to save the key (/root/.ssh/id_dsa):
    Enter passphrase (empty for no passphrase):
    Enter same passphrase again:
    Your identification has been saved in /root/.ssh/id_dsa.
    Your public key has been saved in /root/.ssh/id_dsa.pub.
    The key fingerprint is:
    SHA256:pAJ+nvtredExhNKkwdVNhZMmA12eZwRJZfipiYO6POs root@hadoop-2
    The key's randomart image is:
    +---[DSA 1024]----+
    | ..++= ==O= |
    | ooo *.O+ |
    | . .... +ooo. |
    | . . o o oo |
    | . o . So + o |
    | o o o + o |
    | o o . . |
    | o= . |
    | .+E* |
    +----[SHA256]-----+

hadoop-3 node

    root@hadoop-3:~# hostname
    hadoop-3
    root@hadoop-3:~# mkdir ~/.ssh
    root@hadoop-3:~# ssh-keygen -t rsa
    Generating public/private rsa key pair.
    Enter file in which to save the key (/root/.ssh/id_rsa):
    Enter passphrase (empty for no passphrase):
    Enter same passphrase again:
    Your identification has been saved in /root/.ssh/id_rsa.
    Your public key has been saved in /root/.ssh/id_rsa.pub.
    The key fingerprint is:
    SHA256:FLxH+SoS4JjnnYEwLLxoeyIVvN8a9z3Xkpf6U4dYJCU root@hadoop-3
    The key's randomart image is:
    +---[RSA 2048]----+
    |.o .. .E.. |
    |..* . ..o ... |
    |...O o .o . o |
    |.o= + o.. . . . |
    |...+ o +S. . o . |
    |.o .+ * . . . . o|
    |. o + o o o o.|
    | . . o + = |
    | o.=.. |
    +----[SHA256]-----+
    root@hadoop-3:~# ssh-keygen -t dsa
    Generating public/private dsa key pair.
    Enter file in which to save the key (/root/.ssh/id_dsa): \^H^H^[[3~^[[2~^C
    root@hadoop-3:~# ssh-keygen -t dsa
    Generating public/private dsa key pair.
    Enter file in which to save the key (/root/.ssh/id_dsa):
    Enter passphrase (empty for no passphrase):
    Enter same passphrase again:
    Your identification has been saved in /root/.ssh/id_dsa.
    Your public key has been saved in /root/.ssh/id_dsa.pub.
    The key fingerprint is:
    SHA256:p6nuIzyRBU/M1XaJmKcUywfIR75z7glu/QyiQbwXCHU root@hadoop-3
    The key's randomart image is:
    +---[DSA 1024]----+
    | +.+E= . . |
    | ..*+=o= o |
    | .+ o++.. |
    | oo..o |
    | o+ S o |
    | o. . O |
    | . .o *.o |
    | + .*.+.+ |
    | ==o. o.o |
    +----[SHA256]-----+

hadoop-4 node

    root@hadoop-4:~# hostname
    hadoop-4
    root@hadoop-4:~# mkdir ~/.ssh
    root@hadoop-4:~# ssh-keygen -t rsa
    Generating public/private rsa key pair.
    Enter file in which to save the key (/root/.ssh/id_rsa):
    Enter passphrase (empty for no passphrase):
    Enter same passphrase again:
    Your identification has been saved in /root/.ssh/id_rsa.
    Your public key has been saved in /root/.ssh/id_rsa.pub.
    The key fingerprint is:
    SHA256:iJIAXhELcaGr4gnZa8vTWxPSKnSRp104mLCk4c9DbYQ root@hadoop-4
    The key's randomart image is:
    +---[RSA 2048]----+
    |oo+*=. |
    |++=E++ . |
    |o+.o=o+ . |
    | .=..B + |
    | .++= = S |
    |.+ o.o . |
    |= o.. o |
    |+.+o.. . |
    | ++o.. |
    +----[SHA256]-----+
    root@hadoop-4:~# ssh-keygen -t dsa
    Generating public/private dsa key pair.
    Enter file in which to save the key (/root/.ssh/id_dsa):
    Enter passphrase (empty for no passphrase):
    Enter same passphrase again:
    Your identification has been saved in /root/.ssh/id_dsa.
    Your public key has been saved in /root/.ssh/id_dsa.pub.
    The key fingerprint is:
    SHA256:N6P/fb+DVcZ+lL3WcAcVRaKYOHnN6+aYeiUb4qQJeu4 root@hadoop-4
    The key's randomart image is:
    +---[DSA 1024]----+
    | .+*|
    | o = ... |
    | + + + oo|
    | o ...O|
    | S + . *=|
    | . ooo+. ++|
    | . . =.. =o + .|
    | . . o ..o= o ..|
    | +E .o+.o .o=|
    +----[SHA256]-----+

Now set up authentication for all nodes remotely from hadoop-1. hadoop-1 collects the public keys of every node into its own authorized_keys file, then copies the merged file to the other three nodes.

hadoop-1 node

    root@hadoop-1:~# cat ~/.ssh/id_rsa.pub >>~/.ssh/authorized_keys
    root@hadoop-1:~# cat ~/.ssh/id_dsa.pub >>~/.ssh/authorized_keys
    root@hadoop-1:~# ssh hadoop-2 cat ~/.ssh/id_rsa.pub >>~/.ssh/authorized_keys
    The authenticity of host 'hadoop-2 (192.168.0.32)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-2,192.168.0.32' (ECDSA) to the list of known hosts.
    root@hadoop-2's password:
    root@hadoop-1:~# ssh hadoop-2 cat ~/.ssh/id_dsa.pub >>~/.ssh/authorized_keys
    root@hadoop-2's password:
    root@hadoop-1:~# ssh hadoop-3 cat ~/.ssh/id_rsa.pub >>~/.ssh/authorized_keys
    The authenticity of host 'hadoop-3 (192.168.0.33)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-3,192.168.0.33' (ECDSA) to the list of known hosts.
    root@hadoop-3's password:
    root@hadoop-1:~# ssh hadoop-3 cat ~/.ssh/id_dsa.pub >>~/.ssh/authorized_keys
    root@hadoop-3's password:
    root@hadoop-1:~# ssh hadoop-4 cat ~/.ssh/id_rsa.pub >>~/.ssh/authorized_keys
    The authenticity of host 'hadoop-4 (192.168.0.34)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-4,192.168.0.34' (ECDSA) to the list of known hosts.
    root@hadoop-4's password:
    root@hadoop-1:~# ssh hadoop-4 cat ~/.ssh/id_dsa.pub >>~/.ssh/authorized_keys
    root@hadoop-4's password:
    root@hadoop-1:~# scp /root/.ssh/authorized_keys hadoop-2:~/.ssh/authorized_keys
    root@hadoop-2's password:
    authorized_keys 100% 3992 3.9KB/s 00:00
    root@hadoop-1:~# scp /root/.ssh/authorized_keys hadoop-3:~/.ssh/authorized_keys
    root@hadoop-3's password:
    authorized_keys 100% 3992 3.9KB/s 00:00
    root@hadoop-1:~# scp /root/.ssh/authorized_keys hadoop-4:~/.ssh/authorized_keys
    root@hadoop-4's password:
    authorized_keys 100% 3992 3.9KB/s 00:00
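Appending with >> adds duplicate entries if any of these commands is run a second time. The merge step can be made repeatable with a small function that deduplicates the result. This is a sketch only: merge_public_keys is a hypothetical name, and it works on local files, so the remote public keys would first have to be fetched (for example with scp).

```shell
# Hypothetical helper: merge public key files into an authorized_keys file
# without creating duplicate lines.
# $1 = target authorized_keys file, remaining arguments = public key files.
merge_public_keys() {
    out="$1"
    shift
    touch "$out"
    # Combine existing and new keys, keep each distinct line once.
    cat "$out" "$@" | sort -u > "$out.tmp" && mv "$out.tmp" "$out"
    # sshd ignores key files that are writable by other users.
    chmod 600 "$out"
}

# Example (assumed usage):
#   merge_public_keys ~/.ssh/authorized_keys ~/.ssh/id_rsa.pub ~/.ssh/id_dsa.pub
```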

Test the remote connections

hadoop-1 node

    root@hadoop-1:~# ssh hadoop-1 date
    The authenticity of host 'hadoop-1 (192.168.0.31)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-1,192.168.0.31' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:19:52 CST
    root@hadoop-1:~# ssh hadoop-1 date
    2016年 10月 24日 星期一 22:20:18 CST
    root@hadoop-1:~# ssh hadoop-2 date
    2016年 10月 24日 星期一 22:20:23 CST
    root@hadoop-1:~# ssh hadoop-3 date
    2016年 10月 24日 星期一 22:20:29 CST
    root@hadoop-1:~# ssh hadoop-4 date
    2016年 10月 24日 星期一 22:20:35 CST

hadoop-2 node

    root@hadoop-2:~# ssh hadoop-1 date
    The authenticity of host 'hadoop-1 (192.168.0.31)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-1,192.168.0.31' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:20:51 CST
    root@hadoop-2:~# ssh hadoop-2 date
    The authenticity of host 'hadoop-2 (192.168.0.32)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-2,192.168.0.32' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:22:50 CST
    root@hadoop-2:~# ssh hadoop-3 date
    The authenticity of host 'hadoop-3 (192.168.0.33)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-3,192.168.0.33' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:22:57 CST
    root@hadoop-2:~# ssh hadoop-4 date
    The authenticity of host 'hadoop-4 (192.168.0.34)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-4,192.168.0.34' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:23:06 CST
    root@hadoop-2:~# ssh hadoop-1 date
    2016年 10月 24日 星期一 22:23:11 CST
    root@hadoop-2:~# ssh hadoop-2 date
    2016年 10月 24日 星期一 22:23:14 CST
    root@hadoop-2:~# ssh hadoop-3 date
    2016年 10月 24日 星期一 22:23:16 CST
    root@hadoop-2:~# ssh hadoop-4 date
    2016年 10月 24日 星期一 22:23:18 CST

hadoop-3 node

    root@hadoop-3:~# ssh hadoop-1 date
    The authenticity of host 'hadoop-1 (192.168.0.31)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-1,192.168.0.31' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:23:31 CST
    root@hadoop-3:~# ssh hadoop-2 date
    The authenticity of host 'hadoop-2 (192.168.0.32)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-2,192.168.0.32' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:23:52 CST
    root@hadoop-3:~# ssh hadoop-3 date
    The authenticity of host 'hadoop-3 (192.168.0.33)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-3,192.168.0.33' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:23:57 CST
    root@hadoop-3:~# ssh hadoop-4 date
    The authenticity of host 'hadoop-4 (192.168.0.34)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-4,192.168.0.34' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:24:02 CST
    root@hadoop-3:~# ssh hadoop-1 date
    2016年 10月 24日 星期一 22:24:04 CST
    root@hadoop-3:~# ssh hadoop-2 date
    2016年 10月 24日 星期一 22:24:06 CST
    root@hadoop-3:~# ssh hadoop-3 date
    2016年 10月 24日 星期一 22:24:07 CST
    root@hadoop-3:~# ssh hadoop-4 date
    2016年 10月 24日 星期一 22:24:11 CST

hadoop-4 node

    root@hadoop-4:~# ssh hadoop-1 date
    The authenticity of host 'hadoop-1 (192.168.0.31)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-1,192.168.0.31' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:24:25 CST
    root@hadoop-4:~# ssh hadoop-2 date
    The authenticity of host 'hadoop-2 (192.168.0.32)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-2,192.168.0.32' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:24:31 CST
    root@hadoop-4:~# ssh hadoop-3 date
    The authenticity of host 'hadoop-3 (192.168.0.33)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-3,192.168.0.33' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:24:38 CST
    root@hadoop-4:~# ssh hadoop-4 date
    The authenticity of host 'hadoop-4 (192.168.0.34)' can't be established.
    ECDSA key fingerprint is SHA256:qnu1tEyeXgqVRYPkdGjVjQ5E/PBA8kbIQ1xRNH61OQ0.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'hadoop-4,192.168.0.34' (ECDSA) to the list of known hosts.
    2016年 10月 24日 星期一 22:24:48 CST
    root@hadoop-4:~# ssh hadoop-1 date
    2016年 10月 24日 星期一 22:24:51 CST
    root@hadoop-4:~# ssh hadoop-2 date
    2016年 10月 24日 星期一 22:24:53 CST
    root@hadoop-4:~# ssh hadoop-3 date
    2016年 10月 24日 星期一 22:24:55 CST
    root@hadoop-4:~# ssh hadoop-4 date
    2016年 10月 24日 星期一 22:24:58 CST

Install Hadoop on hadoop-1

Copy hadoop-0.20.2.tar.gz to /opt/softwares, then extract it.

    root@hadoop-1:/opt/softwares# ls
    hadoop-0.20.2.tar.gz jdk1.8.0_101
    root@hadoop-1:/opt/softwares# tar -zxvf hadoop-0.20.2.tar.gz
    root@hadoop-1:/opt/softwares# ls
    hadoop-0.20.2 hadoop-0.20.2.tar.gz jdk1.8.0_101
    root@hadoop-1:/opt/softwares# rm -rf hadoop-0.20.2.tar.gz
    root@hadoop-1:/opt/softwares# ls
    hadoop-0.20.2 jdk1.8.0_101

Configure Hadoop on hadoop-1

    root@hadoop-1:/opt/softwares# cd hadoop-0.20.2/conf/
    root@hadoop-1:/opt/softwares/hadoop-0.20.2/conf# ls -l
    总用量 56
    -rw-r--r-- 1 root root 3936 10月 24 19:29 capacity-scheduler.xml
    -rw-r--r-- 1 root root 535 10月 24 19:29 configuration.xsl
    -rw-r--r-- 1 root root 267 10月 24 22:37 core-site.xml
    -rw-r--r-- 1 root root 2282 10月 24 22:30 hadoop-env.sh
    -rw-r--r-- 1 root root 1245 10月 24 19:29 hadoop-metrics.properties
    -rw-r--r-- 1 root root 4190 10月 24 19:29 hadoop-policy.xml
    -rw-r--r-- 1 root root 581 10月 24 22:46 hdfs-site.xml
    -rw-r--r-- 1 root root 2815 10月 24 19:29 log4j.properties
    -rw-r--r-- 1 root root 273 10月 24 22:48 mapred-site.xml
    -rw-r--r-- 1 root root 8 10月 24 22:48 masters
    -rw-r--r-- 1 root root 26 10月 24 22:49 slaves
    -rw-r--r-- 1 root root 1243 10月 24 19:29 ssl-client.xml.example
    -rw-r--r-- 1 root root 1195 10月 24 19:29 ssl-server.xml.example

Configure hadoop-env.sh

    # The java implementation to use. Required.
    # export JAVA_HOME=/usr/lib/j2sdk1.5-sun
    export JAVA_HOME=/opt/softwares/jdk1.8.0_101

Configure core-site.xml

    <configuration>
      <property>
        <name>fs.default.name</name>
        <value>hdfs://hadoop-1:9000</value>
      </property>
    </configuration>

Configure hdfs-site.xml

    <configuration>
      <property>
        <name>dfs.data.dir</name>
        <value>/opt/softwares/hadoop-data</value>
      </property>
      <property>
        <name>dfs.name.dir</name>
        <value>/opt/softwares/hadoop-name</value>
      </property>
      <property>
        <name>fs.checkpoint.dir</name>
        <value>/opt/softwares/hadoop-namesecondary</value>
      </property>
      <property>
        <name>dfs.replication</name>
        <value>2</value>
      </property>
    </configuration>

Configure mapred-site.xml

    <configuration>
      <property>
        <name>mapred.job.tracker</name>
        <value>hadoop-1:9001</value>
      </property>
    </configuration>
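Before distributing the configuration, it can be useful to read individual values back from the XML files as a sanity check. The sketch below is an assumption-laden helper, not a Hadoop tool: get_prop is a hypothetical name, and the awk logic assumes each <name> and <value> element sits on its own line, as in the files above.

```shell
# Hypothetical helper: print the value of one property from a Hadoop
# *-site.xml file. Assumes one <name> or <value> element per line.
# $1 = config file, $2 = property name.
get_prop() {
    awk -v want="$2" '
        /<name>/  { n = $0; sub(/.*<name>/, "", n); sub(/<\/name>.*/, "", n) }
        /<value>/ { v = $0; sub(/.*<value>/, "", v); sub(/<\/value>.*/, "", v)
                    if (n == want) { print v; exit } }
    ' "$1"
}

# Example (assumed usage, from the conf directory):
#   get_prop core-site.xml fs.default.name
#   get_prop mapred-site.xml mapred.job.tracker
```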

Configure masters

    hadoop-1

Configure slaves

    hadoop-2
    hadoop-3
    hadoop-4

Distribute the configured Hadoop software from hadoop-1 to the hadoop-2, hadoop-3, and hadoop-4 nodes

    root@hadoop-1:/opt/softwares# scp -r hadoop-0.20.2/ hadoop-2:/opt/softwares/
    root@hadoop-1:/opt/softwares# scp -r hadoop-0.20.2/ hadoop-3:/opt/softwares/
    root@hadoop-1:/opt/softwares# scp -r hadoop-0.20.2/ hadoop-4:/opt/softwares/
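Since the target hosts are exactly the entries of the slaves file, the copy commands can be derived from that file instead of being typed per node. The following sketch only prints the commands so they can be reviewed before running; print_scp_commands is a hypothetical name.

```shell
# Hypothetical helper: print one scp command per host listed in a slaves file.
# $1 = slaves file, $2 = source directory, $3 = destination directory.
print_scp_commands() {
    slaves_file="$1"
    src="$2"
    dest="$3"
    while IFS= read -r host; do
        # Skip blank lines and comment lines.
        case "$host" in
            ''|'#'*) continue ;;
        esac
        echo "scp -r $src $host:$dest"
    done < "$slaves_file"
}

# Example (assumed usage, from /opt/softwares):
#   print_scp_commands hadoop-0.20.2/conf/slaves hadoop-0.20.2/ /opt/softwares/
# Pipe the output to sh once the printed commands look right.
```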

Start Hadoop

With the Hadoop environment configured, start it up and check the result.

Format HDFS (on the hadoop-1 node)

    root@hadoop-1:/opt/softwares/hadoop-0.20.2/bin# ./hadoop namenode -format
    Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
    Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
    16/10/24 22:55:52 INFO namenode.NameNode: STARTUP_MSG:
    /************************************************************
    STARTUP_MSG: Starting NameNode
    STARTUP_MSG: host = hadoop-1/192.168.0.31
    STARTUP_MSG: args = [-format]
    STARTUP_MSG: version = 0.20.2
    STARTUP_MSG: build = https://svn.apache.org/repos/asf/hadoop/common/branches/branch-0.20 -r 911707; compiled by 'chrisdo' on Fri Feb 19 08:07:34 UTC 2010
    ************************************************************/
    16/10/24 22:55:53 INFO namenode.FSNamesystem: fsOwner=root,root
    16/10/24 22:55:53 INFO namenode.FSNamesystem: supergroup=supergroup
    16/10/24 22:55:53 INFO namenode.FSNamesystem: isPermissionEnabled=true
    16/10/24 22:55:53 INFO common.Storage: Image file of size 94 saved in 0 seconds.
    16/10/24 22:55:53 INFO common.Storage: Storage directory /opt/softwares/hadoop-name has been successfully formatted.
    16/10/24 22:55:53 INFO namenode.NameNode: SHUTDOWN_MSG:
    /************************************************************
    SHUTDOWN_MSG: Shutting down NameNode at hadoop-1/192.168.0.31
    ************************************************************/
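Formatting initializes a fresh, empty namespace, so re-running it against a name directory that already holds metadata discards the existing HDFS file listing. A cautious script might therefore check the directory first. This is a minimal sketch; name_dir_is_empty is a hypothetical name, and the path in the usage example is the dfs.name.dir value configured earlier.

```shell
# Hypothetical guard: succeed only if the NameNode directory is missing or empty.
# $1 = the directory configured as dfs.name.dir.
name_dir_is_empty() {
    dir="$1"
    # A directory that does not exist yet is safe to format into.
    if [ ! -d "$dir" ]; then
        return 0
    fi
    # ls -A lists everything except . and .. ; empty output means empty dir.
    [ -z "$(ls -A "$dir")" ]
}

# Example (assumed usage):
#   if name_dir_is_empty /opt/softwares/hadoop-name; then
#       ./hadoop namenode -format
#   else
#       echo "name directory is not empty, refusing to format" >&2
#   fi
```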

Start all Hadoop nodes

    root@hadoop-1:/opt/softwares/hadoop-0.20.2/bin# ./start-all.sh
    starting namenode, logging to /opt/softwares/hadoop-0.20.2/bin/../logs/hadoop-root-namenode-hadoop-1.out
    Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
    Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
    hadoop-2: starting datanode, logging to /opt/softwares/hadoop-0.20.2/bin/../logs/hadoop-root-datanode-hadoop-2.out
    hadoop-3: starting datanode, logging to /opt/softwares/hadoop-0.20.2/bin/../logs/hadoop-root-datanode-hadoop-3.out
    hadoop-4: starting datanode, logging to /opt/softwares/hadoop-0.20.2/bin/../logs/hadoop-root-datanode-hadoop-4.out
    hadoop-1: starting secondarynamenode, logging to /opt/softwares/hadoop-0.20.2/bin/../logs/hadoop-root-secondarynamenode-hadoop-1.out
    starting jobtracker, logging to /opt/softwares/hadoop-0.20.2/bin/../logs/hadoop-root-jobtracker-hadoop-1.out
    Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
    Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
    hadoop-4: starting tasktracker, logging to /opt/softwares/hadoop-0.20.2/bin/../logs/hadoop-root-tasktracker-hadoop-4.out
    hadoop-3: starting tasktracker, logging to /opt/softwares/hadoop-0.20.2/bin/../logs/hadoop-root-tasktracker-hadoop-3.out
    hadoop-2: starting tasktracker, logging to /opt/softwares/hadoop-0.20.2/bin/../logs/hadoop-root-tasktracker-hadoop-2.out

Check the Hadoop processes

hadoop-1

    root@hadoop-1:/opt/softwares/hadoop-0.20.2/bin# jps
    Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
    2033 NameNode
    2163 SecondaryNameNode
    2243 JobTracker
    2361 Jps

hadoop-2

    root@hadoop-2:/home/deepin# jps
    Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
    1705 Jps
    1519 DataNode
    1599 TaskTracker

hadoop-3

    root@hadoop-3:/home/deepin# jps
    Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
    1719 TaskTracker
    1639 DataNode
    1786 Jps

hadoop-4

    root@hadoop-4:/home/deepin# jps
    Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
    1586 DataNode
    1666 TaskTracker
    1735 Jps
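Comparing jps output against the planned roles by eye is easy to get wrong across four machines. The sketch below reports which expected daemons are absent from a captured jps listing; missing_daemons is a hypothetical name, and the listing is passed in as text so the function itself does not need a JDK.

```shell
# Hypothetical helper: print every expected daemon name that does not
# appear in the given jps output.
# $1 = jps output (text), remaining arguments = expected daemon names.
missing_daemons() {
    jps_out="$1"
    shift
    for d in "$@"; do
        # Column 2 of each jps line is the class name; -x matches whole lines,
        # so NameNode does not match SecondaryNameNode.
        echo "$jps_out" | awk '{ print $2 }' | grep -qx "$d" || echo "$d"
    done
}

# Example (assumed usage):
#   missing_daemons "$(jps)" NameNode SecondaryNameNode JobTracker   # on hadoop-1
#   missing_daemons "$(jps)" DataNode TaskTracker                    # on hadoop-2..4
```

Empty output means every expected daemon is running.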

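Access the HTTP services

http://hadoop-1:50030/ (jobtracker HTTP server address and port)

http://hadoop-1:50060/ (tasktracker HTTP server address and port)

http://hadoop-1:50070/ (namenode HTTP server address and port)

http://hadoop-1:50075/ (datanode HTTP server address and port)

http://hadoop-1:50090/ (secondary namenode HTTP server address and port)

In this cluster the tasktracker and datanode daemons run on hadoop-2 through hadoop-4, so for ports 50060 and 50075 substitute one of those hostnames.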
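The port list can be kept in one place as a small lookup so the right URL is built for whichever host runs a given daemon. A minimal sketch using only the ports listed here; web_ui_url and its role names are hypothetical.

```shell
# Hypothetical helper: build the web UI URL for a daemon role on a host,
# using the HTTP ports listed in this article.
# $1 = role, $2 = hostname.
web_ui_url() {
    role="$1"
    host="$2"
    case "$role" in
        jobtracker)        port=50030 ;;
        tasktracker)       port=50060 ;;
        namenode)          port=50070 ;;
        datanode)          port=50075 ;;
        secondarynamenode) port=50090 ;;
        *) echo "unknown role: $role" >&2; return 1 ;;
    esac
    echo "http://$host:$port/"
}

# Example (assumed usage):
#   web_ui_url namenode hadoop-1
#   web_ui_url tasktracker hadoop-2
```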

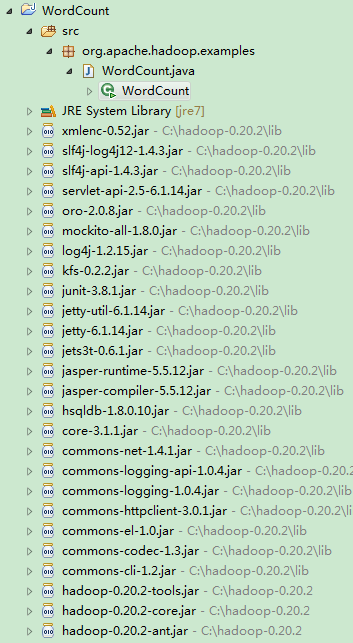

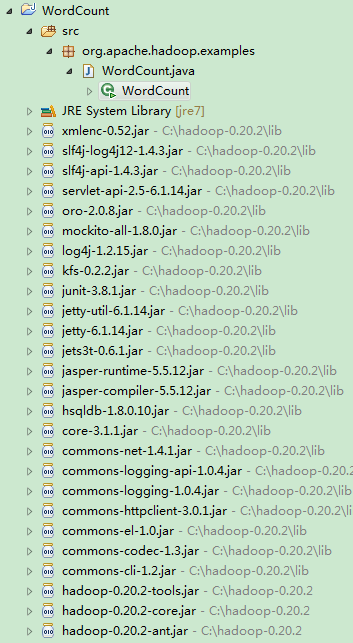

Test the Hadoop cluster with WordCount

With the Hadoop cluster up and running, test it with a small word-counting program.

Create a new Map/Reduce project in Eclipse.

Write the following code.

    package org.apache.hadoop.examples;

    import java.io.IOException;
    import java.util.StringTokenizer;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.util.GenericOptionsParser;

    public class WordCount
    {
        // Mapper: emits (word, 1) for every whitespace-separated token, lowercased.
        public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable>
        {
            private final static IntWritable one = new IntWritable(1);
            private Text word = new Text();

            public void map(Object key, Text value, Context context) throws IOException, InterruptedException
            {
                String line = value.toString();
                StringTokenizer itr = new StringTokenizer(line);
                while (itr.hasMoreTokens())
                {
                    word.set(itr.nextToken().toLowerCase());
                    context.write(word, one);
                }
            }
        }

        // Reducer (also used as the combiner): sums the counts for each word.
        public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable>
        {
            private IntWritable result = new IntWritable();

            public void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException
            {
                int sum = 0;
                for (IntWritable val : values)
                {
                    sum += val.get();
                }
                // Reuse the result object instead of allocating a new one per key.
                result.set(sum);
                context.write(key, result);
            }
        }

        public static void main(String[] args) throws Exception
        {
            Configuration conf = new Configuration();
            String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();
            if (otherArgs.length != 2)
            {
                System.err.println("Usage: wordcount <in> <out>");
                System.exit(2);
            }
            Job job = new Job(conf, "word count");
            job.setJarByClass(WordCount.class);
            job.setMapperClass(TokenizerMapper.class);
            job.setCombinerClass(IntSumReducer.class);
            job.setReducerClass(IntSumReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
            FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }
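To cross-check the job's result on a small input, the same count can be approximated locally with standard text tools: lowercase, split on whitespace, then count identical words. This is a sketch of the idea rather than an exact replica of the Java tokenizer; local_wordcount is a hypothetical name, and it prints word, tab, count in byte order.

```shell
# Hypothetical helper: count lowercased, whitespace-separated words on stdin.
# Output format: word<TAB>count, one word per line, sorted in C locale order.
local_wordcount() {
    tr '[:upper:]' '[:lower:]' |        # same effect as toLowerCase() for ASCII
        tr -s '[:space:]' '\n' |        # one token per line
        sed '/^$/d' |                   # drop the empty line from leading blanks
        LC_ALL=C sort |
        uniq -c |
        awk '{ print $2 "\t" $1 }'
}

# Example (assumed usage):
#   cat test1.txt | local_wordcount
```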

Export the project as a JAR file, then upload it to the /opt/softwares directory on the hadoop-1 machine.

Create the HDFS input directory /input.

    root@hadoop-1:/opt/softwares/hadoop-0.20.2/bin# ./hadoop fs -mkdir /input

Upload the word text files to the /input directory. After the upload, the directory listing looks like this.

root@hadoop-1:/opt/softwares/hadoop-0.20.2/bin# ./hadoop fs -ls /input
Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
Found 20 items
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test1.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test10.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test11.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test12.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test13.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test14.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test15.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test16.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test17.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test18.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test19.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test2.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test20.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test3.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test4.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test5.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test6.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test7.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test8.txt
-rw-r--r--   2 root supergroup         57 2016-10-24 23:19 /input/test9.txt
root@hadoop-1:/opt/softwares/hadoop-0.20.2/bin#

[/code]

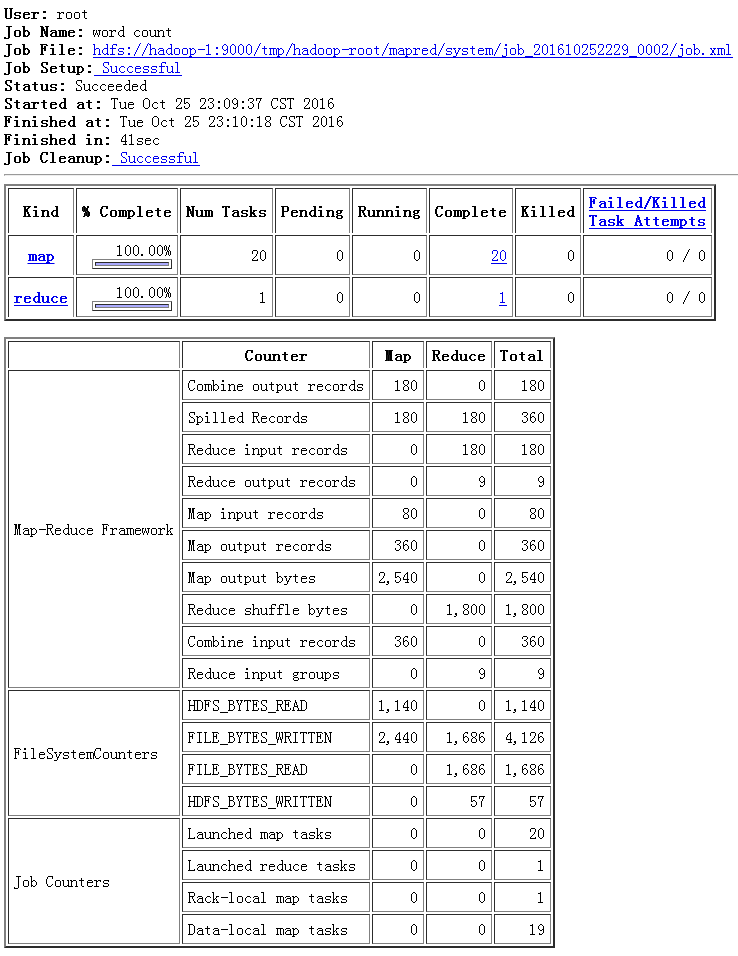

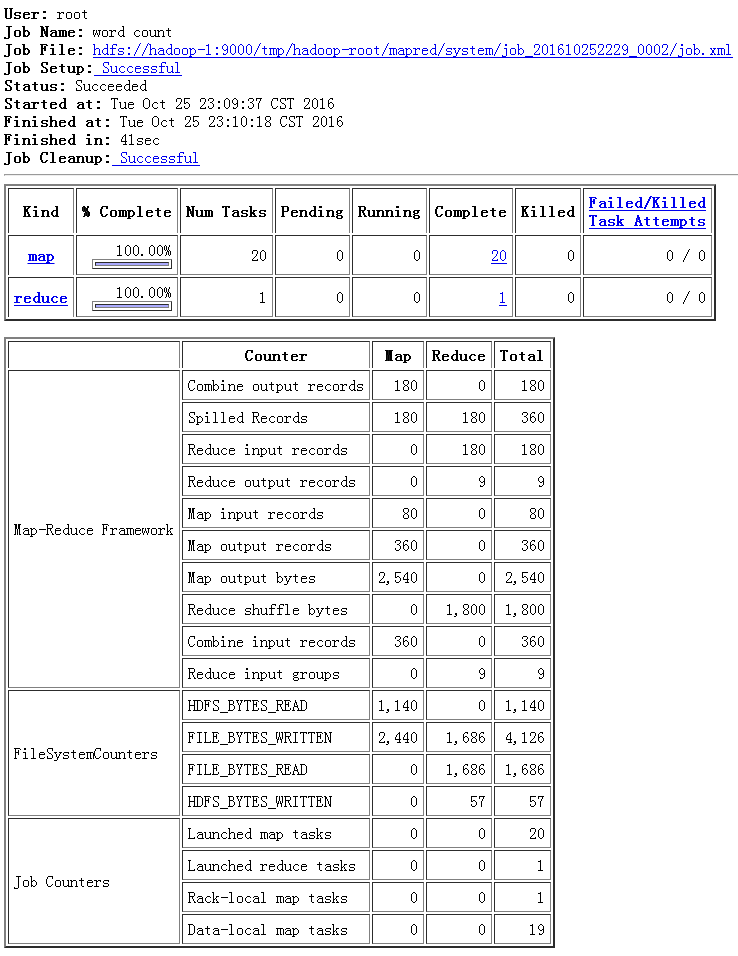

Now run our WordCount program on Hadoop.

root@hadoop-1:/opt/softwares/hadoop-0.20.2/bin# ./hadoop jar /opt/softwares/wordcount.jar org.apache.hadoop.examples.WordCount /input /output
Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
16/10/25 23:09:37 INFO input.FileInputFormat: Total input paths to process : 20
16/10/25 23:09:37 INFO mapred.JobClient: Running job: job_201610252229_0002
16/10/25 23:09:38 INFO mapred.JobClient:  map 0% reduce 0%
16/10/25 23:09:47 INFO mapred.JobClient:  map 10% reduce 0%
16/10/25 23:09:53 INFO mapred.JobClient:  map 40% reduce 0%
16/10/25 23:09:56 INFO mapred.JobClient:  map 45% reduce 0%
16/10/25 23:09:59 INFO mapred.JobClient:  map 75% reduce 13%
16/10/25 23:10:02 INFO mapred.JobClient:  map 80% reduce 13%
16/10/25 23:10:05 INFO mapred.JobClient:  map 100% reduce 13%
16/10/25 23:10:08 INFO mapred.JobClient:  map 100% reduce 25%
16/10/25 23:10:17 INFO mapred.JobClient:  map 100% reduce 100%
16/10/25 23:10:19 INFO mapred.JobClient: Job complete: job_201610252229_0002
16/10/25 23:10:19 INFO mapred.JobClient: Counters: 18
16/10/25 23:10:19 INFO mapred.JobClient:   Map-Reduce Framework
16/10/25 23:10:19 INFO mapred.JobClient:     Combine output records=180
16/10/25 23:10:19 INFO mapred.JobClient:     Spilled Records=360
16/10/25 23:10:19 INFO mapred.JobClient:     Reduce input records=180
16/10/25 23:10:19 INFO mapred.JobClient:     Reduce output records=9
16/10/25 23:10:19 INFO mapred.JobClient:     Map input records=80
16/10/25 23:10:19 INFO mapred.JobClient:     Map output records=360
16/10/25 23:10:19 INFO mapred.JobClient:     Map output bytes=2540
16/10/25 23:10:19 INFO mapred.JobClient:     Reduce shuffle bytes=1800
16/10/25 23:10:19 INFO mapred.JobClient:     Combine input records=360
16/10/25 23:10:19 INFO mapred.JobClient:     Reduce input groups=9
16/10/25 23:10:19 INFO mapred.JobClient:   FileSystemCounters
16/10/25 23:10:19 INFO mapred.JobClient:     HDFS_BYTES_READ=1140
16/10/25 23:10:19 INFO mapred.JobClient:     FILE_BYTES_WRITTEN=4126
16/10/25 23:10:19 INFO mapred.JobClient:     FILE_BYTES_READ=1686
16/10/25 23:10:19 INFO mapred.JobClient:     HDFS_BYTES_WRITTEN=57
16/10/25 23:10:19 INFO mapred.JobClient:   Job Counters
16/10/25 23:10:19 INFO mapred.JobClient:     Launched map tasks=20
16/10/25 23:10:19 INFO mapred.JobClient:     Launched reduce tasks=1
16/10/25 23:10:19 INFO mapred.JobClient:     Rack-local map tasks=1
16/10/25 23:10:19 INFO mapred.JobClient:     Data-local map tasks=19

[/code]
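
The counters in this log can be cross-checked by hand. In a small job like this one, the combiner collapses each map task's output to one record per distinct word, so 360 map output records shrink to 20 × 9 = 180 combine output records before the single reducer emits 9 records. The sketch below replays that arithmetic in plain Java. The class `CombinerSketch` and the per-file text are hypothetical: the article does not show the content of the input files, so the text is chosen only to have 4 lines, 18 tokens, and 9 distinct words, which is consistent with the counters.

```java
import java.util.HashSet;
import java.util.Set;
import java.util.StringTokenizer;

// Hypothetical replay of the counter arithmetic; no Hadoop classes involved.
class CombinerSketch {

    // Assumed content of one input file: 4 lines, 18 tokens, 9 distinct words.
    static final String FILE_TEXT =
            "I am a boy\nI am a girl\nWho is she\nI am a boy or a girl\n";

    // Number of (word, 1) pairs one mapper would emit for the text.
    static int mapOutputRecords(String text) {
        return new StringTokenizer(text).countTokens();
    }

    // Number of records left after a combiner merges pairs that share a word.
    static int combineOutputRecords(String text) {
        Set<String> distinct = new HashSet<>();
        StringTokenizer itr = new StringTokenizer(text);
        while (itr.hasMoreTokens()) {
            distinct.add(itr.nextToken().toLowerCase());
        }
        return distinct.size();
    }

    public static void main(String[] args) {
        int mapTasks = 20; // "Launched map tasks=20" in the log
        System.out.println("Map output records     = " + mapTasks * mapOutputRecords(FILE_TEXT));
        System.out.println("Combine output records = " + mapTasks * combineOutputRecords(FILE_TEXT));
    }
}
```

Halving the records that cross the network is the reason `job.setCombinerClass(IntSumReducer.class)` is worth setting even for a toy job.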

Open http://hadoop-1:50030/ in a browser to follow the job as it runs.

Now take a look at the results.

root@hadoop-1:/opt/softwares/hadoop-0.20.2/bin# ./hadoop fs -ls /output
Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
Found 2 items
drwxr-xr-x   - root supergroup          0 2016-10-25 23:09 /output/_logs
-rw-r--r--   2 root supergroup         57 2016-10-25 23:10 /output/part-r-00000
root@hadoop-1:/opt/softwares/hadoop-0.20.2/bin# ./hadoop fs -cat /output/part-r-00000
Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
Picked up _JAVA_OPTIONS: -Dawt.useSystemAAFontSettings=gasp
a	80
am	60
boy	40
girl	40
i	60
is	20
or	20
she	20
who	20

[/code]
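
A quick sanity check on this result: the counts in part-r-00000 should add up to the `Map output records=360` counter from the job log, because every token the mappers emitted ends up in exactly one total. A minimal sketch of that check follows. The class `ResultCheck` and its method are hypothetical, and the output is pasted in as a string rather than read from HDFS. Tab-separated key and value is the usual text output layout, but the parser accepts any whitespace.

```java
// Hypothetical consistency check on the WordCount output; uses only the JDK.
class ResultCheck {

    // Contents of /output/part-r-00000 from the listing: one "word count" pair per line.
    static final String PART_R_00000 =
            "a\t80\nam\t60\nboy\t40\ngirl\t40\ni\t60\nis\t20\nor\t20\nshe\t20\nwho\t20\n";

    // Sums the count column of WordCount output lines.
    static int totalCount(String output) {
        int total = 0;
        for (String line : output.split("\n")) {
            if (line.trim().isEmpty()) {
                continue; // skip blank lines
            }
            String[] fields = line.trim().split("\\s+");
            total += Integer.parseInt(fields[1]);
        }
        return total;
    }

    public static void main(String[] args) {
        // Compare this figure with "Map output records" in the job log.
        System.out.println("Sum of counts = " + totalCount(PART_R_00000));
    }
}
```

If the two figures ever disagree, the usual suspects are a combiner that is not a pure sum or input that was changed between runs.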

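At this point we have a first confirmation that the fully distributed installation and configuration of Hadoop 0.20.2 is correct.

Shut down all Hadoop nodes.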

root@hadoop-1:/opt/softwares/hadoop-0.20.2/bin# ./stop-all.sh
stopping jobtracker
hadoop-4: stopping tasktracker
hadoop-3: stopping tasktracker
hadoop-2: stopping tasktracker
stopping namenode
hadoop-4: stopping datanode
hadoop-2: stopping datanode
hadoop-3: stopping datanode
hadoop-1: stopping secondarynamenode

[/code]

References

http://blog.itpub.net/26613085/viewspace-1077424/

http://blog.csdn.net/gane_cheng/article/details/52913354

http://february30thcf.iteye.com/blog/1768795
