Drawing the waveform of playing audio on Android

2017-11-24 11:57

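I previously worked on audio recording and editing (trimming and merging, in WAV format) on Android. The idea was roughly to read the raw audio data from the microphone into a list, run some calculations on it and draw the result on screen, which gives a waveform that updates while recording. After that I didn't touch the project for a while. The feature worked, but it wasn't complete: what about playback? How do you get the audio data in real time during playback so that you can draw it? After thinking about it for a long time, I came across exactly this feature while browsing GitHub. I'm recording it here, and sharing it as a supplement for anyone who hasn't worked in this area.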

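OK, let's look at the result first!

The screenshots show the line and circle renderings of the audio FFT data. There is also a bar graph rendering that isn't shown here; after reading this post you can download the demo and run it to see the effect yourself.

Capturing the audio data in real time during playback and drawing it relies on a class provided by Android, Visualizer. This class can capture the audio data while MediaPlayer is playing, and it mainly returns two kinds of data: the waveform data and the FFT (Fourier transform) data (which I haven't studied in detail). I found almost no description of this class in the Android system, unlike other classes that come with plenty of English comments. Annoying..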

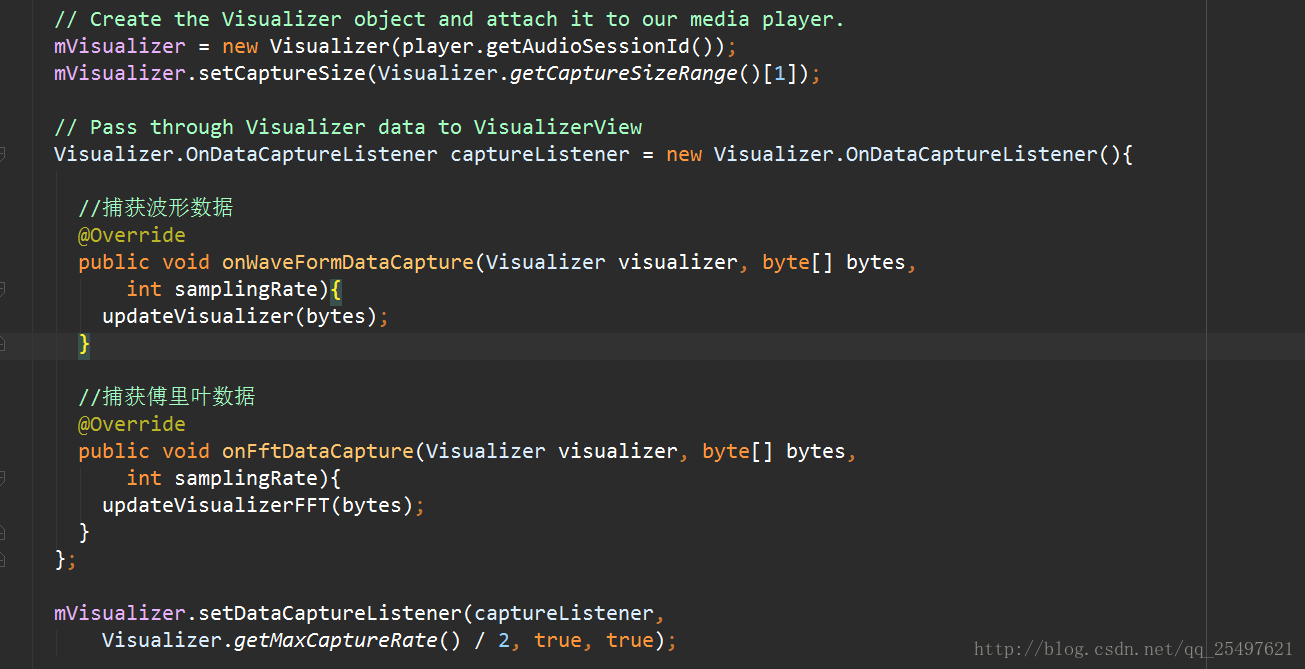

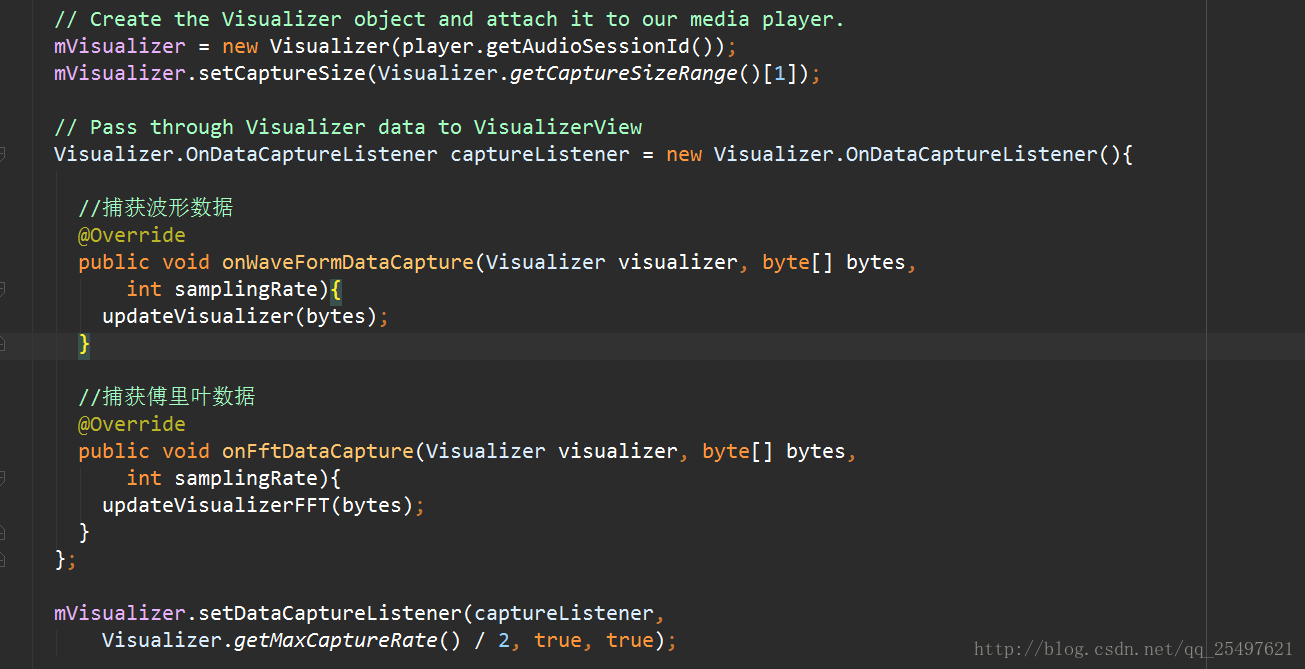

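Using it is simple! You only need to implement a capture listener (Visualizer.OnDataCaptureListener); the link() method of VisualizerView further down shows the calls involved.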
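As a rough illustration of what a listener can do with the waveform bytes: the Visualizer documentation describes the waveform capture as 8-bit unsigned mono PCM, so a value of 128 is the zero line. The sketch below is my own and not taken from the demo project. It turns such a byte array into the x0, y0, x1, y1 segment list that Canvas.drawLines expects. The names WaveformPoints, toLinePoints and sampleToY are made up for the example, and it is plain Java without Android imports so that it can be run on its own.

```java
public class WaveformPoints {

    /**
     * Converts 8-bit unsigned PCM samples into line segments
     * (x0, y0, x1, y1 per segment) spread over a width x height area.
     * Hypothetical helper, not part of the demo project.
     */
    public static float[] toLinePoints(byte[] samples, float width, float height) {
        if (samples == null || samples.length < 2) {
            return new float[0];
        }
        int segments = samples.length - 1;
        float[] points = new float[segments * 4];
        for (int i = 0; i < segments; i++) {
            points[i * 4] = width * i / segments;
            points[i * 4 + 1] = sampleToY(samples[i], height);
            points[i * 4 + 2] = width * (i + 1) / segments;
            points[i * 4 + 3] = sampleToY(samples[i + 1], height);
        }
        return points;
    }

    /** Maps one unsigned sample (0..255) to a y coordinate, with 128 at mid-height. */
    static float sampleToY(byte sample, float height) {
        int unsigned = sample & 0xFF;              // Java bytes are signed; undo that
        float centered = (unsigned - 128) / 128f;  // -1.0 up to just under 1.0
        return height / 2f - centered * (height / 2f);
    }

    public static void main(String[] args) {
        byte[] samples = {(byte) 128, (byte) 255, (byte) 0, (byte) 128};
        float[] points = toLinePoints(samples, 300f, 100f);
        System.out.println(java.util.Arrays.toString(points));
    }
}
```

In an Android view, the returned array could be passed to canvas.drawLines(points, paint) from onDraw, with width and height taken from the view.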

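OK, now let's look at the bar graph.

Does the interface look a bit rough? No rush; once we understand the principle we can polish it bit by bit. Nothing comes completely ready-made.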

First, the code of the custom view that does the drawing:

    /**
     * Copyright 2011, Felix Palmer
     *
     * Licensed under the MIT license:
     * http://creativecommons.org/licenses/MIT/
     */
    package com.tian.audio.wave.widget;

    import java.util.HashSet;
    import java.util.Set;

    import android.content.Context;
    import android.graphics.Bitmap;
    import android.graphics.Bitmap.Config;
    import android.graphics.Canvas;
    import android.graphics.Color;
    import android.graphics.Matrix;
    import android.graphics.Paint;
    import android.graphics.PorterDuff.Mode;
    import android.graphics.PorterDuffXfermode;
    import android.graphics.Rect;
    import android.media.MediaPlayer;
    import android.media.audiofx.Visualizer;
    import android.util.AttributeSet;
    import android.view.View;

    import com.tian.audio.wave.dao.AudioData;
    import com.tian.audio.wave.dao.FFTData;
    import com.tian.audio.wave.renderer.Renderer;

    /**
     * A class that draws visualizations of data received from a
     * {@link Visualizer.OnDataCaptureListener#onWaveFormDataCapture } and
     * {@link Visualizer.OnDataCaptureListener#onFftDataCapture }
     */
    public class VisualizerView extends View {
        private static final String TAG = "VisualizerView";

        private byte[] mBytes;
        private byte[] mFFTBytes;
        private Rect mRect = new Rect();
        private Visualizer mVisualizer;

        private Set<Renderer> mRenderers;

        private Paint mFlashPaint = new Paint();
        private Paint mFadePaint = new Paint();

        public VisualizerView(Context context, AttributeSet attrs, int defStyle) {
            super(context, attrs, defStyle);
            init();
        }

        public VisualizerView(Context context, AttributeSet attrs) {
            this(context, attrs, 0);
        }

        public VisualizerView(Context context) {
            this(context, null, 0);
        }

        private void init() {
            mBytes = null;
            mFFTBytes = null;

            mFlashPaint.setColor(Color.argb(122, 255, 255, 255));
            // Adjust alpha to change how quickly the image fades
            mFadePaint.setColor(Color.argb(238, 255, 255, 255));
            mFadePaint.setXfermode(new PorterDuffXfermode(Mode.MULTIPLY));

            mRenderers = new HashSet<Renderer>();
        }

        /**
         * Links the visualizer to a player
         * @param player - MediaPlayer instance to link to
         */
        public void link(MediaPlayer player) {
            if (player == null) {
                throw new NullPointerException("Cannot link to null MediaPlayer");
            }

            // Create the Visualizer object and attach it to our media player.
            mVisualizer = new Visualizer(player.getAudioSessionId());
            mVisualizer.setCaptureSize(Visualizer.getCaptureSizeRange()[1]);

            // Pass through Visualizer data to VisualizerView
            Visualizer.OnDataCaptureListener captureListener = new Visualizer.OnDataCaptureListener() {
                // Capture the waveform data
                @Override
                public void onWaveFormDataCapture(Visualizer visualizer, byte[] bytes, int samplingRate) {
                    updateVisualizer(bytes);
                }

                // Capture the FFT data
                @Override
                public void onFftDataCapture(Visualizer visualizer, byte[] bytes, int samplingRate) {
                    updateVisualizerFFT(bytes);
                }
            };

            mVisualizer.setDataCaptureListener(captureListener,
                    Visualizer.getMaxCaptureRate() / 2, true, true);

            // Enabled Visualizer and disable when we're done with the stream
            mVisualizer.setEnabled(true);
            player.setOnCompletionListener(new MediaPlayer.OnCompletionListener() {
                @Override
                public void onCompletion(MediaPlayer mediaPlayer) {
                    mVisualizer.setEnabled(false);
                }
            });
        }

        public void addRenderer(Renderer renderer) {
            if (renderer != null) {
                mRenderers.add(renderer);
            }
        }

        public void clearRenderers() {
            mRenderers.clear();
        }

        /**
         * Call to release the resources used by VisualizerView. Like with the
         * MediaPlayer it is good practice to call this method
         */
        public void release() {
            // link() may never have been called, so guard against null
            if (mVisualizer != null) {
                mVisualizer.release();
            }
        }

        /**
         * Pass data to the visualizer. Typically this will be obtained from the
         * Android Visualizer.OnDataCaptureListener call back. See
         * {@link Visualizer.OnDataCaptureListener#onWaveFormDataCapture }
         * @param bytes
         */
        public void updateVisualizer(byte[] bytes) {
            mBytes = bytes;
            invalidate();
        }

        /**
         * Pass FFT data to the visualizer. Typically this will be obtained from the
         * Android Visualizer.OnDataCaptureListener call back. See
         * {@link Visualizer.OnDataCaptureListener#onFftDataCapture }
         * @param bytes
         */
        public void updateVisualizerFFT(byte[] bytes) {
            mFFTBytes = bytes;
            invalidate();
        }

        boolean mFlash = false;

        /**
         * Call this to make the visualizer flash. Useful for flashing at the start
         * of a song/loop etc...
         */
        public void flash() {
            mFlash = true;
            invalidate();
        }

        Bitmap mCanvasBitmap;
        Canvas mCanvas;

        @Override
        protected void onDraw(Canvas canvas) {
            super.onDraw(canvas);

            // Create canvas once we're ready to draw
            mRect.set(0, 0, getWidth(), getHeight());

            if (mCanvasBitmap == null) {
                mCanvasBitmap = Bitmap.createBitmap(canvas.getWidth(), canvas.getHeight(), Config.ARGB_8888);
            }
            if (mCanvas == null) {
                mCanvas = new Canvas(mCanvasBitmap);
            }

            if (mBytes != null) {
                // Render all audio renderers
                AudioData audioData = new AudioData(mBytes);
                for (Renderer r : mRenderers) {
                    r.render(mCanvas, audioData, mRect);
                }
            }

            if (mFFTBytes != null) {
                // Render all FFT renderers
                FFTData fftData = new FFTData(mFFTBytes);
                for (Renderer r : mRenderers) {
                    r.render(mCanvas, fftData, mRect);
                }
            }

            // Fade what is already on the bitmap; this produces the shadow effect
            mCanvas.drawPaint(mFadePaint);

            if (mFlash) {
                mFlash = false;
                mCanvas.drawPaint(mFlashPaint);
            }

            canvas.drawBitmap(mCanvasBitmap, new Matrix(), null);
        }
    }

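This class is simple. It is a thin wrapper around the different renderers, and the main drawing of course happens in onDraw. Whenever the Visualizer delivers captured audio data (waveform or FFT), the view stores it and calls invalidate() to redraw. So we can skip the rest and look straight at onDraw, which is also where the shadow effect is produced.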
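To make the shadow effect a little more concrete: onDraw never clears mCanvasBitmap. Instead it paints mFadePaint over it with PorterDuff MULTIPLY on every frame. As far as I understand that blend mode, it scales whatever is already on the bitmap by the paint's alpha (238/255 here), so older strokes fade out geometrically. The small calculation below is my own illustration and not part of the demo. FadeMath and framesUntilInvisible are made-up names, and the sketch ignores the 8-bit rounding a real bitmap applies.

```java
public class FadeMath {

    /**
     * Counts how many frames it takes for a fully opaque stroke to drop
     * below 1/255 opacity when every frame multiplies it by fadeAlpha / 255.
     * Hypothetical helper for illustration only.
     */
    public static int framesUntilInvisible(int fadeAlpha) {
        if (fadeAlpha < 0 || fadeAlpha >= 255) {
            throw new IllegalArgumentException("fadeAlpha must be in 0..254");
        }
        double factor = fadeAlpha / 255.0;
        double opacity = 1.0;
        int frames = 0;
        while (opacity >= 1.0 / 255.0) {
            opacity *= factor; // one MULTIPLY pass with the fade paint
            frames++;
        }
        return frames;
    }

    public static void main(String[] args) {
        System.out.println("alpha 238: " + framesUntilInvisible(238) + " frames");
        System.out.println("alpha 200: " + framesUntilInvisible(200) + " frames");
    }
}
```

Under these assumptions the alpha of 238 used by the view keeps a stroke visible for roughly 80 frames, and a lower alpha shortens the trail, which matches the "Adjust alpha to change how quickly the image fades" comment in init().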

The abstract parent class that the renderers share:

    package com.tian.audio.wave.renderer;

    import android.graphics.Canvas;
    import android.graphics.Rect;

    import com.tian.audio.wave.dao.AudioData;
    import com.tian.audio.wave.dao.FFTData;

    abstract public class Renderer {
        // Have these as members, so we don't have to re-create them each time
        protected float[] mPoints;
        protected float[] mFFTPoints;

        public Renderer() {
        }

        // As the display of raw/FFT audio will usually look different, subclasses
        // will typically only implement one of the below methods

        /**
         * Implement this method to render the audio data onto the canvas
         * @param canvas - Canvas to draw on
         * @param data - Data to render
         * @param rect - Rect to render into
         */
        abstract public void onRender(Canvas canvas, AudioData data, Rect rect);

        /**
         * Implement this method to render the FFT audio data onto the canvas
         * @param canvas - Canvas to draw on
         * @param data - Data to render
         * @param rect - Rect to render into
         */
        abstract public void onRender(Canvas canvas, FFTData data, Rect rect);

        // These methods should actually be called for rendering

        /**
         * Render the audio data onto the canvas
         * @param canvas - Canvas to draw on
         * @param data - Data to render
         * @param rect - Rect to render into
         */
        final public void render(Canvas canvas, AudioData data, Rect rect) {
            if (mPoints == null || mPoints.length < data.bytes.length * 4) {
                mPoints = new float[data.bytes.length * 4];
            }
            onRender(canvas, data, rect);
        }

        /**
         * Render the FFT data onto the canvas
         * @param canvas - Canvas to draw on
         * @param data - Data to render
         * @param rect - Rect to render into
         */
        final public void render(Canvas canvas, FFTData data, Rect rect) {
            if (mFFTPoints == null || mFFTPoints.length < data.bytes.length * 4) {
                mFFTPoints = new float[data.bytes.length * 4];
            }
            onRender(canvas, data, rect);
        }
    }

The renderer class that draws the bars:

    /**
     * Copyright 2011, Felix Palmer
     *
     * Licensed under the MIT license:
     * http://creativecommons.org/licenses/MIT/
     */
    package com.tian.audio.wave.renderer;

    import android.graphics.Canvas;
    import android.graphics.Paint;
    import android.graphics.Rect;

    import com.tian.audio.wave.dao.AudioData;
    import com.tian.audio.wave.dao.FFTData;

    /**
     * Uses the paint to draw each of the bars
     */
    public class BarGraphRenderer extends Renderer {
        private int mDivisions;
        private Paint mPaint;
        private boolean mTop;

        /**
         * Renders the FFT data as a series of lines, in histogram form
         * @param divisions - must be a power of 2. Controls how many lines to draw
         * @param paint - Paint to draw lines with
         * @param top - whether to draw the lines at the top of the canvas, or the bottom
         */
        public BarGraphRenderer(int divisions, Paint paint, boolean top) {
            super();
            mDivisions = divisions;
            mPaint = paint;
            mTop = top;
        }

        @Override
        public void onRender(Canvas canvas, AudioData data, Rect rect) {
            // Do nothing, we only display FFT data
        }

        @Override
        public void onRender(Canvas canvas, FFTData data, Rect rect) {
            for (int i = 0; i < data.bytes.length / mDivisions; i++) {
                // Each bar is a vertical line: both end points share the same x
                mFFTPoints[i * 4] = i * 4 * mDivisions;
                mFFTPoints[i * 4 + 2] = i * 4 * mDivisions;

                // Sample one real/imaginary pair every mDivisions bytes
                byte rfk = data.bytes[mDivisions * i];
                byte ifk = data.bytes[mDivisions * i + 1];
                float magnitude = (rfk * rfk + ifk * ifk);
                // log10(0) is -Infinity, so treat a silent bin as 0 dB explicitly
                int dbValue = magnitude > 0 ? (int) (10 * Math.log10(magnitude)) : 0;

                if (mTop) {
                    mFFTPoints[i * 4 + 1] = 0;
                    mFFTPoints[i * 4 + 3] = (dbValue * 2 - 10);
                } else {
                    mFFTPoints[i * 4 + 1] = rect.height();
                    mFFTPoints[i * 4 + 3] = rect.height() - (dbValue * 2 - 10);
                }
            }

            canvas.drawLines(mFFTPoints, mPaint);
        }
    }
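The arithmetic inside onRender can be tried outside Android. According to the Visualizer documentation, the FFT capture is a byte array in which the first two bytes hold the real parts of the DC and the highest-frequency component, and every following pair of bytes holds the real and imaginary part of one frequency bin. The sketch below is my own, with made-up names (FftBars, binToDb, barHeights). It applies the same 10 * log10(re² + im²) formula as BarGraphRenderer, but walks the real/imaginary pairs bin by bin instead of every mDivisions-th byte, and treats a silent bin as 0 dB.

```java
public class FftBars {

    /** Power of one FFT bin in dB, using the same formula as BarGraphRenderer. */
    public static int binToDb(byte re, byte im) {
        int power = re * re + im * im;
        if (power == 0) {
            return 0; // log10(0) is -Infinity, so treat silence as 0 dB
        }
        return (int) (10 * Math.log10(power));
    }

    /**
     * Computes one dB value per bar. Bin k is read from fft[2k] and
     * fft[2k + 1]; bin 0 is skipped because those two bytes hold the DC
     * and highest-frequency real parts rather than a real/imaginary pair.
     *
     * @param fft  bytes laid out as delivered to onFftDataCapture
     * @param step take every step-th bin (1 means every bin)
     */
    public static int[] barHeights(byte[] fft, int step) {
        if (step < 1) {
            throw new IllegalArgumentException("step must be at least 1");
        }
        int bins = fft.length / 2 - 1; // usable bins, excluding bin 0
        int bars = bins <= 0 ? 0 : (bins + step - 1) / step;
        int[] heights = new int[bars];
        for (int bar = 0; bar < bars; bar++) {
            int k = 1 + bar * step;
            heights[bar] = binToDb(fft[2 * k], fft[2 * k + 1]);
        }
        return heights;
    }

    public static void main(String[] args) {
        byte[] fft = {1, 1, 3, 4, 0, 0, 10, 0};
        System.out.println(java.util.Arrays.toString(barHeights(fft, 1)));
    }
}
```

The step parameter plays a role similar to the divisions argument of BarGraphRenderer: a larger step means fewer, more widely spaced bars.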

The layout file:

    <?xml version="1.0" encoding="utf-8"?>
    <LinearLayout xmlns:android="http://schemas.android.com/apk/res/android"
        android:layout_width="fill_parent"
        android:layout_height="fill_parent"
        android:background="@drawable/bg"
        android:orientation="vertical" >

        <FrameLayout
            android:layout_width="fill_parent"
            android:layout_height="0dp"
            android:layout_margin="10dp"
            android:layout_weight="1"
            android:background="#000" >

            <com.tian.audio.wave.widget.VisualizerView
                android:id="@+id/visualizerView"
                android:layout_width="fill_parent"
                android:layout_height="fill_parent" />
        </FrameLayout>

        <LinearLayout
            android:layout_width="fill_parent"
            android:layout_height="wrap_content"
            android:layout_weight="0" >

            <Button
                android:layout_width="0dp"
                android:layout_height="wrap_content"
                android:layout_margin="10dp"
                android:layout_weight="0.25"
                android:onClick="barPressed"
                android:text="Bar" />

            <Button
                android:layout_width="0dp"
                android:layout_height="wrap_content"
                android:layout_margin="10dp"
                android:layout_weight="0.25"
                android:onClick="circlePressed"
                android:text="Circle" />

            <Button
                android:layout_width="0dp"
                android:layout_height="wrap_content"
                android:layout_margin="10dp"
                android:layout_weight="0.25"
                android:onClick="circleBarPressed"
                android:text="Circle Bar" />

            <Button
                android:layout_width="0dp"
                android:layout_height="wrap_content"
                android:layout_margin="10dp"
                android:layout_weight="0.25"
                android:onClick="linePressed"
                android:text="Line" />

            <Button
                android:layout_width="0dp"
                android:layout_height="wrap_content"
                android:layout_margin="10dp"
                android:layout_weight="0.25"
                android:onClick="clearPressed"
                android:text="Clear" />
        </LinearLayout>

        <LinearLayout
            android:layout_width="fill_parent"
            android:layout_height="wrap_content"
            android:layout_weight="0" >

            <Button
                android:layout_width="0dp"
                android:layout_height="wrap_content"
                android:layout_margin="10dp"
                android:layout_weight="0.5"
                android:onClick="startPressed"
                android:text="Start" />

            <Button
                android:layout_width="0dp"
                android:layout_height="wrap_content"
                android:layout_margin="10dp"
                android:layout_weight="0.5"
                android:onClick="stopPressed"
                android:text="Stop" />
        </LinearLayout>
    </LinearLayout>

The code that uses it:

    // Methods for adding renderers to visualizer
    private void addBarGraphRenderers() {
        // Bars along the bottom
        Paint paint = new Paint();
        paint.setStrokeWidth(50f);
        paint.setAntiAlias(true);
        paint.setColor(Color.argb(200, 56, 138, 252));
        BarGraphRenderer barGraphRendererBottom = new BarGraphRenderer(16, paint, false);
        mVisualizerView.addRenderer(barGraphRendererBottom);

        // Bars along the top
        Paint paint2 = new Paint();
        paint2.setStrokeWidth(12f);
        paint2.setAntiAlias(true);
        paint2.setColor(Color.argb(200, 181, 111, 233));
        BarGraphRenderer barGraphRendererTop = new BarGraphRenderer(4, paint2, true);
        mVisualizerView.addRenderer(barGraphRendererTop);
    }

OK, if you don't know about Visualizer, this feature is hard to build; once you do, it's easy! Haha..

GitHub repository (if you download it, please give it a star while you're there; it encourages the author too. Thanks!):

https://github.com/T-chuangxin/AudioWaveShow

Improve a little every day, and time will make you a giant! Keep going!