Python in Practice: KNN on Handwritten Digit Bitmaps

2017-11-10 19:34

K-nearest-neighbor classification and regression

#-*- coding=utf-8 -*-
import numpy as np
from sklearn import datasets,neighbors
from sklearn.model_selection import train_test_split
import matplotlib.pyplot as plt

def load_data():
    # Load the handwritten digits dataset and make a stratified 75/25 train/test split
    digits=datasets.load_digits()
    X_train=digits.data
    y_train=digits.target
    return train_test_split(X_train,y_train, test_size=0.25,random_state=0,stratify=y_train)

def creat_regression_data(n):
    '''
    Add noise on top of sin(x)
    '''
    X=5*np.random.rand(n,1)
    y=np.sin(X).ravel()
    # Perturb every 5th sample; y[::5].size also works when n is not a multiple of 5
    y[::5]+=1*(0.5*np.random.rand(y[::5].size))
    # Split the generated X and y; continuous targets cannot be stratified
    return train_test_split(X,y, test_size=0.25,random_state=0)

def test_KNeighborsClassifier(*data):
    X_train,X_test,y_train,y_test=data
    cls=neighbors.KNeighborsClassifier()
    cls.fit(X_train,y_train)
    # score() returns the mean accuracy for a classifier
    print('KNeighborsClassifier Train score :%.2f'%cls.score(X_train,y_train))
    print('KNeighborsClassifier Test score :%.2f'%cls.score(X_test,y_test))

def test_KNeighborsRegressor(*data):
    X_train,X_test,y_train,y_test=data
    cls=neighbors.KNeighborsRegressor()
    cls.fit(X_train,y_train)
    # score() returns R^2 for a regressor
    print('KNeighborsRegressor Train score :%.2f'%cls.score(X_train,y_train))
    print('KNeighborsRegressor Test score :%.2f'%cls.score(X_test,y_test))

if __name__=='__main__':
    X_train,X_test,y_train,y_test=load_data()
    test_KNeighborsClassifier(X_train,X_test,y_train,y_test)
    X_train,X_test,y_train,y_test=creat_regression_data(1000)
    test_KNeighborsRegressor(X_train,X_test,y_train,y_test)

The output is:

PS E:\p> python test.py
KNeighborsClassifier Train score :0.99
KNeighborsClassifier Test score :0.98
KNeighborsRegressor Train score :0.97
KNeighborsRegressor Test score :0.94

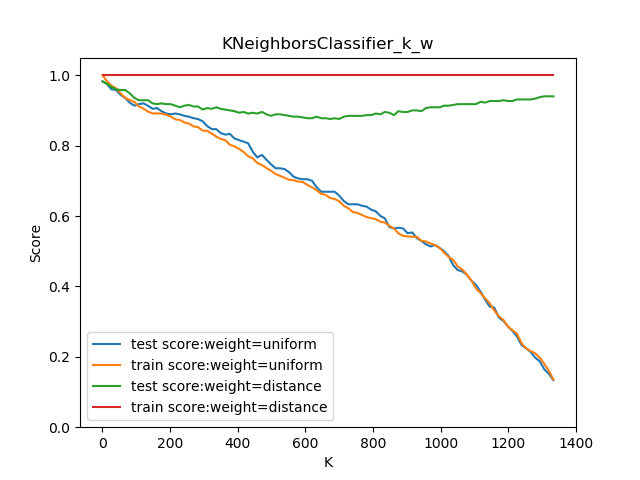
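For intuition about what the two estimators above compute with their default settings (5 neighbors, uniform weights, Euclidean distance), here is a minimal NumPy sketch. The helper `knn_predict` and the toy arrays are hypothetical and only illustrate the idea; scikit-learn's real implementation is more elaborate (tree-based search, tie handling, and so on).

```python
import numpy as np

def knn_predict(X_train, y_train, x, k=5, regression=False):
    """Hypothetical helper: predict for one query point x with plain Euclidean KNN."""
    dists = np.linalg.norm(X_train - x, axis=1)   # distance from x to every training point
    nearest = np.argsort(dists)[:k]               # indices of the k closest points
    if regression:
        return y_train[nearest].mean()            # regression: average the neighbors' targets
    labels, counts = np.unique(y_train[nearest], return_counts=True)
    return labels[np.argmax(counts)]              # classification: majority vote

# Toy data (made up for illustration): two clusters on a line
X_toy = np.array([[0.0], [0.1], [0.2], [1.0], [1.1], [1.2]])
y_class = np.array([0, 0, 0, 1, 1, 1])
y_reg = np.array([0.0, 0.1, 0.2, 1.0, 1.1, 1.2])

pred_class = knn_predict(X_toy, y_class, np.array([0.15]), k=3)
pred_reg = knn_predict(X_toy, y_reg, np.array([0.15]), k=3, regression=True)
print(pred_class, pred_reg)
```

The only difference between the two modes is the last step: a vote over the neighbors' labels versus a mean over their target values.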

Next, let's look at how K and the weighting scheme affect the KNN classifier's score:

#-*- coding=utf-8 -*-
import numpy as np
from sklearn import datasets,neighbors
from sklearn.model_selection import train_test_split
import matplotlib.pyplot as plt

def load_data():
    digits=datasets.load_digits()
    X_train=digits.data
    y_train=digits.target
    return train_test_split(X_train,y_train, test_size=0.25,random_state=0,stratify=y_train)

def test_KNeighborsClassifier_k_w(*data):
    X_train,X_test,y_train,y_test=data
    # y_train.size is the number of training samples, i.e. the upper bound for K
    Ks=np.linspace(1,y_train.size,num=100,endpoint=False,dtype='int')
    # linspace originally comes from MATLAB; it creates an evenly spaced sequence
    # endpoint : bool, optional
    # If True, stop is the last sample. Otherwise, it is not included. Default is True.
    weights=['uniform','distance']
    fig=plt.figure()
    ax=fig.add_subplot(1,1,1)
    for weight in weights:
        Train_scores=[]
        Test_scores=[]
        for K in Ks:
            clf=neighbors.KNeighborsClassifier(weights=weight,n_neighbors=K)
            clf.fit(X_train,y_train)
            Train_scores.append(clf.score(X_train,y_train))
            Test_scores.append(clf.score(X_test,y_test))
        # One train curve and one test curve per weighting scheme
        ax.plot(Ks,Test_scores,label="test score:weight=%s"%weight)
        ax.plot(Ks,Train_scores,label="train score:weight=%s"%weight)
    ax.legend(loc='best')
    ax.set_xlabel("K")
    ax.set_ylabel("Score")
    ax.set_ylim(0,1.05)
    ax.set_title("KNeighborsClassifier_k_w")
    plt.show()

if __name__=='__main__':
    X_train,X_test,y_train,y_test=load_data()
    test_KNeighborsClassifier_k_w( X_train,X_test,y_train,y_test)
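The K grid in the script above is built with `np.linspace(..., endpoint=False, dtype='int')`. A small sketch of what that call produces may help; the value `n_train = 1347` is an assumption here (75% of the 1797 digit samples), standing in for `y_train.size`.

```python
import numpy as np

n_train = 1347  # assumed training-set size, standing in for y_train.size

# Same call shape as in the script: 100 evenly spaced values starting at 1,
# stop value excluded, converted to integers.
Ks = np.linspace(1, n_train, num=100, endpoint=False, dtype='int')

print(Ks[:5])   # the first few K values
print(Ks[-1])   # the largest K tried; stays below n_train because endpoint=False
```

Excluding the endpoint keeps every K strictly smaller than the number of training samples, so `n_neighbors` never exceeds the data available.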

#-*- coding=utf-8 -*-

import numpy as np

from sklearn import datasets,neighbors

from sklearn.model_selection import train_test_split

import matplotlib.pyplot as plt

def load_data():

digits=datasets.load_digits()

X_train=digits.data

y_train=digits.target

return train_test_split(X_train,y_train, test_size=0.25,random_state=0,stratify=y_train)

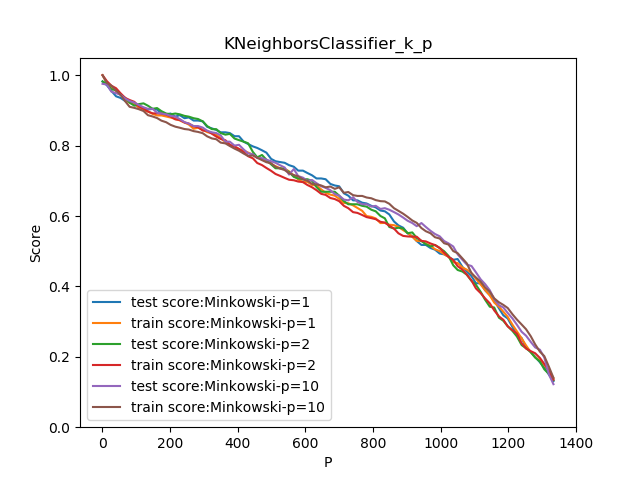

def test_KNeighborsClassifier_k_p(*data):

X_train,X_test,y_train,y_test=data

Ks=np.linspace(1,y_train.size,num=100,endpoint=False,dtype='int')#arange数据类型的size

#linspace最初是从MATLAB中学来的,用此来创建等差数列

#endpoint : bool, optional

#If True, stop is the last sample. Otherwise, it is not included. Default is True.

ps=[1,2,10]

fig=plt.figure()

ax=fig.add_subplot(1,1,1)

for p in ps:

Train_scores=[]

Test_scores=[]

for K in Ks:

clf=neighbors.KNeighborsClassifier(p=p,n_neighbors=K)

clf.fit(X_train,y_train)

Train_scores.append(clf.score(X_train,y_train))

Test_scores.append(clf.score(X_test,y_test))

ax.plot(Ks,Test_scores,label="test score:Minkowski-p=%s"%p)#闵可夫斯基

ax.plot(Ks,Train_scores,label="train score:Minkowski-p=%s"%p)

ax.legend(loc='best')

ax.set_xlabel("P")

ax.set_ylabel("Score")

ax.set_ylim(0,1.05)

ax.set_title("KNeighborsClassifier_k_p")

plt.show()

if __name__=='__main__':

X_train,X_test,y_train,y_test=load_data()

test_KNeighborsClassifier_k_p( X_train,X_test,y_train,y_test)

I have to say, this plot takes a long time to draw; my computer is just too slow.
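One way to cut the waiting time, sketched here as a suggestion rather than as part of the original scripts, is to sweep a coarser K grid and let scikit-learn use all CPU cores through the `n_jobs` parameter. The helper name `sweep_k` and the choice of 20 grid points are assumptions made for illustration.

```python
import numpy as np
from sklearn import datasets, neighbors
from sklearn.model_selection import train_test_split

def sweep_k(X_train, X_test, y_train, y_test, num_k=20, weights='uniform'):
    """Hypothetical helper: score KNeighborsClassifier on a coarse grid of K values."""
    # 20 values instead of 100 means roughly a fifth of the fits and scores
    Ks = np.linspace(1, y_train.size, num=num_k, endpoint=False, dtype='int')
    train_scores, test_scores = [], []
    for K in Ks:
        # n_jobs=-1 asks scikit-learn to use all available cores for the neighbor search
        clf = neighbors.KNeighborsClassifier(n_neighbors=int(K), weights=weights, n_jobs=-1)
        clf.fit(X_train, y_train)
        train_scores.append(clf.score(X_train, y_train))
        test_scores.append(clf.score(X_test, y_test))
    return Ks, train_scores, test_scores

digits = datasets.load_digits()
X_train, X_test, y_train, y_test = train_test_split(
    digits.data, digits.target, test_size=0.25, random_state=0, stratify=digits.target)

Ks, train_scores, test_scores = sweep_k(X_train, X_test, y_train, y_test)
for K, tr, te in zip(Ks, train_scores, test_scores):
    print('K=%4d  train=%.2f  test=%.2f' % (K, tr, te))
```

How much this helps depends on the machine and the scikit-learn version, so treat it as something to try rather than a guaranteed speedup.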

The regression plots look much the same, only smoother.
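The post does not include the regression version, so the following is only a sketch of what it might look like: the same sweep over K and the weighting scheme, applied to `KNeighborsRegressor` on noisy sin(x) data. The fixed random seed, the 20-point K grid, and the `results` dictionary are assumptions made here for repeatability and brevity.

```python
import numpy as np
from sklearn import neighbors
from sklearn.model_selection import train_test_split

rng = np.random.RandomState(0)           # fixed seed: an assumption, for repeatable output
n = 1000
X = 5 * rng.rand(n, 1)
y = np.sin(X).ravel()
y[::5] += 0.5 * rng.rand(y[::5].size)    # noise on every 5th sample, as in the first script

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.25, random_state=0)

Ks = np.linspace(1, y_train.size, num=20, endpoint=False, dtype='int')
results = {}                             # weight name -> list of test R^2 scores, one per K
for weight in ['uniform', 'distance']:
    test_scores = []
    for K in Ks:
        reg = neighbors.KNeighborsRegressor(n_neighbors=int(K), weights=weight)
        reg.fit(X_train, y_train)
        test_scores.append(reg.score(X_test, y_test))   # R^2 on the held-out data
    results[weight] = test_scores

for weight, scores in results.items():
    print(weight, ['%.2f' % s for s in scores])
```

The collected scores can be drawn with the same `ax.plot(Ks, ...)` calls used in the classifier scripts.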
