TensorBoard in TensorFlow

2017-10-26 17:52

Preface

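TensorBoard is the data visualization tool that ships with TensorFlow; it is installed automatically when you install TensorFlow. The API used to record the training process differs between TensorFlow versions: version 1.3 mainly relies on the tf.summary module, whereas earlier versions exposed the corresponding functions directly under tf.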

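When using TensorBoard, use tf.name_scope to create a scope and then define named variables and operations inside each scope. Scopes are not strictly required, but they group related nodes together, which keeps the graph view and the summary tags readable.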
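
As a small illustration of how scopes shape the names that TensorBoard displays, the sketch below builds two placeholders inside a scope and prints their full names. It assumes a TensorFlow release that provides the tensorflow.compat.v1 module (late 1.x or 2.x); under TensorFlow 1.3 itself, a plain import tensorflow as tf without the disable_eager_execution call should behave the same way.

```python
# Assumption: a TensorFlow release that provides tensorflow.compat.v1.
import tensorflow.compat.v1 as tf

tf.disable_eager_execution()  # build a static graph, as TensorFlow 1.x does

graph = tf.Graph()
with graph.as_default():
    # Everything created inside the scope gets the prefix "input/".
    with tf.name_scope('input'):
        x = tf.placeholder(tf.float32, [None, 784], name='x-input')
        y_ = tf.placeholder(tf.float32, [None, 10], name='y-input')

print(x.name)   # input/x-input:0
print(y_.name)  # input/y-input:0
```

In the graph view, both placeholders then appear inside a single collapsible node named input.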

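tf.summary provides a set of functions for recording statistics:

- tf.summary.histogram records the distribution of a tensor's values, which TensorBoard draws as a histogram.
- tf.summary.scalar records a single scalar value.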
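
The difference between the two kinds of summary can be illustrated without TensorFlow. The sketch below is plain Python, and the helper names scalar_stats and histogram_counts are hypothetical: the first computes single numbers of the kind one would log as scalars, the second counts values per bin the way a histogram does. The equal-width bins are a simplification; TensorBoard chooses its own bucket boundaries.

```python
import math


def scalar_stats(values):
    """Return single-number statistics that could each be logged as a scalar."""
    n = len(values)
    mean = sum(values) / n
    # Population standard deviation: sqrt(mean((v - mean)^2)).
    stddev = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    return {'mean': mean, 'stddev': stddev,
            'max': max(values), 'min': min(values)}


def histogram_counts(values, num_bins):
    """Count values per equal-width bin between the minimum and the maximum."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / num_bins or 1.0  # fall back to 1.0 if all values are equal
    counts = [0] * num_bins
    for v in values:
        # The maximum value is placed in the last bin.
        index = min(int((v - lo) / width), num_bins - 1)
        counts[index] += 1
    return counts


weights = [-2.0, -1.0, 0.0, 1.0, 2.0]
stats = scalar_stats(weights)
counts = histogram_counts(weights, num_bins=4)
print(stats)
print(counts)  # [1, 1, 1, 2]
```

A scalar summary gives one point per step on a curve, while a histogram summary shows how the whole distribution of a tensor shifts over training.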

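In addition, the following are needed:

- tf.summary.merge_all merges all summary operations so that every summary is generated in one run.
- tf.summary.FileWriter creates the directory that the summaries are written to (training and test results can go into separate directories).
- add_summary writes a newly generated summary to the writer.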
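
The three pieces above fit together roughly as in the following minimal sketch, which is separate from the MNIST example. It assumes a TensorFlow release that provides tensorflow.compat.v1; the temporary log directory and the names step_value and noise are made up for illustration.

```python
import glob
import os
import tempfile

# Assumption: a TensorFlow release that provides tensorflow.compat.v1.
import tensorflow.compat.v1 as tf

tf.disable_eager_execution()

log_dir = tempfile.mkdtemp()  # hypothetical log directory

graph = tf.Graph()
with graph.as_default():
    step_value = tf.placeholder(tf.float32, shape=[], name='step_value')
    tf.summary.scalar('step_value', step_value)
    tf.summary.histogram('noise', tf.random_normal([100]))
    # One op that evaluates every summary defined in this graph.
    merged = tf.summary.merge_all()

with tf.Session(graph=graph) as sess:
    # The writer creates the directory and an event file inside it.
    writer = tf.summary.FileWriter(os.path.join(log_dir, 'train'), sess.graph)
    for i in range(5):
        summary = sess.run(merged, feed_dict={step_value: float(i)})
        writer.add_summary(summary, i)  # second argument is the global step
    writer.close()

event_files = glob.glob(
    os.path.join(log_dir, 'train', 'events.out.tfevents.*'))
print(event_files)
```

Pointing TensorBoard at log_dir (tensorboard --logdir with that path) should then show the scalar curve, the histogram, and the graph.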

Code

# -*- coding: utf-8 -*-
"""
Created on Wed Oct 25 11:41:50 2017

@author: Sky_Gao
"""

import tensorflow as tf
# Note: this tutorial module ships with TensorFlow 1.x only.
from tensorflow.examples.tutorials.mnist import input_data

max_steps = 100
learning_rate = 0.001
dropout = 0.9  # keep probability fed to the dropout layer during training
data_dir = 'MNIST_data/'
# Directory in which the summaries are stored
log_dir = 'mnist_with_summaries/'

mnist = input_data.read_data_sets(data_dir, one_hot=True)

def weight_variable(shape):
    # Initialize weights from a truncated normal distribution
    initial = tf.truncated_normal(shape=shape, stddev=0.1)
    return tf.Variable(initial)

def bias_variable(shape):
    # Initialize biases to a small positive constant
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)

# Define the input scope and use placeholders for the inputs
with tf.name_scope('input'):
    x = tf.placeholder(tf.float32, [None, 784], name='x-input')
    y_ = tf.placeholder(tf.float32, [None, 10], name='y-input')

# Reshape the flat input into 28x28 images and log up to 10 of them
with tf.name_scope('input_reshape'):
    image_shaped_input = tf.reshape(x, [-1, 28, 28, 1])
    tf.summary.image('input', image_shaped_input, 10)

def Variable_summaries(var):
    # Attach mean, stddev, max, min and histogram summaries to a tensor
    with tf.name_scope('summaries'):
        mean = tf.reduce_mean(var)
        tf.summary.scalar('mean', mean)
        with tf.name_scope('stddev'):
            stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
        tf.summary.scalar('stddev', stddev)
        tf.summary.scalar('max', tf.reduce_max(var))
        tf.summary.scalar('min', tf.reduce_min(var))
        tf.summary.histogram('histogram', var)

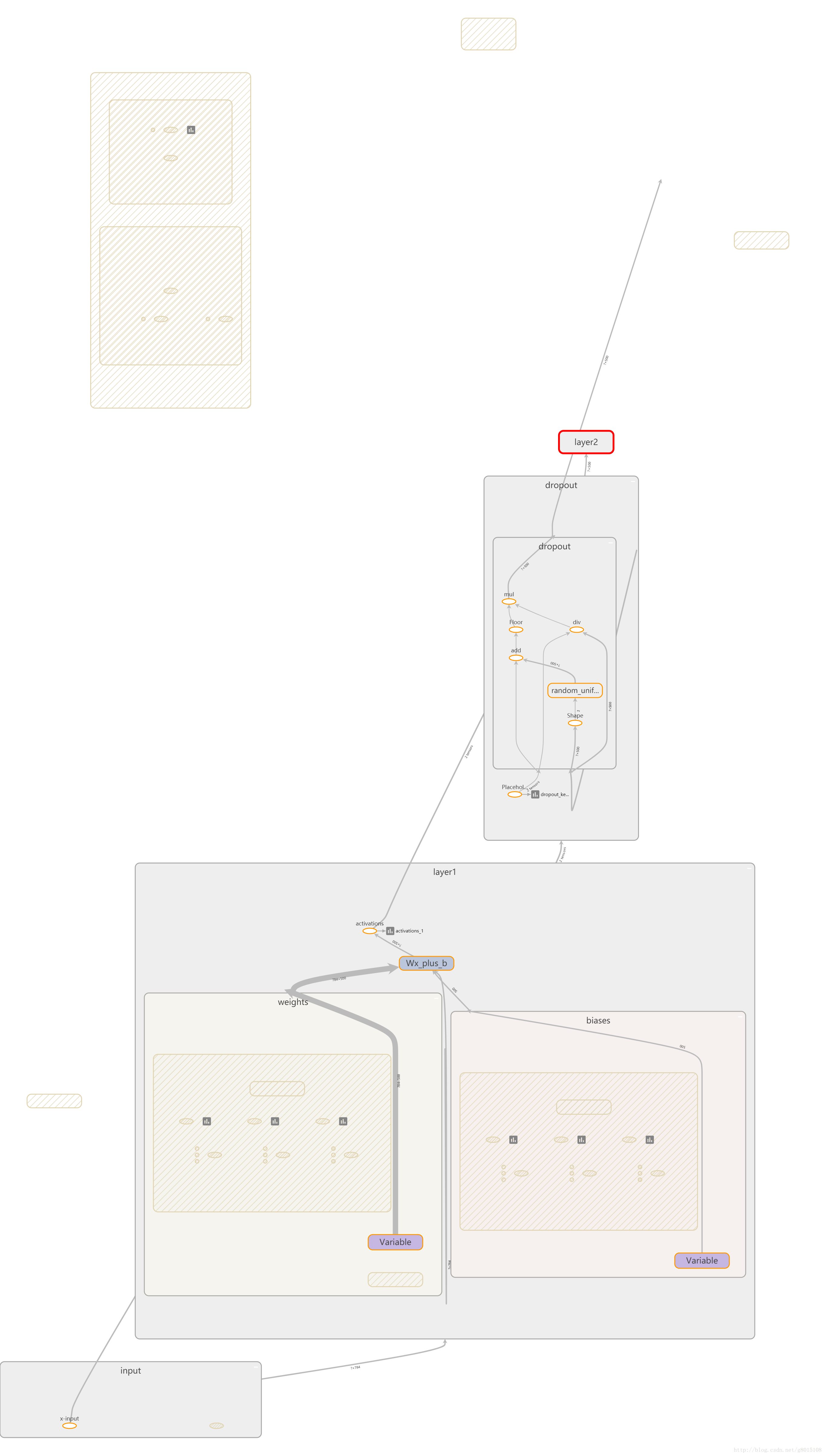

def nn_layer(input_tensor, input_dim, output_dim, layer_name, act=tf.nn.relu):
    # Fully connected layer with summaries for weights, biases and activations
    with tf.name_scope(layer_name):
        with tf.name_scope('weights'):
            weights = weight_variable([input_dim, output_dim])
            Variable_summaries(weights)
        with tf.name_scope('biases'):
            biases = bias_variable([output_dim])
            Variable_summaries(biases)
        with tf.name_scope('Wx_plus_b'):
            preactivate = tf.matmul(input_tensor, weights) + biases
            tf.summary.histogram('preactivate', preactivate)
        activations = act(preactivate, name='activations')
        tf.summary.histogram('activations', activations)
        return activations

hidden1 = nn_layer(x, 784, 500, 'layer1')

with tf.name_scope('dropout'):
    keep_prob = tf.placeholder(dtype=tf.float32)
    tf.summary.scalar('dropout_keep_probability', keep_prob)
    dropped = tf.nn.dropout(hidden1, keep_prob)

# The output layer uses the identity activation, so y holds raw logits
y = nn_layer(dropped, 500, 10, 'layer2', act=tf.identity)

with tf.name_scope('cross_entropy'):
    diff = tf.nn.softmax_cross_entropy_with_logits(logits=y, labels=y_)
    with tf.name_scope('total'):
        cross_entropy = tf.reduce_mean(diff)
tf.summary.scalar('cross_entropy', cross_entropy)

with tf.name_scope('train'):
    train_step = tf.train.AdamOptimizer(learning_rate).minimize(cross_entropy)

with tf.name_scope('accuracy'):
    with tf.name_scope('correct_prediction'):
        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
    with tf.name_scope('accuracy'):
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
tf.summary.scalar('accuracy', accuracy)

with tf.Session() as sess:
    # Merge all summary ops so that a single run generates every summary
    merged = tf.summary.merge_all()
    train_writer = tf.summary.FileWriter(log_dir + 'train', sess.graph)
    test_writer = tf.summary.FileWriter(log_dir + 'test', sess.graph)
    tf.global_variables_initializer().run()

    def feed_dict(train):
        # Training: next mini-batch with dropout; testing: full test set, no dropout
        if train:
            xs, ys = mnist.train.next_batch(100)
            k = dropout
        else:
            xs, ys = mnist.test.images, mnist.test.labels
            k = 1.0
        return {x: xs, y_: ys, keep_prob: k}

    saver = tf.train.Saver()
    for i in range(max_steps):
        if i % 10 == 0:
            # Every 10th step: evaluate on the test set and log the summaries
            summary, acc = sess.run([merged, accuracy], feed_dict=feed_dict(False))
            test_writer.add_summary(summary, i)
            print('accuracy at step %s : %s' % (i, acc))
        else:
            if i % 100 == 99:
                # Every 100th step: record run metadata and save a checkpoint
                run_options = tf.RunOptions(trace_level=tf.RunOptions.FULL_TRACE)
                run_metadata = tf.RunMetadata()
                summary, _ = sess.run([merged, train_step], feed_dict=feed_dict(True),
                                      options=run_options, run_metadata=run_metadata)
                train_writer.add_run_metadata(run_metadata, 'step%03d' % i)
                train_writer.add_summary(summary, i)
                saver.save(sess, log_dir + 'model.ckpt', i)
            else:
                summary, _ = sess.run([merged, train_step], feed_dict=feed_dict(True))
                train_writer.add_summary(summary, i)

    train_writer.close()
    test_writer.close()
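
The branching in the training loop is easy to misread, so the sketch below replays only its schedule in plain Python, without TensorFlow. The helper step_kind is hypothetical; it mirrors the two modulo tests in the loop and counts how often each branch runs for max_steps = 100.

```python
from collections import Counter


def step_kind(i):
    """Classify step i the way the training loop branches."""
    if i % 10 == 0:
        return 'test'         # evaluate on the test set, log to test_writer
    if i % 100 == 99:
        return 'train+trace'  # train, record run metadata, save a checkpoint
    return 'train'            # ordinary training step, log to train_writer


max_steps = 100
counts = Counter(step_kind(i) for i in range(max_steps))
print(counts['test'], counts['train'], counts['train+trace'])  # 10 89 1
```

With these settings the test curve therefore has 10 points, the training curve has 90, and the traced step with its checkpoint occurs once, at step 99.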
