HashMap底层原理之源码分析

2017-09-25 22:19

645 查看

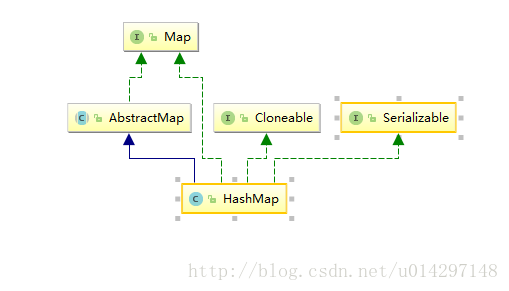

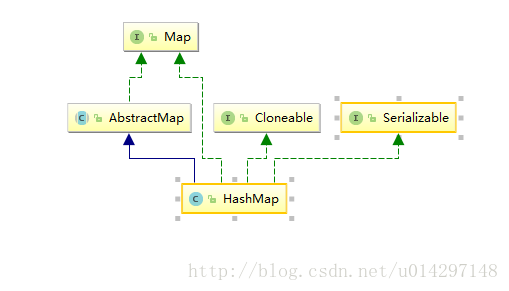

HashMap的继承结构图:

底层代码实现

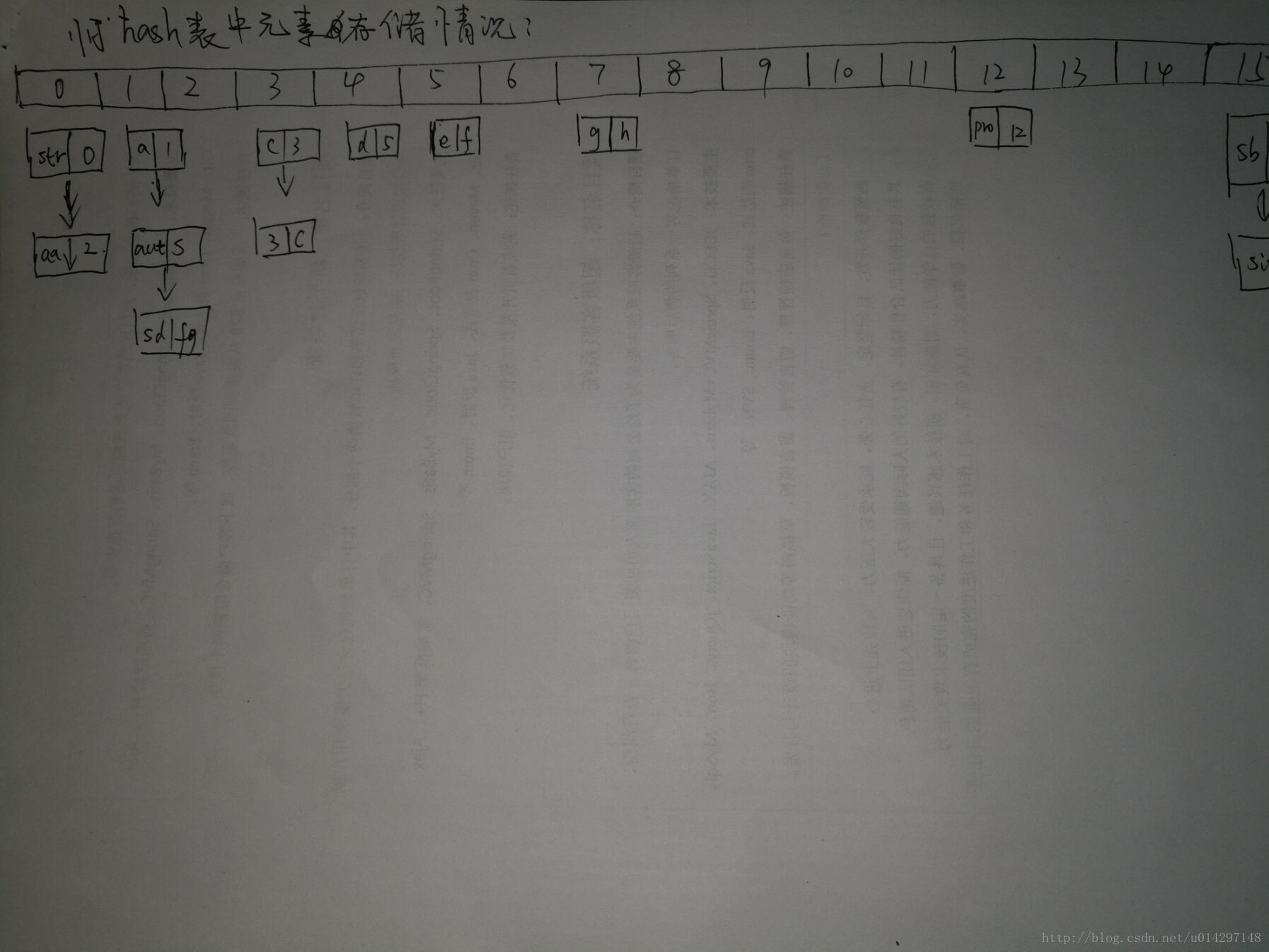

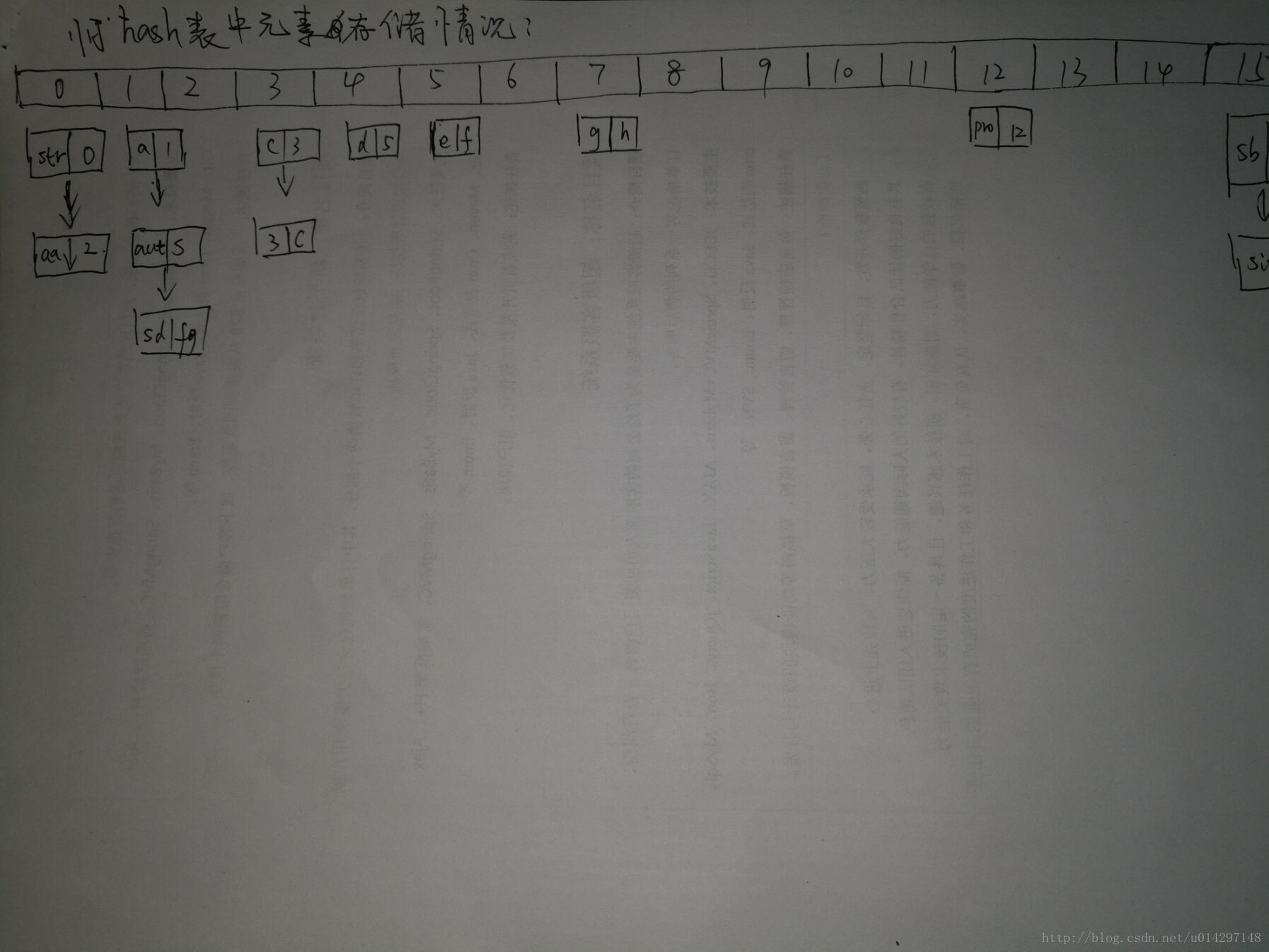

当还没有达到阈值的时候,存储完12个元素的情况是

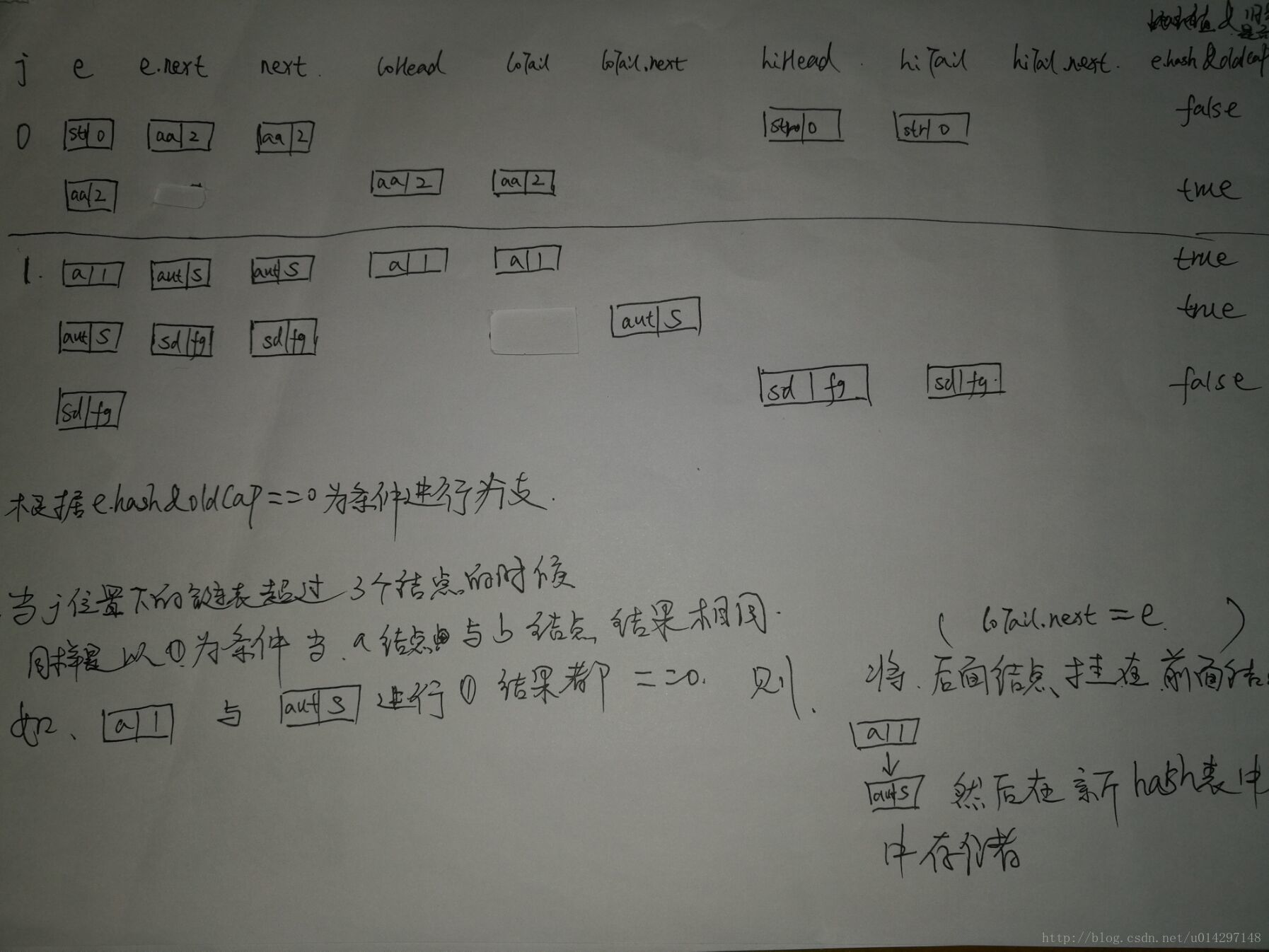

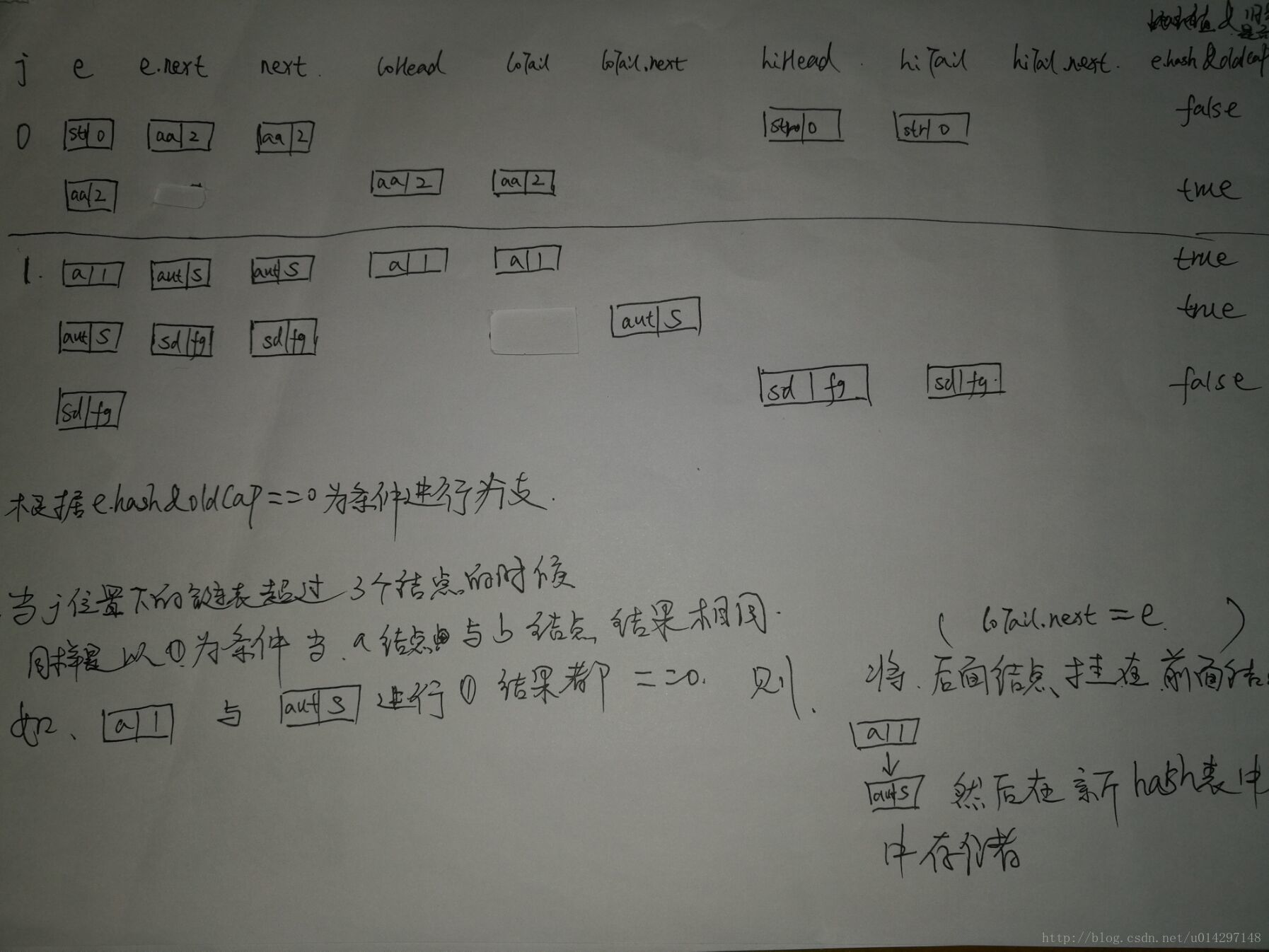

扩容过程

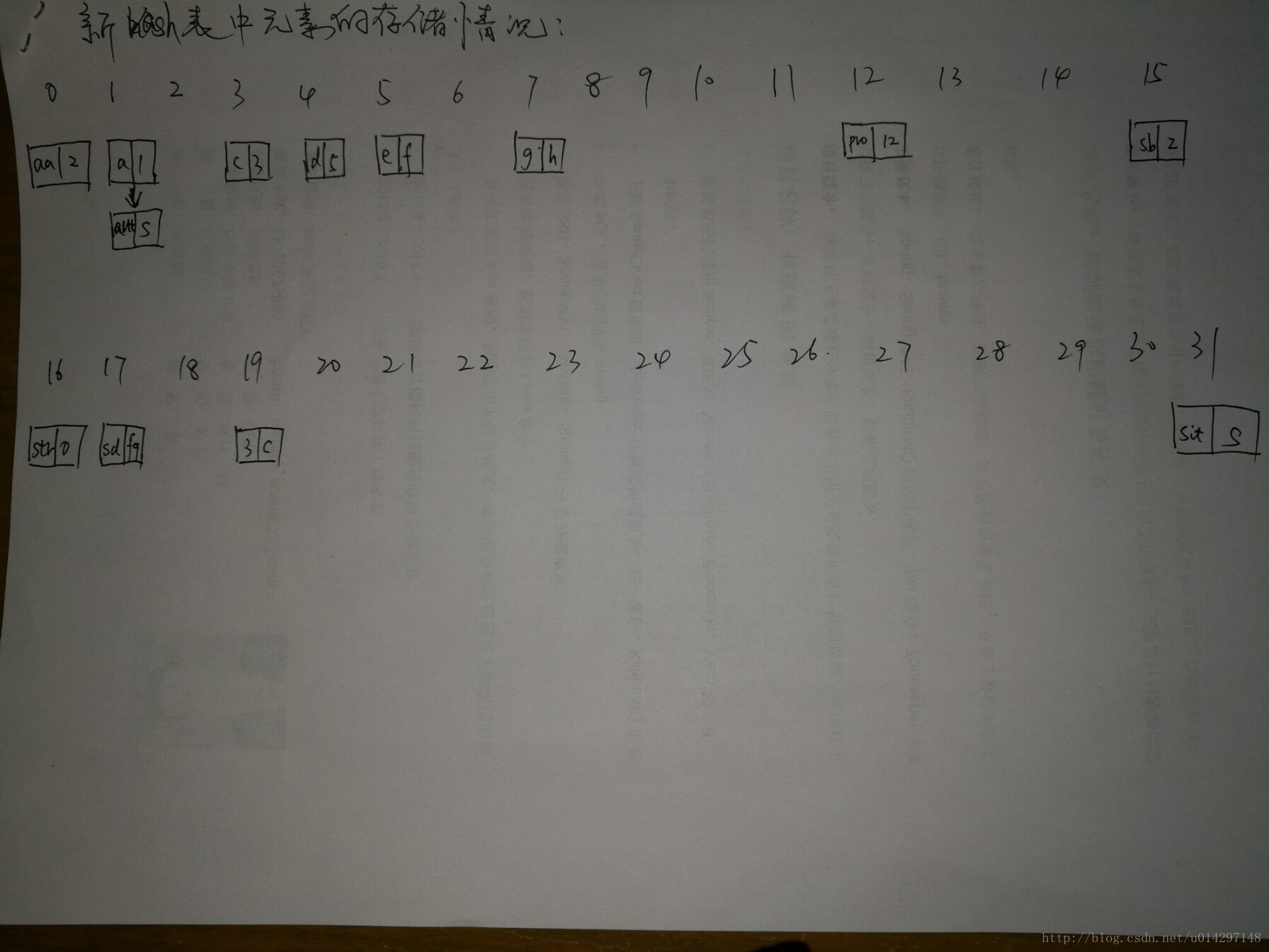

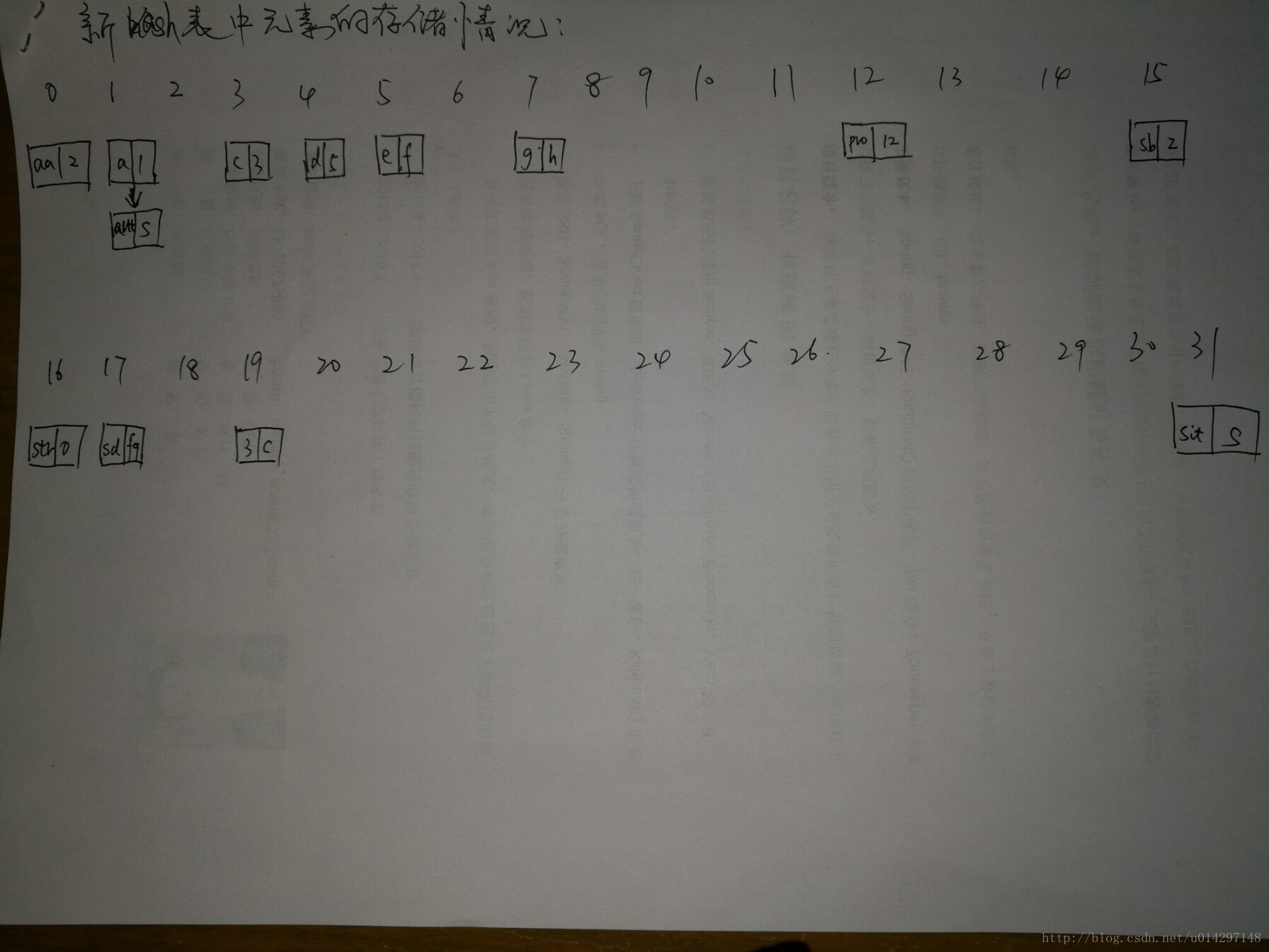

扩容后hash表中存储元素情况

2. 经过 if (p.hash == hash &&

((k = p.key) == key || (key != null && key.equals(k))))

e = p;与if (e != null) { // existing mapping for key

V oldValue = e.value;

if (!onlyIfAbsent || oldValue == null)

e.value = value;

afterNodeAccess(e);

return oldValue;

}这两步后,可以保证 hashMap中的元素 是不重复的

3. 当两个元素的key的hashCode相同的时候,在判断两个元素的key是否==,如果key相等则 新元素的value 覆盖老元素的value。如果不相等则在该位置下顺延,形成链表

4. 新定义的hashMap 的最大容量为16 阈值为16*0.75, 当实际容量>12时会自动扩容

jdk源码

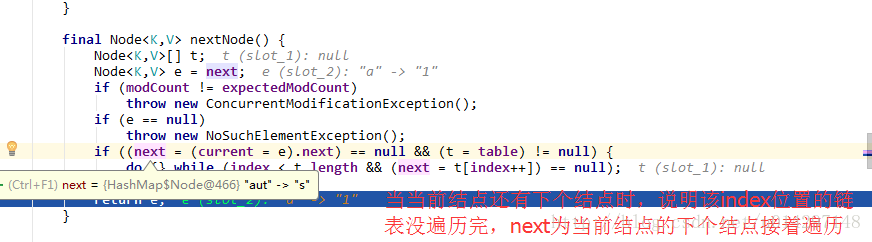

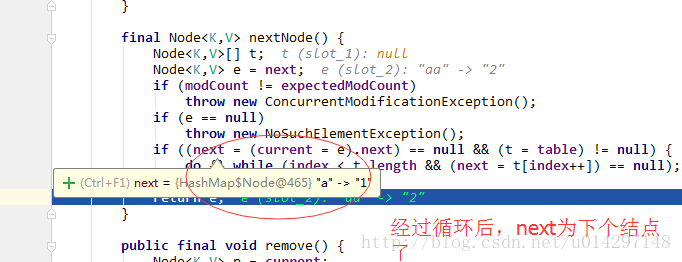

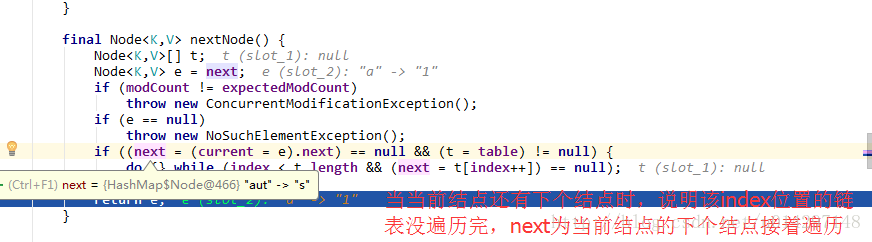

debug走一波遍历流程,详细可以看下图

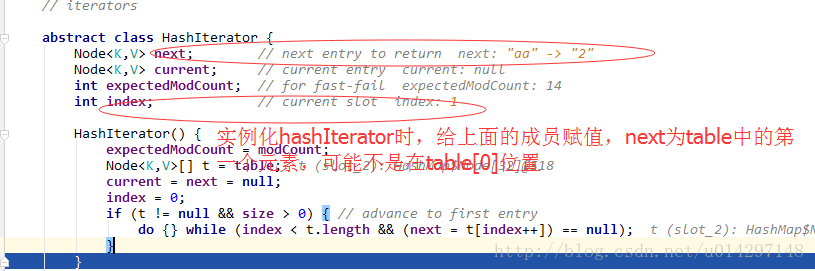

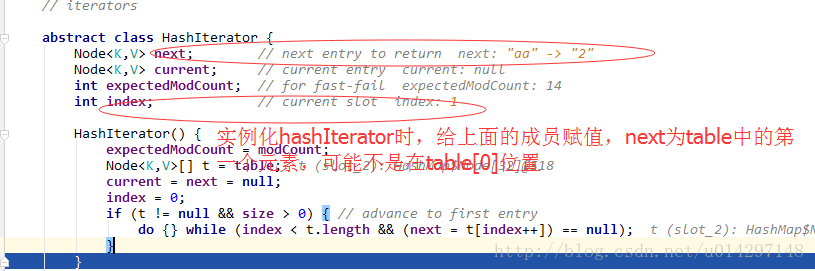

1.实例化HashIterator ,定位到第一个遍历的元素

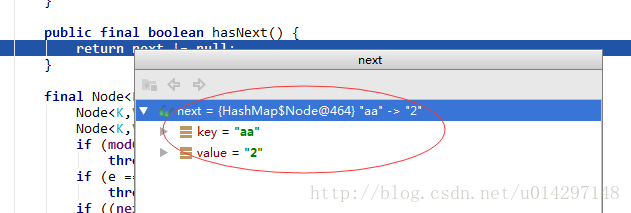

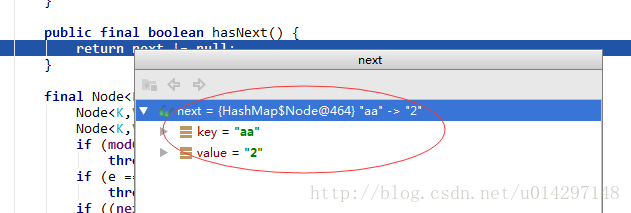

2.判断是否有下个node需要遍历

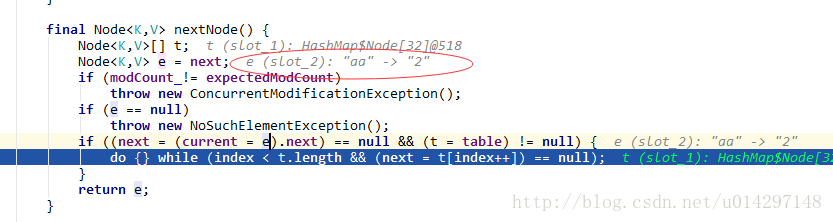

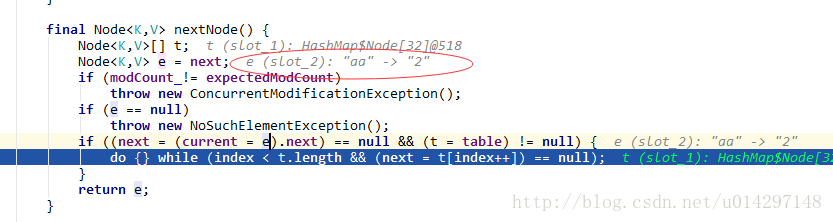

3.遍历下个node

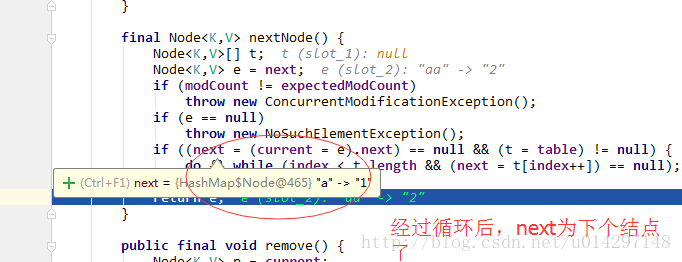

4.当table[index]不止一个结点时,要循环遍历完该位置的所有结点,然后再遍历数组中的其他元素

得到了该结点的key,在根据HashMap.get(key)取得对应得值,至此keySet遍历就走完了,总结一下keySet遍历就是将所有的key存放到一个set中,在通过set的iterator来遍历key集合—-》取得了key**——》**通调hashMap.get(key)取得对应得value

jdk中的源码

entrySet来遍历HashMap是取得所有结点的Set ,然后通过set集合自身的Iterator来遍历取得每个node,然后通过node的getKey()与getValue()方法取得每个结点的key与value;

jdk中的源码

1.HashMap的底层存储结构

HashMap的底层结构是数组元素为链表的动态数组实现的。

底层代码实现

1.1HashMap里几个比较重要的属性

1.默认初始化容量

static final int DEFAULT_INITIAL_CAPACITY = 1 << 4; // aka 16

2.默认最大容量

static final int MAXIMUM_CAPACITY = 1 << 30;

3.默认加载因子

static final float DEFAULT_LOAD_FACTOR = 0.75f;

4.散列桶中k-v的总数

transient int size;

5.重构的阈值(当散列桶中的元素达到该值时将自动扩容)

int threshold; //大小=capacity * load factor

6.链表的存储结构

static final Entry<?,?>[] EMPTY_TABLE = {};

7.散列桶(数组)

transient Entry<K,V>[] table = (Entry<K,V>[]) EMPTY_TABLE;

8.与当前对象关联并能减少hash碰撞概率的用于hash(key)的随机种子

transient int hashSeed = 0;1.2hash表中的底层链表实现

//链表的结点的代码实现

static class Node<K,V> implements Map.Entry<K,V> {

final int hash;

final K key;

V value;

Node<K,V> next;

Node(int hash, K key, V value, Node<K,V> next) {

this.hash = hash;

this.key = key;

this.value = value;

this.next = next;

}

public final K getKey() { return key; }

public final V getValue<

4000

/span>() { return value; }

public final String toString() { return key + "=" + value; }

public final int hashCode() {

return Objects.hashCode(key) ^ Objects.hashCode(value);

}

public final V setValue(V newValue) {

V oldValue = value;

value = newValue;

return oldValue;

}

public final boolean equals(Object o) {

if (o == this)

return true;

if (o instanceof Map.Entry) {

Map.Entry<?,?> e = (Map.Entry<?,?>)o;

if (Objects.equals(key, e.getKey()) &&

Objects.equals(value, e.getValue()))

return true;

}

return false;

}

}2.hashMap的初始过程

/**

* Constructs an empty <tt>HashMap</tt> with the default initial capacity

* (16) and the default load factor (0.75).

*/

public HashMap() {

this.loadFactor = DEFAULT_LOAD_FACTOR; // all other fields defaulted

}3.hashMap的存储原理

3.1 hashMap的jdk源码

/**

* Associates the specified value with the specified key in this map.

* If the map previously contained a mapping for the key, the old

* value is replaced.

*

* @param key key with which the specified value is to be associated

* @param value value to be associated with the specified key

* @return the previous value associated with <tt>key</tt>, or

* <tt>null</tt> if there was no mapping for <tt>key</tt>.

* (A <tt>null</tt> return can also indicate that the map

* previously associated <tt>null</tt> with <tt>key</tt>.)

*/

public V put(K key, V value) {

// 先计算key的hash值,然后再调存储方法

return putVal(hash(key), key, value, false, true);

}

/**

* Computes key.hashCode() and spreads (XORs) higher bits of hash

* to lower. Because the table uses power-of-two masking, sets of

* hashes that vary only in bits above the current mask will

* always collide. (Among known examples are sets of Float keys

* holding consecutive whole numbers in small tables.) So we

* apply a transform that spreads the impact of higher bits

* downward. There is a tradeoff between speed, utility, and

* quality of bit-spreading. Because many common sets of hashes

* are already reasonably distributed (so don't benefit from

* spreading), and because we use trees to handle large sets of

* collisions in bins, we just XOR some shifted bits in the

* cheapest possible way to reduce systematic lossage, as well as

* to incorporate impact of the highest bits that would otherwise

* never be used in index calculations because of table bounds.

*/

static final int hash(Object key) {

int h;

return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);

}

/**

* Returns a hash code for this string. The hash code for a

* {@code String} object is computed as

* <blockquote><pre>

* s[0]*31^(n-1) + s[1]*31^(n-2) + ... + s[n-1]

* </pre></blockquote>

* using {@code int} arithmetic, where {@code s[i]} is the

* <i>i</i>th character of the string, {@code n} is the length of

* the string, and {@code ^} indicates exponentiation.

* (The hash value of the empty string is zero.)

*

* @return a hash code value for this object.

*/

public int hashCode() { //计算key的hashCode

int h = hash;

if (h == 0 && value.length > 0) {

char val[] = value;

for (int i = 0; i < value.length; i++) {

h = 31 * h + val[i];

}

hash = h;

}

return h;

}

/**

* Implements Map.put and related methods

*

* @param hash hash for key

* @param key the key

* @param value the value to put

* @param onlyIfAbsent if true, don't change existing value

* @param evict if false, the table is in creation mode.

* @return previous value, or null if none

*/

final V putVal(int hash, K key, V value, boolean onlyIfAbsent,

boolean evict) {

/**

* tab:定义一个局部链表,

* p 定义一个结点

* n:hash表初始化时候的最大容量,

* i:表示hash表中的index

*/

Node<K,V>[] tab; Node<K,V> p; int n, i;

//判断table是否为空 并将给tab初始化

if ((tab = table) == null || (n = tab.length) == 0)

/**

* 如果为空则重散列 第一次 重散列会个体 tab初始化一个容量为16

* 阈值为12的hash表

*/

n = (tab = resize()).length;

/**

* 利用key的hashCode & (size-1)的结果做为数组的index,

* 然后判断该index是否有元素,如果没有这将新建一个结点来存储元素,

* 如果有则说明产生了 hash碰撞,需要走else分支

*/

if ((p = tab[i = (n - 1) & hash]) == null)

tab[i] = newNode(hash, key, value, null);

else {

//定义一个结点,

Node<K,V> e; K k;

/**

* 如果该index的元素的hashCode==要存储元素的hashCode

* 并且他们key值也是相同的,

* 则用新key的vaule 覆盖老key的value 如果key不相等

* 走else分支

*/

if (p.hash == hash &&

((k = p.key) == key || (key != null && key.equals(k))))

e = p;

else if (p instanceof TreeNode)

e = ((TreeNode<K,V>)p).putTreeVal(this, tab, hash, key, value);

else {

//要在该hashCode的链表中顺延,直到找新的位置,或者覆盖key与他相等的结点值

for (int binCount = 0; ; ++binCount) {

//如果该hashCode下的链表只有一个结点,则new 一个结点来存储该元素

if ((e = p.next) == null) {

p.next = newNode(hash, key, value, null);

if (binCount >= TREEIFY_THRESHOLD - 1) // -1 for 1st

treeifyBin(tab, hash);

break;

}

/**

* 如果该hashCode下的结点有下一个结点,

* 并且该结点的key与当前要存储的key相等,

* 则覆盖原来的值

*/

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k))))

break;

//如果key 也不等 则将该结点 赋值给p 进行上述的循环操作

p = e;

}

}

if (e != null) { // existing mapping for key

V oldValue = e.value;

if (!onlyIfAbsent || oldValue == null)

e.value = value;

afterNodeAccess(e);

return oldValue;

}

}

//记录该hash表的改变次数

++modCount;

/**

* 每存储一个元素实际容量 size+1,

* 我们默认初始化的hash表的最大容量是16,

* 但是为了追求内存利用率与查询等操作效率的平衡,

* 定义了一个阈值 threshold, 默认初始化则为12。

* 所以hash表的实际容量>阈值12是就进行重散列

*/

if (++size > threshold)

resize();

afterNodeInsertion(evict);

return null;

}

/**

* Initializes or doubles table size. If null, allocates in

* accord with initial capacity target held in field threshold.

* Otherwise, because we are using power-of-two expansion, the

* elements from each bin must either stay at same index, or move

* with a power of two offset in the new table.

*

* @return the table

*/

final Node<K,V>[] resize() {

//利用oldTab来接受原来的hash表

Node<K,V>[] oldTab = table;

//old hash表的容量

int oldCap = (oldTab == null) ? 0 : oldTab.length;

//old hash表的阈值

int oldThr = threshold;

//定义新的hash表的容量,新的阈值

int newCap, newThr = 0;

//如果是实际容量>阈值时,hash表自动进行扩容(重散列)

if (oldCap > 0) {

if (oldCap >= MAXIMUM_CAPACITY) {

/**

* 当前hash表的最大容量>=最大容量,

* 阈值就是Integer的最大值,

* hash表的最大容量已经到达了极限,只有扩大阈值了

*/

threshold = Integer.MAX_VALUE;

return oldTab;

}

//新hash表的最大容量=old的*2 如16*2=32

else if ((newCap = oldCap << 1) < MAXIMUM_CAPACITY &&

oldCap >= DEFAULT_INITIAL_CAPACITY)

//阈值=old的*2 如12*2=24

newThr = oldThr << 1; // double threshold

}

else if (oldThr > 0) // initial capacity was placed in threshold

newCap = oldThr;

else { // zero initial threshold signifies using defaults

/**

* 如果是新定义的hash表 则 给hash表进行初始化,

* 给默认的size 16 默认的阈值(12)=16*0.75

* 容量*加载因子

*/

newCap = DEFAULT_INITIAL_CAPACITY;

newThr = (int)(DEFAULT_LOAD_FACTOR * DEFAULT_INITIAL_CAPACITY);

}

if (newThr == 0) {

float ft = (float)newCap * loadFactor;

newThr = (newCap < MAXIMUM_CAPACITY && ft < (float)MAXIMUM_CAPACITY ?

(int)ft : Integer.MAX_VALUE);

}

threshold = newThr;

@SuppressWarnings({"rawtypes","unchecked"})

//据新的最大容量定义一个新的hash表

Node<K,V>[] newTab = (Node<K,V>[])new Node[newCap];

//将该新的hash表赋值给原来老的hash表

table = newTab;

/**

* 如果原来的hash表里面有元素,

* 则根据hash值和新的最大容量进行重散列,

* 将元素存储到新的hash表中

*/

if (oldTab != null) {

for (int j = 0; j < oldCap; ++j) {

Node<K,V> e;

//循环的对表中的每个元素进行重新存储

if ((e = oldTab[j]) != null) {

oldTab[j] = null;

/**

* 如果该index中只存储了一个结点,

* 如果是存储的一个链表则走else分支

*/

if (e.next == null)

//index=e.hash&(newCap-1)的位置存储该结点

newTab[e.hash & (newCap - 1)] = e;

else if (e instanceof TreeNode)

((TreeNode<K,V>)e).split(this, newTab, j, oldCap);

else { // preserve order

Node<K,V> loHead = null, loTail = null;

Node<K,V> hiHead = null, hiTail = null;

Node<K,V> next;

do {

next = e.next;

/**

* 利用该结点的hashCode&oldCap是否==0,

* 如果是相等就在新hash表的j存储该元素,

* 如果不相等则在新表的j+oldCap的位置存储该元素。

*/

if ((e.hash & oldCap) == 0) {

if (loTail == null)

loHead = e;

else

/**

* 如果是链表超过2个以上的结点,

* 则==0的结点跟着第一个==0的结点后面

*/

loTail.next = e;

loTail = e;

}

else {

if (hiTail == null)

hiHead = e;

else

hiTail.next = e;

hiTail = e;

}

} while ((e = next) != null);

if (loTail != null) {

//将该元素的下个结点置空

loTail.next = null;

//在新表中的原位置存储

newTab[j] = loHead;

}

if (hiTail != null) {

hiTail.next = null;

//在新表中的原位置+oldCap存储

newTab[j + oldCap] = hiHead;

}

}

}

}

}

return newTab;

}3.2测试hashMap存储元素的过程

/**

* @Description

* @Author xiaoqx <worldly_xuan@163.com>

* @Version V1.0.0

* @Since 1.0

* @Date 2018/1/28

*/

public class HashMapApi {

public static void main(String[]args){

HashMap<String, String> hsm = new HashMap<String, String>();

hsm.put("str","0");

hsm.put("a","1");

hsm.put("sb","2");

hsm.put("c","3");

hsm.put("d","5");

hsm.put("3","c");

hsm.put("sit","s");

hsm.put("aut","s");

hsm.put("pro","12");

hsm.put("e","f");

hsm.put("g","h");

hsm.put("sd","fg");

hsm.put("aa","2"); //12达到阈值

hsm.put("dff","2");

}

}当还没有达到阈值的时候,存储完12个元素的情况是

扩容过程

扩容后hash表中存储元素情况

4.hashMap的特点

1. 由于每个元素的key经过 tab[i = (n - 1) & hash] 来确定该元素在该hash表中的位置,所以第一个存储的元素并一定在 hash表中的第一个位置,所以hashMap是无序的。2. 经过 if (p.hash == hash &&

((k = p.key) == key || (key != null && key.equals(k))))

e = p;与if (e != null) { // existing mapping for key

V oldValue = e.value;

if (!onlyIfAbsent || oldValue == null)

e.value = value;

afterNodeAccess(e);

return oldValue;

}这两步后,可以保证 hashMap中的元素 是不重复的

3. 当两个元素的key的hashCode相同的时候,在判断两个元素的key是否==,如果key相等则 新元素的value 覆盖老元素的value。如果不相等则在该位置下顺延,形成链表

4. 新定义的hashMap 的最大容量为16 阈值为16*0.75, 当实际容量>12时会自动扩容

5.hashMap的遍历

5.1 通过keySet去遍历

//调用hashMap的keySet方法,得到所有key的set集合

Set<String> keySet = hsm.keySet();

//通过set集合遍历方法,迭代器iterator

Iterator<String> iterator = keySet.iterator();

//判断是否有下一个

while(iterator.hasNext()){

//取得当前key的值

String key = iterator.next();

//取得对应key的value

System.out.println(hsm.get(key));

}jdk源码

/**

* Returns a {@link Set} view of the keys contained in this map.

* The set is backed by the map, so changes to the map are

* reflected in the set, and vice-versa. If the map is modified

* while an iteration over the set is in progress (except through

* the iterator's own <tt>remove</tt> operation), the results of

* the iteration are undefined. The set supports element removal,

* which removes the corresponding mapping from the map, via the

* <tt>Iterator.remove</tt>, <tt>Set.remove</tt>,

* <tt>removeAll</tt>, <tt>retainAll</tt>, and <tt>clear</tt>

* operations. It does not support the <tt>add</tt> or <tt>addAll</tt>

* operations.

*

* @return a set view of the keys contained in this map

*/

public Set<K> keySet() {

Set<K> ks;

return (ks = keySet) == null ? (keySet = new KeySet()) : ks;

}

//内部类KeySet

final class KeySet extends AbstractSet<K> {

public final int size() { return size; }

public final void clear() { HashMap.this.clear(); }

/**

* 调iterator 方法 返回的是 键set集合的iterator

*/

public final Iterator<K> iterator() { return new KeyIterator(); }

public final boolean contains(Object o) { return containsKey(o); }

public final boolean remove(Object key) {

return removeNode(hash(key), key, null, false, true) != null;

}

public final Spliterator<K> spliterator() {

return new KeySpliterator<>(HashMap.this, 0, -1, 0, 0);

}

public final void forEach(Consumer<? super K> action) {

Node<K,V>[] tab;

if (action == null)

throw new NullPointerException();

if (size > 0 && (tab = table) != null) {

int mc = modCount;

for (int i = 0; i < tab.length; ++i) {

for (Node<K,V> e = tab[i]; e != null; e = e.next)

action.accept(e.key);

}

if (modCount != mc)

throw new ConcurrentModificationException();

}

}

}

/**

* 因为KeyIterator 父类是HashIterator 实例化时会先将父类实例化

* 而HashIterator 也是HashMap的一个内部类

*/

final class KeyIterator extends HashIterator

implements Iterator<K> {

public final K next() { return nextNode().key; }

}

abstract class HashIterator {

Node<K,V> next; // next entry to return

Node<K,V> current; // current entry

//hashMap预期改变次数

int expectedModCount; // for fast-fail

//hashMap底层数组中的index

int index; // current slot

HashIterator() {//HashIterator的实例化

//将table的变化次数赋值给HashIterator的预期次数

expectedModCount = modCount;

//利用t来接受table对象

Node<K,V>[] t = table;

current = next = null;

//table从0开始遍历

index = 0;

//这是将HashIterator对象 指向table[0]的结点

if (t != null && size > 0) { // advance to first entry

/**

* 下一个结点next =t[0] 即hash表中的第一个元素

* 此时 next !=null

*/

do {} while (index < t.length && (next = t[index++]) == null);

}

}

/**

* 当hsm调hashNext()方法时,由于KeyIterator没有该方法,

* 所以会调用父类HashIterator 的hasNext()方法

* 1.由于HashIterator实例化的时候,给next赋了值 所以返回true

* 2.当遍历第二个元素的时候nextNode() 也会给next 赋值

*/

public final boolean hasNext() {

return next != null;

}

/**

* 当while(true)的时候执行 keySet.next()方法,

* 此时就会执行 nextNode()方法,跟hashNext()同理 HashIterator的

* nextNode()方法执行

*/

final Node<K,V> nextNode() {

Node<K,V>[] t;

//将下个结点 赋值给结点e

Node<K,V> e = next;

if (modCount != expectedModCount)

throw new ConcurrentModificationException();

if (e == null)

throw new NoSuchElementException();

/**

* 将e赋值给当前结点,然后判断当前结点是否有下一个结点

* 如果有下个结点,则说明该table[index]下存的是超过两个结点链表

* 此时直接返回 当前结点 e 而 next =e.next

* 遍历完 table[index]的所有结点后,才会继续遍历table[]中的

* 其他元素

*/

if ((next = (current = e).next) == null && (t = table) != null) {

/**

* 如果当前结点没有下一个结点,继续遍历数组

* 给下个要遍历的结点赋值,中间可能有的index 没有元素,

* 所以需要循环遍历直到 table[index]!=null

*/

do {} while (index < t.length && (next = t[index++]) == null);

}

return e;

}

public final void remove() {

Node<K,V> p = current;

if (p == null)

throw new IllegalStateException();

if (modCount != expectedModCount)

throw new ConcurrentModificationException();

current = null;

K key = p.key;

removeNode(hash(key), key, null, false, false);

expectedModCount = modCount;

}

}debug走一波遍历流程,详细可以看下图

1.实例化HashIterator ,定位到第一个遍历的元素

2.判断是否有下个node需要遍历

3.遍历下个node

4.当table[index]不止一个结点时,要循环遍历完该位置的所有结点,然后再遍历数组中的其他元素

得到了该结点的key,在根据HashMap.get(key)取得对应得值,至此keySet遍历就走完了,总结一下keySet遍历就是将所有的key存放到一个set中,在通过set的iterator来遍历key集合—-》取得了key**——》**通调hashMap.get(key)取得对应得value

5.2 通过entrySet去遍历

entrySet的代码//调用hashMap的entrySet方法,得到entrySet集合

Set<Map.Entry<String, String>> entrySet = hsm.entrySet();

//通过set集合遍历方法取得迭代器iterator

Iterator<Map.Entry<String, String>> iterator1 = entrySet.iterator();

while(iterator1.hasNext()){

//遍历取得结点

Map.Entry<String, String> next = iterator1.next();

//通过node的getKey 与getValue 取得相应的key与value

System.out.println(next.getKey());

System.out.println(next.getValue());

}jdk中的源码

/**

* Returns a {@link Set} view of the mappings contained in this map.

* The set is backed by the map, so changes to the map are

* reflected in the set, and vice-versa. If the map is modified

* while an iteration over the set is in progress (except through

* the iterator's own <tt>remove</tt> operation, or through the

* <tt>setValue</tt> operation on a map entry returned by the

* iterator) the results of the iteration are undefined. The set

* supports element removal, which removes the corresponding

* mapping from the map, via the <tt>Iterator.remove</tt>,

* <tt>Set.remove</tt>, <tt>removeAll</tt>, <tt>retainAll</tt> and

* <tt>clear</tt> operations. It does not support the

* <tt>add</tt> or <tt>addAll</tt> operations.

*

* @return a set view of the mappings contained in this map

*/

public Set<Map.Entry<K,V>> entrySet() {

Set<Map.Entry<K,V>> es;

return (es = entrySet) == null ? (entrySet = new EntrySet()) : es;

}

//内部类EntrySet

final class EntrySet extends AbstractSet<Map.Entry<K,V>> {

public final int size() { return size; }

public final void clear() { HashMap.this.clear(); }

//返回 EntryIterator对象

public final Iterator<Map.Entry<K,V>> iterator() {

return new EntryIterator();

}

public final boolean contains(Object o) {

if (!(o instanceof Map.Entry))

return false;

Map.Entry<?,?> e = (Map.Entry<?,?>) o;

Object key = e.getKey();

Node<K,V> candidate = getNode(hash(key), key);

return candidate != null && candidate.equals(e);

}

public final boolean remove(Object o) {

if (o instanceof Map.Entry) {

Map.Entry<?,?> e = (Map.Entry<?,?>) o;

Object key = e.getKey();

Object value = e.getValue();

return removeNode(hash(key), key, value, true, true) != null;

}

return false;

}

public final Spliterator<Map.Entry<K,V>> spliterator() {

return new EntrySpliterator<>(HashMap.this, 0, -1, 0, 0);

}

public final void forEach(Consumer<? super Map.Entry<K,V>> action) {

Node<K,V>[] tab;

if (action == null)

throw new NullPointerException();

if (size > 0 && (tab = table) != null) {

int mc = modCount;

for (int i = 0; i < tab.length; ++i) {

for (Node<K,V> e = tab[i]; e != null; e = e.next)

action.accept(e);

}

if (modCount != mc)

throw new ConcurrentModificationException();

}

}

}

/**

* EntryIterator 父类也是HashIterator

* 走的流程都是一样的

*/

final class EntryIterator extends HashIterator

implements Iterator<Map.Entry<K,V>> {

public final Map.Entry<K,V> next() { return nextNode(); }

}entrySet来遍历HashMap是取得所有结点的Set ,然后通过set集合自身的Iterator来遍历取得每个node,然后通过node的getKey()与getValue()方法取得每个结点的key与value;

5.3 通过values来遍历,只能取到value。

直接遍历value的结合来取得valueCollection<String> values = hsm.values();

Iterator<String> iterator2 = values.iterator();

while(iterator2.hasNext()){

System.out.println(iterator2.next());

}jdk中的源码

/**

* Returns a {@link Collection} view of the values contained in this map.

* The collection is backed by the map, so changes to the map are

* reflected in the collection, and vice-versa. If the map is

* modified while an iteration over the collection is in progress

* (except through the iterator's own <tt>remove</tt> operation),

* the results of the iteration are undefined. The collection

* supports element removal, which removes the corresponding

* mapping from the map, via the <tt>Iterator.remove</tt>,

* <tt>Collection.remove</tt>, <tt>removeAll</tt>,

* <tt>retainAll</tt> and <tt>clear</tt> operations. It does not

* support the <tt>add</tt> or <tt>addAll</tt> operations.

*

* @return a view of the values contained in this map

*/

public Collection<V> values() {

Collection<V> vs;

return (vs = values) == null ? (values = new Values()) : vs;

}

final class Values extends AbstractCollection<V> {

public final int size() { return size; }

public final void clear() { HashMap.this.clear(); }

public final Iterator<V> iterator() { return new ValueIterator(); }

public final boolean contains(Object o) { return containsValue(o); }

public final Spliterator<V> spliterator() {

return new ValueSpliterator<>(HashMap.this, 0, -1, 0, 0);

}

public final void forEach(Consumer<? super V> action) {

Node<K,V>[] tab;

if (action == null)

throw new NullPointerException();

if (size > 0 && (tab = table) != null) {

int mc = modCount;

for (int i = 0; i < tab.length; ++i) {

for (Node<K,V> e = tab[i]; e != null; e = e.next)

action.accept(e.value);

}

if (modCount != mc)

throw new ConcurrentModificationException();

}

}

}

/**

* valueIterator 的父类也是HashIterator

* 所以套路都是一样的

*/

final class ValueIterator extends HashIterator

implements Iterator<V> {

public final V next() { return nextNode().value; }

}

相关文章推荐

- Java中HashMap底层实现原理(JDK1.8)源码分析

- Java中HashMap底层实现原理(JDK1.8)源码分析

- Java中HashMap底层实现原理(JDK1.8)源码分析

- Java中HashMap底层实现原理(JDK1.8)源码分析

- Java中HashMap底层实现原理(JDK1.8)源码分析

- (转载)Java中HashMap底层实现原理(JDK1.8)源码分析

- Java中HashMap底层实现原理(JDK1.8)源码分析

- Java面试绕不开的问题: Java中HashMap底层实现原理(JDK1.8)源码分析

- Java中HashMap底层实现原理(JDK1.8)源码分析

- HashMap的底层原理及源码分析

- HashMap实现原理及源码分析

- HashMap实现原理及源码分析

- HashMap原理 源码分析

- HashMap实现原理及源码分析

- HashMap实现原理及源码分析

- HashMap的源码,实现原理,底层结构

- Mybatis底层原理学习(二):从源码角度分析一次查询操作过程

- HashMap实现原理及源码分析

- Android底层原理之从Service_manager源码分析Android进程间通信过程

- HashMap底层实现(源码分析)