Using Python 3 to analyze Ajax requests and scrape street-snap photos from Toutiao

2017-03-25 20:11


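Some websites return their data as JSON through asynchronous Ajax requests. In that case, the approach used for scraping static pages cannot retrieve the information we want. For example, suppose we want to scrape street-snap photos from Toutiao (今日头条): open http://www.toutiao.com/ and search for the keyword “街拍”.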

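After clicking search, we find that the page is populated through Ajax responses: when we scroll to the bottom, more results are loaded asynchronously.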

Pressing F12 opens the developer tools, where the parameters sent with the Ajax request can be inspected.
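As a rough illustration of how such parameters end up in the request URL, the sketch below encodes a parameter dictionary into a query string with `urllib.parse.urlencode`. The parameter names and the endpoint are the ones used in the script later in this post (the endpoint may no longer be live); the helper name `build_search_url` is made up for this example, and no network request is sent.

```python
from urllib.parse import urlencode, urlsplit, parse_qs

# Endpoint taken from the script in this post; it may have changed since.
BASE_URL = 'http://www.toutiao.com/search_content/'


def build_search_url(offset, keyword):
    """Build the Ajax search URL for one page of results (hypothetical helper)."""
    params = {
        'offset': offset,
        'format': 'json',
        'keyword': keyword,
        'autoload': 'true',
        'count': '20',
        'cur_tab': 3,
    }
    # urlencode percent-encodes the non-ASCII keyword as UTF-8.
    return BASE_URL + '?' + urlencode(params)


url = build_search_url(20, '街拍')
# Decode the query string again to confirm the parameters survive the round trip.
query = parse_qs(urlsplit(url).query)
print(url)
print(query['keyword'][0])
```

Comparing the printed URL with the one shown in the browser's network panel is a quick way to check that no parameter was missed.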

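Then, by combining the request URL with an analysis of the relationships between the HTML nodes, the following code scrapes the street-snap galleries, returns each gallery's title, URL, and the set of its sub-image URLs, and saves every sub-image of every gallery to the project root directory: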
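The detail-page step can be tried offline before running the full crawler. The sketch below applies the same `var gallery = ...;` regular expression that the script uses to a made-up HTML snippet; `SAMPLE_HTML`, `extract_gallery`, and the example.com URLs are assumptions for illustration, and the title is read with a regular expression instead of BeautifulSoup only to keep the example within the standard library.

```python
import json
import re

# Made-up detail page; it imitates a page that embeds the gallery as a
# JavaScript variable, which is what the script's regex expects.
SAMPLE_HTML = '''
<html><head><title>Sample gallery</title></head>
<body><script>
var gallery = {"sub_images": [{"url": "http://example.com/a.jpg"},
                              {"url": "http://example.com/b.jpg"}]};
</script></body></html>
'''

GALLERY_PATTERN = re.compile(r'var gallery = (.*?);', re.S)
TITLE_PATTERN = re.compile(r'<title>(.*?)</title>', re.S)


def extract_gallery(html, url):
    """Return title, page URL and image URLs, or None if no gallery is found."""
    gallery_match = GALLERY_PATTERN.search(html)
    if not gallery_match:
        return None
    data = json.loads(gallery_match.group(1))
    title_match = TITLE_PATTERN.search(html)
    return {
        'title': title_match.group(1) if title_match else '',
        'url': url,
        'images': [image['url'] for image in data.get('sub_images', [])],
    }


result = extract_gallery(SAMPLE_HTML, 'http://example.com/detail')
print(result)
```

Because the pattern is non-greedy, it stops at the first semicolon, so it only works when the embedded JSON itself contains no `;` character.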


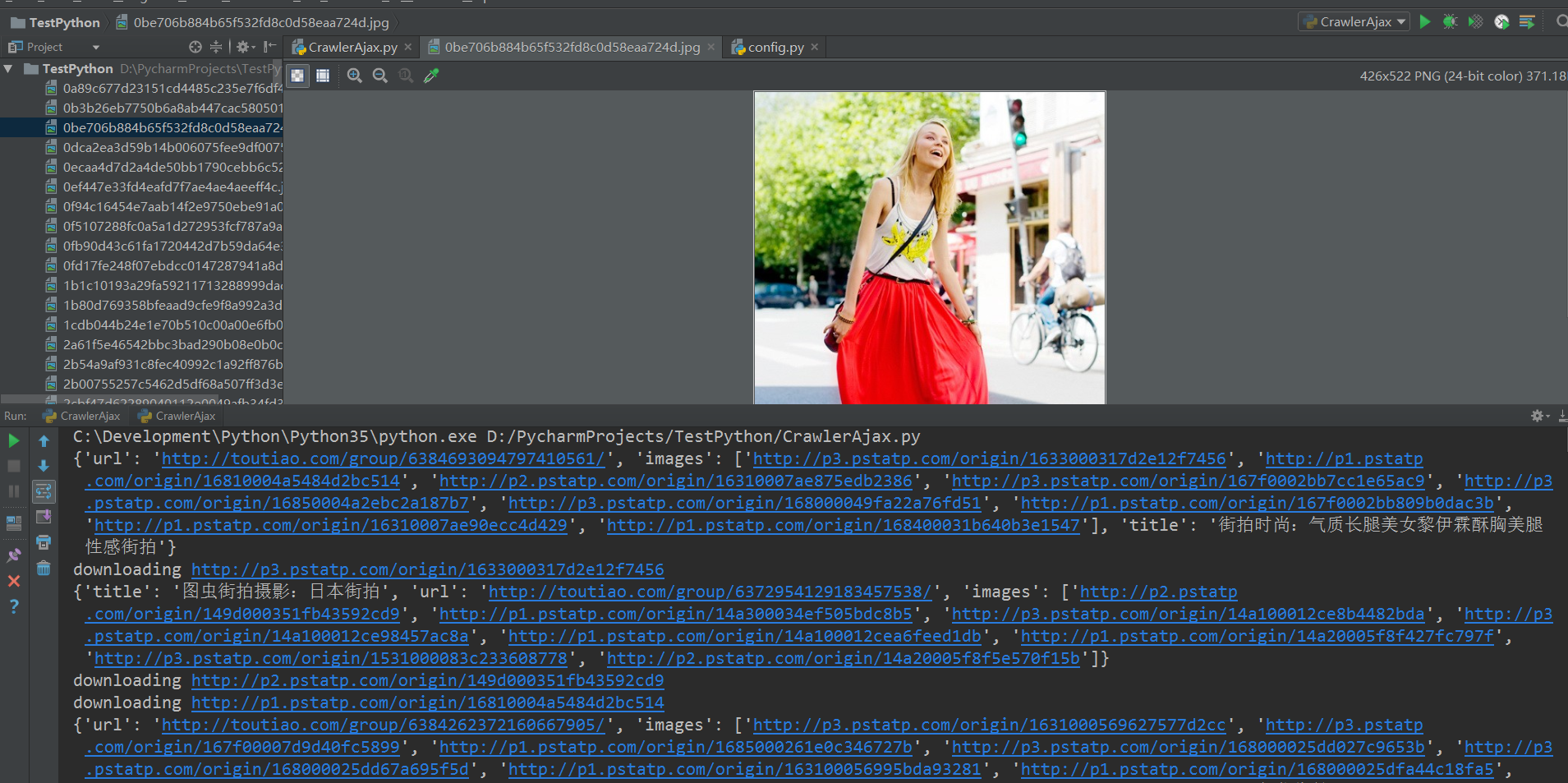

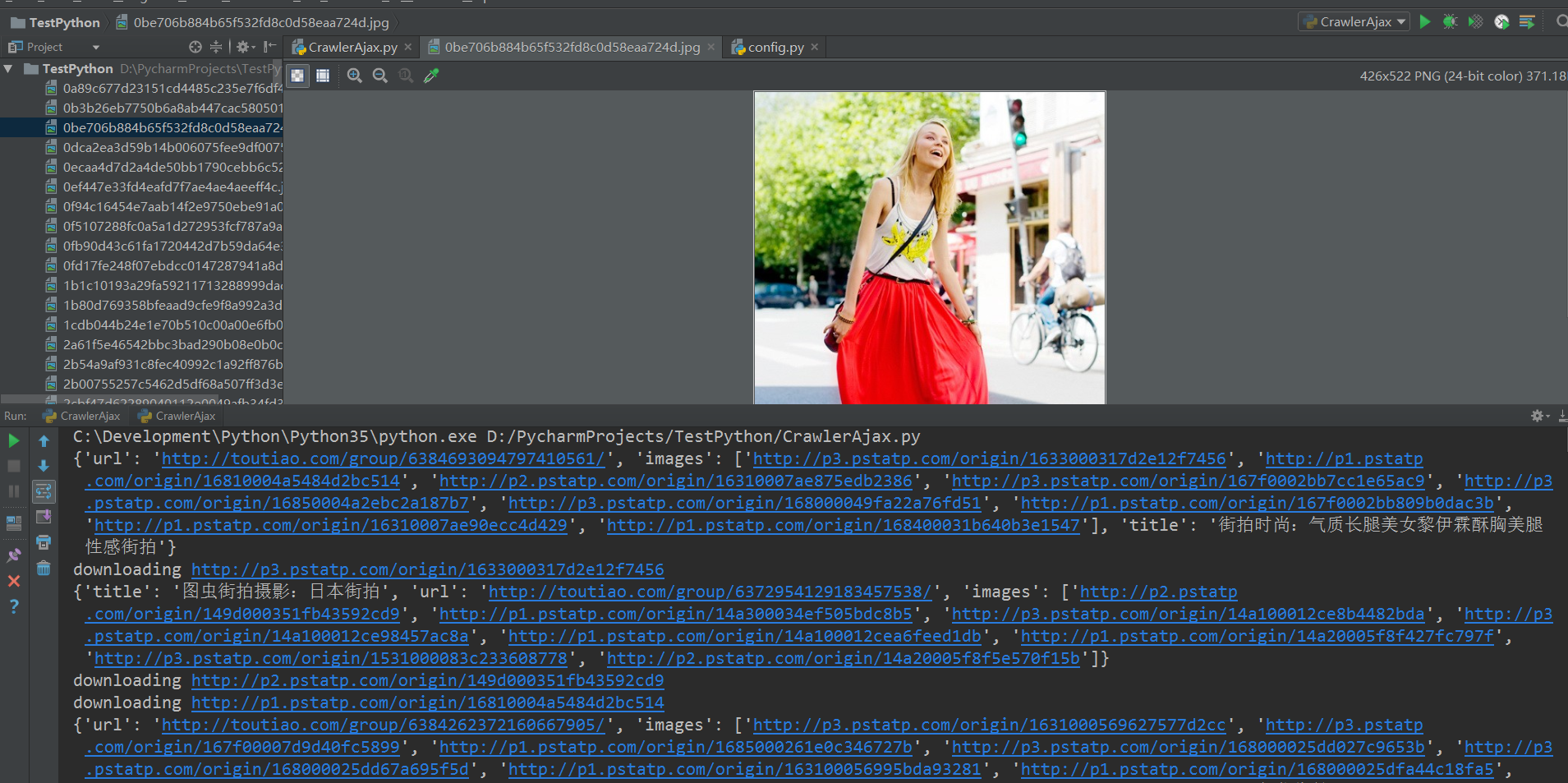


config.py

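    # The offset parameter takes the values GROUP_START*20 through GROUP_END*20,
    # so the results in the range [GROUP_START*20, (GROUP_END+1)*20] are covered
    GROUP_START = 1
    GROUP_END = 5
    # Search keyword; change as needed
    KEYWORD = '街拍'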
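To make the effect of these two settings concrete, the short sketch below computes the offsets that the crawler will request, using the same list comprehension as the main script. `PAGE_SIZE` is a name introduced here for readability; it corresponds to the `count` value of 20 in the request parameters.

```python
GROUP_START = 1
GROUP_END = 5
PAGE_SIZE = 20  # assumed to match the 'count' request parameter

# One offset per request; each request returns up to PAGE_SIZE results.
offsets = [x * PAGE_SIZE for x in range(GROUP_START, GROUP_END + 1)]
print(offsets)  # [20, 40, 60, 80, 100]
```

With the defaults, five index pages are fetched, one per offset.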

CrawlerAjax.py

    import json
    import os
    import re
    from hashlib import md5
    from multiprocessing import Pool
    from urllib.parse import urlencode

    import requests
    from bs4 import BeautifulSoup
    from requests.exceptions import RequestException

    from config import GROUP_START, GROUP_END, KEYWORD


    # Fetch one page of the search index through the Ajax endpoint
    def get_index_page(offset, keyword):
        # Ajax request parameters
        data = {
            'offset': offset,
            'format': 'json',
            'keyword': keyword,
            'autoload': 'true',
            'count': '20',
            'cur_tab': 3
        }
        params = urlencode(data)
        base = 'http://www.toutiao.com/search_content/'
        url = base + '?' + params
        try:
            response = requests.get(url)
            if response.status_code == 200:
                return response.text
            return None
        except RequestException:
            print('Failed to request index page', url)
            return None


    # Parse the index page and yield the URL of each article
    def parse_index_page(html):
        # get_index_page returns None on failure, so there is nothing to parse
        if not html:
            return
        data = json.loads(html)
        if data and 'data' in data:
            for item in data.get('data') or []:
                yield item.get('article_url')


    # Fetch a detail (gallery) page
    def get_detail_page(url):
        try:
            response = requests.get(url)
            if response.status_code == 200:
                return response.text
            return None
        except RequestException:
            print('Failed to request detail page', url)
            return None


    # Parse a detail page: extract the title and the gallery image URLs
    def parse_detail_page(html, url):
        # get_detail_page returns None on failure
        if not html:
            return None
        soup = BeautifulSoup(html, 'html.parser')
        title_tags = soup.select('title')
        title = title_tags[0].get_text() if title_tags else ''
        # The gallery data is embedded in the page as a JavaScript variable
        images_pattern = re.compile('var gallery = (.*?);', re.S)
        result = re.search(images_pattern, html)
        if result:
            data = json.loads(result.group(1))
            if data and 'sub_images' in data:
                sub_images = data.get('sub_images')
                images = [image.get('url') for image in sub_images]
                res = {
                    'title': title,
                    'url': url,
                    'images': images
                }
                print(res)
                for image in images:
                    download_image(image)
                return res
        return None


    # Download one image
    def download_image(url):
        print('downloading', url)
        try:
            response = requests.get(url)
            if response.status_code == 200:
                # content vs. text: content returns the raw bytes of the response
                save_image(response.content)
        except RequestException:
            print('Failed to request image', url)


    # Save an image, named by the MD5 of its content so duplicates are written once
    def save_image(content):
        file_path = '{0}/{1}.{2}'.format(os.getcwd(), md5(content).hexdigest(), 'jpg')
        # os.path.exists is the public API; os._exists checks something else
        if not os.path.exists(file_path):
            # The with block closes the file, so no explicit close() is needed
            with open(file_path, 'wb') as f:
                f.write(content)


    def main(offset):
        html = get_index_page(offset, KEYWORD)
        for url in parse_index_page(html):
            html = get_detail_page(url)
            parse_detail_page(html, url)


    # To crawl a single index page without the pool, call main(20) instead.

    # Start a process pool (multiprocessing.Pool uses processes, not threads)
    if __name__ == '__main__':
        pool = Pool()
        group = [x * 20 for x in range(GROUP_START, GROUP_END + 1)]
        pool.map(main, group)
        pool.close()
        pool.join()

Run results:
