Android Audio/Video Study, Chapter 2: Decoding Audio with ffmpeg

2017-01-20 13:20


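The overall routine is exactly the same as for video decoding in the previous chapter; the fiddly part is configuring the audio parameters.

Audio needs its resampling parameters set up here.

To play the audio, the C code calls back into Java.

In my Java layer I initialize an AudioTrack object.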

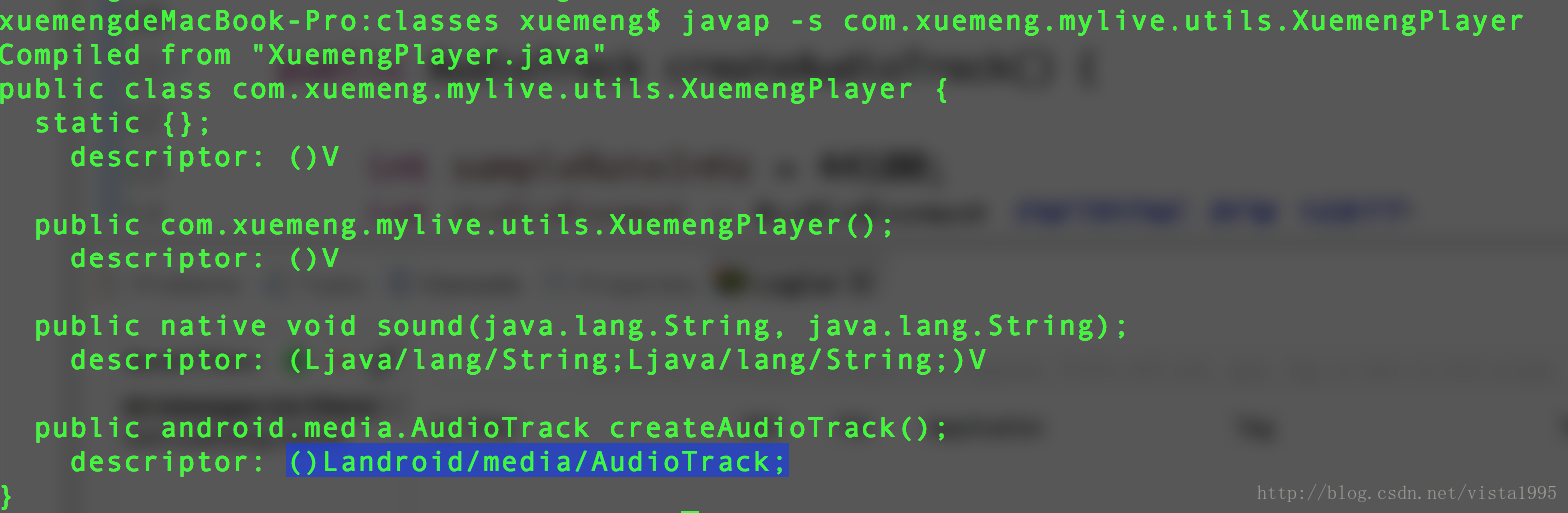

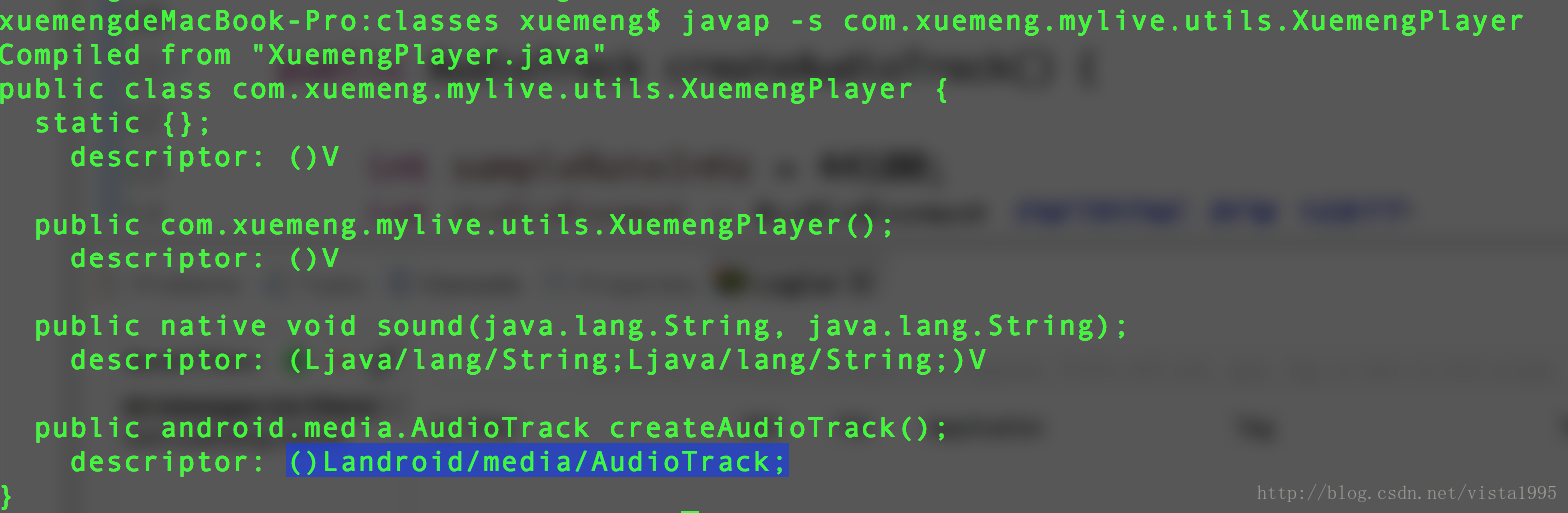

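That method lives in the com.xuemeng.mylive.utils.XuemengPlayer class. To obtain its signature, go into the project's class directory and run the javap command (for example, javap -s prints the type signatures).

Audio is decoded with the avcodec_decode_audio4 function.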

#include "com_xuemeng_mylive_utils_XuemengPlayer.h"
#include <stdlib.h>
#include <stdio.h>
#include <string.h>
#include <unistd.h>
#include <android/log.h>

// Logging macros (no trailing semicolon, so each call behaves like an ordinary statement)
#define LOGI(FORMAT,...) __android_log_print(ANDROID_LOG_INFO,"xuemeng",FORMAT,##__VA_ARGS__)
#define LOGE(FORMAT,...) __android_log_print(ANDROID_LOG_ERROR,"xuemeng",FORMAT,##__VA_ARGS__)

#include "libyuv.h"
// Container format (demuxing)
#include "libavformat/avformat.h"
// Decoding
#include "libavcodec/avcodec.h"
// Scaling
#include "libswscale/swscale.h"
// Resampling
#include "libswresample/swresample.h"

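JNIEXPORT void JNICALL Java_com_xuemeng_mylive_utils_XuemengPlayer_sound(JNIEnv *env, jobject jthiz, jstring input_jstr,
        jstring output_jstr) {
    const char* input_cstr = (*env)->GetStringUTFChars(env, input_jstr, NULL);
    const char* output_cstr = (*env)->GetStringUTFChars(env, output_jstr, NULL);
    // Note: the early returns below skip cleanup (including ReleaseStringUTFChars) to keep the example short

    // 1. Register all components
    av_register_all();
    // Container format context
    AVFormatContext *pFormatCtx = avformat_alloc_context();
    // 2. Open the input audio file
    if (avformat_open_input(&pFormatCtx, input_cstr, NULL, NULL) != 0) {
        LOGE("%s", "Failed to open the input audio file");
        return;
    }
    // 3. Read the stream information
    if (avformat_find_stream_info(pFormatCtx, NULL) < 0) {
        LOGE("%s", "Failed to read the audio stream information");
        return;
    }
    // To decode audio, find the index of the audio AVStream within pFormatCtx->streams
    int audio_stream_idx = -1;
    int i = 0;
    for (; i < pFormatCtx->nb_streams; i++) {
        // Check the codec type to decide whether this is an audio stream
        if (pFormatCtx->streams[i]->codec->codec_type == AVMEDIA_TYPE_AUDIO) {
            audio_stream_idx = i;
            break;
        }
    }
    // Without this check, streams[-1] would be read below when the file has no audio
    if (audio_stream_idx == -1) {
        LOGE("%s", "No audio stream found");
        return;
    }
    // 4. Get the decoder
    // Use the index to get the stream, and the stream to get the codec context
    AVCodecContext *pCodeCtx = pFormatCtx->streams[audio_stream_idx]->codec;
    // Take the codec id from the context and look up the decoder with it
    AVCodec *pCodec = avcodec_find_decoder(pCodeCtx->codec_id);
    if (pCodec == NULL) {
        LOGE("%s", "No decoder found for this stream");
        return;
    }
    // 5. Open the decoder
    if (avcodec_open2(pCodeCtx, pCodec, NULL) < 0) {
        LOGE("%s", "Failed to open the decoder");
        return;
    }
    // Compressed (encoded) data
    AVPacket *packet = av_malloc(sizeof(AVPacket));
    // Decompressed (decoded) data
    AVFrame *frame = av_frame_alloc();

Audio needs its resampling parameters set up here.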

    // frame -> 16-bit 44100 Hz PCM: unify the audio sample format and sample rate
    SwrContext *swrCtx = swr_alloc();
    // Resampling options ---------- start
    // Input sample format
    enum AVSampleFormat in_sample_fmt = pCodeCtx->sample_fmt;
    // Output sample format: 16-bit PCM
    enum AVSampleFormat out_sample_fmt = AV_SAMPLE_FMT_S16;
    // Input sample rate
    int in_sample_rate = pCodeCtx->sample_rate;
    // Output sample rate
    int out_sample_rate = 44100;
    // Input channel layout
    uint64_t in_ch_layout = pCodeCtx->channel_layout;
    // Some streams leave channel_layout unset (0); fall back to the default layout for the channel count
    if (in_ch_layout == 0) {
        in_ch_layout = av_get_default_channel_layout(pCodeCtx->channels);
    }
    // Output channel layout
    uint64_t out_ch_layout = AV_CH_LAYOUT_STEREO;
    swr_alloc_set_opts(swrCtx, out_ch_layout, out_sample_fmt, out_sample_rate,
            in_ch_layout, in_sample_fmt, in_sample_rate, 0, NULL);
    swr_init(swrCtx);
    // Resampling options ---------- end
    // Number of output channels
    int out_channel_nb = av_get_channel_layout_nb_channels(out_ch_layout);
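The resampling setup above fixes the output at 16-bit stereo PCM at 44100 Hz, which also determines how many samples and bytes each converted frame occupies. The following is a minimal sketch of that arithmetic in plain C. Both helper names are hypothetical and are not part of FFmpeg; FFmpeg itself provides av_rescale_rnd and av_samples_get_buffer_size for the same purpose.

```c
#include <stdint.h>

/* Hypothetical helper (not an FFmpeg function): upper bound on the number of
 * output samples per channel after converting in_samples from in_rate to
 * out_rate. Uses integer ceiling division. */
int64_t resampled_sample_count(int64_t in_samples, int in_rate, int out_rate) {
    return (in_samples * out_rate + in_rate - 1) / in_rate;
}

/* Hypothetical helper (not an FFmpeg function): bytes needed to hold
 * interleaved signed 16-bit PCM for the given samples per channel. */
int64_t pcm_s16_buffer_size(int64_t samples_per_channel, int channels) {
    return samples_per_channel * channels * (int64_t) sizeof(int16_t);
}
```

For example, a 1024-sample frame from a 48000 Hz source becomes at most 941 samples per channel at 44100 Hz, which is 3764 bytes of stereo 16-bit PCM. A buffer of 2 * 44100 bytes therefore holds 22050 stereo samples per channel, not 88200.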

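To play the audio, the C code calls back into Java.

In my Java layer I initialize an AudioTrack object.

That method lives in the com.xuemeng.mylive.utils.XuemengPlayer class. To obtain its signature, go into the project's class directory and run the javap command (for example, javap -s prints the type signatures).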

// Java layer code
public AudioTrack createAudioTrack() {
    int sampleRateInHz = 44100;
    int audioFormat = AudioFormat.ENCODING_PCM_16BIT;
    int channelConfig = android.media.AudioFormat.CHANNEL_OUT_STEREO;
    int bufferSizeInBytes = AudioTrack.getMinBufferSize(sampleRateInHz,
            channelConfig, audioFormat);
    AudioTrack audioTrack = new AudioTrack(AudioManager.STREAM_MUSIC,
            sampleRateInHz, channelConfig, audioFormat, bufferSizeInBytes,
            AudioTrack.MODE_STREAM);
    // audioTrack.write(audioData, offsetInBytes, sizeInBytes);
    return audioTrack;
}

    // JNI calls ---------- start
    // The XuemengPlayer class
    jclass player_class = (*env)->GetObjectClass(env, jthiz);
    // Create the AudioTrack object by calling createAudioTrack() on the Java side
    jmethodID create_audio_track_mid = (*env)->GetMethodID(env, player_class, "createAudioTrack",
            "()Landroid/media/AudioTrack;");
    jobject audio_track = (*env)->CallObjectMethod(env, jthiz, create_audio_track_mid);
    // Call AudioTrack.play()
    jclass audio_track_class = (*env)->GetObjectClass(env, audio_track);
    jmethodID audio_track_play_mid = (*env)->GetMethodID(env, audio_track_class, "play", "()V");
    (*env)->CallVoidMethod(env, audio_track, audio_track_play_mid);
    // Look up AudioTrack.write(byte[], int, int)
    jmethodID audio_track_write_mid = (*env)->GetMethodID(env, audio_track_class, "write", "([BII)I");
    // JNI calls ---------- end
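The strings passed to GetMethodID, such as "([BII)I", are JNI method descriptors: parameter types inside the parentheses, return type after them, with [ marking an array, single letters for primitives, and L...; for object types. To make that grammar concrete, here is a small plain-C sketch that counts the parameters in a descriptor. The function name is hypothetical and is not part of the JNI API.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical helper (not a JNI function): returns the number of parameters
 * in a JNI method descriptor, or -1 if the descriptor is malformed. */
int jni_descriptor_param_count(const char *sig) {
    if (sig == NULL || *sig != '(') {
        return -1;
    }
    const char *p = sig + 1;
    int count = 0;
    while (*p != '\0' && *p != ')') {
        /* Array dimensions are written as leading '[' characters. */
        while (*p == '[') {
            p++;
        }
        if (*p == 'L') {
            /* Object type: L<fully/qualified/ClassName>; */
            while (*p != '\0' && *p != ';') {
                p++;
            }
            if (*p != ';') {
                return -1;
            }
            p++;
        } else if (*p != '\0' && strchr("ZBCSIJFD", *p) != NULL) {
            /* Primitive type: boolean, byte, char, short, int, long, float, double. */
            p++;
        } else {
            return -1;
        }
        count++;
    }
    return (*p == ')') ? count : -1;
}
```

With this, "([BII)I" yields 3 (a byte array and two ints, returning int), while "()V" and "()Landroid/media/AudioTrack;" both yield 0.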

Audio is decoded with the avcodec_decode_audio4 function.

    // Buffer that receives the converted PCM data
    const int out_buffer_bytes = 2 * 44100;
    uint8_t *out_buffer = (uint8_t *) av_malloc(out_buffer_bytes);
    // swr_convert measures output space in samples per channel, not in bytes
    const int max_out_samples = out_buffer_bytes / (out_channel_nb * av_get_bytes_per_sample(out_sample_fmt));
    //FILE *fp_pcm = fopen(output_cstr, "wb");
    int ret, got_frame, framecount = 0;
    // 6. Read the compressed audio data one AVPacket at a time
    while (av_read_frame(pFormatCtx, packet) >= 0) {
        if (packet->stream_index == audio_stream_idx) {
            // Decode AVPacket -> AVFrame
            ret = avcodec_decode_audio4(pCodeCtx, frame, &got_frame, packet);
            if (ret < 0) {
                // A negative return value is a decoding error, not end of stream
                LOGE("%s", "Error while decoding an audio packet");
            }
            // Non-zero got_frame means a frame was decoded
            if (ret >= 0 && got_frame) {
                LOGI("Decoded frame %d", framecount++);
                // Returns the number of samples per channel actually written to out_buffer
                int out_samples = swr_convert(swrCtx, &out_buffer, max_out_samples,
                        (const uint8_t **) frame->data, frame->nb_samples);
                if (out_samples > 0) {
                    // Size in bytes of the converted samples
                    int out_buffer_size = av_samples_get_buffer_size(NULL, out_channel_nb, out_samples,
                            out_sample_fmt, 1);
                    // Write to a file for testing
                    //fwrite(out_buffer, 1, out_buffer_size, fp_pcm);
                    // Copy the out_buffer data into a Java byte array
                    jbyteArray audio_sample_array = (*env)->NewByteArray(env, out_buffer_size);
                    (*env)->SetByteArrayRegion(env, audio_sample_array, 0, out_buffer_size,
                            (const jbyte *) out_buffer);
                    // AudioTrack.write the PCM data
                    (*env)->CallIntMethod(env, audio_track, audio_track_write_mid, audio_sample_array, 0,
                            out_buffer_size);
                    // Release the local reference
                    (*env)->DeleteLocalRef(env, audio_sample_array);
                    usleep(1000 * 16);
                }
            }
        }
        av_free_packet(packet);
    }
    //fclose(fp_pcm);
    av_frame_free(&frame);
    // Free the AVPacket struct itself, which was allocated with av_malloc
    av_free(packet);
    av_free(out_buffer);
    swr_free(&swrCtx);
    avcodec_close(pCodeCtx);
    avformat_close_input(&pFormatCtx);
    (*env)->ReleaseStringUTFChars(env, input_jstr, input_cstr);
    (*env)->ReleaseStringUTFChars(env, output_jstr, output_cstr);
}
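The loop above pauses for 16 ms after each write. For comparison, it can help to know how long one chunk of PCM actually plays. The following is a rough sketch in plain C; the helper name is hypothetical, and the 1024-sample frame size used in the example is an assumption about the input, not something the code above guarantees.

```c
#include <stdint.h>

/* Hypothetical helper (not an FFmpeg or Android function): playback time in
 * microseconds of a chunk of interleaved PCM, rounded down. */
int64_t pcm_duration_us(int64_t bytes, int sample_rate, int channels, int bytes_per_sample) {
    int64_t samples_per_channel = bytes / ((int64_t) channels * bytes_per_sample);
    return samples_per_channel * 1000000 / sample_rate;
}
```

Under that assumption, 4096 bytes of 16-bit stereo PCM at 44100 Hz (1024 samples per channel) last about 23.2 ms, so a 16 ms pause is shorter than the audio it follows.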
