A Detailed Walkthrough of a First Hadoop Program, Troubleshooting Included

2016-07-24 17:17


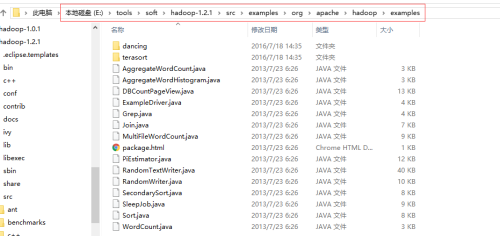

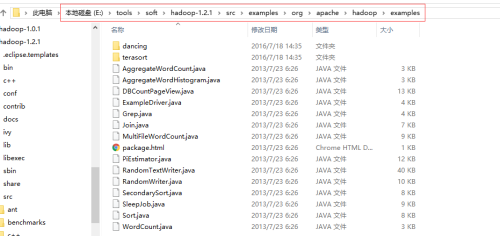

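When learning Hadoop, the first program is naturally WordCount. There is no need to copy it out of a book: the Hadoop distribution already ships with many learning examples, WordCount among them. It is the last file in the figure below.

First, here are the two test files: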

echo "Hello World Bye World" > file01

echo "Hello Hadoop Goodbye Hadoop" > file02

hadoop dfs -mkdir input

hadoop dfs -put file0* /user/hadoop/input

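Then I opened the source in MyEclipse to edit it:

1. Add the required JAR files. They are in three places: (1) the top-level directory of the unpacked Hadoop distribution; (2) the lib folder; (3) the lib\jsp-2.1 folder. Note that the lib folder also contains two text files; do not copy those in.

2. There is also a small detail about character encoding to watch out for, otherwise errors are likely at run time. The setting is shown in the figures below:

First right-click the project and choose Properties from the context menu:

Then, in the project properties dialog, set the encoding. Mine defaulted to GBK and I have changed it to UTF-8, as shown below: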
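The post does not say which error the GBK default caused, so the following is only a general illustration of why the setting matters: bytes written in one encoding and read back in another no longer produce the same text. It is a minimal sketch in plain Java; the class name EncodingMismatch and the method reinterpret are made up for this example, and it assumes the JDK in use ships the GBK charset.

```java
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;

public class EncodingMismatch {
    /** Encodes text with one charset, then decodes the same bytes with another. */
    public static String reinterpret(String text, Charset writtenAs, Charset readAs) {
        return new String(text.getBytes(writtenAs), readAs);
    }

    public static void main(String[] args) {
        // Two Chinese characters, written as escapes so this file is pure ASCII.
        String original = "\u4f60\u597d";
        // Assumption: the running JDK provides GBK (standard full JDK builds do).
        Charset gbk = Charset.forName("GBK");

        // Same charset on both sides: the text survives the round trip.
        System.out.println(
            reinterpret(original, StandardCharsets.UTF_8, StandardCharsets.UTF_8).equals(original));
        // UTF-8 bytes read as GBK: the text comes back garbled.
        System.out.println(
            reinterpret(original, StandardCharsets.UTF_8, gbk).equals(original));
    }
}
```

Under that assumption the program prints true and then false, which is the mismatch that setting the project encoding to UTF-8 is meant to avoid.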

3. The source contains the following fragment, which gave me some trouble the first time I debugged it:

if (otherArgs.length != 2) {

System.err.println("Usage: wordcount <in> <out>");

System.exit(2);

}

This means that if the number of arguments at run time is not 2, the program prints an error message and exits.

But I ran it with the command from the book:

hadoop jar wordcount.jar WordCount input output

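Let me explain this command:

The first word, hadoop, needs little explanation: every Hadoop command starts with hadoop.

The second, jar, says that a JAR file follows.

The third, wordcount.jar, is the name of the JAR I packaged and uploaded. It lives on the local file system, not on HDFS.

The fourth, WordCount, is the main class name, that is, the program to run.

The last two are paths on the cluster: the input path and the output path. Make sure the output path does not already exist; Hadoop will not overwrite it, and the job fails with an error saying the output directory already exists.

Running that command, sure enough, produced:

Usage: wordcount <in> <out>

This shows that the argument count was not 2, so this code ran and the program exited.

I did not understand why at first, so I changed the code a little: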

for (int i = 0; i < otherArgs.length; i++) {

System.out.println(otherArgs[i]);

}

if (otherArgs.length != 2) {

System.err.println("Usage: wordcount <in> <out>");

System.exit(2);

}

The output was:

WordCount

/user/hadoop/input

/user/hadoop/output

Usage: wordcount <in> <out>

So the program was actually receiving three arguments. What now?
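One plausible reading of that output, offered as an assumption because the post does not show the JAR's manifest: when the manifest names a Main-Class, hadoop jar uses it and hands every word after the JAR name to the program, so WordCount arrives as an ordinary argument. The sketch below is a simplified model of that rule in plain Java, not Hadoop's own code; RunJarModel and programArgs are hypothetical names.

```java
import java.util.Arrays;

public class RunJarModel {
    /**
     * Models which arguments reach the program's main().
     * manifestMainClass is the Main-Class from the JAR manifest, or null if absent.
     * afterJar holds the words typed after the JAR file name.
     */
    public static String[] programArgs(String manifestMainClass, String[] afterJar) {
        if (manifestMainClass != null) {
            // Main class comes from the manifest: nothing is consumed from the command line.
            return afterJar.clone();
        }
        if (afterJar.length == 0) {
            throw new IllegalArgumentException("no main class given");
        }
        // No manifest entry: the first word is taken as the main class name.
        return Arrays.copyOfRange(afterJar, 1, afterJar.length);
    }

    public static void main(String[] args) {
        String[] typed = {"WordCount", "/user/hadoop/input", "/user/hadoop/output"};
        // Manifest names a main class: prints [WordCount, /user/hadoop/input, /user/hadoop/output]
        System.out.println(Arrays.toString(programArgs("WordCount", typed)));
        // No main class in the manifest: prints [/user/hadoop/input, /user/hadoop/output]
        System.out.println(Arrays.toString(programArgs(null, typed)));
    }
}
```

If this is indeed the cause, dropping the class name from the command (hadoop jar wordcount.jar followed by the two paths) would pass exactly two arguments and leave the length check intact.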

The fix I used was simple: I commented out this check and changed the command to:

hadoop jar wordcount.jar /user/hadoop/input /user/hadoop/output -mapper WordCount -reducer WordCount

At last I saw the normal job output:

****hdfs://localhost:9000/user/hadoop/input

16/07/19 02:07:28 INFO input.FileInputFormat: Total input paths to process : 2

16/07/19 02:07:29 INFO mapred.JobClient: Running job: job_201607170436_0003

16/07/19 02:07:30 INFO mapred.JobClient: map 0% reduce 0%

16/07/19 02:07:55 INFO mapred.JobClient: map 100% reduce 0%

16/07/19 02:08:07 INFO mapred.JobClient: map 100% reduce 33%

16/07/19 02:08:16 INFO mapred.JobClient: map 100% reduce 100%

16/07/19 02:08:21 INFO mapred.JobClient: Job complete: job_201607170436_0003

16/07/19 02:08:21 INFO mapred.JobClient: Counters: 29

16/07/19 02:08:21 INFO mapred.JobClient: Job Counters

16/07/19 02:08:21 INFO mapred.JobClient: Launched reduce tasks=1

16/07/19 02:08:21 INFO mapred.JobClient: SLOTS_MILLIS_MAPS=29309

16/07/19 02:08:21 INFO mapred.JobClient: Total time spent by all reduces waiting after reserving slots (ms)=0

16/07/19 02:08:21 INFO mapred.JobClient: Total time spent by all maps waiting after reserving slots (ms)=0

16/07/19 02:08:21 INFO mapred.JobClient: Launched map tasks=2

16/07/19 02:08:21 INFO mapred.JobClient: Data-local map tasks=2

16/07/19 02:08:21 INFO mapred.JobClient: SLOTS_MILLIS_REDUCES=18127

16/07/19 02:08:21 INFO mapred.JobClient: File Output Format Counters

16/07/19 02:08:21 INFO mapred.JobClient: Bytes Written=41

16/07/19 02:08:21 INFO mapred.JobClient: FileSystemCounters

16/07/19 02:08:21 INFO mapred.JobClient: FILE_BYTES_READ=79

16/07/19 02:08:21 INFO mapred.JobClient: HDFS_BYTES_READ=272

16/07/19 02:08:21 INFO mapred.JobClient: FILE_BYTES_WRITTEN=64576

16/07/19 02:08:21 INFO mapred.JobClient: HDFS_BYTES_WRITTEN=41

16/07/19 02:08:21 INFO mapred.JobClient: File Input Format Counters

16/07/19 02:08:21 INFO mapred.JobClient: Bytes Read=50

16/07/19 02:08:21 INFO mapred.JobClient: Map-Reduce Framework

16/07/19 02:08:21 INFO mapred.JobClient: Map output materialized bytes=85

16/07/19 02:08:21 INFO mapred.JobClient: Map input records=2

16/07/19 02:08:21 INFO mapred.JobClient: Reduce shuffle bytes=85

16/07/19 02:08:21 INFO mapred.JobClient: Spilled Records=12

16/07/19 02:08:21 INFO mapred.JobClient: Map output bytes=82

16/07/19 02:08:21 INFO mapred.JobClient: Total committed heap usage (bytes)=246685696

16/07/19 02:08:21 INFO mapred.JobClient: CPU time spent (ms)=6910

16/07/19 02:08:21 INFO mapred.JobClient: Combine input records=8

16/07/19 02:08:21 INFO mapred.JobClient: SPLIT_RAW_BYTES=222

16/07/19 02:08:21 INFO mapred.JobClient: Reduce input records=6

16/07/19 02:08:21 INFO mapred.JobClient: Reduce input groups=5

16/07/19 02:08:21 INFO mapred.JobClient: Combine output records=6

16/07/19 02:08:21 INFO mapred.JobClient: Physical memory (bytes) snapshot=387923968

16/07/19 02:08:21 INFO mapred.JobClient: Reduce output records=5

16/07/19 02:08:21 INFO mapred.JobClient: Virtual memory (bytes) snapshot=4964081664

16/07/19 02:08:21 INFO mapred.JobClient: Map output records=8

[root@master data]# hadoop dfs -ls /user/hadoop/output

Found 3 items

-rw-r--r-- 1 root supergroup 0 2016-07-19 02:08 /user/hadoop/output/_SUCCESS

drwxrwxrwx - root supergroup 0 2016-07-19 02:07 /user/hadoop/output/_logs

-rw-r--r-- 1 root supergroup 41 2016-07-19 02:08 /user/hadoop/output/part-r-00000

[root@master data]# hadoop dfs -cat /user/hadoop/output/part-r-00000

Bye 1

Goodbye 1

Hadoop 2

Hello 2

World 2
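The result above can be checked without a cluster. The sketch below is a local model in plain Java, not the Hadoop WordCount itself: it tokenizes each test file on whitespace and sums per word (standing in for one map task plus its combiner), then merges the partial counts (standing in for the reducer). LocalWordCount, countWords and mergeCounts are names invented for this example, and treating each file as one map task is an assumption based on "Launched map tasks=2" in the log.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

public class LocalWordCount {
    /** Map plus combine for one input: split on whitespace and sum per word. */
    public static Map<String, Integer> countWords(String text) {
        Map<String, Integer> counts = new TreeMap<>();
        StringTokenizer tokens = new StringTokenizer(text);
        while (tokens.hasMoreTokens()) {
            counts.merge(tokens.nextToken(), 1, Integer::sum);
        }
        return counts;
    }

    /** Reduce: add up the partial counts from every input. */
    public static Map<String, Integer> mergeCounts(List<Map<String, Integer>> partials) {
        Map<String, Integer> total = new TreeMap<>();
        for (Map<String, Integer> partial : partials) {
            partial.forEach((word, n) -> total.merge(word, n, Integer::sum));
        }
        return total;
    }

    public static void main(String[] args) {
        Map<String, Integer> file01 = countWords("Hello World Bye World");
        Map<String, Integer> file02 = countWords("Hello Hadoop Goodbye Hadoop");
        Map<String, Integer> total = mergeCounts(Arrays.asList(file01, file02));

        // Prints Bye 1, Goodbye 1, Hadoop 2, Hello 2, World 2 (one word per line).
        total.forEach((word, n) -> System.out.println(word + " " + n));
        // Distinct words per file, 3 + 3 = 6, matching "Combine output records=6".
        System.out.println("combine output records: " + (file01.size() + file02.size()));
        // Distinct words overall, 5, matching "Reduce output records=5".
        System.out.println("reduce output records: " + total.size());
    }
}
```

The eight tokens across the two files likewise line up with "Map output records=8" in the job counters.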

