Hinton's Dropout in 3 Lines of Python

2016-06-30 15:20


How to install Dropout into a neural network by only changing 3 lines of python.

Posted by iamtrask on July 28, 2015

Summary: Dropout is a vital feature in almost every state-of-the-art neural network implementation. This tutorial teaches how to install Dropout into a neural network in only a few lines of Python code. Those who walk through this tutorial will finish with a working Dropout implementation and will be empowered with the intuitions to install it and tune it in any neural network they encounter.

Followup Post: I intend to write a followup post to this one adding popular features leveraged by state-of-the-art approaches. I'll tweet it out when it's complete @iamtrask. Feel free to follow if you'd be interested in reading more and thanks for all the feedback!

Just Give Me The Code:

```python
import numpy as np
X = np.array([[0,0,1],[0,1,1],[1,0,1],[1,1,1]])
y = np.array([[0,1,1,0]]).T
alpha,hidden_dim,dropout_percent,do_dropout = (0.5,4,0.2,True)  # dropout settings
synapse_0 = 2*np.random.random((3,hidden_dim)) - 1
synapse_1 = 2*np.random.random((hidden_dim,1)) - 1
for j in range(60000):  # range replaces Python 2's xrange
    layer_1 = (1/(1+np.exp(-(np.dot(X,synapse_0)))))
    if(do_dropout):  # dropout is for training only
        layer_1 *= np.random.binomial([np.ones((len(X),hidden_dim))],1-dropout_percent)[0] * (1.0/(1-dropout_percent))  # mask, then rescale
    layer_2 = 1/(1+np.exp(-(np.dot(layer_1,synapse_1))))
    layer_2_delta = (layer_2 - y)*(layer_2*(1-layer_2))
    layer_1_delta = layer_2_delta.dot(synapse_1.T) * (layer_1 * (1-layer_1))
    synapse_1 -= (alpha * layer_1.T.dot(layer_2_delta))
    synapse_0 -= (alpha * X.T.dot(layer_1_delta))
```

Part 1: What is Dropout?

As discovered in the previous post, a neural network is a glorified search problem. Each node in the neural network is searching for correlation between the input data and the correct output data.

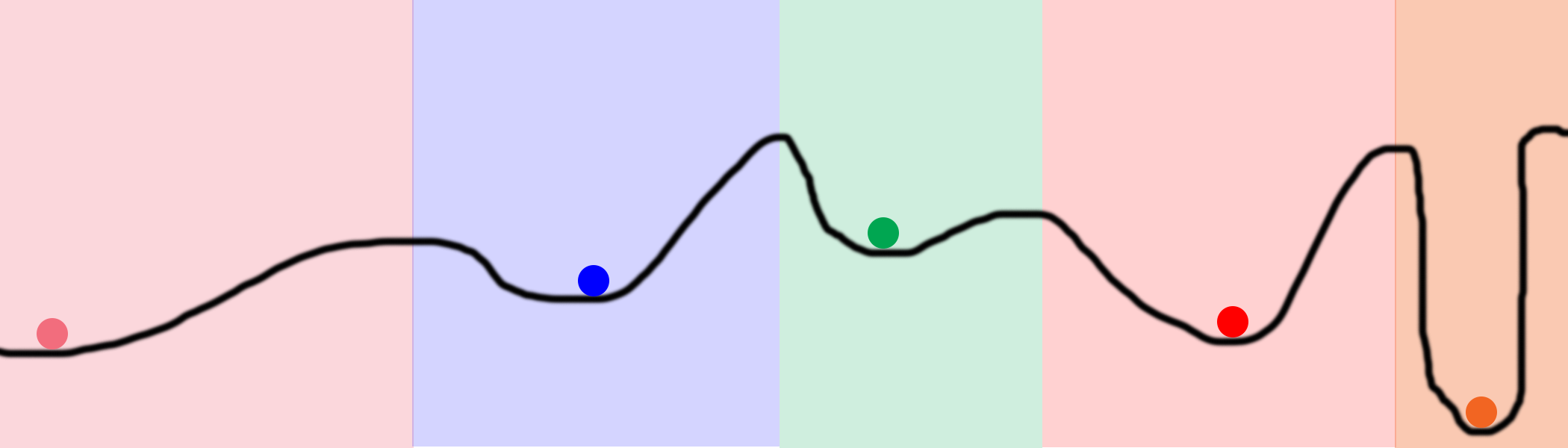

Consider the graphic above from the previous post. The line represents the error the network generates for every value of a particular weight. The low points (read: low error) in that line mark where a weight "finds" correlation between the input and output data. The balls in the picture represent various weights. They are trying to find those low points.

Consider the color. The balls' initial positions are randomly generated (just like weights in a neural network). If two balls randomly start in the same colored zone, they will converge to the same point. This makes them redundant! They're wasting computation and memory! This is exactly what happens in neural networks.

Why Dropout: Dropout helps prevent weights from converging to identical positions. It does this by randomly turning nodes off during forward propagation. In the code above, a node that is turned off outputs 0 for that training example, so it also contributes no weight update for that example during back-propagation. Let's take a closer look.

Part 2: How Do I Install and Tune Dropout?

Lines 4, 9, and 10 of the code above show how to install dropout. To perform dropout on a layer, you randomly set some of the layer's values to 0 during forward propagation. This is demonstrated on line 10.
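To see the zeroing step in isolation, here is a minimal sketch. It assumes a made-up 4x4 array standing in for the hidden layer and a seeded generator (`rng`), neither of which is part of the original code. It only shows the masking; line 10 of the listing also rescales the surviving values.

```python
import numpy as np

rng = np.random.default_rng(0)   # seeded only so the sketch is repeatable (assumption)
dropout_percent = 0.2            # probability that any one node is turned off

layer_1 = rng.random((4, 4))     # stand-in for hidden-layer activations (assumption)

# Each mask entry is drawn independently: 1 keeps the node, 0 turns it off.
mask = rng.binomial(1, 1 - dropout_percent, size=layer_1.shape)

dropped = layer_1 * mask         # nodes that were turned off now output exactly 0
```

Because the mask is drawn fresh on every pass, a different set of nodes is turned off each time.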

Line 9: controls whether dropout is used at all. You see, you only want to use Dropout during training. Do not use it at runtime or on your testing dataset.
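One way to keep dropout out of test-time predictions is to put the switch on the forward pass itself. The sketch below is an assumption about how you might organize it, not part of the original code: `sigmoid`, `forward`, and the `training` flag are hypothetical names, and the weights are random rather than trained.

```python
import numpy as np

def sigmoid(x):
    return 1 / (1 + np.exp(-x))

def forward(X, synapse_0, synapse_1, dropout_percent=0.2, training=False, rng=None):
    """Forward pass of the two-layer network; dropout runs only when training=True."""
    layer_1 = sigmoid(X.dot(synapse_0))
    if training:
        if rng is None:
            rng = np.random.default_rng()
        keep = 1 - dropout_percent
        mask = rng.binomial(1, keep, size=layer_1.shape)
        layer_1 = layer_1 * mask / keep   # drop nodes, rescale the survivors
    return sigmoid(layer_1.dot(synapse_1))

rng = np.random.default_rng(1)            # seeded for repeatability (assumption)
X = np.array([[0, 0, 1], [0, 1, 1], [1, 0, 1], [1, 1, 1]])
synapse_0 = 2 * rng.random((3, 4)) - 1    # untrained weights, for illustration only
synapse_1 = 2 * rng.random((4, 1)) - 1

train_out = forward(X, synapse_0, synapse_1, training=True, rng=rng)  # noisy
test_a = forward(X, synapse_0, synapse_1)   # dropout off: deterministic
test_b = forward(X, synapse_0, synapse_1)   # same inputs, same outputs
```

With `training=False` the same input always gives the same output, which is what you want at runtime.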

EDIT: Line 10: has a second portion that scales up the values being propagated forward, multiplying them by 1/(1 - dropout_percent). This happens in proportion to the number of values being turned off. A simple intuition is that if you're turning off half of your hidden layer, you want to double the values that ARE pushing forward so that the output compensates correctly. Many thanks to @karpathy for catching this one.
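The compensation is easy to check numerically. This sketch assumes a large layer whose activations are all 0.6 (an arbitrary value picked for illustration) and compares the average signal with and without the rescaling.

```python
import numpy as np

rng = np.random.default_rng(0)        # seeded for repeatability (assumption)
dropout_percent = 0.5
keep = 1 - dropout_percent

layer_1 = np.full((100000, 4), 0.6)   # constant activations, illustration only
mask = rng.binomial(1, keep, size=layer_1.shape)

unscaled = layer_1 * mask                  # half the signal is simply lost
scaled = layer_1 * mask * (1.0 / keep)     # survivors are doubled to compensate

mean_unscaled = unscaled.mean()   # lands near 0.6 * keep
mean_scaled = scaled.mean()       # lands near the original 0.6
```

With the rescaling, the next layer sees roughly the same average input whether dropout is on or off, so nothing needs to change when you switch it off after training.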

Tuning Best Practice

Line 4: parameterizes the dropout_percent. This sets the probability that any one node will be turned off. A good initial configuration for hidden layers is 50%. If applying dropout to an input layer, it's best to not exceed 25%.
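If you do apply dropout to both the input and a hidden layer, each layer can get its own rate. A minimal sketch, assuming a hypothetical `dropout` helper and made-up variable names (`input_dropout_percent`, `hidden_dropout_percent`) that are not in the original code; the arrays of ones are placeholders that make the dropped entries easy to count.

```python
import numpy as np

def dropout(layer, dropout_percent, rng):
    """Zero each value with probability dropout_percent and rescale the rest."""
    keep = 1 - dropout_percent
    mask = rng.binomial(1, keep, size=layer.shape)
    return layer * mask / keep

rng = np.random.default_rng(0)   # seeded for repeatability (assumption)
input_dropout_percent = 0.25     # upper end suggested above for input layers
hidden_dropout_percent = 0.5     # suggested starting point for hidden layers

X = np.ones((1000, 3))           # placeholder input batch
hidden = np.ones((1000, 4))      # placeholder hidden activations

X_dropped = dropout(X, input_dropout_percent, rng)
hidden_dropped = dropout(hidden, hidden_dropout_percent, rng)

input_off = np.mean(X_dropped == 0)         # fraction of input values turned off
hidden_off = np.mean(hidden_dropped == 0)   # fraction of hidden values turned off
```

The measured fractions should sit close to the configured rates, which is a quick sanity check when you wire dropout into a new layer.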

Hinton advocates tuning dropout in conjunction with tuning the size of your hidden layer. Increase your hidden layer size(s) with dropout turned off until you perfectly fit your data. Then, using the same hidden layer size, train with dropout turned on. This should be a nearly optimal configuration. Turn off dropout as soon as you're done training and voila! You have a working neural network!
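The tuning recipe can be sketched as a small search loop. Everything here beyond the network itself is an assumption made for illustration: the candidate sizes, the `tolerance` that stands in for "perfectly fit", the shortened iteration count, and the `train` and `error` helpers. One deliberate difference from the listing: the sigmoid derivative is taken from the activations before the mask is applied, and the mask is carried into the delta.

```python
import numpy as np

X = np.array([[0, 0, 1], [0, 1, 1], [1, 0, 1], [1, 1, 1]])
y = np.array([[0, 1, 1, 0]]).T

def sigmoid(x):
    return 1 / (1 + np.exp(-x))

def train(hidden_dim, do_dropout, dropout_percent=0.5, alpha=0.5, iters=5000, seed=0):
    """Train a two-layer network on X, y and return its weights."""
    rng = np.random.default_rng(seed)
    synapse_0 = 2 * rng.random((3, hidden_dim)) - 1
    synapse_1 = 2 * rng.random((hidden_dim, 1)) - 1
    keep = 1 - dropout_percent
    for _ in range(iters):
        act = sigmoid(X.dot(synapse_0))
        # mask is all ones when dropout is off
        mask = rng.binomial(1, keep, size=act.shape) / keep if do_dropout else np.ones_like(act)
        layer_1 = act * mask
        layer_2 = sigmoid(layer_1.dot(synapse_1))
        layer_2_delta = (layer_2 - y) * (layer_2 * (1 - layer_2))
        layer_1_delta = layer_2_delta.dot(synapse_1.T) * mask * (act * (1 - act))
        synapse_1 -= alpha * layer_1.T.dot(layer_2_delta)
        synapse_0 -= alpha * X.T.dot(layer_1_delta)
    return synapse_0, synapse_1

def error(synapse_0, synapse_1):
    """Mean absolute error on the training data, with dropout turned off."""
    layer_2 = sigmoid(sigmoid(X.dot(synapse_0)).dot(synapse_1))
    return float(np.mean(np.abs(layer_2 - y)))

candidates = [1, 2, 4, 8]   # hidden layer sizes to try (assumption)
tolerance = 0.05            # stand-in for "perfectly fits the data" (assumption)

# Step 1: grow the hidden layer with dropout off until the data is fit.
chosen = candidates[-1]     # fall back to the largest size if none qualifies
for hidden_dim in candidates:
    if error(*train(hidden_dim, do_dropout=False)) < tolerance:
        chosen = hidden_dim
        break

# Step 2: keep that size and retrain with dropout on.
synapse_0, synapse_1 = train(chosen, do_dropout=True)

# Step 3: evaluate with dropout off.
final_error = error(synapse_0, synapse_1)
```

On a toy problem like this the search is almost instant; on real data each `train` call is a full training run, so you would likely pick the candidate sizes more carefully.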

Want to Work in Machine Learning?

One of the best things you can do to learn Machine Learning is to have a job where you're practicing Machine Learning professionally. I'd encourage you to check out the positions at Digital Reasoning in your job hunt. If you have questions about any of the positions or about life at Digital Reasoning, feel free to send me a message on my LinkedIn. I'm happy to hear about where you want to go in life, and help you evaluate whether Digital Reasoning could be a good fit.

If none of the positions feel like a good fit, continue your search! Machine Learning expertise is one of the most valuable skills in the job market today, and there are many firms looking for practitioners.
