(版本定制)第8课:Spark Streaming源码解读之RDD生成生命周期彻底研究和思考

2016-05-21 11:33

537 查看

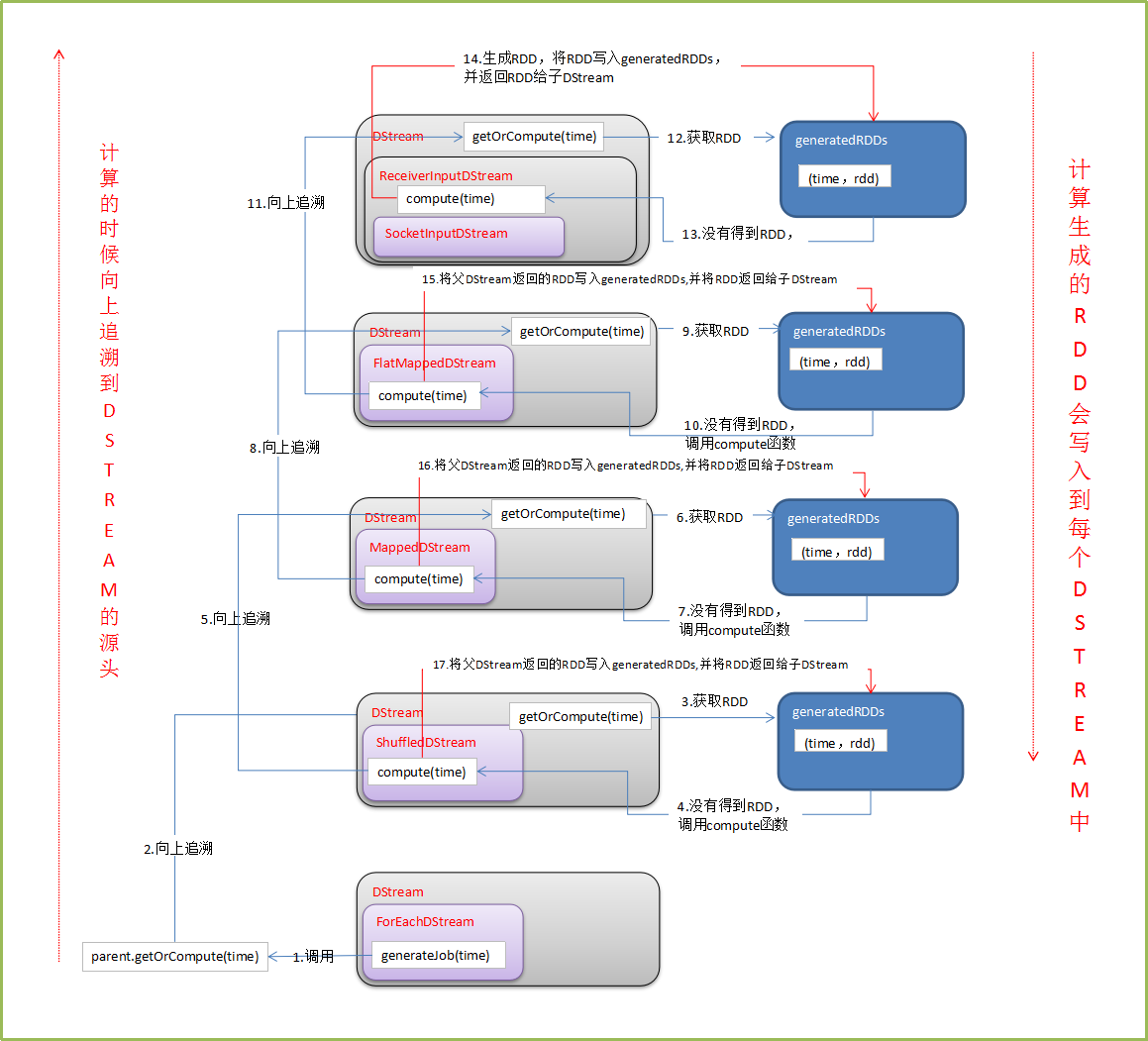

本篇博客将详细探讨DStream模板下的RDD是如何被创建,然后被执行的。在开始叙述之前,先来思考几个问题,本篇文章也就是基于此问题构建的。1. RDD是谁产生的?2. 如何产生RDD?带着这两个问题开启我们的探索之旅。DStream是RDD的模板,每隔一个Batch Interval会根据DStream模板生成一个对应的RDD,然后将RDD存储到DStream中的generatedRDDs数据结构中,下面是存储结构格式。

// RDDs generated, marked as private[streaming] so that testsuites can access it @transient private[streaming] var generatedRDDs = new HashMap[Time, RDD[T]] ()1、简单的WordCount程序

object WordCount { def main(args:Array[String]): Unit ={

val sparkConf = new SparkConf().setMaster("Master:7077").setAppName("WordCount")

val ssc = new StreamingContext(sparkConf,Seconds(10)) // Timer触发频率

val lines = ssc.socketTextStream("Master",9999) //接收数据

val words = lines.flatMap(_.split(" "))

val wordCounts = words.map(x => (x,1)).reduceByKey(_+_)

wordCounts.print()

ssc.start()

ssc.awaitTermination()

}

}首先我们先看看print方法,具体的代码如下:

/*** Print the first num elements of each RDD generated in this DStream. This is an output* operator, so this DStream will be registered as an output stream and there materialized.*/def print(num: Int): Unit = ssc.withScope {def foreachFunc: (RDD[T], Time) => Unit = {(rdd: RDD[T], time: Time) => {val firstNum = rdd.take(num + 1)// scalastyle:off printlnprintln("-------------------------------------------")println("Time: " + time)println("-------------------------------------------")firstNum.take(num).foreach(println)if (firstNum.length > num) println("...")println()// scalastyle:on println}}foreachRDD(context.sparkContext.clean(foreachFunc), displayInnerRDDOps = false)}首先定义了一个函数,该函数用来从RDD中取出前几条数据,并打印出结果与时间等,后面会调用foreachRDD函数。

private def foreachRDD(foreachFunc: (RDD[T], Time) => Unit,displayInnerRDDOps: Boolean): Unit = {new ForEachDStream(this,context.sparkContext.clean(foreachFunc, false), displayInnerRDDOps).register()}/*** Register this streaming as an output stream. This would ensure that RDDs of this* DStream will be generated.*/private[streaming] def register(): DStream[T] = {ssc.graph.addOutputStream(this)this}def addOutputStream(outputStream: DStream[_]) {this.synchronized {outputStream.setGraph(this)outputStreams += outputStream}在foreachRDD中new出了一个ForEachDStream对象,并将这个注册给DStreamGraph,ForEachDStream对象也就是DStreamGraph中的outputStreams。

当每到达一个BatchInterval时候,就会调用DStreamingGraph中的generateJobs.

def generateJobs(time: Time): Seq[Job] = {logDebug("Generating jobs for time " + time)val jobs = this.synchronized {outputStreams.flatMap { outputStream =>val jobOption = outputStream.generateJob(time)jobOption.foreach(_.setCallSite(outputStream.creationSite))jobOption}}logDebug("Generated " + jobs.length + " jobs for time " + time)jobs}这里就会调用outputStream的generateJob方法

private[streaming] def generateJob(time: Time): Option[Job] = {getOrCompute(time) match {case Some(rdd) => {val jobFunc = () => {val emptyFunc = { (iterator: Iterator[T]) => {} }context.sparkContext.runJob(rdd, emptyFunc)}Some(new Job(time, jobFunc))}case None => None}}这里会调用getOrCompute(time)来产生新RDD,并将其存入到generatedRDDs中,整理的过程如下图:

参考博客:

备注:

1、DT大数据梦工厂微信公众号DT_Spark2、IMF晚8点大数据实战YY直播频道号:689175803、新浪微博: http://www.weibo.com/ilovepains[/code]

相关文章推荐

- Spark RDD API详解(一) Map和Reduce

- 使用spark和spark mllib进行股票预测

- Spark随谈——开发指南(译)

- Spark,一种快速数据分析替代方案

- eclipse 开发 spark Streaming wordCount

- Understanding Spark Caching

- ClassNotFoundException:scala.PreDef$

- Windows 下Spark 快速搭建Spark源码阅读环境

- Spark中将对象序列化存储到hdfs

- 使用java代码提交Spark的hive sql任务,run as java application

- Spark机器学习(一) -- Machine Learning Library (MLlib)

- Spark机器学习(二) 局部向量 Local-- Data Types - MLlib

- Spark机器学习(三) Labeled point-- Data Types

- Spark弹性数据集

- Spark初探

- Spark Streaming初探

- Spark本地开发环境搭建

- 直播|易观CTO郭炜:精益化数据分析——如何让你的企业具有BAT一样的分析能力

- 挨踢部落第一期:Spark离线分析维度 推荐