完全分布式Hadoop3.X的搭建

准备工作以及安装Hadoop之前的操作和Hadoop2.X的安装相同,在我上一篇博客中,这里不做过多介绍

https://www.cnblogs.com/lmandcc/p/15306163.html

1. 写一些关键脚本,利于后续集群使用

1.1集群分发脚本xsync

cd /bin/

vim xsyn

#!/bin/bash #1. 判断参数个数 if [ $# -lt 1 ] then echo Not Enough Arguement! exit; fi #2. 遍历集群所有机器 for host in hadoop102 hadoop103 hadoop104 do echo ==================== $host ==================== #3. 遍历所有目录,挨个发送 for file in $@ do #4. 判断文件是否存在 if [ -e $file ] then #5. 获取父目录 pdir=$(cd -P $(dirname $file); pwd) #6. 获取当前文件的名称 fname=$(basename $file) ssh $host "mkdir -p $pdir" rsync -av $pdir/$fname $host:$pdir else echo $file does not exists! fi done done

1.2 查看节点Java进程脚本 jpsall

vim jpsall

#!/bin/bash for i in hadoop102 hadoop103 hadoop104 do echo =============== $i =============== ssh $i "$*" "/usr/local/soft/jdk1.8.0_212/bin/jps" done

1.3 控制Hadoop启动和停止脚本

vim myhadoop

#!/bin/bash if [ $# -lt 1 ] then echo "No Args Input..." exit ; fi case $1 in "start") echo " =================== 启动 hadoop集群 ===================" echo " --------------- 启动 hdfs ---------------" ssh hadoop102 "/usr/local/soft/hadoop-3.1.3/sbin/start-dfs.sh" echo " --------------- 启动 yarn ---------------" ssh hadoop103 "/usr/local/soft/hadoop-3.1.3/sbin/start-yarn.sh" echo " --------------- 启动 historyserver ---------------" ssh hadoop102 "/usr/local/soft/hadoop-3.1.3/bin/mapred --daemon start his toryserver";; "stop") echo " =================== 关闭 hadoop集群 ===================" echo " --------------- 关闭 historyserver ---------------" ssh hadoop102 "/usr/local/soft/hadoop-3.1.3/bin/mapred --daemon stop hist oryserver" echo " --------------- 关闭 yarn ---------------" ssh hadoop103 "/usr/local/soft/hadoop-3.1.3/sbin/stop-yarn.sh" echo " --------------- 关闭 hdfs ---------------" ssh hadoop102 "/usr/local/soft/hadoop-3.1.3/sbin/stop-dfs.sh" ;; *) echo "Input Args Error..." ;; esac

注意:这里每个脚本都需要修改读写权限:chmod 777 脚本名称

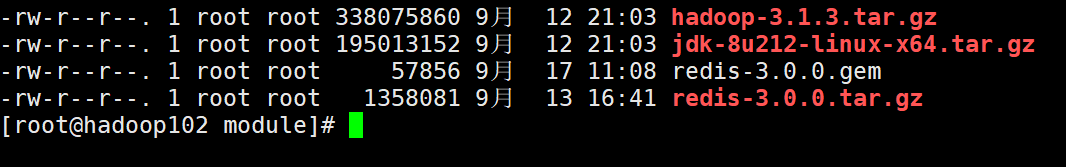

2. 将Hadoop3.1.3用xftp导入Hadoop102中

3. 解压Hadoop文件

tar -zxvf /usr/local/module/hadoop-3.1.3.tar.gz -C /usr/local/soft

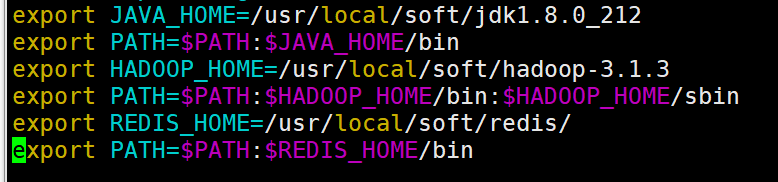

4. 配置环境变量

export JAVA_HOME=/usr/local/soft/jdk1.8.0_212 export PATH=$PATH:$JAVA_HOME/bin export HADOOP_HOME=/usr/local/soft/hadoop-3.1.3 export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin export REDIS_HOME=/usr/local/soft/redis/ export PATH=$PATH:$REDIS_HOME/bin

5. 集群配置搭建

1) 集群部署规划

备注:NameNode和SecondaryNameNode不要安装在同一台服务器

备注:ResourceManager也很消耗内存,不要和NameNode、SecondaryNameNode配置在同一台机器上。

|

hadoop102 |

hadoop103 |

hadoop104 |

|

|

HDFS

|

NameNode DataNode |

DataNode |

SecondaryNameNode DataNode |

|

YARN |

NodeManager |

ResourceManager NodeManager |

NodeManager |

2)配置集群

cd /usr/local/soft/hadoop-3.1.3/etc/hadoop

(a) 核心配置文件 core.site.xml

<configuration> <!-- 指定NameNode的地址 --> <property> <name>fs.defaultFS</name> <value>hdfs://hadoop102:8020</value> </property> <!-- 指定hadoop数据的存储目录 --> <property> <name>hadoop.tmp.dir</name> <value>/usr/local/soft/hadoop-3.1.3/data</value> </property> </configuration>

(b)HDFS文件 hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?> <?xml-stylesheet type="text/xsl" href="configuration.xsl"?> <configuration> <!-- nn web端访问地址--> <property> <name>dfs.namenode.http-address</name> <value>hadoop102:9870</value> </property> <!-- 2nn web端访问地址--> <property> <name>dfs.namenode.secondary.http-address</name> <value>hadoop104:9868</value> </property> <property> <name>dfs.permissions.enabled</name> <value>false</value> </property> <property> <name> dfs.client.block.write.replace-datanode-on-failure.enable </name> <value>true</value> </property> <property> <name> dfs.client.block.write.replace-datanode-on-failure.policy </name> <value>never</value> </property> <property> <name>dfs.webhdfs.enabled</name> <value>true</value> </property> </configuration>

(c)YARN配置文件 yarn-site.xml

<configuration> <!-- Site specific YARN configuration properties --> <!-- 指定MR走shuffle --> <property> <name>yarn.nodemanager.aux-services</name> <value>mapreduce_shuffle</value> </property> <!-- 指定ResourceManager的地址--> <property> <name>yarn.resourcemanager.hostname</name> <value>hadoop103</value> </property> <!-- 环境变量的继承 --> <property> <name>yarn.nodemanager.env-whitelist</name> <value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLAS SPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME</value> </property> <!-- 开启日志聚集功能 --> <property> <name>yarn.log-aggregation-enable</name> <value>true</value> </property> <!-- 设置日志聚集服务器地址 --> <property> <name>yarn.log.server.url</name> <value>http://hadoop102:19888/jobhistory/logs</value> </property> <!-- 设置日志保留时间为7天 --> <property> <name>yarn.log-aggregation.retain-seconds</name> <value>604800</value> </property> </configuration>

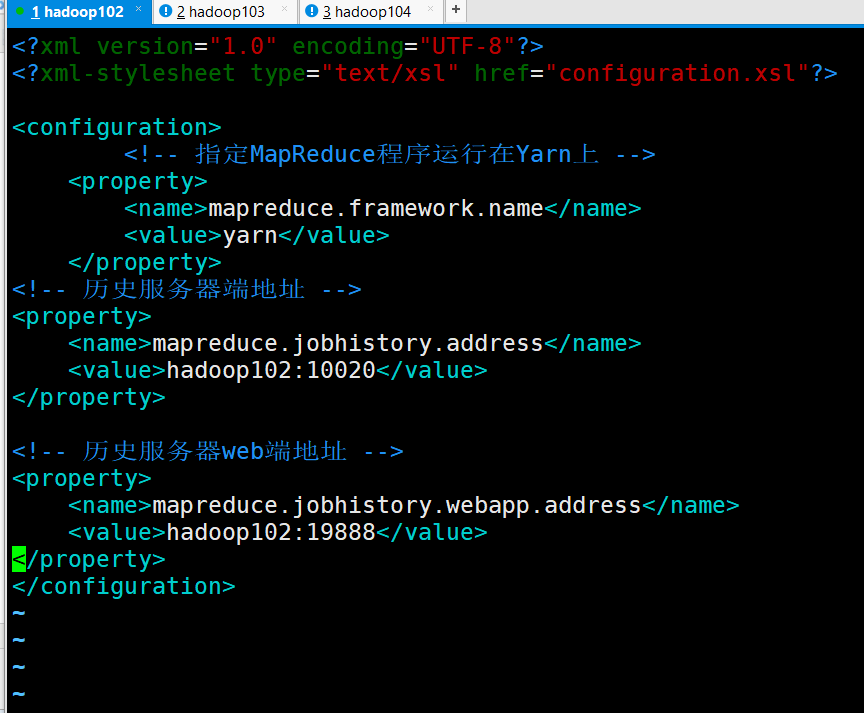

(d)MapReduce配置文件 mapred-site.xml

在这里需要重命名mapred-site.xml.template

命令:mv mapred-site.xml.template mapred-site.xml

<?xml version="1.0" encoding="UTF-8"?> <?xml-stylesheet type="text/xsl" href="configuration.xsl"?> <configuration> <!-- 指定MapReduce程序运行在Yarn上 --> <property> <name>mapreduce.framework.name</name> <value>yarn</value> </property> <!-- 历史服务器端地址 --> <property> <name>mapreduce.jobhistory.address</name> <value>hadoop102:10020</value> </property> <!-- 历史服务器web端地址 --> <property> <name>mapreduce.jobhistory.webapp.address</name> <value>hadoop102:19888</value> </property> </configuration>

(e)配置workers

(f)在/hadoop/sbin路径下:将start-dfs.sh,stop-dfs.sh两个文件顶部添加以下参数

HDFS_DATANODE_USER=root HADOOP_SECURE_DN_USER=hdfs HDFS_NAMENODE_USER=root HDFS_SECONDARYNAMENODE_USER=root

(g)start-yarn.sh,stop-yarn.sh顶部也需添加以下

YARN_RESOURCEMANAGER_USER=root HADOOP_SECURE_DN_USER=yarn YARN_NODEMANAGER_USER=root

6.分发Hadoop给剩余节点

xsync /usr/local/soft/hadoop-3.1.3

7. 启动集群

如果集群是第一次启动,需要在hadoop102节点格式化NameNode

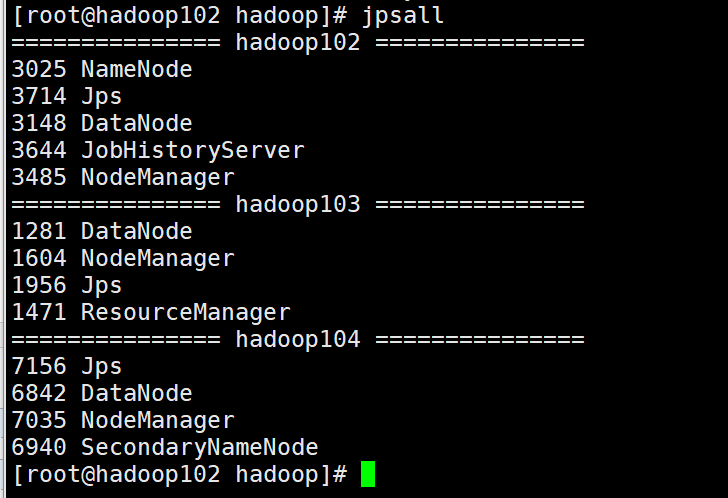

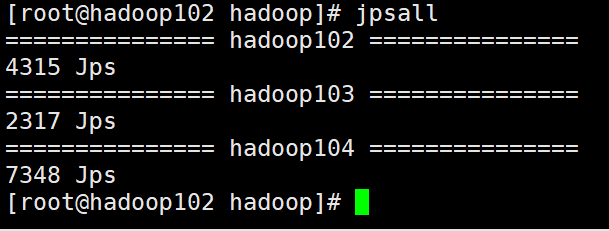

使用myhadoop start 启动集群

使用jpsall查看Java进程

如果配置文件不出错的话,应该和我Java进程相同

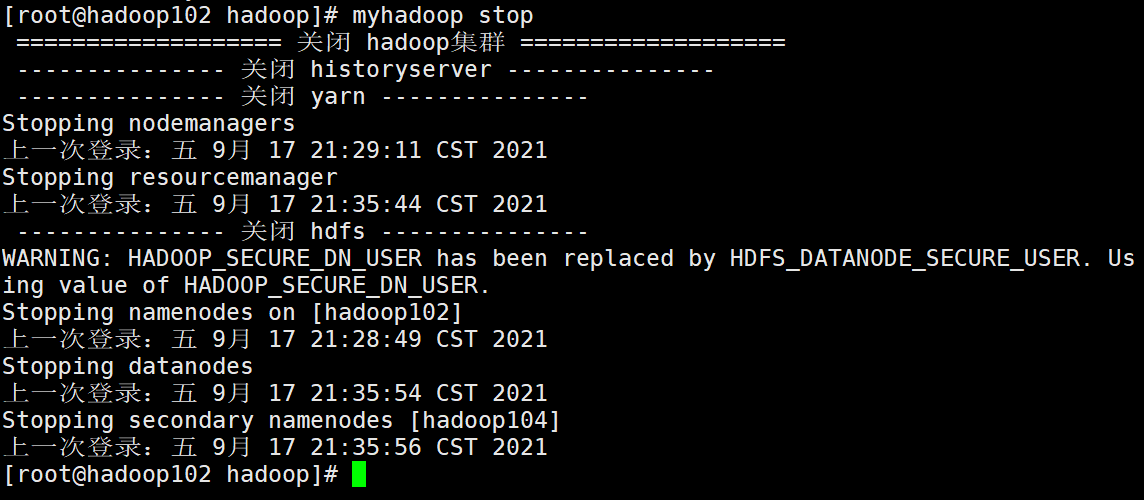

8.停止Hadoop集群

myhadoop stop

- Hadoop分布式集群搭建完全教程

- Hadoop集群完全分布式搭建教程-CentOS

- hadoop2.7.1在vmware上3台centos7虚拟机上的完全分布式集群搭建

- Hadoop2.7.3+Spark2.1.0完全分布式集群搭建过程

- Hadoop手把手逐级搭建,从单机伪分布到高可用+联邦(2)Hadoop完全分布式(full)

- 【Hadoop】搭建完全分布式的hadoop

- 搭建hadoop完全分布式环境,CentOS6.5安装Hadoop2.7.3完整流程

- hadoop完全分布式搭建datanode无法启动原因

- hadoop完全分布式文件系统集群搭建

- Ubuntu Hadoop 完全分布式搭建

- win10+VMware+centos7 环境下搭建hadoop完全分布式

- 【Hadoop】搭建完全分布式的hadoop

- 完全分布式Hadoop集群在虚拟机CentOS7上搭建——过程与注意事项

- Hadoop完全分布式的搭建

- Hadoop系统完全分布式集群搭建方法

- Hadoop完全分布式集群搭建(2.9.0)

- 【干货】Apache Hadoop 2.8 完全分布式集群搭建超详细过程,实现NameNode HA、ResourceManager HA高可靠性...

- ubuntu 虚拟机 完全分布式 hadoop集群搭建 hive搭建 ha搭建

- Hadoop 2.7.3 完全分布式集群系统搭建

- Hadoop2.5.2完全分布式搭建