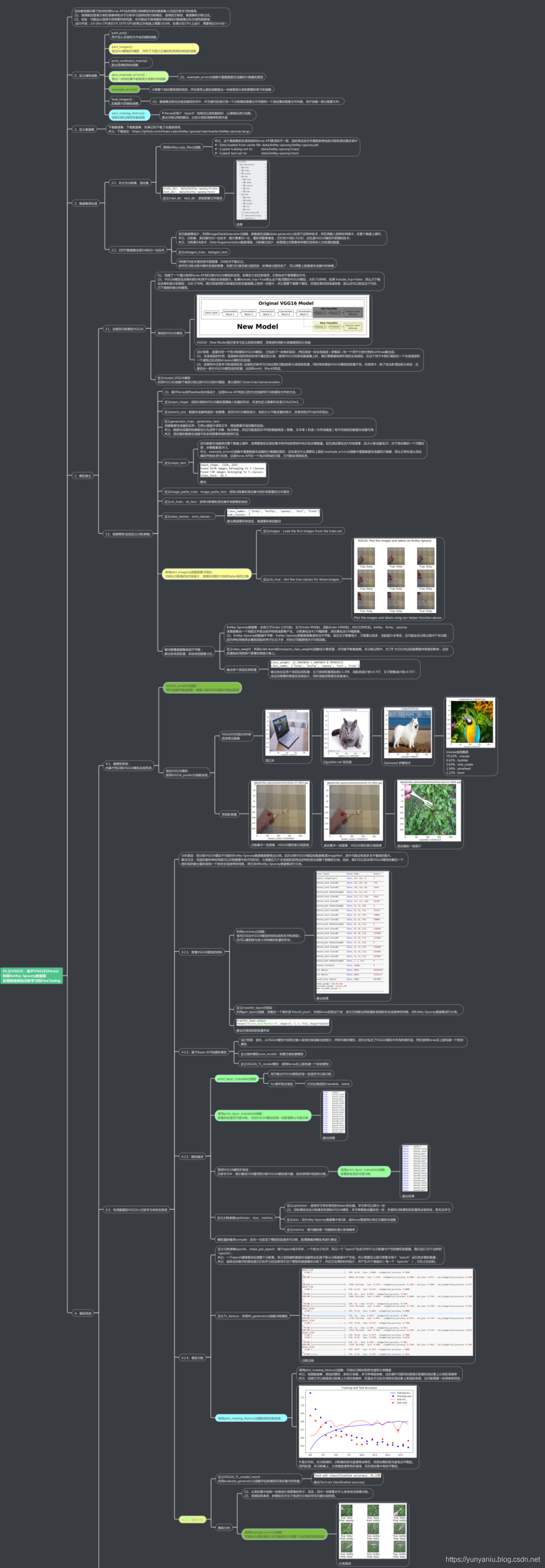

DL / VGG16: Transfer learning on the Knifey-Spoony dataset with the VGG16 (Keras) network architecture

2019-08-13 22:36


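Copyright notice: This is an original article by the blogger, licensed under CC 4.0 BY-SA. If you repost it, please include a link to the original source and this notice.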

Article link: https://blog.csdn.net/qq_41185868/article/details/99485570


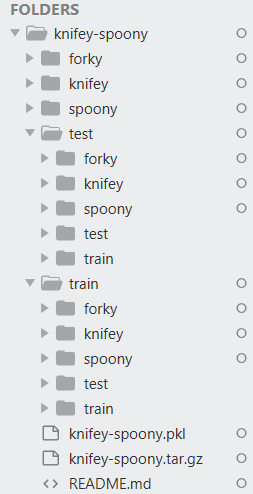

Dataset

See: Dataset: Knifey-Spoony: a detailed guide to the Knifey-Spoony dataset (introduction, download, and usage)

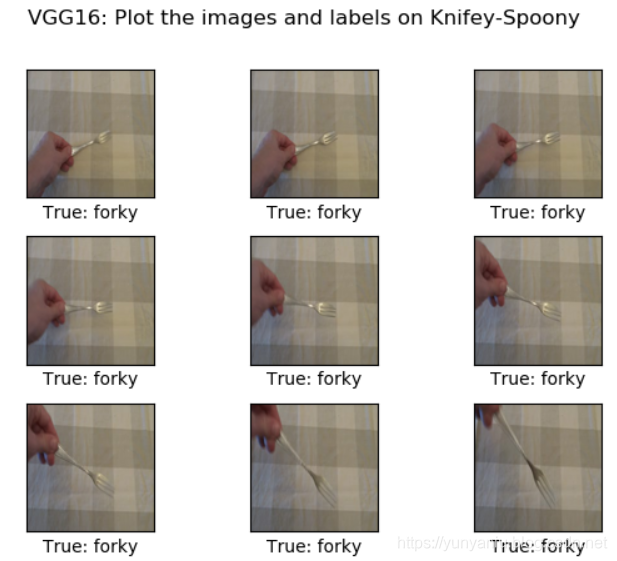

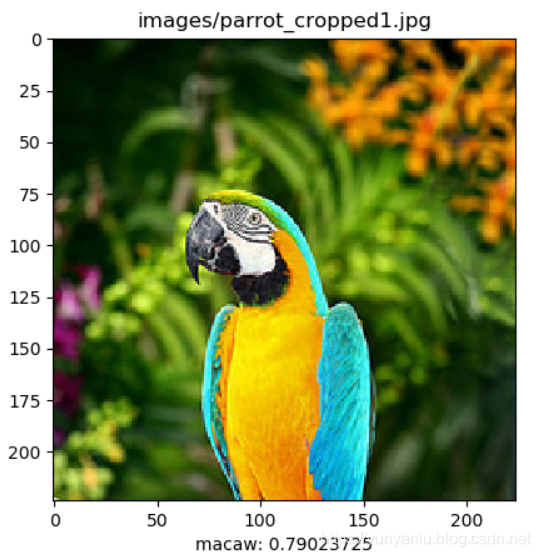

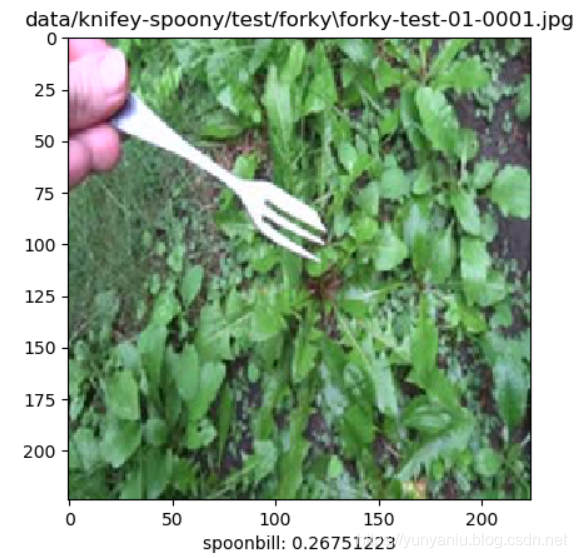

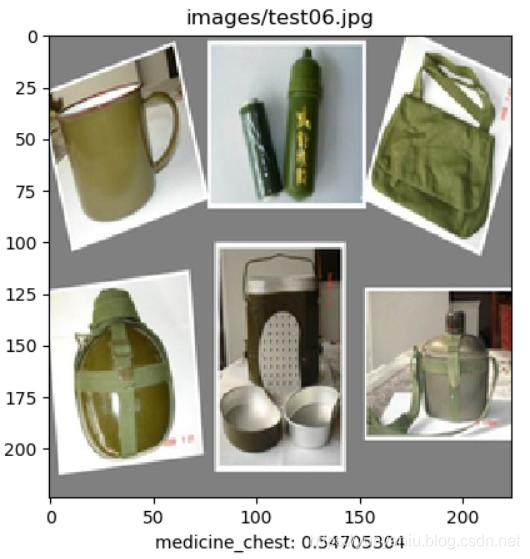

Output results


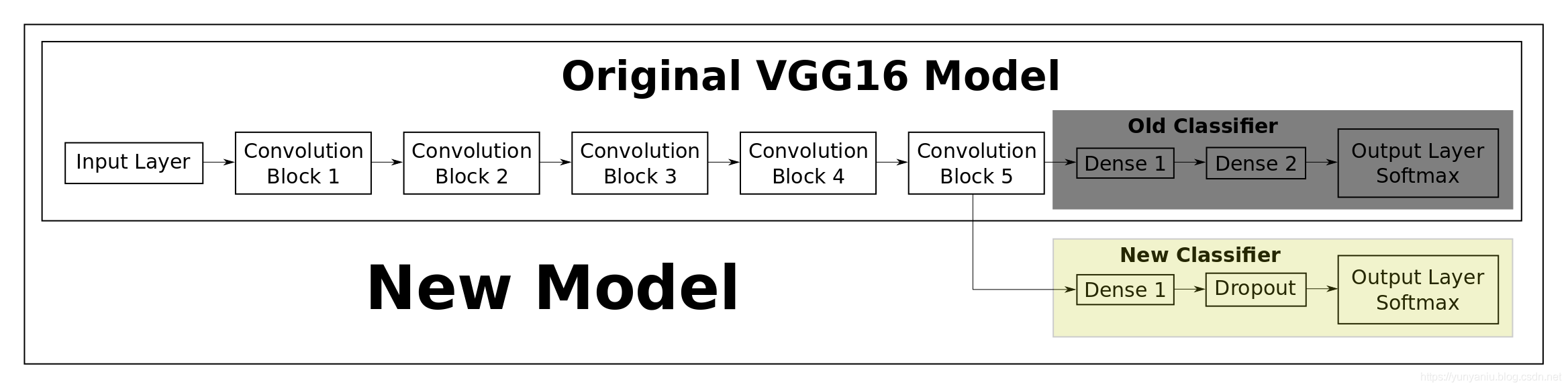

Design approach

1. Base model

2. Design diagram
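As a text companion to the design outline, the sketch below traces tensor shapes through the transfer model built in the core code: five VGG16 blocks (3×3 convolutions with 'same' padding keep the spatial size, each 2×2 max-pool halves it), the cut at `block5_pool`, then `Flatten`, `Dense(1024)`, `Dropout` and the softmax classifier. `trace_shapes` is a hypothetical helper written for this illustration, and `num_classes=3` assumes the three forky/knifey/spoony classes.

```python
# Hypothetical helper: trace tensor shapes through the VGG16-based transfer model.
# VGG16 conv blocks: (number of 3x3 'same' conv layers, output channels).
VGG16_BLOCKS = [(2, 64), (2, 128), (3, 256), (3, 512), (3, 512)]


def trace_shapes(input_hw=(224, 224), num_classes=3, dense_units=1024):
    """Return (layer_name, output_shape) pairs, batch dimension omitted."""
    h, w = input_hw
    shapes = []
    for b, (n_conv, channels) in enumerate(VGG16_BLOCKS, start=1):
        for c in range(1, n_conv + 1):
            # 'same' padding with stride 1 keeps height and width unchanged
            shapes.append((f"block{b}_conv{c}", (h, w, channels)))
        # 2x2 max pooling with stride 2 halves height and width
        h, w = h // 2, w // 2
        shapes.append((f"block{b}_pool", (h, w, channels)))
    # Everything above is conv_model (VGG16 cut at block5_pool); the new head follows.
    flat = h * w * VGG16_BLOCKS[-1][1]
    shapes.append(("flatten", (flat,)))
    shapes.append(("dense", (dense_units,)))
    shapes.append(("dropout", (dense_units,)))
    shapes.append(("classifier", (num_classes,)))
    return shapes


if __name__ == "__main__":
    for name, shape in trace_shapes():
        print(f"{name:14s} {shape}")
```

The shapes up to `block5_pool` should line up with the `model_VGG16.summary()` output in the log.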

Core code

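```python
# Load VGG16 with its ImageNet weights and the original classifier top.
# Assumption: TensorFlow's bundled Keras; the summary below lists fc1, fc2 and
# predictions, i.e. include_top=True.
from tensorflow.keras.applications import VGG16

model_VGG16 = VGG16(include_top=True, weights='imagenet')
model_VGG16.summary()
```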

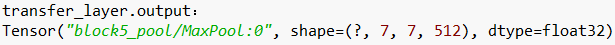
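The parameter counts printed by `model_VGG16.summary()` can be checked by hand: a Conv2D layer with a k×k kernel has (k·k·C_in + 1)·C_out weights (the +1 is the bias), a Dense layer has (n_in + 1)·n_out, and pooling/flatten layers have none. The plain-Python sketch below (function names are our own) recomputes the totals for VGG16 with its ImageNet top.

```python
# Recompute VGG16 parameter counts from the layer formulas (illustrative sketch).


def conv2d_params(k, c_in, c_out):
    # k*k*c_in weights per filter, plus one bias per filter
    return (k * k * c_in + 1) * c_out


def dense_params(n_in, n_out):
    # full weight matrix plus one bias per output unit
    return (n_in + 1) * n_out


# (input channels, output channels) for the 13 convolutional layers of VGG16
CONV_LAYERS = [(3, 64), (64, 64),
               (64, 128), (128, 128),
               (128, 256), (256, 256), (256, 256),
               (256, 512), (512, 512), (512, 512),
               (512, 512), (512, 512), (512, 512)]

conv_total = sum(conv2d_params(3, c_in, c_out) for c_in, c_out in CONV_LAYERS)
# ImageNet top: flatten (7*7*512) -> fc1 (4096) -> fc2 (4096) -> predictions (1000)
top_total = (dense_params(7 * 7 * 512, 4096)
             + dense_params(4096, 4096)
             + dense_params(4096, 1000))

print("conv base:", f"{conv_total:,}")          # conv base: 14,714,688
print("top:", f"{top_total:,}")                 # top: 123,642,856
print("total:", f"{conv_total + top_total:,}")  # total: 138,357,544
```

The conv-base figure is the part that stays in `conv_model`; the 123.6 M weights of the ImageNet top are what transfer learning discards and replaces with a much smaller head.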

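```python
# Cut VGG16 at its last pooling layer: the output, shape (None, 7, 7, 512),
# is the feature map the new classifier will be trained on.
from tensorflow.keras.models import Model

transfer_layer = model_VGG16.get_layer('block5_pool')
print('transfer_layer.output:', transfer_layer.output)

conv_model = Model(inputs=model_VGG16.input,
                   outputs=transfer_layer.output)
```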

```python
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense, Dropout, Flatten

# num_classes is defined earlier in the full script (not shown in this excerpt).
VGG16_TL_model = Sequential()  # Start a new Keras Sequential model.
VGG16_TL_model.add(conv_model)  # Add the convolutional part of the VGG16 model from above.
VGG16_TL_model.add(Flatten())  # Flatten the VGG16 output, because it comes from a convolutional layer.
# Add a dense (aka fully connected) layer that combines the features VGG16 has recognized in the image.
VGG16_TL_model.add(Dense(1024, activation='relu'))
# Add a dropout layer, which may reduce overfitting and improve generalization to unseen data such as the test set.
VGG16_TL_model.add(Dropout(0.5))
VGG16_TL_model.add(Dense(num_classes, activation='softmax'))  # Final layer for the actual classification.
```

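```python
def print_layer_trainable():
    # Minimal helper consistent with the printed output (the post does not show
    # its own definition): prints "<trainable>: <layer name>" for conv_model.
    for layer in conv_model.layers:
        print('{0}: {1}'.format(layer.trainable, layer.name))


print_layer_trainable()  # all True before freezing

# Freeze the convolutional base so only the new head is trained. Keras applies
# changes to `trainable` when the model is compiled, so do this before compile().
conv_model.trainable = False
for layer in conv_model.layers:
    layer.trainable = False

print_layer_trainable()  # all False after freezing
```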
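After freezing, only the new head should be updated by the optimizer. A quick arithmetic check in plain Python, assuming `num_classes = 3`: the frozen base keeps the 14,714,688 weights of VGG16's 13 convolutional layers, while the head holds (25088 + 1)·1024 weights in the hidden Dense layer plus (1024 + 1)·num_classes in the classifier. Comparing these figures with `VGG16_TL_model.summary()` after compiling is one way to confirm the freeze took effect; the function names are hypothetical.

```python
# Split the transfer model's parameters into frozen and trainable parts
# (illustrative arithmetic; num_classes=3 is an assumption).

VGG16_CONV_BASE_PARAMS = 14_714_688  # all 13 conv layers up to block5_pool


def head_params(flat_features=7 * 7 * 512, hidden=1024, num_classes=3):
    dense = (flat_features + 1) * hidden     # Dense(1024, activation='relu')
    classifier = (hidden + 1) * num_classes  # Dense(num_classes, activation='softmax')
    return dense + classifier                # Flatten and Dropout add no weights


def trainable_split(num_classes=3):
    trainable = head_params(num_classes=num_classes)
    non_trainable = VGG16_CONV_BASE_PARAMS  # frozen via conv_model.trainable = False
    return trainable, non_trainable


if __name__ == "__main__":
    t, nt = trainable_split()
    print(f"trainable: {t:,}  non-trainable: {nt:,}")
```

Even with the base frozen, roughly 25.7 M weights remain trainable, almost all of them in the `Dense(1024)` layer fed by the 25,088 flattened features.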

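```python
# `optimizer` is defined earlier in the full script (not shown in this excerpt).
loss = 'categorical_crossentropy'  # one-hot targets with a softmax output layer
metrics = ['categorical_accuracy']

VGG16_TL_model.compile(optimizer=optimizer, loss=loss, metrics=metrics)
```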
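`categorical_crossentropy` with a softmax output scores the negative log of the probability assigned to the true class; the targets are one-hot vectors, which is what Keras' `flow_from_directory` yields with its default `class_mode='categorical'` (assuming the generators are built that way). The stdlib-only sketch below is not Keras' own implementation; it shows both pieces and the useful reference point that uniform guessing over K classes gives a loss of ln K.

```python
import math


def softmax(logits):
    # subtract the max logit for numerical stability before exponentiating
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_crossentropy(y_true, y_pred, eps=1e-7):
    # y_true is one-hot; clip predictions so log(0) never occurs
    return -sum(t * math.log(min(max(p, eps), 1.0 - eps))
                for t, p in zip(y_true, y_pred))


def categorical_accuracy(y_true, y_pred):
    # 1.0 when the most probable class matches the one-hot target, else 0.0
    return float(y_true.index(max(y_true)) == y_pred.index(max(y_pred)))


if __name__ == "__main__":
    uniform = softmax([0.0, 0.0, 0.0])                      # equal logits -> equal probabilities
    print(categorical_crossentropy([0, 1, 0], uniform))     # about ln(3) = 1.0986
    print(categorical_accuracy([0, 1, 0], softmax([0.1, 2.0, -1.0])))  # 1.0
```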

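```python
epochs = 20
steps_per_epoch = 100

# generator_train, generator_test, steps_test and class_weight come from the
# data-preparation part of the script (not shown). fit_generator is the TF 1.x
# API used here; in newer TF 2.x releases it is deprecated and model.fit
# accepts generators directly.
history = VGG16_TL_model.fit_generator(generator=generator_train,
                                       epochs=epochs,
                                       steps_per_epoch=steps_per_epoch,
                                       class_weight=class_weight,
                                       validation_data=generator_test,
                                       validation_steps=steps_test)
```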
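`class_weight` compensates for uneven class sizes. The logged weights `[1.39839034 1.14876033 0.70701933]` are consistent with the common "balanced" heuristic w_c = N / (K · n_c), as used by scikit-learn's `compute_class_weight(class_weight='balanced', ...)`. Inverting it with N = 4170 and K = 3 gives per-class counts of 994, 1210 and 1966 images (summing to 4170); these counts are our back-calculation, not numbers stated in the post. The sketch recomputes the weights and returns them as the `{class_index: weight}` dict that Keras documents for `class_weight`.

```python
from collections import Counter


def balanced_class_weight(labels):
    """'Balanced' weights: n_samples / (n_classes * count_of_class)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    # dict keyed by integer class index, the form Keras' class_weight expects
    return {c: n_samples / (n_classes * counts[c]) for c in sorted(counts)}


if __name__ == "__main__":
    # Per-class counts back-computed from the logged weights (assumption, see text).
    labels = [0] * 994 + [1] * 1210 + [2] * 1966
    for c, w in balanced_class_weight(labels).items():
        print(c, round(w, 8))
```

Rare classes get weights above 1 and the most common class a weight below 1, so each class contributes roughly equally to the loss.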

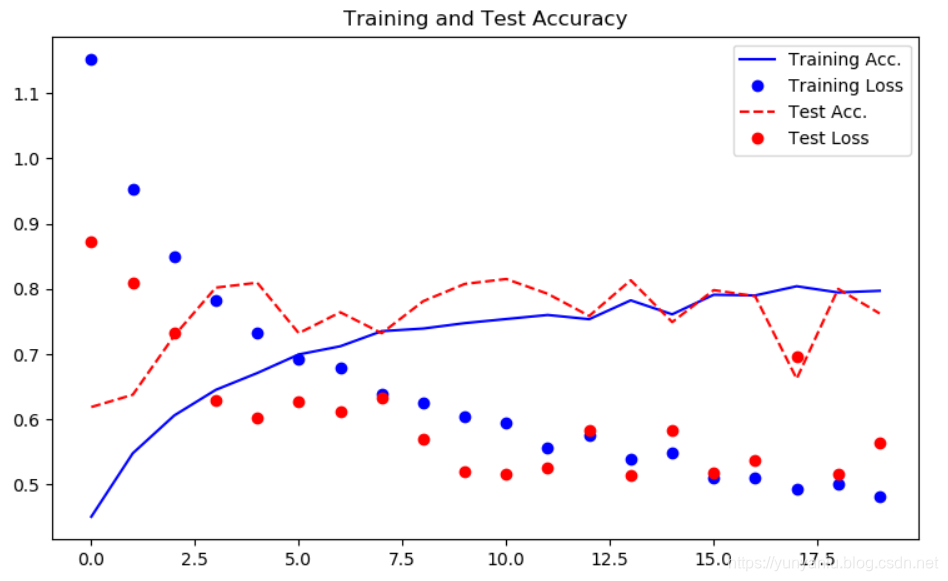

```python
plot_training_history(history)  # helper defined elsewhere in the script (not shown)
```
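`plot_training_history` is not defined in this excerpt. Whatever it draws, its input is `history.history`, the dict Keras fills with one value per epoch; with `metrics=['categorical_accuracy']` and validation data, the keys are `loss`, `categorical_accuracy`, `val_loss` and `val_categorical_accuracy`, matching the training log. A text-only stand-in (the names `summarize_history` and `format_history` are hypothetical) that lists each epoch and picks the one with the best validation accuracy:

```python
def summarize_history(hist, monitor="val_categorical_accuracy"):
    """Return (best_epoch, best_value) for `monitor`, 1-based like the Keras log."""
    values = hist[monitor]
    best_index = max(range(len(values)), key=values.__getitem__)
    return best_index + 1, values[best_index]


def format_history(hist):
    # One line per epoch with every recorded metric, keys in alphabetical order.
    keys = sorted(hist)
    n_epochs = len(hist[keys[0]])
    lines = []
    for i in range(n_epochs):
        metrics = "  ".join(f"{k}={hist[k][i]:.4f}" for k in keys)
        lines.append(f"epoch {i + 1}: {metrics}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Toy values for illustration only; pass history.history in practice.
    toy = {"loss": [1.0, 0.8, 0.7],
           "categorical_accuracy": [0.5, 0.6, 0.7],
           "val_loss": [0.9, 0.6, 0.8],
           "val_categorical_accuracy": [0.5, 0.7, 0.6]}
    print(format_history(toy))
    print(summarize_history(toy))
```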

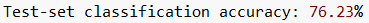

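```python
# Evaluate on the test set; index 1 of the result is the categorical_accuracy metric
# (index 0 is the loss).
VGG16_TL_model_result = VGG16_TL_model.evaluate_generator(generator_test, steps=steps_test)
print("Test-set classification accuracy: {0:.2%}".format(VGG16_TL_model_result[1]))
```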
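`steps_test` appears as `26.5` in the output log, i.e. the 530 test images divided by the batch size; 530 / 26.5 implies a batch size of 20, although the batch size itself is not shown in the excerpt. Newer Keras versions expect an integer step count, and rounding up covers the final partial batch. The same arithmetic shows how many training images 20 epochs of 100 steps draw. A small sketch with hypothetical helper names:

```python
import math


def steps_for(n_images, batch_size):
    # Round up so the last, partial batch is included.
    return math.ceil(n_images / batch_size)


def images_seen(epochs, steps_per_epoch, batch_size):
    # Images drawn from the training generator over the whole run.
    return epochs * steps_per_epoch * batch_size


if __name__ == "__main__":
    batch_size = 20                     # inferred from 530 / 26.5 (assumption)
    print(530 / batch_size)             # 26.5, the value in the log
    print(steps_for(530, batch_size))   # 27
    seen = images_seen(20, 100, batch_size)
    print(seen, round(seen / 4170, 2))  # 40000 9.59
```

Under that assumption, the run covers each of the 4170 training images about 9.6 times.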

More output

TensorFlow version: 1.10.0

Data has apparently already been downloaded and unpacked.

maybe_download_and_extract() finished!

data_dir in load(): data/knifey-spoony/

Creating dataset from the files in: data/knifey-spoony/

- Data loaded from cache-file: data/knifey-spoony/knifey-spoony.pkl

load() finished!

self.in_dir in get_paths(): data/knifey-spoony

- Copied training-set to: data/knifey-spoony/train/

self.in_dir in get_paths(): data/knifey-spoony

- Copied test-set to: data/knifey-spoony/test/

data/knifey-spoony/train/ data/knifey-spoony/test/

……


553467904/553467096 [==============================] - 386s 1us/step

2019-08-14 11:44:51.782638: I T:\src\github\tensorflow\tensorflow\core\platform\cpu_feature_guard.cc:141] Your CPU supports instructions that this TensorFlow binary was not compiled to use: AVX2

2019-08-14 11:44:53.212742: W T:\src\github\tensorflow\tensorflow\core\framework\allocator.cc:108] Allocation of 411041792 exceeds 10% of system memory.

2019-08-14 11:44:54.302588: W T:\src\github\tensorflow\tensorflow\core\framework\allocator.cc:108] Allocation of 411041792 exceeds 10% of system memory.

2019-08-14 11:44:54.310978: W T:\src\github\tensorflow\tensorflow\core\framework\allocator.cc:108] Allocation of 411041792 exceeds 10% of system memory.

(224, 224)

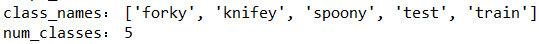

Found 4170 images belonging to 5 classes.

Found 530 images belonging to 5 classes.

26.5

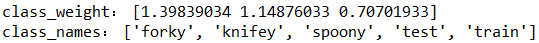

['forky', 'knifey', 'spoony', 'test', 'train']

5

class_weight: [1.39839034 1.14876033 0.70701933]

['forky', 'knifey', 'spoony', 'test', 'train']

Downloading data from https://s3.amazonaws.com/deep-learning-models/image-models/imagenet_class_index.json


40960/35363 [==================================] - 0s 6us/step

79.02% : macaw

6.61% : bubble

3.64% : vine_snake

1.90% : pinwheel

1.22% : knot

50.31% : shower_curtain

17.08% : handkerchief

12.75% : mosquito_net

2.87% : window_shade

1.32% : toilet_tissue

45.08% : shower_curtain

21.84% : mosquito_net

11.55% : handkerchief

2.02% : window_shade

0.91% : Windsor_tie

26.75% : spoonbill

7.06% : black_stork

7.04% : wooden_spoon

4.21% : limpkin

3.72% : paddle

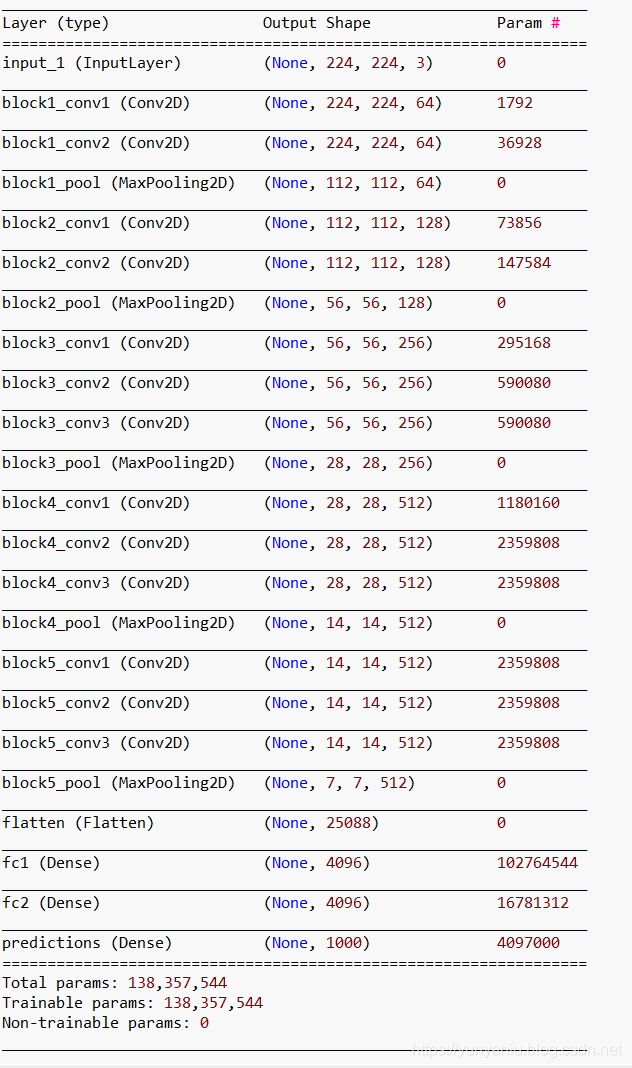

| Layer (type) | Output Shape | Param # |
|---|---|---|
| input_1 (InputLayer) | (None, 224, 224, 3) | 0 |
| block1_conv1 (Conv2D) | (None, 224, 224, 64) | 1792 |
| block1_conv2 (Conv2D) | (None, 224, 224, 64) | 36928 |
| block1_pool (MaxPooling2D) | (None, 112, 112, 64) | 0 |
| block2_conv1 (Conv2D) | (None, 112, 112, 128) | 73856 |
| block2_conv2 (Conv2D) | (None, 112, 112, 128) | 147584 |
| block2_pool (MaxPooling2D) | (None, 56, 56, 128) | 0 |
| block3_conv1 (Conv2D) | (None, 56, 56, 256) | 295168 |
| block3_conv2 (Conv2D) | (None, 56, 56, 256) | 590080 |
| block3_conv3 (Conv2D) | (None, 56, 56, 256) | 590080 |
| block3_pool (MaxPooling2D) | (None, 28, 28, 256) | 0 |
| block4_conv1 (Conv2D) | (None, 28, 28, 512) | 1180160 |
| block4_conv2 (Conv2D) | (None, 28, 28, 512) | 2359808 |
| block4_conv3 (Conv2D) | (None, 28, 28, 512) | 2359808 |
| block4_pool (MaxPooling2D) | (None, 14, 14, 512) | 0 |
| block5_conv1 (Conv2D) | (None, 14, 14, 512) | 2359808 |
| block5_conv2 (Conv2D) | (None, 14, 14, 512) | 2359808 |
| block5_conv3 (Conv2D) | (None, 14, 14, 512) | 2359808 |
| block5_pool (MaxPooling2D) | (None, 7, 7, 512) | 0 |
| flatten (Flatten) | (None, 25088) | 0 |
| fc1 (Dense) | (None, 4096) | 102764544 |
| fc2 (Dense) | (None, 4096) | 16781312 |
| predictions (Dense) | (None, 1000) | 4097000 |

Total params: 138,357,544

Trainable params: 138,357,544

Non-trainable params: 0

Tensor("block5_pool/MaxPool:0", shape=(?, 7, 7, 512), dtype=float32)

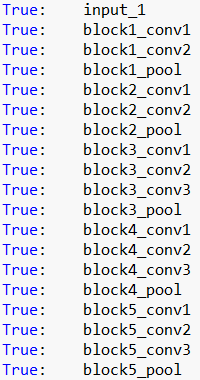

True: input_1

True: block1_conv1

True: block1_conv2

True: block1_pool

True: block2_conv1

True: block2_conv2

True: block2_pool

True: block3_conv1

True: block3_conv2

True: block3_conv3

True: block3_pool

True: block4_conv1

True: block4_conv2

True: block4_conv3

True: block4_pool

True: block5_conv1

True: block5_conv2

True: block5_conv3

True: block5_pool

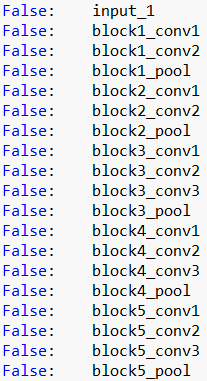

False: input_1

False: block1_conv1

False: block1_conv2

False: block1_pool

False: block2_conv1

False: block2_conv2

False: block2_pool

False: block3_conv1

False: block3_conv2

False: block3_conv3

False: block3_pool

False: block4_conv1

False: block4_conv2

False: block4_conv3

False: block4_pool

False: block5_conv1

False: block5_conv2

False: block5_conv3

False: block5_pool

--------------

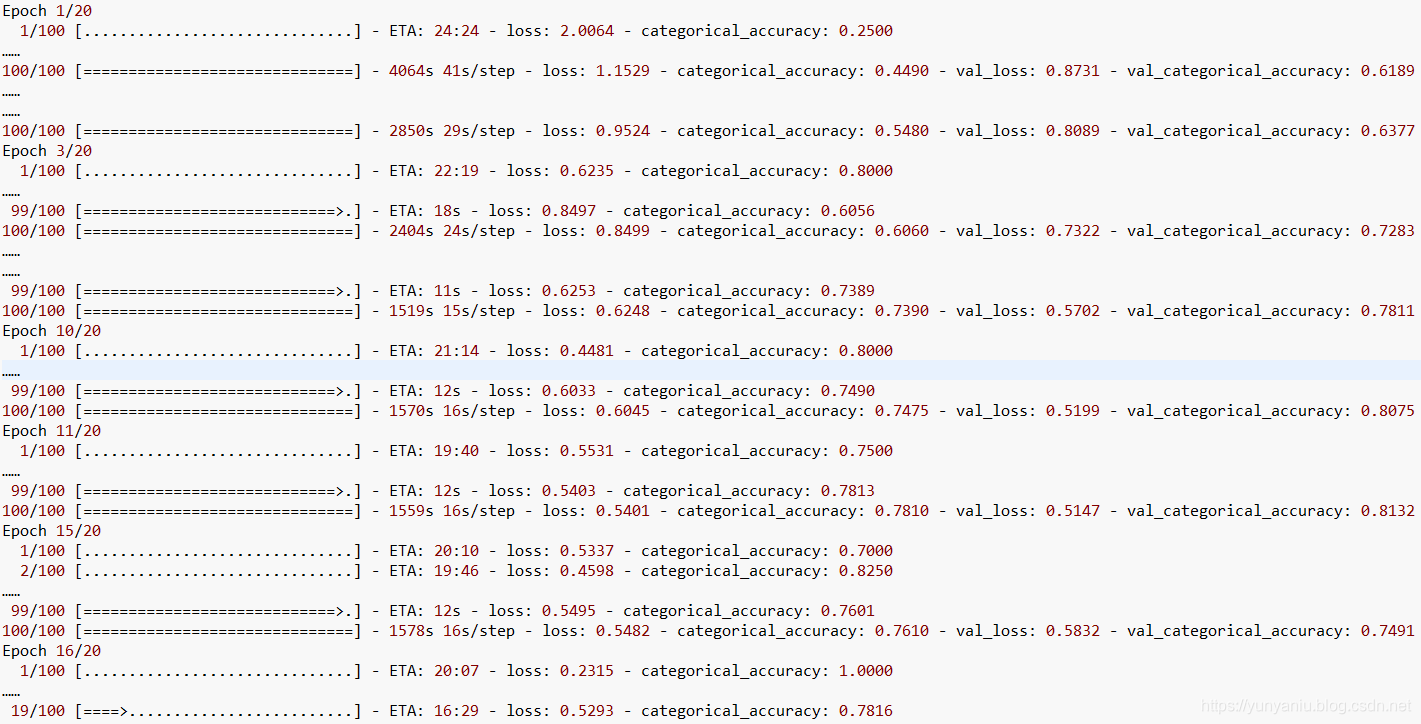

Epoch 1/20

1/100 [..............................] - ETA: 24:24 - loss: 2.0064 - categorical_accuracy: 0.2500

……

100/100 [==============================] - 4064s 41s/step - loss: 1.1529 - categorical_accuracy: 0.4490 - val_loss: 0.8731 - val_categorical_accuracy: 0.6189

……

……

100/100 [==============================] - 2850s 29s/step - loss: 0.9524 - categorical_accuracy: 0.5480 - val_loss: 0.8089 - val_categorical_accuracy: 0.6377

Epoch 3/20

1/100 [..............................] - ETA: 22:19 - loss: 0.6235 - categorical_accuracy: 0.8000

……

99/100 [============================>.] - ETA: 18s - loss: 0.8497 - categorical_accuracy: 0.6056

100/100 [==============================] - 2404s 24s/step - loss: 0.8499 - categorical_accuracy: 0.6060 - val_loss: 0.7322 - val_categorical_accuracy: 0.7283

……

……

99/100 [============================>.] - ETA: 11s - loss: 0.6253 - categorical_accuracy: 0.7389

100/100 [==============================] - 1519s 15s/step - loss: 0.6248 - categorical_accuracy: 0.7390 - val_loss: 0.5702 - val_categorical_accuracy: 0.7811

Epoch 10/20

1/100 [..............................] - ETA: 21:14 - loss: 0.4481 - categorical_accuracy: 0.8000

……

99/100 [============================>.] - ETA: 12s - loss: 0.6033 - categorical_accuracy: 0.7490

100/100 [==============================] - 1570s 16s/step - loss: 0.6045 - categorical_accuracy: 0.7475 - val_loss: 0.5199 - val_categorical_accuracy: 0.8075

Epoch 11/20

1/100 [..............................] - ETA: 19:40 - loss: 0.5531 - categorical_accuracy: 0.7500

……

99/100 [============================>.] - ETA: 12s - loss: 0.5403 - categorical_accuracy: 0.7813

100/100 [==============================] - 1559s 16s/step - loss: 0.5401 - categorical_accuracy: 0.7810 - val_loss: 0.5147 - val_categorical_accuracy: 0.8132

Epoch 15/20

1/100 [..............................] - ETA: 20:10 - loss: 0.5337 - categorical_accuracy: 0.7000

2/100 [..............................] - ETA: 19:46 - loss: 0.4598 - categorical_accuracy: 0.8250

……

99/100 [============================>.] - ETA: 12s - loss: 0.5495 - categorical_accuracy: 0.7601

100/100 [==============================] - 1578s 16s/step - loss: 0.5482 - categorical_accuracy: 0.7610 - val_loss: 0.5832 - val_categorical_accuracy: 0.7491

Epoch 16/20

1/100 [..............................] - ETA: 20:07 - loss: 0.2315 - categorical_accuracy: 1.0000

……

19/100 [====>.........................] - ETA: 16:29 - loss: 0.5293 - categorical_accuracy: 0.7816
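In the log, both generators report 5 classes and the class list is `['forky', 'knifey', 'spoony', 'test', 'train']`, while `class_weight` has only 3 entries. Keras' `flow_from_directory` treats every subdirectory of the directory it is given as a class, sorted alphabetically, so stray folders such as `test` and `train` become extra (possibly empty) classes; its `classes` argument fixes the list explicitly. The stdlib sketch below mimics that indexing rule (`infer_class_indices` is our own name, not a Keras function).

```python
import os
import tempfile


def infer_class_indices(directory, classes=None):
    """Map class names to indices like flow_from_directory:
    alphabetically sorted subdirectory names, unless `classes` is given."""
    if classes is None:
        classes = [d for d in sorted(os.listdir(directory))
                   if os.path.isdir(os.path.join(directory, d))]
    return {name: i for i, name in enumerate(classes)}


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        # Hypothetical layout: three real class folders plus two stray folders.
        for name in ["spoony", "forky", "train", "knifey", "test"]:
            os.makedirs(os.path.join(root, name))
        print(infer_class_indices(root))
        # {'forky': 0, 'knifey': 1, 'spoony': 2, 'test': 3, 'train': 4}
        print(infer_class_indices(root, classes=["forky", "knifey", "spoony"]))
```

Passing the three class names explicitly keeps `num_classes`, the softmax layer and `class_weight` consistent at 3.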
