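Deploying a Kubernetes 1.12.0 HA Cluster with kubeadm (IPVS)

I. Overview

This article walks through the build process and the points to watch out for. If you plan to run this in production, be sure to test and load-test it thoroughly first.

1. Base environment

OS: Ubuntu 16.04 LTS, 5 servers

docker-ce: 17.06.2

kubeadm, kubelet, kubectl: 1.12.0

keepalived, haproxy

etcd: 3.2.22

2. Pre-installation preparation

1) Set up passwordless SSH login between all k8s nodes.

2) Disable swap on every node with swapoff -a; otherwise kubelet fails to start. Also set vm.swappiness to 0 in /etc/sysctl.conf and reload:

    vim /etc/sysctl.conf
    vm.swappiness = 0
    sysctl -p

3) Add the hostname and IP of every node to /etc/hosts.

etcd runs as a separately deployed cluster.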
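As a quick check for step 2), the sketch below reports whether swap is still active by reading a file in /proc/swaps format. The helper name swap_enabled is made up for illustration, and it assumes the usual layout of one header line followed by one line per active swap device.

```bash
# swap_enabled [FILE]: exit 0 if FILE (default /proc/swaps) lists at least
# one active swap device, exit 1 otherwise. Line 1 of the file is a header.
# Hypothetical helper for illustration; it is not part of kubeadm.
swap_enabled() {
    awk 'NR > 1 && NF > 0 { n++ } END { if (n > 0) exit 0; exit 1 }' "${1:-/proc/swaps}"
}
```

Running `swap_enabled && echo "swap is still on"` on a node before starting kubelet shows whether swapoff -a took effect.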

II. Deployment Preparation

1. Install Docker:

    apt-get update
    apt-get install \
        apt-transport-https \
        ca-certificates \
        curl \
        software-properties-common
    curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo apt-key add -
    apt-key fingerprint 0EBFCD88
    add-apt-repository \
        "deb [arch=amd64] https://download.docker.com/linux/ubuntu \
        $(lsb_release -cs) \
        stable"
    apt-get update
    apt policy docker-ce

Find the version you need, then install it:

    apt install -y docker-ce=17.06.2~ce-0~ubuntu

Adjust a few Docker settings:

    vim /lib/systemd/system/docker.service

    ExecStart=/usr/bin/dockerd -H fd:// --graph=/data --storage-driver=overlay2

Configure a proxy for Docker; without it, images cannot be pulled directly. Create the drop-in directory and put the two files shown below in it:

    mkdir /etc/systemd/system/docker.service.d

cat http-proxy.conf

[Service]

Environment="HTTP_PROXY=http://10.42.3.110:8118" "NO_PROXY=localhost,127.0.0.1,0.0.0.0,10.42.73.110,10.10.25.49,10.2.140.154,10.42.104.113,10.2.177.142,10.2.68.77,172.11.0.0,172.10.0.0,172.11.0.0/16,172.10.0.0/16,10.,172.,.evo.get.com,.kube.hp.com,charts.gitlab.io,.mirror.ucloud.cn"

cat https-proxy.conf

[Service]

Environment="HTTPS_PROXY=http://10.42.3.110:8118" "NO_PROXY=localhost,127.0.0.1,0.0.0.0,10.42.73.110,10.10.25.49,10.2.140.154,10.42.104.113,10.2.177.142,10.2.68.77,172.11.0.0,172.10.0.0,172.11.0.0/16,172.10.0.0/16,10.,172.,.evo.get.com,.kube.hp.com,charts.gitlab.io,.mirror.ucloud.cn"

systemctl daemon-reload

systemctl restart docker

iptables settings: Docker 17.06.2 sets the default policy of the FORWARD chain to DROP, which interferes with pod-to-pod traffic, so change it to ACCEPT:

    iptables -P FORWARD ACCEPT
    iptables-save
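To verify the change, the policy can be read back from the output of `iptables -S FORWARD`, whose policy line has the form `-P FORWARD <policy>`. The sketch below only parses that text; forward_policy is a hypothetical helper name. Keep in mind that iptables-save just prints the current rules to standard output, so making the policy survive a reboot needs a separate step on your system.

```bash
# forward_policy: read `iptables -S FORWARD` output on stdin and print the
# policy of the FORWARD chain (for example ACCEPT or DROP).
# Hypothetical helper for illustration.
forward_policy() {
    awk '$1 == "-P" && $2 == "FORWARD" { print $3; exit }'
}
```

Typical use on a node would be `iptables -S FORWARD | forward_policy`, which should print ACCEPT after the change above.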

2. Set up the CA certificate

1) Install the cfssl certificate management tools

    mkdir -p /opt/bin
    wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64
    chmod +x cfssl_linux-amd64
    mv cfssl_linux-amd64 /opt/bin/cfssl
    wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64
    chmod +x cfssljson_linux-amd64
    mv cfssljson_linux-amd64 /opt/bin/cfssljson
    wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64
    chmod +x cfssl-certinfo_linux-amd64
    mv cfssl-certinfo_linux-amd64 /opt/bin/cfssl-certinfo
    echo 'export PATH=/opt/bin:$PATH' > /etc/profile.d/k8s.sh
    source /etc/profile.d/k8s.sh

2) Create the CA configuration files

    mkdir ssl; cd ssl
    cfssl print-defaults config > config.json
    cfssl print-defaults csr > csr.json

Based on the generated files, create ca-config.json with the expiry set to 87600h:

    # cat ca-config.json
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "kubernetes": {
            "usages": [
              "signing",
              "key encipherment",
              "server auth",
              "client auth"
            ],
            "expiry": "87600h"
          }
        }
      }
    }

Create the CA certificate signing request:

    # cat ca-csr.json
    {
      "CN": "kubernetes",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "GD",
          "L": "SZ",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }

Generate the certificate and private key:

    cfssl gencert -initca ca-csr.json | cfssljson -bare ca

Copy the certificates to the other nodes:

    mkdir -p /etc/kubernetes/ssl
    cp ~/ssl/ca* /etc/kubernetes/ssl
    scp -r /etc/kubernetes/ km12-02:/etc
    scp -r /etc/kubernetes/ km12-03:/etc

3. Download etcd. Newer Kubernetes releases require etcd 3.2.18 or later; 3.2.22 is used here.

    wget https://github.com/coreos/etcd/releases/download/v3.2.22/etcd-v3.2.22-linux-amd64.tar.gz
    tar xf etcd-v3.2.22-linux-amd64.tar.gz
    cd etcd-v3.2.22-linux-amd64
    cp etcd etcdctl /opt/bin
    source /etc/profile.d/k8s.sh

Create the etcd certificate.

Create the signing request file:

    # cat etcd-csr.json
    {
      "CN": "etcd",
      "hosts": [
        "127.0.0.1",
        "10.42.13.230",
        "10.42.43.147",
        "10.42.150.212"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "GD",
          "L": "SZ",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }

Generate the certificate and private key:

    cfssl gencert -ca=/etc/kubernetes/ssl/ca.pem \
        -ca-key=/etc/kubernetes/ssl/ca-key.pem \
        -config=/etc/kubernetes/ssl/ca-config.json \
        -profile=kubernetes etcd-csr.json | cfssljson -bare etcd
    mkdir -p /etc/etcd/ssl
    cp etcd*.pem /etc/etcd/ssl
    scp -r /etc/etcd/ km12-02:/etc
    scp -r /etc/etcd/ km12-03:/etc

Deploy the etcd cluster.

Create the systemd unit file for etcd:

    mkdir -p /var/lib/etcd
    # cat /etc/systemd/system/etcd.service
    [Unit]
    Description=Etcd Server
    After=network.target
    After=network-online.target
    Wants=network-online.target
    Documentation=https://github.com/coreos

    [Service]
    Type=notify
    WorkingDirectory=/var/lib/etcd/
    EnvironmentFile=-/etc/etcd/etcd.conf
    ExecStart=/opt/bin/etcd \
      --name=etcd-host0 \
      --cert-file=/etc/etcd/ssl/etcd.pem \
      --key-file=/etc/etcd/ssl/etcd-key.pem \
      --peer-cert-file=/etc/etcd/ssl/etcd.pem \
      --peer-key-file=/etc/etcd/ssl/etcd-key.pem \
      --trusted-ca-file=/etc/kubernetes/ssl/ca.pem \
      --peer-trusted-ca-file=/etc/kubernetes/ssl/ca.pem \
      --initial-advertise-peer-urls=https://10.42.13.230:2380 \
      --listen-peer-urls=https://10.42.13.230:2380 \
      --listen-client-urls=https://10.42.13.230:2379,http://127.0.0.1:2379 \
      --advertise-client-urls=https://10.42.13.230:2379 \
      --initial-cluster-token=etcd-cluster-1 \
      --initial-cluster=etcd-host0=https://10.42.13.230:2380,etcd-host1=https://10.42.43.147:2380,etcd-host2=https://10.42.150.212:2380 \
      --initial-cluster-state=new \
      --data-dir=/var/lib/etcd
    Restart=on-failure
    RestartSec=5
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target

Copy the unit file and the extracted etcd binaries to the other master nodes:

    tar xf etcd-v3.2.22-linux-amd64.tar.gz
    cd etcd-v3.2.22-linux-amd64
    cp etcd etcdctl /opt/bin
    scp -r /opt/bin km12-02:/opt
    scp -r /opt/bin km12-03:/opt
    scp /etc/systemd/system/etcd.service km12-02:/etc/systemd/system/
    scp /etc/systemd/system/etcd.service km12-03:/etc/systemd/system/
    source /etc/profile.d/k8s.sh

After copying, change the following flags on each node to that node's own name and address:

    --name=etcd-host0
    --initial-advertise-peer-urls=https://10.42.13.230:2380
    --listen-peer-urls=https://10.42.13.230:2380
    --listen-client-urls=https://10.42.13.230:2379,http://127.0.0.1:2379
    --advertise-client-urls=https://10.42.13.230:2379
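The five node-specific flags differ only in the member name and the node IP, so they can be generated rather than edited by hand. The following is a minimal sketch under that assumption; etcd_node_flags is a hypothetical helper, and the ports 2380 and 2379 are the ones used in the unit file above.

```bash
# etcd_node_flags NAME IP: print the etcd flags that must differ per node.
# Hypothetical helper for illustration; ports follow the unit file above.
etcd_node_flags() {
    printf '%s\n' \
        "--name=$1" \
        "--initial-advertise-peer-urls=https://$2:2380" \
        "--listen-peer-urls=https://$2:2380" \
        "--listen-client-urls=https://$2:2379,http://127.0.0.1:2379" \
        "--advertise-client-urls=https://$2:2379"
}
```

For the second member, `etcd_node_flags etcd-host1 10.42.43.147` prints the values to paste into that node's etcd.service.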

Start etcd:

    systemctl enable etcd
    systemctl start etcd
    systemctl status etcd

Check the cluster status:

    etcdctl --key-file /etc/etcd/ssl/etcd-key.pem --cert-file /etc/etcd/ssl/etcd.pem --ca-file /etc/kubernetes/ssl/ca.pem member list
    etcdctl --key-file /etc/etcd/ssl/etcd-key.pem --cert-file /etc/etcd/ssl/etcd.pem --ca-file /etc/kubernetes/ssl/ca.pem cluster-health

To make etcdctl more convenient, define an alias. Kubernetes stores its data through the etcd v3 API, whereas etcdctl in this etcd release defaults to the v2 API, so the Kubernetes data is not visible from etcdctl until you export the global variable ETCDCTL_API=3.

Add the following on all master nodes:

    vim ~/.bashrc

    #etcd
    host1='10.42.13.230:2379'
    host2='10.42.43.147:2379'
    host3='10.42.150.212:2379'
    endpoints=$host1,$host2,$host3
    alias etcdctl='etcdctl --endpoints=$endpoints --key /etc/etcd/ssl/etcd-key.pem --cert /etc/etcd/ssl/etcd.pem --cacert /etc/kubernetes/ssl/ca.pem'
    export ETCDCTL_API=3

    source ~/.bashrc
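The --endpoints value in the alias is just the client addresses joined with commas. If the member list changes, a small helper can rebuild it; the sketch below assumes every member listens for clients on port 2379, and join_endpoints is a made-up name.

```bash
# join_endpoints IP...: print "IP1:2379,IP2:2379,..." for use with
# etcdctl --endpoints. Hypothetical helper; port 2379 is an assumption
# taken from the configuration above.
join_endpoints() {
    out=""
    for host in "$@"; do
        out="${out:+$out,}${host}:2379"
    done
    printf '%s\n' "$out"
}
```

For example, `endpoints=$(join_endpoints 10.42.13.230 10.42.43.147 10.42.150.212)` yields the same string as the three host variables in ~/.bashrc.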

4. Deploy keepalived and haproxy on the other two servers

    apt install -y keepalived haproxy

1) Create the keepalived configuration file

master:

    cat /etc/keepalived/keepalived.conf
    ! Configuration File for keepalived
    global_defs {
        notification_email {
            root@localhost                  # recipient address
        }
        notification_email_from keepalived@localhost   # sender address
        smtp_server 127.0.0.1               # mail server address
        smtp_connect_timeout 30
        router_id km12-01                   # hostname; only needs to differ per node
    }
    vrrp_instance VI_1 {
        state MASTER                        # BACKUP on the other node
        interface eth0                      # NIC that carries the floating IP
        virtual_router_id 6                 # must be the same on all nodes
        priority 100                        # the backup node must use a lower value
        advert_int 1                        # advertisement interval: 1 second
        authentication {
            auth_type PASS                  # pre-shared key authentication
            auth_pass 571f97b2              # the key
        }
        unicast_src_ip 10.42.13.23
        unicast_peer {
            10.42.43.14
        }
        virtual_ipaddress {
            10.42.79.210/16                 # VIP address
        }
    }

slave1:

    cat /etc/keepalived/keepalived.conf
    ! Configuration File for keepalived
    global_defs {
        notification_email {
            root@localhost                  # recipient address
        }
        notification_email_from keepalived@localhost   # sender address
        smtp_server 127.0.0.1               # mail server address
        smtp_connect_timeout 30
        router_id km12-02                   # hostname; only needs to differ per node
    }
    vrrp_instance VI_1 {
        state BACKUP                        # MASTER on the other node
        interface eth0                      # NIC that carries the floating IP
        virtual_router_id 6                 # must be the same on all nodes
        priority 80                         # must be lower than on the master node
        advert_int 1                        # advertisement interval: 1 second
        authentication {
            auth_type PASS                  # pre-shared key authentication
            auth_pass 571f97b2              # the key
        }
        unicast_src_ip 10.42.43.14
        unicast_peer {
            10.42.13.23
        }
        virtual_ipaddress {
            10.42.79.210/16                 # the floating IP that moves to this node
        }
    }

Start keepalived:

    systemctl enable keepalived
    systemctl start keepalived

keepalived is configured in unicast mode here.

2) haproxy configuration file

    cat /etc/haproxy/haproxy.cfg
    global
        log 127.0.0.1 local2
        chroot /var/lib/haproxy
        pidfile /var/run/haproxy.pid
        maxconn 4000
        user haproxy
        group haproxy
        daemon

    defaults
        mode tcp
        log global
        retries 3
        timeout connect 10s
        timeout client 1m
        timeout server 1m

    frontend kubernetes
        bind *:6443
        mode tcp
        default_backend kubernetes-master

    backend kubernetes-master
        balance roundrobin
        server master 10.42.13.230:6443 check maxconn 2000
        server master2 10.42.43.147:6443 check maxconn 2000

The configuration file is identical on both servers.
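Each API server behind the VIP is one `server` line in the backend section, so adding another master means adding another line. The sketch below generates those lines from a list of master IPs; haproxy_backend_servers is a hypothetical helper, and the names master1, master2, ... are illustrative (the configuration above uses master and master2).

```bash
# haproxy_backend_servers IP...: print one haproxy "server" line per
# master, numbered from 1. Hypothetical helper for illustration; port
# 6443 and "check maxconn 2000" are copied from the configuration above.
haproxy_backend_servers() {
    i=1
    for ip in "$@"; do
        printf '    server master%d %s:6443 check maxconn 2000\n' "$i" "$ip"
        i=$((i + 1))
    done
}
```

The output of `haproxy_backend_servers 10.42.13.230 10.42.43.147` can be pasted under `backend kubernetes-master` on both load balancers.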

Start haproxy:

    systemctl enable haproxy
    systemctl start haproxy

5. Install specific versions of kubeadm, kubectl and kubelet

The servers cannot reach the official package repository directly, so downloads fail. Switch to a mirror:

    cat <<EOF > /etc/apt/sources.list.d/kubernetes.list
    deb http://mirrors.ustc.edu.cn/kubernetes/apt kubernetes-xenial main
    EOF

Install:

    apt-get update
    apt policy kubeadm
    apt-get install kubeadm=1.12.0-00 kubectl=1.12.0-00 kubelet=1.12.0-00

Uninstall a package:

    apt-get --purge remove <package-name>

If you do not remember the full package name, look it up with:

    dpkg --get-selections | grep kube

Create kubeadm-config.yaml for initialization:

    # cat kubeadm-config.yaml
    apiVersion: kubeadm.k8s.io/v1alpha3
    controlPlaneEndpoint: 10.42.9.210:6443
    kind: ClusterConfiguration
    kubernetesVersion: v1.12.0
    networking:
      podSubnet: 10.244.0.0/16
      serviceSubnet: 10.96.0.0/16
    apiServerCertSANs:
    - 10.42.13.230
    - 10.42.43.147
    - 10.42.150.212
    - km12-01
    - km12-02
    - km12-03
    - 127.0.0.1
    - localhost
    - 10.42.9.210
    etcd:
      external:
        endpoints:
        - https://10.42.13.230:2379
        - https://10.42.43.147:2379
        - https://10.42.150.212:2379
        caFile: /etc/kubernetes/ssl/ca.pem
        certFile: /etc/etcd/ssl/etcd.pem
        keyFile: /etc/etcd/ssl/etcd-key.pem
        dataDir: /var/lib/etcd
    clusterDNS:
    - 10.96.0.2
    ---
    apiVersion: kubeproxy.config.k8s.io/v1alpha1
    kind: KubeProxyConfiguration
    mode: "ipvs"

controlPlaneEndpoint is the haproxy address, or the VIP.

Initialize:

    kubeadm init --config kubeadm-config.yaml

To switch an already-initialized cluster to ipvs, edit the kube-proxy ConfigMap as follows:

    kubectl edit configmap kube-proxy -n kube-system

    ipvs:
      excludeCIDRs: null
      minSyncPeriod: 0s
      scheduler: ""
      syncPeriod: 30s
    kind: KubeProxyConfiguration
    metricsBindAddress: 127.0.0.1:10249
    mode: "ipvs"

Then redeploy kube-proxy by deleting its pods so that they are recreated with the new configuration (the pod name will differ in your cluster):

    kubectl delete pod kube-proxy-ppsk90sd -n kube-system

Copy the generated pki directory to the other nodes:

    scp -r /etc/kubernetes/pki km12-02:/etc/kubernetes
    scp -r /etc/kubernetes/pki km12-03:/etc/kubernetes

Note: on the other servers, delete these two files from the pki directory:

    rm -rf apiserver.crt apiserver.key

Copy the initialization file and /etc/profile.d/k8s.sh to the other nodes:

    scp -r kubeadm-config.yaml km12-02:
    scp -r kubeadm-config.yaml km12-03:
    scp -r /etc/profile.d/k8s.sh km12-02:/etc/profile.d
    scp -r /etc/profile.d/k8s.sh km12-03:/etc/profile.d

Because every node carries taints, nothing can be scheduled, and coredns stays in the Pending state. Remove the taints for now so that workloads can be deployed:

    kubectl taint nodes --all node-role.kubernetes.io/master-

6. Deploy the network

There are several options: flannel+vxlan, flannel+host-gw and calico+ipip. In testing, calico+ipip gave comparatively good network performance. Two approaches are described here, flannel+host-gw and calico+ipip; calico+ipip is the recommended one.

Option 1: calico+ipip

Calico supports two datastores, kubernetes and etcd; etcd is recommended here. Calico uses ipip mode by default. To turn ipip off, set CALICO_IPV4POOL_IPIP to off, and Calico then uses BGP.

Download the files:

    wget https://docs.projectcalico.org/v3.3/getting-started/kubernetes/installation/rbac.yaml
    wget https://docs.projectcalico.org/v3.3/getting-started/kubernetes/installation/hosted/calico.yaml

etcd here is the cluster deployed earlier, so calico.yaml has to be modified. The main changes are the etcd settings and CALICO_IPV4POOL_CIDR.

    # vim calico.yaml

    kind: ConfigMap
    apiVersion: v1
    metadata:
      name: calico-config
      namespace: kube-system
    data:
      # Configure this with the location of your etcd cluster.
      etcd_endpoints: "https://10.42.13.230:2379,https://10.42.43.147:2379,https://10.42.150.212:2379"
      # If you're using TLS enabled etcd uncomment the following.
      # You must also populate the Secret below with these files.
      etcd_ca: "/calico-secrets/etcd-ca"
      etcd_cert: "/calico-secrets/etcd-cert"
      etcd_key: "/calico-secrets/etcd-key"

    apiVersion: v1
    kind: Secret
    type: Opaque
    metadata:
      name: calico-etcd-secrets
      namespace: kube-system
    data:
      # Populate the following files with etcd TLS configuration if desired, but leave blank if
      # not using TLS for etcd.
      # This self-hosted install expects three files with the following names. The values
      # should be base64 encoded strings of the entire contents of each file.
      etcd-key: <base64-encoded contents of /etc/etcd/ssl/etcd-key.pem>
      etcd-cert: <base64-encoded contents of /etc/etcd/ssl/etcd.pem>
      etcd-ca: <base64-encoded contents of /etc/kubernetes/ssl/ca.pem>

etcd-key: the output of cat /etc/etcd/ssl/etcd-key.pem | base64 | tr -d '\n'

etcd-cert: the output of cat /etc/etcd/ssl/etcd.pem | base64 | tr -d '\n'

etcd-ca: the output of cat /etc/kubernetes/ssl/ca.pem | base64 | tr -d '\n'
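The three values are produced the same way: base64-encode the whole file and strip the line breaks that base64 inserts, since a Secret value must be a single line. A minimal sketch of that step, with b64_oneline as a made-up helper name:

```bash
# b64_oneline FILE: print the base64 encoding of FILE on a single line,
# i.e. the same pipeline as `cat FILE | base64 | tr -d '\n'`.
# Hypothetical helper for illustration.
b64_oneline() {
    base64 < "$1" | tr -d '\n'
}
```

For example, `b64_oneline /etc/etcd/ssl/etcd.pem` prints the string to paste after etcd-cert: in calico.yaml.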

    - name: CALICO_IPV4POOL_CIDR
      value: "10.244.0.0/16"

The value of CALICO_IPV4POOL_CIDR must match the podSubnet: 10.244.0.0/16 set at initialization, and it must not overlap with serviceSubnet.
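Whether two IPv4 ranges overlap can be checked before deploying: two CIDR blocks share addresses exactly when they agree on the bits of the shorter prefix. The sketch below implements that test with shell arithmetic; ip_to_int and cidr_overlap are hypothetical helper names, and the code assumes well-formed dotted-quad input and a shell with 64-bit arithmetic.

```bash
# ip_to_int A.B.C.D: print the IPv4 address as an unsigned 32-bit integer.
ip_to_int() {
    old_ifs="$IFS"
    IFS=.
    set -- $1            # split the address into its four octets
    IFS="$old_ifs"
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# cidr_overlap CIDR1 CIDR2: exit 0 if the two ranges share any address.
# Hypothetical helper for illustration; no input validation is done.
cidr_overlap() {
    a_ip=$(ip_to_int "${1%/*}")
    a_len="${1#*/}"
    b_ip=$(ip_to_int "${2%/*}")
    b_len="${2#*/}"
    # Compare both networks under the shorter of the two prefixes.
    len="$a_len"
    if [ "$b_len" -lt "$len" ]; then
        len="$b_len"
    fi
    mask=$(( (0xFFFFFFFF << (32 - $len)) & 0xFFFFFFFF ))
    [ $(( $a_ip & $mask )) -eq $(( $b_ip & $mask )) ]
}
```

With the values used in this article, `cidr_overlap 10.244.0.0/16 10.96.0.0/16` fails (no overlap), which is the desired outcome for podSubnet and serviceSubnet.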

Deploy:

    kubectl create -f rbac.yaml -f calico.yaml

Remove calico:

    kubectl delete -f rbac.yaml -f calico.yaml
    modprobe -r ipip

Q&A: the following error may appear during deployment:

    2018-11-19 08:51:49.719 [INFO][8] startup.go 264: Early log level set to info
    2018-11-19 08:51:49.719 [INFO][8] startup.go 284: Using HOSTNAME environment (lowercase) for node name
    2018-11-19 08:51:49.719 [INFO][8] startup.go 292: Determined node name: ui
    2018-11-19 08:51:49.720 [INFO][8] startup.go 105: Skipping datastore connection test
    2018-11-19 08:51:49.721 [INFO][8] startup.go 365: Building new node resource Name="ui"
    2018-11-19 08:51:49.721 [INFO][8] startup.go 380: Initialize BGP data
    2018-11-19 08:51:49.722 [INFO][8] startup.go 582: Using autodetected IPv4 address on interface br-99ef8e85b719: 172.18.0.1/16
    2018-11-19 08:51:49.722 [INFO][8] startup.go 450: Node IPv4 changed, will check for conflicts
    2018-11-19 08:51:49.722 [WARNING][8] startup.go 879: Calico node 'ku' is already using the IPv4 address 172.18.0.1.
    2018-11-19 08:51:49.722 [WARNING][8] startup.go 1104: Terminating Calico node failed to start

This prevents calico-node from starting on the worker node. The cause is an IP address conflict: Calico's IP_AUTODETECTION_METHOD parameter defaults to first-found, and in the log above it picked the address of a bridge interface (172.18.0.1) that another Calico node had already registered. The official description:

The method to use to autodetect the IPv4 address for this host. This is only used when the IPv4 address is being autodetected. See IP Autodetection methods for details of the valid methods. [Default: first-found]

https://docs.projectcalico.org/v3.3/reference/node/configuration

IPv4 is used here; with IPv6 you would also need to set IP6_AUTODETECTION_METHOD.

The fix is to add the following to calico.yaml:

    # Auto-detect the BGP IP address.
    - name: IP
      value: "autodetect"
    - name: IP_AUTODETECTION_METHOD
      value: "can-reach=8.8.8.8"

Redeploy calico and the problem is resolved.

Option 2: flannel+host-gw

Download the file:

    wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

Make the following changes:

    vim kube-flannel.yml

    args:
    - --ip-masq
    - --kube-subnet-mgr
    - --iface=eth0

If you defined your own podSubnet: 10.244.0.0/16 and serviceSubnet: 10.96.0.0/16, one more place needs changing: set Network in net-conf.json to the podSubnet value.

    net-conf.json: |
      {
        "Network": "10.244.0.0/16",
        "Backend": {
          "Type": "host-gw"
        }
      }

Deploy:

    kubectl apply -f kube-flannel.yml

Remove flannel:

    kubectl delete -f kube-flannel.yml
    ifconfig cni0 down
    ip link delete cni0
    ifconfig flannel.1 down
    ip link delete flannel.1
    rm -rf /var/lib/cni/

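III. Adding Worker Nodes

By default, every node initialized with kubeadm creates a token, and the token of any master can be used to join nodes, but that is awkward to manage. So first delete all existing tokens, then create a single token for joining nodes. The default token TTL is 24 hours. Setting the TTL to 0 makes the token valid forever, which is not recommended; instead, rotate the token every two days. The steps are as follows:

1. Delete the existing default tokens

    kubeadm token list

Find the default tokens and delete them all:

    kubeadm token delete l36ybs.04f5zkvewgtsd703
    kubeadm token delete zvbdfg.9kx9fzozxfz8seko
    kubeadm token delete zvfoiw.r7p0rxtvgxc95owu

Create a new token with kubeadm token create:

    kubeadm token create --ttl 72h

For convenience, here is a script:

    # cat uptk.sh
    #!/bin/bash
    # Delete every existing token, then create a new one valid for 72 hours.
    for tk in $(kubeadm token list | awk '{print $1}' | grep -v TOKEN); do
        kubeadm token delete "$tk"
    done
    kubeadm token create --ttl 72h

Schedule it with cron to run at midnight every second day:

    crontab -e
    0 0 */2 * * /data/scripts/uptk.sh >/dev/null 2>&1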
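The rotation script depends on picking the token IDs out of the `kubeadm token list` table while skipping its header row. The sketch below isolates that parsing step so it can be tried on captured output; list_tokens is a hypothetical helper, and it assumes the token is the first column and has the `id.secret` shape seen in the examples above.

```bash
# list_tokens: read `kubeadm token list` output on stdin and print one
# token per line. Rows whose first column does not look like
# "<id>.<secret>" (such as the header) are skipped.
# Hypothetical helper for illustration.
list_tokens() {
    awk '$1 ~ /^[a-z0-9]+\.[a-z0-9]+$/ { print $1 }'
}
```

On a master this would be used as `kubeadm token list | list_tokens`, which avoids depending on the exact header text.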

For more details on managing tokens with kubeadm, see the official documentation: https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm-token/

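2. Add a node

Prepare a new server, set up passwordless SSH, and install kubeadm, kubelet, docker and so on, following the same steps used to prepare the masters. The only difference is that you run kubeadm join instead of initializing. Make sure the versions match.

On the master:

List the cluster tokens:

    kubeadm token list | grep authentication,signing | awk '{print $1}'

Get the discovery-token-ca-cert-hash:

    openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'

For convenience, the commands above are combined into a generator script. Run it on a master node, and adapt it to your servers as needed: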

    cat generate_kubeadm_join.sh
    #!/bin/bash
    # Delete the old tokens and create a new one.
    for tk_old in $(kubeadm token list | awk '{print $1}' | grep -v TOKEN); do
        kubeadm token delete "$tk_old" >/dev/null 2>&1
    done
    kubeadm token create --ttl 72h >/dev/null 2>&1
    # Print the kubeadm join command with its options.
    tk_new=$(kubeadm token list | awk '{print $1}' | grep -v TOKEN)
    hs=$(openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //')
    port=6443
    ip=$(ip a | grep eth0 | grep inet | awk -F / '{print $1}' | awk '{print $2}')
    op="kubeadm join --token $tk_new $ip:$port --discovery-token-ca-cert-hash sha256:$hs"
    echo "$op"

On the worker node:

Using the token and hash obtained above:

    kubeadm join --token c04f89.b781cdb55d83c1ef 10.42.9.210:6443 --discovery-token-ca-cert-hash sha256:986e83a9cb948368ad0552b95232e31d3b76e2476b595bd1d905d5242ace29af
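A join command is assembled from three pieces: the bootstrap token, the API endpoint, and the CA public key hash. kubeadm tokens have the form of 6 lowercase alphanumeric characters, a dot, then 16 more, so a malformed token can be caught before it reaches a node. The sketch below shows that check and the assembly; valid_token and join_command are hypothetical helper names.

```bash
# valid_token TOKEN: exit 0 if TOKEN matches the kubeadm bootstrap token
# format [a-z0-9]{6}.[a-z0-9]{16}.
valid_token() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# join_command TOKEN ENDPOINT HASH: print the kubeadm join command line.
# ENDPOINT is host:port; HASH is the hex digest without the sha256: prefix.
# Hypothetical helper for illustration.
join_command() {
    valid_token "$1" || { echo "invalid token: $1" >&2; return 1; }
    printf 'kubeadm join --token %s %s --discovery-token-ca-cert-hash sha256:%s\n' \
        "$1" "$2" "$3"
}
```

Passing the VIP endpoint rather than a single master's eth0 address keeps new nodes pointed at the load balancer, as in the command above.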

On the master:

Label the worker node:

    kubectl label node knode1 node-role.kubernetes.io/node=

Remove a node:

On the master:

    kubectl drain node1 --delete-local-data --force --ignore-daemonsets
    kubectl delete node node1

On the worker node:

    kubeadm reset

Note: node1 is the node name.

If the deployment goes wrong, reset it as follows:

    kubeadm reset
    ifconfig cni0 down
    ip link delete cni0
    ifconfig flannel.1 down
    ip link delete flannel.1
    rm -rf /var/lib/cni/
    rm -rf ~/.kube

When etcd is external, that is, a separate cluster, kubeadm reset does not delete the data stored in etcd; it has to be removed manually with etcdctl.

Since the alias was set up earlier, etcdctl can be used directly:

    etcdctl del "" --prefix

四、部署监控

1、部署metrics-server

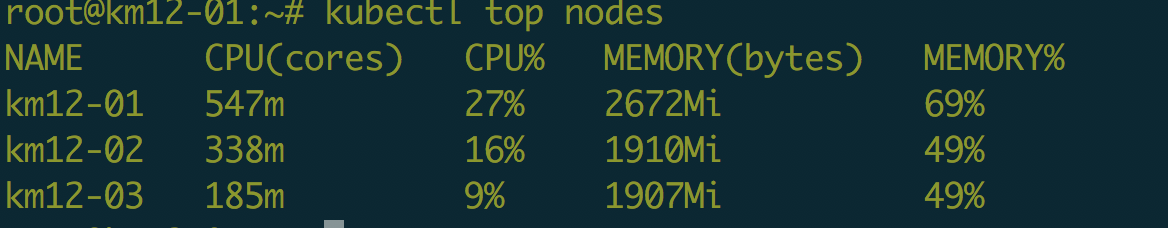

mkdir k8s-monitor cd k8s-monitor git clone https://github.com/kubernetes-incubator/metrics-server.git cd metrics-server 需要修改一下metrics-server的deployment文件,即metrics-server-deployment.yaml containers: - name: metrics-server image: k8s.gcr.io/metrics-server-amd64:v0.3.1 imagePullPolicy: Always command: - /metrics-server - --kubelet-insecure-tls - --kubelet-preferred-address-types=InternalIP,Hostname,InternalDNS,ExternalDNS,ExternalIP 部署 kubectl create -f metrics-server/deploy/1.8+/

如果有报错参考我的另外一片博客:https://www.cnblogs.com/cuishuai/p/9857120.html

接下来稍等几十秒就可以查看状态了

2、部署prometheus-operator

下载相关文件 git clone https://github.com/mgxian/k8s-monitor.git cd k8s-monitor #创建namespace,monitoring kubectl apply -f monitoring-namespace.yaml #部署prometheus-operator kubectl apply -f prometheus-operator.yaml 部署k8s组件 kubectl apply -f kube-k8s-service.yaml 部署node_exporter kubectl apply -f node_exporter.yaml 部署kube-state-metrics kubectl apply -f kube-state-metrics.yaml 部署prometheus kubectl apply -f prometheus.yaml 收集数据 kubectl apply -f kube-servicemonitor.yaml 部署grafana kubectl apply -f grafana.yaml 部署altermanager kubectl apply -f alertmanager.yaml

prometheus和grafana都是采用的nodePort方式暴漏的服务,所以可以直接访问。

grafana默认的用户名密码:admin/admin
