Machine Learning Study Notes: Logistic Regression (Mathematical Derivation and Python Implementation)

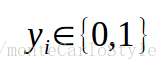

Logistic regression is a binary classification algorithm. We label one class 0 and the other 1, so the output label y can be written as:

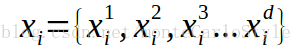

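The attribute data of the samples x can be written as shown below, where i denotes the i-th sample (i <= m) and the superscript d indicates that each sample has d attributes. Each x is written as a row vector.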

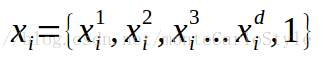

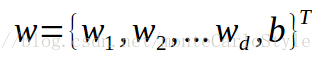

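Ordinary linear regression produces a numeric value: it finds a column vector w of d weights such that x * w + b is as close as possible to the true value, where the offset b is an unknown constant. For convenience, we extend x with a constant 1 and w with the entry b.

With b absorbed into w, it can be omitted from the notation from here on.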
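A minimal sketch of this extension, assuming the constant 1 is appended as the last column of x and b as the last entry of w. The names `X`, `w`, `b`, `X_ext` and `w_ext` and the toy numbers are illustrative only and do not come from the original post.

```python
import numpy as np

# Hypothetical data: m = 3 samples, d = 2 attributes each (rows are samples).
X = np.array([[0.5, 1.0],
              [1.5, -2.0],
              [-1.0, 0.3]])
w = np.array([[2.0], [-1.0]])   # column vector of d weights
b = 0.7                         # offset

# Append a constant-1 column to X and append b to w.
X_ext = np.hstack([X, np.ones((X.shape[0], 1))])
w_ext = np.vstack([w, [[b]]])

# Both forms give the same linear output.
same = np.allclose(X @ w + b, X_ext @ w_ext)
print(same)
```

The code later in this post puts the constant 1 in the first column instead; the position does not matter as long as x and w use the same ordering.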

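The goal of the algorithm is to use a set of samples (each with attributes x and a label y) to train a suitable w, so that this w can be used to predict the class of new samples (which have only the attributes x).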

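As noted above, ordinary linear regression yields a numeric value. We need a function that processes the value of x * w further so that samples are split into two classes (0 and 1).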

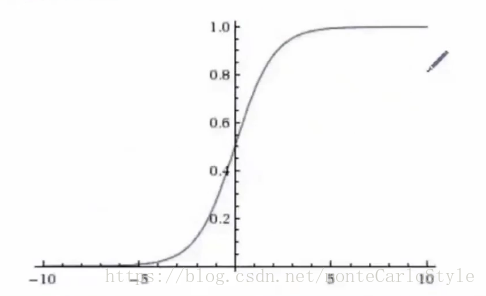

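Sigmoid function

The sigmoid function is a smooth approximation of the step function. It squashes the value of x * w into the range between 0 and 1 and changes steeply near an input of 0, which suits classification. It also has a useful property: it is continuous and differentiable, and its derivative is simple to compute (an easy derivative makes training easier). See the figure: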

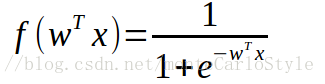

Its formula is

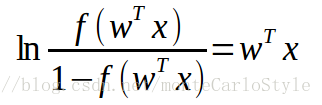

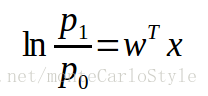
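As a small illustration of the properties described above, the sketch below evaluates the sigmoid at a few points and checks its derivative s(z) * (1 - s(z)) against a finite difference. The helper name `sigmoid_grad` and the test points are assumptions made for this example.

```python
import numpy as np

def sigmoid(z):
    """Logistic function 1 / (1 + exp(-z))."""
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_grad(z):
    """Derivative of the sigmoid: s(z) * (1 - s(z))."""
    s = sigmoid(z)
    return s * (1.0 - s)

z = np.array([-10.0, -1.0, 0.0, 1.0, 10.0])
print(sigmoid(z))        # rises from near 0 to near 1, passing 0.5 at z = 0
print(sigmoid_grad(z))   # steepest at z = 0, where the slope is 0.25

# Check the closed-form derivative against a central finite difference.
eps = 1e-6
numeric = (sigmoid(z + eps) - sigmoid(z - eps)) / (2 * eps)
grad_ok = np.allclose(numeric, sigmoid_grad(z), atol=1e-8)
print(grad_ok)
```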

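The range of the sigmoid function is (0, 1). Its output y can be treated as the probability that sample x is a positive example, and 1 - y as the probability that x is a negative example. Rearranging the formula above gives the log odds, ln(y / (1 - y)) = x * w, where y / (1 - y) is the odds: how likely the sample is to be a positive example relative to a negative one.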
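To make the relationship concrete, the following sketch checks numerically that taking the log odds of a sigmoid output recovers the original linear value. The function name `log_odds` and the sample values of z (standing in for x * w) are chosen for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def log_odds(p):
    """ln(p / (1 - p)) for a probability p in (0, 1)."""
    return np.log(p / (1.0 - p))

z = np.array([-3.0, -0.5, 0.0, 2.0])   # stand-ins for x * w
p = sigmoid(z)                          # probability of the positive class
recovered = log_odds(p)                 # should equal z again
print(np.allclose(recovered, z))
```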

The log odds can be rewritten as

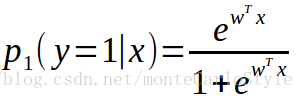

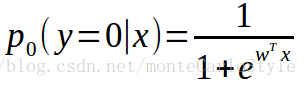

and

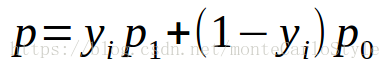

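(This step takes some patience.) Note that y only takes the values 0 and 1, so the probability p that a sample is classified correctly is: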

Maximum likelihood estimation

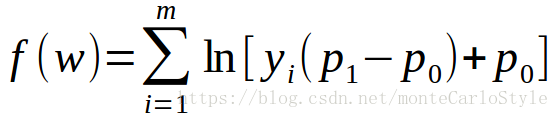

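We use maximum likelihood estimation to estimate each component of w so that the probability p of classifying the samples correctly is maximized. Construct the likelihood function: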

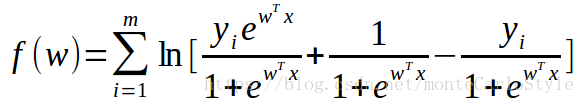

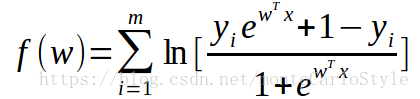

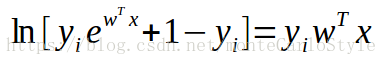

Substituting p0 and p1 and rearranging the right-hand side:

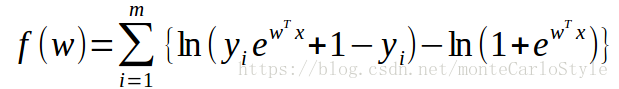

Since y can only be 0 or 1:

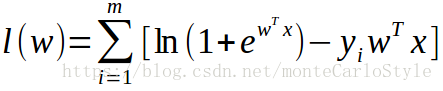

Maximizing f(w) is equivalent to minimizing l(w).

Our task now is to find every element of the column vector w such that l(w) is minimized.
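The objective can be sketched in code under one assumption: that l(w) has the standard form for logistic regression, the sum over samples of -y * z + ln(1 + exp(z)) with z = x * w. The function names and the toy data are hypothetical. The sketch also checks that this form equals the cross-entropy written with p1 and p0.

```python
import numpy as np

def neg_log_likelihood(w, X, y):
    """l(w) = sum_i ( -y_i * z_i + ln(1 + exp(z_i)) ), with z = X @ w."""
    z = X @ w
    # np.logaddexp(0, z) computes ln(1 + exp(z)) without overflow.
    return float(np.sum(-y * z + np.logaddexp(0.0, z)))

def cross_entropy(w, X, y):
    """The same quantity written with p1 = sigmoid(z) and p0 = 1 - p1."""
    p1 = 1.0 / (1.0 + np.exp(-(X @ w)))
    return float(-np.sum(y * np.log(p1) + (1 - y) * np.log(1 - p1)))

# Hypothetical toy data: the last column of X is the constant 1.
X = np.array([[0.2, 1.0], [-1.3, 1.0], [2.1, 1.0], [0.7, 1.0]])
y = np.array([1.0, 0.0, 1.0, 0.0])
w = np.array([0.5, -0.1])

a = neg_log_likelihood(w, X, y)
b = cross_entropy(w, X, y)
print(a, b)
```

With w set to all zeros every sample gets probability 0.5, so l(w) equals m * ln 2, which is a handy sanity check for an implementation.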

Gradient descent

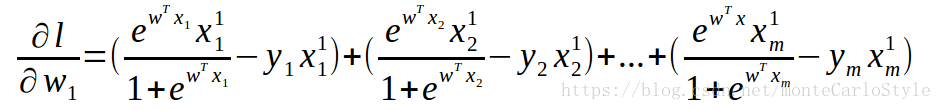

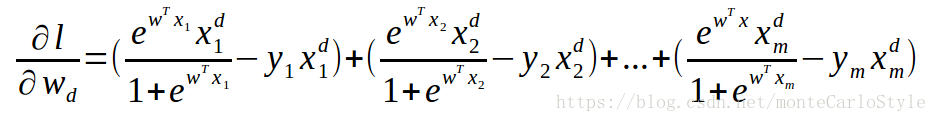

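Gradient descent is one of the most commonly used optimization algorithms. It computes the partial derivative of l(w) with respect to each of w1, w2, w3, ..., wd and b, multiplies each partial derivative by the learning rate, and subtracts the result from the corresponding parameter.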

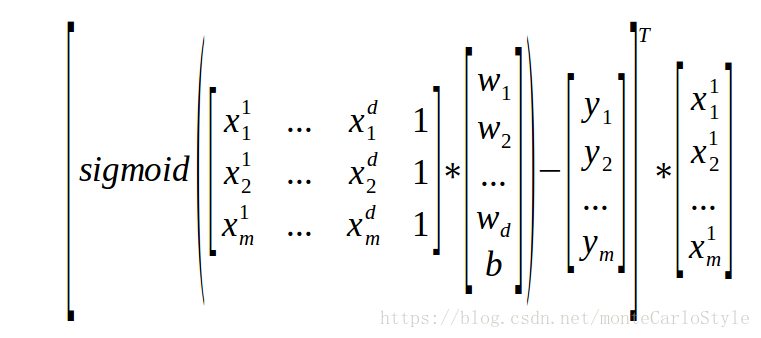

The partial derivative of l(w) with respect to w1, in matrix form:

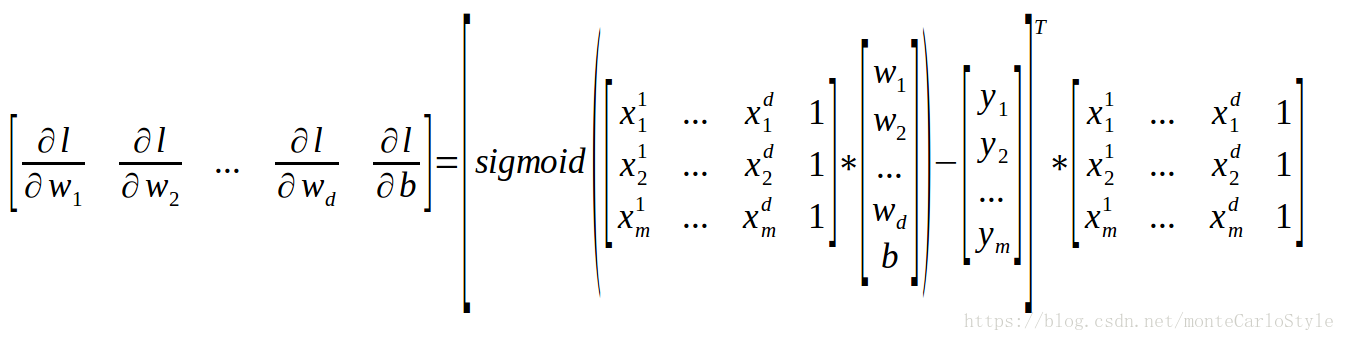

Then:

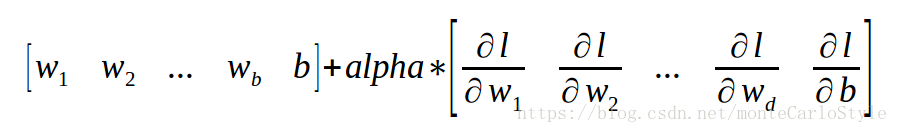

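The left-hand side of the equation above is the gradient vector. Multiplying it by the step size (learning rate) alpha gives the amount by which to adjust the vector w. (The formula below contains a typo: the plus sign should be a minus sign.)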
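A minimal sketch of the update rule, assuming the gradient of l(w) is X^T (sigmoid(X w) - y), which is the standard result for this loss. The toy data, the learning rate and the iteration count are illustrative choices, not values from the original post.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def loss(w, X, y):
    z = X @ w
    return float(np.sum(-y * z + np.logaddexp(0.0, z)))

def gradient(w, X, y):
    """Gradient of l(w): X^T (sigmoid(X w) - y)."""
    return X.T @ (sigmoid(X @ w) - y)

# Hypothetical toy data; the first column is the constant 1.
X = np.array([[1.0, 0.5], [1.0, -1.5], [1.0, 2.0], [1.0, -0.3]])
y = np.array([1.0, 0.0, 1.0, 0.0])

alpha = 0.1                     # learning rate (step size)
w = np.zeros(X.shape[1])
losses = [loss(w, X, y)]
for _ in range(100):
    w = w - alpha * gradient(w, X, y)   # minus sign: move against the gradient
    losses.append(loss(w, X, y))

print(losses[0], losses[-1])
```

Adding alpha * X^T (y - sigmoid(X w)) instead, as the `gradAscent` functions in the code section do, is the same update with the sign moved inside the error term.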

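Momentum gradient descent

Store the previous gradient vector as preV and the current gradient vector as curV, with weighting factor b. Ordinary gradient descent adjusts w by alpha * curV on each iteration; momentum gradient descent adjusts it by alpha * [b * curV + (1 - b) * preV].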
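The update described above can be sketched as a single function. The name `momentum_step` and the example gradients are hypothetical; the formula itself is the one given in this section.

```python
import numpy as np

def momentum_step(w, cur_v, pre_v, alpha=0.01, b=0.9):
    """One update as described above: w - alpha * (b * curV + (1 - b) * preV)."""
    return w - alpha * (b * cur_v + (1.0 - b) * pre_v)

w = np.array([1.0, 1.0])
pre_v = np.array([0.0, 2.0])   # hypothetical previous gradient
cur_v = np.array([1.0, 0.0])   # hypothetical current gradient

w_plain = w - 0.01 * cur_v                 # ordinary gradient descent
w_mom = momentum_step(w, cur_v, pre_v)     # blended with the previous gradient
print(w_plain, w_mom)
```

Textbook momentum usually keeps a running average over all past gradients rather than only the most recent one; the version here follows the simpler description in this post.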

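Newton's method

Because the function we are optimizing is a continuously differentiable convex function, second derivatives can be used to make the descent direction more accurate. Newton's method will be covered in a separate blog post.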
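For orientation only, here is a rough sketch of what a Newton update might look like for this loss, assuming the standard Hessian X^T diag(p * (1 - p)) X. It is not taken from the original post; the function name `newton_step` and the small non-separable toy data set are made up for the example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def newton_step(w, X, y):
    """One Newton update w - H^{-1} g for the logistic negative log-likelihood."""
    p = sigmoid(X @ w)
    g = X.T @ (p - y)                           # gradient
    H = X.T @ (X * (p * (1.0 - p))[:, None])    # Hessian: X^T diag(p(1-p)) X
    return w - np.linalg.solve(H, g)

# Hypothetical data; first column is the constant 1. The classes overlap,
# so the minimizer is finite and the Hessian stays well conditioned.
X = np.array([[1.0, -2.0], [1.0, -1.0], [1.0, 0.0],
              [1.0, 1.0], [1.0, 2.0], [1.0, 0.5]])
y = np.array([0.0, 0.0, 1.0, 0.0, 1.0, 1.0])

w = np.zeros(2)
for _ in range(10):
    w = newton_step(w, X, y)

grad_norm = float(np.linalg.norm(X.T @ (sigmoid(X @ w) - y)))
print(w, grad_norm)
```

On data that can be separated perfectly the weights grow without bound and the Hessian becomes nearly singular, so a practical implementation would need regularization or damping.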

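Python code (mind the indentation)

Note: the code borrows heavily from the logistic regression chapter of 《机器学习实战》 (Machine Learning in Action, by Peter Harrington). I changed the gradient descent functions: every function whose name starts with gradAscent is a gradient descent function. There are several of them because I used them to compare how different gradient descent variants perform, and I did not bother to tidy up the names or comments.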

from numpy import *

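def loadDataSet():
    # Read testSet.txt: each line holds two features and a 0/1 label.
    dataMat = []
    labelMat = []
    with open('testSet.txt') as fr:
        for line in fr:
            lineArr = line.strip().split()
            if not lineArr:
                continue  # skip blank lines
            # Prepend a constant 1.0 so that weights[0] acts as the offset b.
            dataMat.append([1.0, float(lineArr[0]), float(lineArr[1])])
            labelMat.append(int(lineArr[2]))
    return dataMat, labelMat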

def sigmoid(inX):
    return 1.0 / (1 + exp(-inX))

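def gradAscent(dataMatIn, classLabels):
    # Plain batch update with a fixed number of iterations (no convergence test).
    # asmatrix is used because the mat alias was removed in NumPy 2.0.
    dataMatrix = asmatrix(dataMatIn)
    labelMat = asmatrix(classLabels).transpose()
    m, n = shape(dataMatrix)
    alpha = 0.0001
    maxCycles = 20000
    weights = ones((n, 1))
    for k in range(maxCycles):
        h = sigmoid(dataMatrix * weights)
        error = (labelMat - h)
        # Adding alpha * X^T (y - h) equals subtracting alpha * X^T (h - y).
        weights = weights + alpha * dataMatrix.transpose() * error
    return weights, maxCycles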

def gradAscent0(dataMatIn, classLabels):
    # Iterate until the squared-error measure changes by no more than epsilon.
    # asmatrix is used because the mat alias was removed in NumPy 2.0.
    dataMatrix = asmatrix(dataMatIn)
    labelMat = asmatrix(classLabels).transpose()
    epsilon = 0.000001
    m, n = shape(dataMatrix)
    alpha = 0.001
    weights = ones((n, 1))
    cnt = 0
    diffLast = 0.0
    while True:
        cnt += 1
        h = sigmoid(dataMatrix * weights)
        diffMat = (labelMat - h)
        weights = weights + alpha * dataMatrix.transpose() * diffMat
        sqDiff = diffMat.transpose() * diffMat
        # Scaled by n (the number of columns), as in the original code.
        diff = sqDiff[0, 0] / (2 * n)
        if abs(diff - diffLast) > epsilon:
            diffLast = diff
        else:
            return weights, cnt

def gradAscent1(dataMatIn, classLabels):
    # Same stopping rule as gradAscent0, written as descent on X^T (h - y).
    # asmatrix is used because the mat alias was removed in NumPy 2.0.
    dataMatrix = asmatrix(dataMatIn)
    labelMat = asmatrix(classLabels).transpose()
    epsilon = 0.000000001
    m, n = shape(dataMatrix)
    alpha = 0.0001
    weights = ones((n, 1))
    cnt = 0
    diffLast = 0.0
    while True:
        cnt += 1
        h = sigmoid(dataMatrix * weights)
        # Gradient of l(w): ((h - y)^T X)^T = X^T (h - y).
        reviseMatTran = (h - labelMat).transpose() * dataMatrix
        reviseMat = reviseMatTran.transpose()
        weights = weights - alpha * reviseMat
        diffMat = (h - labelMat)
        sqDiff = diffMat.transpose() * diffMat
        diff = sqDiff[0, 0] / (2 * n)
        if (cnt % 1000) == 0:
            print("cycles + 1000, %d cycles" % cnt)
        if abs(diff - diffLast) > epsilon:
            diffLast = diff
        else:
            return weights, cnt

def gradAscent2(dataMatIn, classLabels):
    # Momentum variant: blends the current gradient with the previous one.
    # asmatrix is used because the mat alias was removed in NumPy 2.0.
    dataMatrix = asmatrix(dataMatIn)
    labelMat = asmatrix(classLabels).transpose()
    epsilon = 0.000000001
    m, n = shape(dataMatrix)
    alpha = 0.0001
    weights = ones((n, 1))
    cnt = 0
    diffLast = 0.0
    preV = ones((n, 1))  # previous gradient, initialised to ones
    b = 0.9              # weight of the current gradient
    while True:
        cnt += 1
        h = sigmoid(dataMatrix * weights)
        reviseMatTran = (h - labelMat).transpose() * dataMatrix
        reviseMat = reviseMatTran.transpose()
        weights = weights - alpha * (b * reviseMat + (1 - b) * preV)
        preV = reviseMat
        diffMat = (h - labelMat)
        sqDiff = diffMat.transpose() * diffMat
        diff = sqDiff[0, 0] / (2 * n)
        if (cnt % 1000) == 0:
            print("cycles + 1000, the current cycle is %d" % cnt)
        if abs(diff - diffLast) > epsilon:
            diffLast = diff
        else:
            return weights, cnt

def classifierTest():
    # Train on the full data set and report the training error rate.
    dataMat, labelMat = loadDataSet()
    numOfSamp = len(labelMat)
    dataMatrix = asmatrix(dataMat)
    labelMatrix = asmatrix(labelMat).transpose()
    weights, cnt = gradAscent2(dataMat, labelMat)
    resultMat = sigmoid(dataMatrix * weights)
    error = 0
    for i in range(numOfSamp):
        resulClass = 1 if resultMat[i, 0] > 0.5 else 0
        # print('the classifier came back with %d, the real answer is: %d' % (
        #     resulClass, labelMatrix[i, 0]))
        if resulClass != labelMatrix[i, 0]:
            error += 1
    print('\nthe total number of error is %d,\nthe total error rate is %.2f%%, ' % (
        error, float(error) * 100 / numOfSamp))
    print('cycles: %d' % cnt)
    print(weights)

def plotBestFit(weights):
    import matplotlib.pyplot as plt
    # Flatten so that both matrix and array weights work below.
    weights = asarray(weights).ravel()
    dataMat, labelMat = loadDataSet()
    dataArr = array(dataMat)
    n = shape(dataArr)[0]
    xcord1 = []
    ycord1 = []
    xcord2 = []
    ycord2 = []
    for i in range(n):
        # Class 1 goes to the first group, class 0 to the second.
        if int(labelMat[i]) == 1:
            xcord1.append(dataArr[i, 1])
            ycord1.append(dataArr[i, 2])
        else:
            xcord2.append(dataArr[i, 1])
            ycord2.append(dataArr[i, 2])
    fig = plt.figure()
    ax = fig.add_subplot(111)
    ax.scatter(xcord1, ycord1, s=30, c='red', marker='s')
    ax.scatter(xcord2, ycord2, s=30, c='green')
    x = arange(-3.0, 3.0, 0.1)
    # Decision boundary: weights[0] + weights[1] * x1 + weights[2] * x2 = 0.
    y = (-weights[0] - weights[1] * x) / weights[2]
    ax.plot(x, y)
    plt.xlabel('X1')
    plt.ylabel('X2')
    plt.show()
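The functions above rely on NumPy's legacy matrix type and on a local testSet.txt file. As a self-contained alternative, here is a minimal sketch using plain arrays and eight rows copied from the training data listed in this post. The function names `train` and `predict`, the learning rate and the iteration count are choices made for this example, not the author's.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train(X, y, alpha=0.005, n_iters=20000):
    """Batch gradient descent on the negative log-likelihood."""
    w = np.zeros(X.shape[1])
    for _ in range(n_iters):
        w -= alpha * (X.T @ (sigmoid(X @ w) - y))
    return w

def predict(X, w):
    """Class 1 when the predicted probability exceeds 0.5."""
    return (sigmoid(X @ w) > 0.5).astype(int)

# Eight rows copied from the training data: x1, x2, label.
rows = np.array([
    [-0.017612, 14.053064, 0],
    [-1.395634, 4.662541, 1],
    [0.423363, 11.054677, 0],
    [1.176813, 3.167020, 1],
    [0.667394, 12.741452, 0],
    [0.931635, 1.589505, 1],
    [1.347183, 13.175500, 0],
    [-0.196949, 0.444165, 1],
])
# Put the constant 1 in the first column, as loadDataSet does.
X = np.hstack([np.ones((rows.shape[0], 1)), rows[:, :2]])
y = rows[:, 2]

w = train(X, y)
accuracy = float(np.mean(predict(X, w) == y))
print(w, accuracy)
```

This subset is linearly separable, so the reported accuracy is on the training rows only and says nothing about how well the model generalizes.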

Training data (the contents of testSet.txt; each line holds x1, x2 and the class label)

-0.017612 14.053064 0

-1.395634 4.662541 1

-0.752157 6.538620 0

-1.322371 7.152853 0

0.423363 11.054677 0

0.406704 7.067335 1

0.667394 12.741452 0

-2.460150 6.866805 1

0.569411 9.548755 0

-0.026632 10.427743 0

0.850433 6.920334 1

1.347183 13.175500 0

1.176813 3.167020 1

-1.781871 9.097953 0

-0.566606 5.749003 1

0.931635 1.589505 1

-0.024205 6.151823 1

-0.036453 2.690988 1

-0.196949 0.444165 1

1.014459 5.754399 1

1.985298 3.230619 1

-1.693453 -0.557540 1

-0.576525 11.778922 0

-0.346811 -1.678730 1

-2.124484 2.672471 1

1.217916 9.597015 0

-0.733928 9.098687 0

-3.642001 -1.618087 1

0.315985 3.523953 1

1.416614 9.619232 0

-0.386323 3.989286 1

0.556921 8.294984 1

1.224863 11.587360 0

-1.347803 -2.406051 1

1.196604 4.951851 1

0.275221 9.543647 0

0.470575 9.332488 0

-1.889567 9.542662 0

-1.527893 12.150579 0

-1.185247 11.309318 0

-0.445678 3.297303 1

1.042222 6.105155 1

-0.618787 10.320986 0

1.152083 0.548467 1

0.828534 2.676045 1

-1.237728 10.549033 0

-0.683565 -2.166125 1

0.229456 5.921938 1

-0.959885 11.555336 0

0.492911 10.993324 0

0.184992 8.721488 0

-0.355715 10.325976 0

-0.397822 8.058397 0

0.824839 13.730343 0

1.507278 5.027866 1

0.099671 6.835839 1

-0.344008 10.717485 0

1.785928 7.718645 1

-0.918801 11.560217 0

-0.364009 4.747300 1

-0.841722 4.119083 1

0.490426 1.960539 1

-0.007194 9.075792 0

0.356107 12.447863 0

0.342578 12.281162 0

-0.810823 -1.466018 1

2.530777 6.476801 1

1.296683 11.607559 0

0.475487 12.040035 0

-0.783277 11.009725 0

0.074798 11.023650 0

-1.337472 0.468339 1

-0.102781 13.763651 0

-0.147324 2.874846 1

0.518389 9.887035 0

1.015399 7.571882 0

-1.658086 -0.027255 1

1.319944 2.171228 1

2.056216 5.019981 1

-0.851633 4.375691 1

-1.510047 6.061992 0

-1.076637 -3.181888 1

1.821096 10.283990 0

3.010150 8.401766 1

-1.099458 1.688274 1

-0.834872 -1.733869 1

-0.846637 3.849075 1

1.400102 12.628781 0

1.752842 5.468166 1

0.078557 0.059736 1

0.089392 -0.715300 1

1.825662 12.693808 0

0.197445 9.744638 0

0.126117 0.922311 1

-0.679797 1.220530 1

0.677983 2.556666 1

0.761349 10.693862 0

-2.168791 0.143632 1

1.388610 9.341997 0

0.317029 14.739025 0
