[Pinned] [Python crawler] Scraping Lianjia rental listings for Tianjin

2017-10-16 11:40

423 views

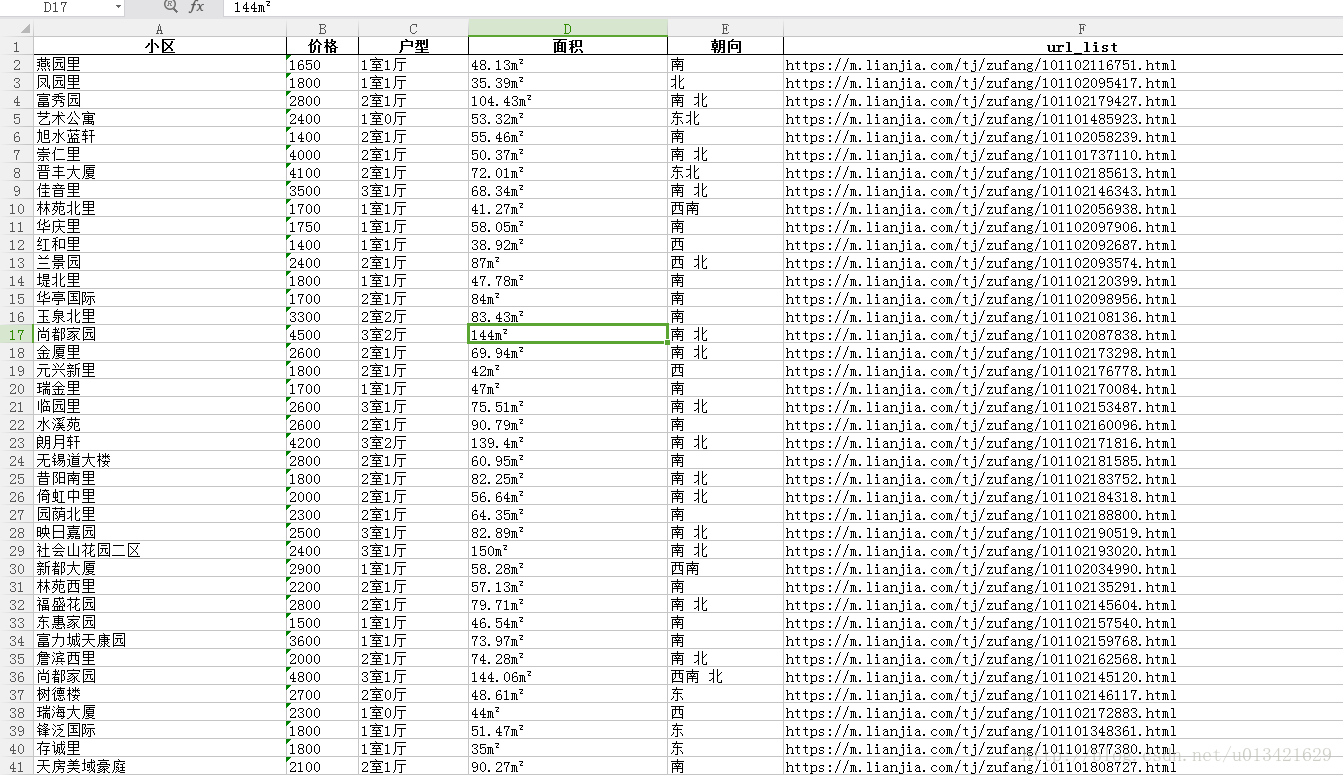

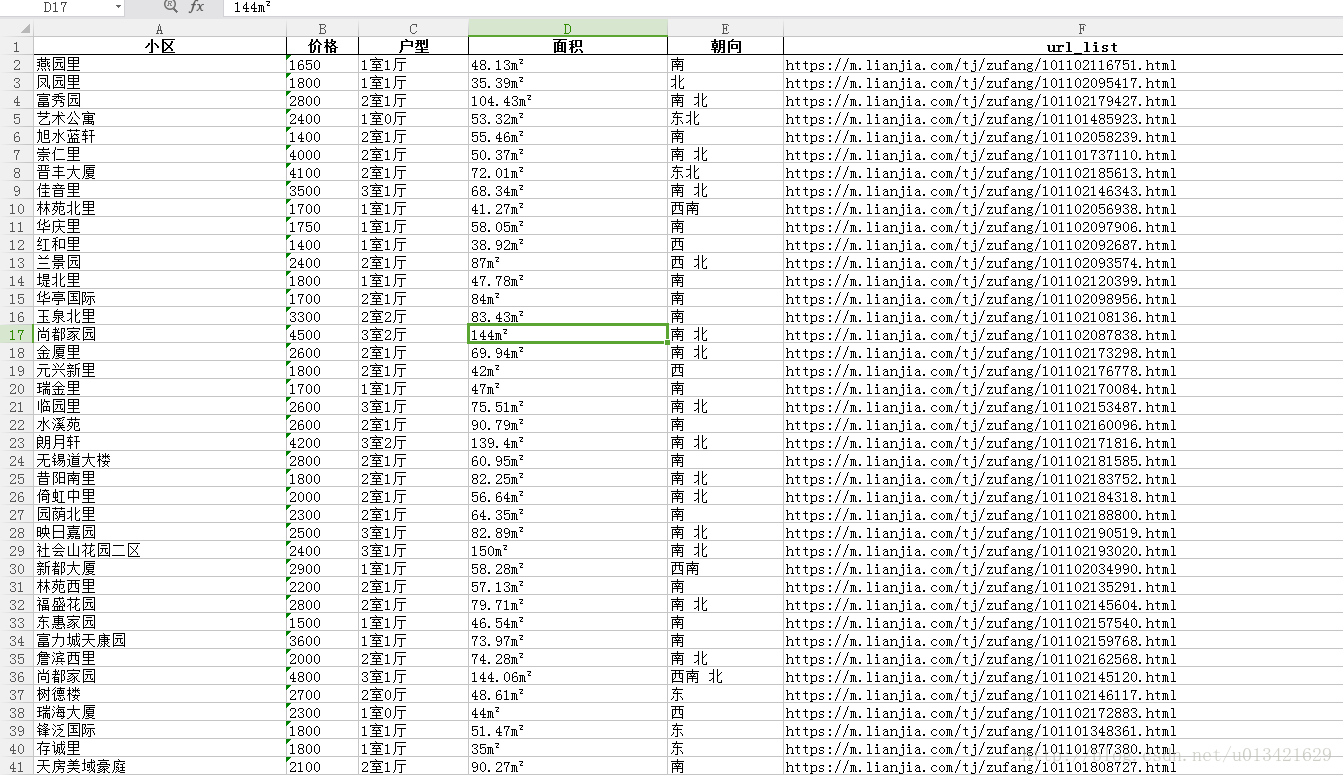

Fields scraped:

layout, area, orientation, residential community, price, url
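As a rough illustration of the target record, the six fields could be modelled as one small data class per listing. This is only a sketch: the class name `Listing`, its attribute names, the helper `listings_to_csv`, and the sample values are all hypothetical and do not come from the original script.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class Listing:
    """One rental listing; attribute names are illustrative assumptions."""
    layout: str       # 户型
    area: str         # 面积
    orientation: str  # 朝向
    community: str    # 小区
    price: str        # 价格
    url: str


def listings_to_csv(listings):
    """Serialize listings to CSV text, one column per field."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=[f.name for f in fields(Listing)],
        lineterminator="\n",
    )
    writer.writeheader()
    for item in listings:
        writer.writerow(asdict(item))
    return buffer.getvalue()


# Placeholder values, not real scraped data.
sample = Listing("2 rooms", "80 sqm", "south", "Example Community",
                 "3000", "https://example.com/1.html")
csv_text = listings_to_csv([sample])
print(csv_text)
```

Keeping one object per listing means a missing field shows up as an error on that listing instead of silently shifting a whole column.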


# -*- coding: utf-8 -*-
import random
import time

import pandas as pd
import requests
from lxml import etree

time1 = time.time()

city = 'tj'
host_url = 'http://' + city + '.lianjia.com/zufang/'
print(host_url)

data1 = []     # <em> text under div.item_minor
data2 = []     # text of div.item_other
url_list = []  # detail-page URLs

# Pool of User-Agent strings; one is picked at random for every request.
user_agent_list = [
    'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.1 (KHTML, like Gecko) Chrome/14.0.835.163 Safari/535.1',
    'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:6.0) Gecko/20100101 Firefox/6.0',
    'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/534.50 (KHTML, like Gecko) Version/5.1 Safari/534.50',
    'Opera/9.80 (Windows NT 6.1; U; zh-cn) Presto/2.9.168 Version/11.50',
    'Mozilla/5.0 (Windows; U; Windows NT 5.2) AppleWebKit/525.13 (KHTML, like Gecko) Chrome/0.2.149.27 Safari/525.13',
    'Mozilla/5.0 (Linux; U; Android 2.2; en-us; Nexus One Build/FRF91) AppleWebKit/533.1 (KHTML, like Gecko) Version/4.0 Mobile Safari/533.1',
    'Mozilla/5.0 (iPad; U; CPU OS 3_2_2 like Mac OS X; en-us) AppleWebKit/531.21.10 (KHTML, like Gecko) Version/4.0.4 Mobile/7B500 Safari/531.21.10',
    'Mozilla/5.0 (SymbianOS/9.4; Series60/5.0 NokiaN97-1/20.0.019; Profile/MIDP-2.1 Configuration/CLDC-1.1) AppleWebKit/525 (KHTML, like Gecko) BrowserNG/7.1.18124',
    'Nokia5700AP23.01/SymbianOS/9.1 Series60/3.0',
    'UCWEB7.0.2.37/28/999',
    'Mozilla/4.0 (compatible; MSIE 6.0; ) Opera/UCWEB7.0.2.37/28/999',
    'Opera/9.80 (X11; Linux x86_64; U; en) Presto/2.10.229 Version/11.61',
    'Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0)',
    'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/534.52.7 (KHTML, like Gecko) Version/5.1.2',
    'Opera/9.80 (Windows NT 6.1; U; zh-cn) Presto/2.10.229 Version/11.61',
    'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56 Safari/535.11',
    'Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0)',
    'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56',
    'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/45.0.2454.101 Safari/537.36',
    'Mozilla/5.0 (Windows; U; Windows NT 5.2) Gecko/2008070208 Firefox/3.0.1',
    'Mozilla/5.0 (Windows; U; Windows NT 5.1) Gecko/20070309 Firefox/2.0.0.3',
    'Mozilla/5.0 (Windows; U; Windows NT 5.1) Gecko/20070803 Firefox/1.5.0.12',
    'Mozilla/5.0 (iPhone; U; CPU like Mac OS X; en) AppleWebKit/420+ (KHTML, like Gecko) Version/3.0 Mobile/1A543a Safari/419.3',
    'Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.12) Gecko/20080219 Firefox/2.0.0.12 Navigator/9.0.0.6',
    'Mozilla/5.0 (Windows; U; Windows NT 5.2) AppleWebKit/525.13 (KHTML, like Gecko) Chrome/0.2.149.27 Safari/525.13',
    'Mozilla/5.0 (iPhone; U; CPU like Mac OS X) AppleWebKit/420.1 (KHTML, like Gecko) Version/3.0 Mobile/4A93 Safari/419.3',
    'Mozilla/5.0 (Windows; U; Windows NT 5.2) AppleWebKit/525.13 (KHTML, like Gecko) Version/3.1 Safari/525.13',
    'Opera/9.64 (Windows NT 5.1; U; en) Presto/2.1.1',
    'Opera/9.80 (Macintosh; Intel Mac OS X; U; en) Presto/2.2.15 Version/10.10',
    'Opera/9.27 (Windows NT 5.2; U; zh-cn)'
]

# Reuse one session so connections are shared across pages.
session = requests.session()

for i in range(1, 110):
    print("Fetching page " + str(i) + " ...")
    url1 = "https://m.lianjia.com/tj/zufang/pg" + str(i) + "/?_t=1"
    head = {
        'User-Agent': random.choice(user_agent_list),
        "Cookie": "select_nation=1; lj-ss=9fc6cee08e4d99ced4584517044e1242; lianjia_uuid=cc14aa71-496b-40db-80fd-d136e55a4f81; UM_distinctid=15f22e787c4232-0d1b04a52ef6d2-3a3e5e06-1fa400-15f22e787c5c79; select_city=120000; lianjia_token=2.0079fcccf103161e086851e5c0a1095042; _ga=GA1.2.1281750422.1508119120; _gid=GA1.2.11799009.1508119120; Hm_lvt_9152f8221cb6243a53c83b956842be8a=1508119120; Hm_lpvt_9152f8221cb6243a53c83b956842be8a=1508119221; _gat=1; _gat_past=1; _gat_new=1; _gat_global=1; _gat_new_global=1; CNZZDATA1254525948=274494434-1508117917-%7C1508117917; CNZZDATA1253491255=227527654-1508117030-%7C1508117030; lianjia_ssid=403ab941-dd7a-4efc-ac03-52079a8c9b93",
        "Host": "m.lianjia.com",
        "Referer": "https://m.lianjia.com/tj/zufang/pg3/"
    }
    try:
        html = session.get(url1, headers=head, timeout=10).content
        # print(html)
        selector = etree.HTML(html)

        data1_1 = selector.xpath('//div[@class="item_minor"]//span//em/text()')
        for each in data1_1:
            print(each)
            data1.append(each)

        data2_1 = selector.xpath('//div[@class="item_other text_cut"]/text()')
        for each in data2_1:
            print(each)
            data2.append(each)

        # Keep only links that point at a listing detail page.
        data3_1 = selector.xpath('//li[@class="pictext"]//a/@href')
        for each in data3_1:
            if "html" in each:
                k = "https://m.lianjia.com" + str(each)
                print(k)
                url_list.append(k)
    except Exception as e:
        # Report the failure and move on to the next page.
        print("Page " + str(i) + " failed: " + repr(e))

# The three lists must have equal lengths for the DataFrame below.
print(len(data1), len(data2), len(url_list))
data = pd.DataFrame({"data1": data1, "data2": data2, "url_list": url_list})
print(len(data))

# Write to Excel.
# engine_kwargs needs pandas >= 1.3; older versions took options={...} directly.
writer = pd.ExcelWriter(r'c:\lianjia_new.xlsx', engine='xlsxwriter',
                        engine_kwargs={'options': {'strings_to_urls': False}})
data.to_excel(writer, index=False)
writer.close()

time2 = time.time()
print('ok, crawl finished!')
print('Total time: ' + str(time2 - time1) + 's')
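The script above gathers `data1`, `data2`, and `url_list` as three flat lists and builds the `DataFrame` from them, which only works when all three end up the same length. One possible safeguard, sketched here with the standard library only, is to check each page's three results before merging them. The helper names `page_rows` and `collect` and the sample inputs are assumptions made for illustration, not part of the original script.

```python
def page_rows(prices, details, urls):
    """Zip one page's three extractions into row dicts.

    Raises ValueError when the lists differ in length, because zipping
    them would then pair values that belong to different listings.
    """
    if not (len(prices) == len(details) == len(urls)):
        raise ValueError(
            "length mismatch: %d prices, %d details, %d urls"
            % (len(prices), len(details), len(urls))
        )
    return [
        {"data1": p, "data2": d.strip(), "url_list": u}
        for p, d, u in zip(prices, details, urls)
    ]


def collect(pages):
    """Merge per-page extractions, skipping and recording misaligned pages."""
    rows, skipped = [], []
    for page_number, (prices, details, urls) in enumerate(pages, start=1):
        try:
            rows.extend(page_rows(prices, details, urls))
        except ValueError as exc:
            skipped.append((page_number, str(exc)))
    return rows, skipped


# Placeholder inputs standing in for the XPath results of two pages.
pages = [
    (["3000", "2500"], [" A ", "B"], ["u1", "u2"]),
    (["1800"], ["C", "D"], ["u3"]),  # misaligned on purpose
]
rows, skipped = collect(pages)
print(len(rows), skipped)
```

Passing `rows` to `pd.DataFrame(rows)` would then give the same three columns, while `skipped` records which pages need another look.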
