A Multithreaded Global Memory Pool in C++

2016-06-07 23:44

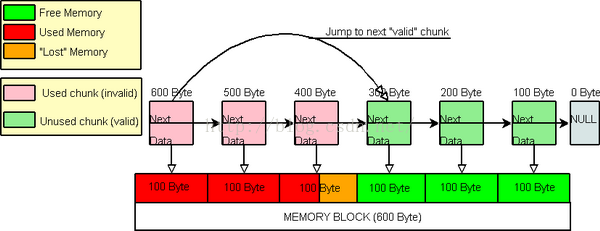

1. Diagram of the memory pool's data structure:
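As a rough companion to the diagram, the following sketch shows how one raw block can be described by a linked list of fixed-size chunk headers, which is how the listing in this post organizes its memory. The names `ChunkHeader`, `RoundUpToChunk` and `BuildHeaders` are hypothetical and only illustrate the layout; they are not part of the original code, and `chunkSize` is assumed to be greater than zero.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical, simplified chunk header (illustrative names only).
struct ChunkHeader {
    std::uint64_t id;         // 1-based position in the list
    unsigned char* data;      // start of this chunk's slice of the block
    std::uint64_t remaining;  // bytes from this chunk to the end of its block
    std::uint64_t used;       // 0 means the chunk is free
    ChunkHeader* next;        // next header, or nullptr at the tail
};

// Round a request up to a whole number of chunks (chunkSize must be > 0).
inline std::uint64_t RoundUpToChunk(std::uint64_t size, std::uint64_t chunkSize) {
    const std::uint64_t mod = size % chunkSize;
    return mod == 0 ? size : size + chunkSize - mod;
}

// Build the headers for one block: header i describes the bytes
// [i * chunkSize, (i + 1) * chunkSize) of the block.
inline std::vector<ChunkHeader> BuildHeaders(unsigned char* block,
                                             std::uint64_t blockSize,
                                             std::uint64_t chunkSize) {
    const std::uint64_t count = blockSize / chunkSize;
    std::vector<ChunkHeader> headers(count);
    for (std::uint64_t i = 0; i < count; ++i) {
        headers[i].id = i + 1;
        headers[i].data = block + i * chunkSize;
        headers[i].remaining = blockSize - i * chunkSize;
        headers[i].used = 0;
        headers[i].next = (i + 1 < count) ? &headers[i + 1] : nullptr;
    }
    return headers;
}
```

With a 512-byte block and 128-byte chunks this yields four headers, and a 200-byte request rounds up to 256 bytes, i.e. two consecutive chunks.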

2. The complete implementation follows, including a performance comparison against plain new and delete:

#include <Windows.h>

#include <iostream>

#include <map>

#include <vector>

#include <assert.h>

#include <stdio.h>   // printf

#include <stdlib.h>  // malloc, free, rand, srand, system

#include <time.h>

typedef int s32;

typedef unsigned int u32;

typedef char c8;

typedef long long s64;

typedef unsigned long long u64;

typedef void* LPVOID;

typedef unsigned char* LPBYTE;

// Lock object wrapper

class LockObject

{

public:

LockObject()

{

InitializeCriticalSection(&mLock);

}

~LockObject()

{

DeleteCriticalSection(&mLock);

}

void Lock()

{

EnterCriticalSection(&mLock);

}

void UnLock()

{

LeaveCriticalSection(&mLock);

}

bool TryLock()

{

return TryEnterCriticalSection(&mLock) != FALSE;

}

private:

// Copying is disabled: a CRITICAL_SECTION must not be copied

LockObject(const LockObject &other) = delete;

LockObject& operator = (const LockObject &other) = delete;

private:

// ScopeLock locks the underlying CRITICAL_SECTION directly

friend class ScopeLock;

CRITICAL_SECTION mLock;

};

// Scoped lock wrapper: locks on construction, unlocks on destruction

class ScopeLock

{

public:

ScopeLock(CRITICAL_SECTION &lock)

:mlock(lock)

{

EnterCriticalSection(&mlock);

}

ScopeLock(LockObject &lock)

:mlock( lock.mLock )

{

EnterCriticalSection(&mlock);

}

~ScopeLock()

{

LeaveCriticalSection(&mlock);

}

private:

ScopeLock( const ScopeLock &other) = delete;

ScopeLock& operator = (const ScopeLock &other) = delete;

private:

CRITICAL_SECTION &mlock;

};

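// Memory chunk structure

typedef struct MemoryChunk

{

u64 mID; // chunk ID

LPVOID mpData; // the memory actually handed out to callers

u64 mDataSize; // size of the data block managed from this chunk onward

u64 mUsedSize; // bytes in use (0 means the chunk is free)

MemoryChunk *mpNext; // next chunk

}* MemoryChunkPtr;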

// Complete memory pool implementation

class MemoryPool

{

public:

MemoryPool(u64 MemoryBlockSize, u64 MemoryChunkSize )

{

mTotalMemoryPoolSize =

mUsedMemorySize =

mFreeMemorySize =

mChunkIDPool = 0;

mpBeginChunk =

mpNearFreeChunk =

mpEndChunk = nullptr;

mMemoryChunkSize = MemoryChunkSize;

_AllocateMemory( MemoryBlockSize );

}

~MemoryPool()

{

ScopeLock LockBody( mLock );

_Destory();

}

// Allocate memory and default-construct Count objects of type T

template<typename T>

T* GetMemory(u64 Count)

{

if( 0 == Count )

{

assert(false);

return nullptr;

}

auto p = static_cast<T*>( GetMemory( Count * sizeof( T ) ) );

if(nullptr == p)

{

assert( false );

return nullptr;

}

// Call the constructor of each element

for( u64 i = 0; i < Count; ++i )

{

new (p+i)T();

}

return p;

}

LPVOID GetMemory( u64 MemoryBlockSize )

{

ScopeLock LockBody( mLock );

// Round the request up to a multiple of the chunk size

const auto NewMemoryBlockSize = _AdjustMemoryBlockSize( MemoryBlockSize );

MemoryChunkPtr pBeginChunk = nullptr;

MemoryChunkPtr pEndChunk = nullptr;

// Find a suitable run of chunks; grow the pool and retry if none is found

if( false == _FindAllocateChunk( NewMemoryBlockSize, pBeginChunk, pEndChunk ) )

{

if( false == _AllocateMemory( NewMemoryBlockSize ) )

{

assert(false);

return nullptr;

}

if( false == _FindAllocateChunk( NewMemoryBlockSize, pBeginChunk, pEndChunk ) )

{

assert(false);

return nullptr;

}

}

// Mark the chunks as used; note that pEndChunk is exclusive ( [ pBeginChunk, pEndChunk ) )

_AdjustChunkData( pBeginChunk, pEndChunk, MemoryBlockSize );

// Update the memory usage counters

mUsedMemorySize += NewMemoryBlockSize;

mFreeMemorySize = mTotalMemoryPoolSize - mUsedMemorySize;

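// Advance the nearest-free-chunk hint to speed up the next allocation.

// Keep the old hint when the allocation reached the end of the list, so the hint never becomes null.

if( mpNearFreeChunk == pBeginChunk && nullptr != pEndChunk )

{

mpNearFreeChunk = pEndChunk;

}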

// Record the allocation so it can be released later

AllocChunk AC = { pBeginChunk, pEndChunk, NewMemoryBlockSize };

mAllocBlocks.insert( std::make_pair( pBeginChunk->mpData, AC ) );

return pBeginChunk->mpData;

}

template<typename T>

void ReleaseMemory( T* p, u64 count )

{

if(nullptr == p || 0 == count )

{

assert( false );

return;

}

ScopeLock LockBody( mLock );

const auto Search = mAllocBlocks.find( p );

if( Search == mAllocBlocks.end() )

{

assert( false );

return;

}

// Call the destructor of each element

for(u64 i = 0; i < count; ++i)

{

(p+i)->~T();

}

// Move the nearest-free-chunk hint back if the released range starts earlier

if( mpNearFreeChunk->mID > Search->second.mBeginChunk->mID )

{

mpNearFreeChunk = Search->second.mBeginChunk;

}

// Return the chunks to the pool

do

{

Search->second.mBeginChunk->mUsedSize = 0;

Search->second.mBeginChunk = Search->second.mBeginChunk->mpNext;

}while(Search->second.mBeginChunk != Search->second.mEndChunk);

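// Update the usage counters before the allocation record is erased

mUsedMemorySize -= Search->second.mMemoryBlockSize;

mFreeMemorySize = mTotalMemoryPoolSize - mUsedMemorySize;

mAllocBlocks.erase(p);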

}

void ReleaseMemory(LPVOID p)

{

if( nullptr == p )

{

assert( false );

return;

}

ScopeLock LockBody( mLock );

const auto Search = mAllocBlocks.find( p );

if( Search == mAllocBlocks.end() )

{

assert( false );

return;

}

// Move the nearest-free-chunk hint back if the released range starts earlier

if( mpNearFreeChunk->mID > Search->second.mBeginChunk->mID )

{

mpNearFreeChunk = Search->second.mBeginChunk;

}

do

{

Search->second.mBeginChunk->mUsedSize = 0;

Search->second.mBeginChunk = Search->second.mBeginChunk->mpNext;

}while(Search->second.mBeginChunk != Search->second.mEndChunk);

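// Update the usage counters before the allocation record is erased

mUsedMemorySize -= Search->second.mMemoryBlockSize;

mFreeMemorySize = mTotalMemoryPoolSize - mUsedMemorySize;

mAllocBlocks.erase(p);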

}

// Disable default construction and copying

private:

MemoryPool() = delete;

MemoryPool( const MemoryPool &other ) = delete;

MemoryPool& operator = (const MemoryPool &other) = delete;

bool _AllocateMemory(u64 MemoryBlockSize)

{

// Round the size up to a multiple of the chunk size

const auto NewMemoryBlockSize = _AdjustMemoryBlockSize(MemoryBlockSize);

auto pNewMemoryBlock = malloc( NewMemoryBlockSize );

if(nullptr == pNewMemoryBlock)

{

assert(false);

return false;

}

// Compute the number of chunks in the new block

const auto NewChunkCount = NewMemoryBlockSize / mMemoryChunkSize;

auto pNewMemoryChunk = malloc(NewChunkCount * sizeof( MemoryChunk ) );

if(nullptr == pNewMemoryChunk)

{

// Do not leak the data block when the chunk headers cannot be allocated

free( pNewMemoryBlock );

assert(false);

return false;

}

mTotalMemoryPoolSize += NewMemoryBlockSize;

if( false == _CreateNewChunkLink(pNewMemoryBlock, pNewMemoryChunk, NewChunkCount))

{

assert(false);

return false;

}

return true;

}

u64 _AdjustMemoryBlockSize(u64 MemoryBlockSize)

{

const auto Mod = MemoryBlockSize % mMemoryChunkSize;

return 0 == Mod ? MemoryBlockSize : MemoryBlockSize + mMemoryChunkSize - Mod;

}

// Build the chunk list for a new block and append it to the pool

bool _CreateNewChunkLink(LPVOID pNewMemoryBlock, LPVOID pNewMemoryChunk, u64 NewChunkCount)

{

MemoryChunkPtr pNewChunk = nullptr;

u64 MemoryOffset = 0;

for(u64 i = 0; i < NewChunkCount; ++i)

{

pNewChunk = static_cast<MemoryChunkPtr>(pNewMemoryChunk) + i;

if(nullptr == mpBeginChunk)

{

mpBeginChunk =

mpNearFreeChunk =

mpEndChunk = pNewChunk;

}

else

{

mpEndChunk->mpNext = pNewChunk;

mpEndChunk = pNewChunk;

}

MemoryOffset = i * mMemoryChunkSize;

mpEndChunk->mpData = static_cast<LPBYTE>( pNewMemoryBlock ) + MemoryOffset;

// Bytes from this chunk to the end of its own block (not of the whole pool)

mpEndChunk->mDataSize = NewChunkCount * mMemoryChunkSize - MemoryOffset;

mpEndChunk->mUsedSize = 0;

mpEndChunk->mID = ++mChunkIDPool;

}

mpEndChunk->mpNext = nullptr;

// Remember both base addresses so they can be freed later

HeadAddress Address = { pNewMemoryChunk, pNewMemoryBlock };

mHeadAddresses.push_back( Address );

return true;

}

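bool _FindAllocateChunk(u64 MemoryBlockSize, MemoryChunkPtr &pBeginChunk, MemoryChunkPtr &pEndChunk)

{

// MemoryBlockSize is already a multiple of the chunk size

const u64 NeedChunks = MemoryBlockSize / mMemoryChunkSize;

for( auto pTemp = mpNearFreeChunk; pTemp != nullptr; pTemp = pTemp->mpNext )

{

// Skip chunks that are in use or too close to the end of their block

if( pTemp->mUsedSize > 0 || pTemp->mDataSize < MemoryBlockSize )

{

continue;

}

// Every chunk of the run must be free and contiguous in memory

auto pRunEnd = pTemp;

u64 FreeChunks = 0;

while( FreeChunks < NeedChunks && pRunEnd != nullptr && 0 == pRunEnd->mUsedSize

&& pRunEnd->mpData == static_cast<LPBYTE>( pTemp->mpData ) + FreeChunks * mMemoryChunkSize )

{

pRunEnd = pRunEnd->mpNext;

++FreeChunks;

}

if( FreeChunks == NeedChunks )

{

pBeginChunk = pTemp;

pEndChunk = pRunEnd; // exclusive end, may be nullptr at the tail of the list

return true;

}

}

return false;

}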

void _AdjustChunkData( MemoryChunkPtr pBeginChunk, MemoryChunkPtr pEndChunk, u64 MemoryBlockSize )

{

u64 Counter = 0;

for( auto i = pBeginChunk; i != pEndChunk; i = i->mpNext )

{

Counter += mMemoryChunkSize;

if(Counter > MemoryBlockSize)

{

// Last, partially used chunk: the bytes left after all preceding full chunks

i->mUsedSize = MemoryBlockSize - ( Counter - mMemoryChunkSize );

}

else

{

i->mUsedSize = mMemoryChunkSize;

}

}

}

void _Destory()

{

for(auto i = mHeadAddresses.begin(); i != mHeadAddresses.end(); ++i)

{

free(i->m1);

free(i->m2);

}

mHeadAddresses.clear();

mAllocBlocks.clear();

}

private:

MemoryChunkPtr mpBeginChunk;

MemoryChunkPtr mpNearFreeChunk;

MemoryChunkPtr mpEndChunk;

u64 mChunkIDPool;

u64 mTotalMemoryPoolSize;

u64 mUsedMemorySize;

u64 mFreeMemorySize;

u64 mMemoryChunkSize;

struct HeadAddress

{

LPVOID m1;

LPVOID m2;

};

std::vector< HeadAddress > mHeadAddresses;

struct AllocChunk

{

MemoryChunkPtr mBeginChunk;

MemoryChunkPtr mEndChunk;

u64 mMemoryBlockSize;

};

std::map<LPVOID, AllocChunk> mAllocBlocks;

LockObject mLock;

};

int main()

{

srand( static_cast<unsigned int>( time( nullptr ) ) );

// Allocate 400 MB of memory, split into chunks of 128 bytes each

MemoryPool *pPool = new MemoryPool( 1024 * 1024* 400, 128 );

u32 AllocateSize = 0;

u32 Tick = 0;

u32 Cost = 0;

for(u32 i = 0; i < 20; ++i)

{

Tick = GetTickCount();

for( u32 j = 0; j < 25000; ++j )

{

AllocateSize = 64 + rand() % 1024;

auto p = (char*)pPool->GetMemory( AllocateSize );

pPool->ReleaseMemory(p);

}

Cost = GetTickCount() - Tick;

printf( "test of %u times pool cost %ums.\n", i + 1, Cost );

Tick = GetTickCount();

for( u32 j = 0; j < 25000; ++j )

{

AllocateSize = 64 + rand() % 1024;

auto p = new char[ AllocateSize ];

delete[] p;

}

Cost = GetTickCount() - Tick;

printf( "test of %u times new and delete cost %ums.\n", i + 1, Cost );

}

delete pPool;

system( "pause" );

return 0;

}

3. Output (the pool's performance can certainly be optimized further and the code may contain bugs, but it shows the core idea)
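The listing above depends on `<Windows.h>` for its locking. To try the core idea on other platforms, here is a minimal sketch built on `std::mutex`. It is not the author's implementation: `TinyPool` and `StressPool` are hypothetical names, the pool has a fixed capacity and never grows, it hands out raw bytes only, and `chunkSize` is assumed to be greater than zero.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical minimal pool: fixed-size chunks, first-fit search, one mutex.
class TinyPool {
public:
    TinyPool(std::size_t chunkCount, std::size_t chunkSize)
        : mChunkSize(chunkSize), mStorage(chunkCount * chunkSize), mUsed(chunkCount, 0) {}

    TinyPool(const TinyPool&) = delete;
    TinyPool& operator=(const TinyPool&) = delete;

    // Returns nullptr when no run of free chunks is large enough.
    void* Get(std::size_t size) {
        if (size == 0) return nullptr;
        const std::size_t need = (size + mChunkSize - 1) / mChunkSize;
        std::lock_guard<std::mutex> guard(mMutex);
        std::size_t run = 0;  // length of the current run of free chunks
        for (std::size_t i = 0; i < mUsed.size(); ++i) {
            run = mUsed[i] ? 0 : run + 1;
            if (run == need) {
                const std::size_t first = i + 1 - need;
                for (std::size_t k = first; k <= i; ++k) mUsed[k] = 1;
                void* p = mStorage.data() + first * mChunkSize;
                mAllocs[p] = need;  // remember how many chunks to free later
                return p;
            }
        }
        return nullptr;
    }

    // Returns false if p was not handed out by this pool.
    bool Release(void* p) {
        std::lock_guard<std::mutex> guard(mMutex);
        auto it = mAllocs.find(p);
        if (it == mAllocs.end()) return false;
        const std::size_t first = static_cast<std::size_t>(
            static_cast<unsigned char*>(p) - mStorage.data()) / mChunkSize;
        for (std::size_t k = 0; k < it->second; ++k) mUsed[first + k] = 0;
        mAllocs.erase(it);
        return true;
    }

    std::size_t LiveAllocations() {
        std::lock_guard<std::mutex> guard(mMutex);
        return mAllocs.size();
    }

private:
    std::size_t mChunkSize;
    std::vector<unsigned char> mStorage;   // the single backing block
    std::vector<char> mUsed;               // one flag per chunk
    std::map<void*, std::size_t> mAllocs;  // address -> chunk count
    std::mutex mMutex;
};

// Allocates and releases from several threads; returns the number of failed calls.
inline int StressPool(TinyPool& pool, int threads, int iterations) {
    std::vector<int> failures(threads, 0);
    std::vector<std::thread> workers;
    for (int t = 0; t < threads; ++t) {
        workers.emplace_back([&pool, &failures, t, iterations] {
            for (int i = 0; i < iterations; ++i) {
                void* p = pool.Get(64 + (i % 5) * 100);
                if (p == nullptr || !pool.Release(p)) ++failures[t];
            }
        });
    }
    for (auto& w : workers) w.join();
    int total = 0;
    for (int f : failures) total += f;
    return total;
}
```

A caller might create `TinyPool pool(64, 128);` and run `StressPool(pool, 4, 1000)`. The single mutex keeps the sketch simple, at the cost of serializing every allocation and release across threads.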
