OpenStack Networking – FlatManager and FlatDHCPManager

2016-01-30 21:28


The best original article analyzing FlatDHCPManager; I plan to translate it when I get the chance.

===========================

Over time, networking in OpenStack has evolved from a simple, barely usable model to one that aims to support full customer isolation. To address different user needs, OpenStack comes with a handful of “network managers”. A network manager defines the network topology for a given OpenStack deployment. As of the current stable “Essex” release of OpenStack, one can choose from three types of network managers: FlatManager, FlatDHCPManager and VlanManager. I’ll discuss the first two here.

FlatManager and FlatDHCPManager have a lot in common. Both rely on bridged networking with a single bridge device. Let’s consider here the example of a multi-host network; we’ll look at a single-host use case in a subsequent post.

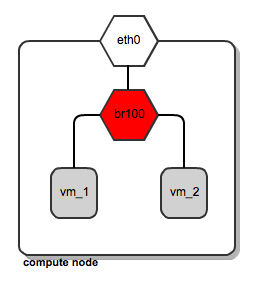

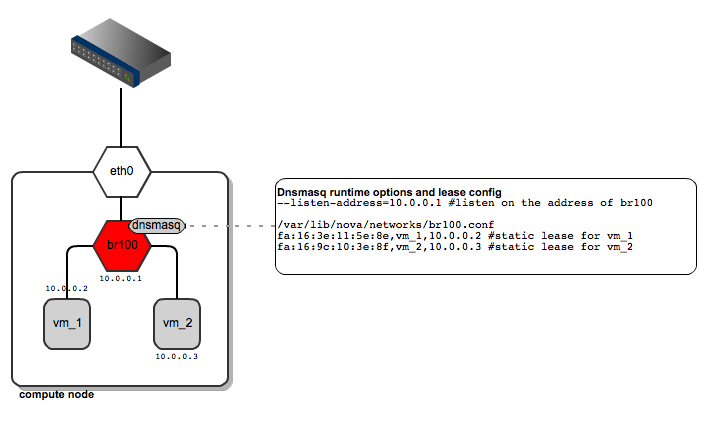

On each compute node a single virtual bridge is created; its name is specified in the Nova configuration file with this option:

flat_network_bridge=br100

All the VMs spawned by OpenStack get attached to this dedicated bridge.

Network bridging on OpenStack compute node
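The per-node layout can be sketched in Python. This minimal sketch only builds and prints the `brctl`/`ip` commands that would create one bridge, attach a single physical uplink and plug in each local VM's tap device; nothing is executed. The uplink name `eth1` and the tap names `vnet0`/`vnet1` are hypothetical, and Nova's own driver code differs in detail.

```python
from typing import List


def bridge_setup_commands(bridge: str, uplink: str, vm_taps: List[str]) -> List[List[str]]:
    """Return argv lists that would build the single per-node bridge.

    Nothing is executed; the list only illustrates the topology:
    one bridge, one physical uplink, every local VM plugged into it.
    """
    cmds = [
        ["brctl", "addbr", bridge],          # create the bridge named by flat_network_bridge
        ["brctl", "addif", bridge, uplink],  # attach the single physical interface
        ["ip", "link", "set", bridge, "up"],
    ]
    for tap in vm_taps:
        cmds.append(["brctl", "addif", bridge, tap])  # each local VM joins the same bridge
    return cmds


if __name__ == "__main__":
    # Hypothetical interface names; br100 is the flat_network_bridge value shown above.
    for argv in bridge_setup_commands("br100", "eth1", ["vnet0", "vnet1"]):
        print(" ".join(argv))
```

Because every VM tap and the uplink sit on the same bridge, all of them end up in one L2 segment. This is the property the next paragraph discusses.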

This approach (a single bridge per compute node) suffers from a commonly known limitation of bridged networking: in this setup the Linux bridge is attached to only a single physical interface on the host machine (we could get around this with VLAN interfaces, but FlatDHCPManager and FlatManager do not support that). Because of this, there is no L2 isolation between hosts: they all share the same ARP broadcast domain.

The idea behind FlatManager and FlatDHCPManager is to have one “flat” IP address pool defined throughout the cluster. This address space is shared among all user instances, regardless of which tenant they belong to. Each tenant is free to grab whatever address is available in the pool.
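A toy Python model of such a shared pool makes the point concrete. It uses a hypothetical `FlatPool` class and a hypothetical 10.0.0.0/24 range. Addresses are handed out first-come, first-served, and the tenant never influences which address is chosen. Nova keeps this bookkeeping in its database; the class below is only an illustration.

```python
import ipaddress
from typing import Dict, Optional


class FlatPool:
    """Toy model of one cluster-wide "flat" address pool.

    The tenant is recorded for bookkeeping only; it has no influence on
    which address is handed out, so tenants' addresses interleave freely.
    """

    def __init__(self, cidr: str) -> None:
        self.network = ipaddress.ip_network(cidr)
        self.allocated: Dict[str, str] = {}  # address -> tenant

    def allocate(self, tenant: str) -> Optional[str]:
        for host in self.network.hosts():
            addr = str(host)
            if addr not in self.allocated:
                self.allocated[addr] = tenant
                return addr
        return None  # pool exhausted


if __name__ == "__main__":
    pool = FlatPool("10.0.0.0/24")
    print(pool.allocate("tenant-a"))  # 10.0.0.1
    print(pool.allocate("tenant-b"))  # 10.0.0.2
    print(pool.allocate("tenant-a"))  # 10.0.0.3
```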

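FlatManager

FlatManager provides the most primitive set of operations. Its role boils down to attaching the instance to the bridge on the compute node. By default, it does no IP configuration of the instance; this task is left to the system administrator and can be handled by an external DHCP server or other means.

FlatManager network topology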

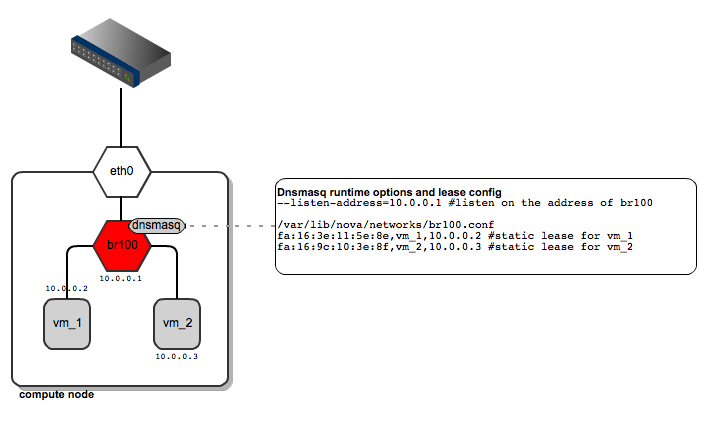

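FlatDHCPManager

FlatDHCPManager plugs a given instance into the bridge and, on top of that, runs a DHCP server from which the instance obtains its address at boot. On each compute node: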

- the network bridge is given an address from the “flat” IP pool
- a dnsmasq DHCP server process is spawned and listens on the bridge interface IP
- the bridge acts as the default gateway for all the instances running on the given compute node
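Taken together, these three points roughly correspond to a dnsmasq invocation like the one this Python sketch assembles. The flags are standard dnsmasq options, but the exact set and values Nova passes are not reproduced here. The bridge address, range start and hosts-file path are hypothetical.

```python
from typing import List


def dnsmasq_args(bridge: str, bridge_ip: str, dhcp_start: str, hosts_file: str) -> List[str]:
    """Build a plausible dnsmasq argv for one FlatDHCPManager compute node.

    Only the intent is modelled: serve DHCP on the local bridge, answer only
    statically listed instances, and hand out the bridge address as router.
    """
    return [
        "dnsmasq",
        "--strict-order",
        "--bind-interfaces",
        f"--interface={bridge}",                   # listen on the flat bridge only
        f"--listen-address={bridge_ip}",           # bridge IP taken from the flat pool
        "--except-interface=lo",
        f"--dhcp-range={dhcp_start},static,120s",  # no dynamic pool: known hosts only
        f"--dhcp-hostsfile={hosts_file}",          # per-node MAC/hostname/IP entries
        f"--dhcp-option=3,{bridge_ip}",            # DHCP option 3 (router) = this node's bridge
    ]


if __name__ == "__main__":
    # Hypothetical values for one compute node.
    args = dnsmasq_args("br100", "10.0.0.1", "10.0.0.2", "/tmp/nova-br100.conf")
    print(" ".join(args))
```

Each compute node runs its own copy with its own bridge address. That is why the router option, and therefore the instance's default gateway, differs from node to node.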

FlatDHCPManager – network topology

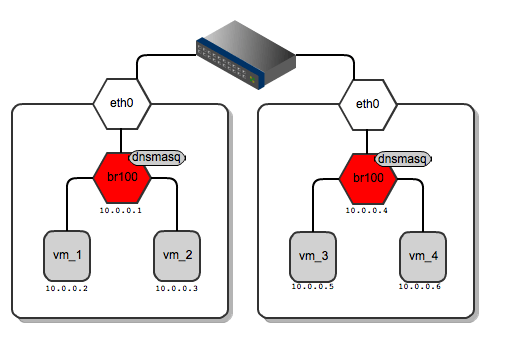

As for dnsmasq, FlatDHCPManager creates a static lease file per compute node to guarantee that an instance keeps the same IP address over time. The lease file is built from instance data in the Nova database, namely the MAC address, IP address and hostname. The dnsmasq server is supposed to hand out addresses only to instances running locally on the compute node; to achieve this, the instance records written to the DHCP lease file are filtered by the ‘host’ field of the ‘instances’ table. In addition, the default gateway option in dnsmasq is set to the bridge’s IP address. As the diagram below shows, an instance is therefore given a different default gateway depending on which compute node it lands on.

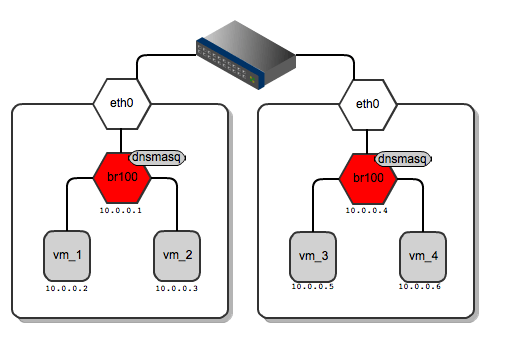

Network gateways for instances running on different compute nodes
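The per-host filtering of lease-file entries can be sketched in Python. This assumes a hypothetical `Instance` record standing in for rows of the `instances` table, plus hypothetical node names `compute-1`/`compute-2`. Each line follows dnsmasq's `dhcp-host` style of MAC, hostname and IP; the exact file Nova writes may differ.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Instance:
    hostname: str
    mac: str
    address: str
    host: str  # compute node the instance is scheduled on (the 'host' column)


def dhcp_host_entries(instances: Iterable[Instance], this_host: str) -> List[str]:
    """Render static "MAC,hostname,IP" entries for instances on this_host only."""
    return [
        f"{inst.mac},{inst.hostname},{inst.address}"
        for inst in instances
        if inst.host == this_host  # skip instances living on other compute nodes
    ]


if __name__ == "__main__":
    instances = [
        Instance("vm-1", "02:16:3e:00:00:01", "10.0.0.2", "compute-1"),
        Instance("vm-3", "02:16:3e:00:00:03", "10.0.0.5", "compute-2"),
    ]
    print(dhcp_host_entries(instances, "compute-1"))
    # ['02:16:3e:00:00:01,vm-1,10.0.0.2']
```

The entries are regenerated from the database rather than taken from dynamic leases. As a result, the same MAC always maps to the same IP, which is what keeps an instance's address stable.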

Below are the routing tables from vm_1 and vm_3; each of them has a different default gateway:

    root@vm_1:~# route -n
    Kernel IP routing table
    Destination     Gateway         Genmask         Flags Metric Ref    Use Iface
    0.0.0.0         10.0.0.1        0.0.0.0         UG    0      0        0 eth0

    root@vm_3:~# route -n
    Kernel IP routing table
    Destination     Gateway         Genmask         Flags Metric Ref    Use Iface
    0.0.0.0         10.0.0.4        0.0.0.0         UG    0      0        0 eth0
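To confirm which gateway each instance actually received, the default route can be extracted from that output. Below is a minimal Python helper; the name `default_gateway` is hypothetical. It is checked against the two routing tables shown above.

```python
from typing import Optional


def default_gateway(route_output: str) -> Optional[str]:
    """Return the gateway of the default route (destination 0.0.0.0 with the
    G flag) from `route -n` output, or None if there is no such route."""
    for line in route_output.splitlines():
        fields = line.split()
        # Data rows have 8 columns: Destination Gateway Genmask Flags Metric Ref Use Iface
        if len(fields) == 8 and fields[0] == "0.0.0.0" and "G" in fields[3]:
            return fields[1]
    return None


VM1 = """Kernel IP routing table
Destination Gateway Genmask Flags Metric Ref Use Iface
0.0.0.0 10.0.0.1 0.0.0.0 UG 0 0 0 eth0
"""

VM3 = """Kernel IP routing table
Destination Gateway Genmask Flags Metric Ref Use Iface
0.0.0.0 10.0.0.4 0.0.0.0 UG 0 0 0 eth0
"""

if __name__ == "__main__":
    print(default_gateway(VM1))  # 10.0.0.1
    print(default_gateway(VM3))  # 10.0.0.4
```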

By default, all the VMs in the “flat” network can see one another regardless of which tenant they belong to.

One can enforce instance isolation by applying the following flag in nova.conf:

allow_same_net_traffic=False

This configures iptables policies to prevent any traffic between instances (even inside the same tenant) unless it is unblocked in a security group.
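The resulting decision can be modelled with a small Python function. This is a hypothetical simplification: the name `is_traffic_allowed` and the rule format are made up. Real security-group rules also match protocol and port, and the actual enforcement happens in the iptables rules Nova generates, not in Python.

```python
import ipaddress
from typing import Dict, List


def is_traffic_allowed(src: str, dst: str, allow_same_net_traffic: bool,
                       ingress_rules: Dict[str, List[str]]) -> bool:
    """Decide whether instance src may reach instance dst in the flat network.

    ingress_rules maps a destination instance address to the source CIDRs its
    security group opens (simplified: no protocol/port matching).
    """
    if allow_same_net_traffic:
        return True  # default: every instance in the flat network sees every other
    src_ip = ipaddress.ip_address(src)
    # With the flag off, only traffic explicitly opened by a security group passes.
    return any(src_ip in ipaddress.ip_network(cidr) for cidr in ingress_rules.get(dst, []))


if __name__ == "__main__":
    rules = {"10.0.0.3": ["10.0.0.2/32"]}  # hypothetical rule: 10.0.0.3 accepts 10.0.0.2
    print(is_traffic_allowed("10.0.0.2", "10.0.0.3", False, rules))  # True
    print(is_traffic_allowed("10.0.0.5", "10.0.0.3", False, rules))  # False
```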

From a practical standpoint, “flat” managers seem usable for homogeneous, relatively small, internal corporate clouds where there are no tenants at all, or only a very limited number of them. Typically, the usage scenario will be a dynamically scaled web server farm or an HPC cluster. For this purpose it is usually sufficient to have a single IP address space where IP address management is offloaded to some central DHCP server or is handled in a simple way by OpenStack’s dnsmasq. On the other hand, flat networking can struggle with scalability, as all the instances share the same L2 broadcast domain.

These issues (scalability and multitenancy) are in some ways addressed by VlanManager, which will be covered in an upcoming blog post.

