Running Jobs from Eclipse on Windows Against an Ubuntu Hadoop Cluster Built with VirtualBox

2015-10-19 00:00


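Summary: Many people install Eclipse directly on the master node and write their programs there, but that is the wrong approach. A service is a service, and it is meant to be called remotely. This article runs Eclipse on Windows against the remote Hadoop service and solves a number of problems along the way. WordCount is used as the example throughout. If anything is unclear, please read my previous article, "Setting Up a Fully Distributed Hadoop 2.7.1 Cluster on Ubuntu 15.04 with VirtualBox on Windows" (《在window上使用VirtualBox搭建Ubuntu15.04全分布Hadoop2.7.1集群》).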

在windows端使用eclipse在ubuntu集群中运行程序

将ubuntu的master节点的hadoop拷贝到windows某个路径下,例如:E:\Spring\Hadoop\hadoop\hadoop-2.7.1

Eclipse安装对应版本的hadoop插件,并且,在windows-preference-mapreduce中设置hadoop目录的路径

第一种:空指针异常

Exception in thread "main" java.lang.NullPointerException

at java.lang.ProcessBuilder.start(ProcessBuilder.java:441)

at org.apache.hadoop.util.Shell.runCommand(Shell.java:445)

at org.apache.hadoop.util.Shell.run(Shell.java:418)

at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:650)

at org.apache.hadoop.util.Shell.execCommand(Shell.java:739)

at org.apache.hadoop.util.Shell.execCommand(Shell.java:722)

at org.apache.hadoop.fs.RawLocalFileSystem.setPermission(RawLocalFileSystem.java:633)

at org.apache.hadoop.fs.RawLocalFileSystem.mkdirs(RawLocalFileSystem.java:421)

at org.apache.hadoop.fs.FilterFileSystem.mkdirs(FilterFileSystem.java:281)

at org.apache.hadoop.mapreduce.JobSubmissionFiles.getStagingDir(JobSubmissionFiles.java:125)

at org.apache.hadoop.mapreduce.JobSubmitter.submitJobInternal(JobSubmitter.java:348)

at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1285)

at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1282)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:396)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1548)

at org.apache.hadoop.mapreduce.Job.submit(Job.java:1282)

at org.apache.hadoop.mapreduce.Job.waitForCompletion(Job.java:1303)

at WordCount.main(WordCount.java:89)

来源: <http://bbs.csdn.net/topics/390876548>

原因:要读写windows平台的文件,没有权限,所以,在hadoop\bin中以及System32放置对应版本的winutils.exe以及hadoop.dll,加入环境变量HADOOP_HOME,值为:E:\Spring\Hadoop\hadoop\hadoop-2.7.1,在Path中加入%HADOOP_HOME%\bin,重启eclipse(否则不会生效),运行不会报这个异常了

第二种:Permission denied

Caused by: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.AccessControlException): Permission denied: user=cuiguangfan, access=WRITE, inode="/tmp/hadoop-yarn/staging/cuiguangfan/.staging":linux1:supergroup:drwxr-xr-x

参考以下链接(启发):

http://www.huqiwen.com/2013/07/18/hdfs-permission-denied/

所以,在程序运行时设置System.setProperty("HADOOP_USER_NAME", "linux1");

注意,在windows中设置系统环境变量不起作用

第三种:no job control

2014-05-28 17:32:19,761 WARN org.apache.hadoop.yarn.server.nodemanager.DefaultContainerExecutor: Exception from container-launch with container ID: container_1401177251807_0034_01_000001 and exit code: 1

org.apache.hadoop.util.Shell$ExitCodeException: /bin/bash: line 0: fg: no job control

at org.apache.hadoop.util.Shell.runCommand(Shell.java:505)

at org.apache.hadoop.util.Shell.run(Shell.java:418)

at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:650)

at org.apache.hadoop.yarn.server.nodemanager.DefaultContainerExecutor.launchContainer(DefaultContainerExecutor.java:195)

at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:300)

at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:81)

at java.util.concurrent.FutureTask.run(FutureTask.java:262)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1145)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:615)

at java.lang.Thread.run(Thread.java:744)

原因:hadoop的系统环境变量没有正确设置导致的

解决:重写YARNRunner

参考链接:http://blog.csdn.net/fansy1990/article/details/27526167

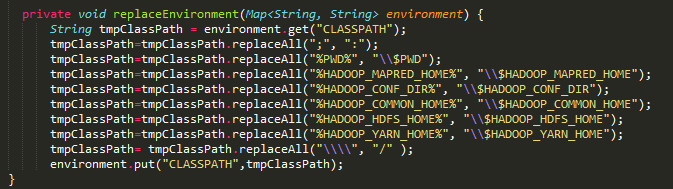

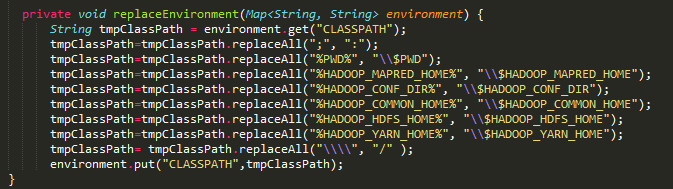

即将所有%XX%替换成$XX,将\\替换成/,我这里处理的更完整一点,截图:

第四种:Invalid host name: local host is: (unknown); destination host is

原因:本应该运行在远端master上的端口没有设置完全

解决:

将hdfs-site.xml、mapred-site.xml、yarn-site.xml中的属性在程序中显式设置为master节点(IP设置)

截图:

第五种:在192.168.99.101:8088(查看所有Application)中,点开某个datanode节点,无法找到

原因:因为点开的是linuxX-clound,系统没有找到linuxX-clound对应的IP地址,这里,设置windows的hosts文件,将在master或者slaves中设置的hosts拷贝过来

即:

,由此,修改完hosts后,我们可以将conf中的设置远端地址改为linux0-cloud(master节点)

补充:在解决了第三种错误后,这个错误应该消失,如果没消失,在Mapreduce-site.xml和Yarn-site.xml都加入以下内容:

第六种:java.lang.RuntimeException:java.lang.ClassNotFoundException

原因:mapreduce程序在hadoop中的运行机理:mapreduce框架在运行Job时,为了使得各个从节点上能执行task任务(即map和reduce函数),会在作业提交时将运行作业所需的资源,包括作业jar文件、配置文件和计算所得的输入划分,复制到HDFS上一个以作业ID命名的目录中,并且作业jar的副本较多,以保证tasktracker运行task时可以访问副本,执行程序。程序不是以jar的形式运行的,所以不会上传jar到HDFS中,以致节点外的所有节点在执行task任务时上不能找到map和reduce类,所以在运行task时会出现错误。

解决:临时生成jar包,设置路径

参考链接:http://m.blog.csdn.net/blog/le119126/40983213

将以上bug解决后,运行成功!

我在git osc上传了自己的wordcount代码,大家可以看看https://git.oschina.net/xingkong/HadoopExplorer

Running programs on the Ubuntu cluster from Eclipse on Windows

Copy the Hadoop directory from the Ubuntu master node to a path on Windows, for example E:\Spring\Hadoop\hadoop\hadoop-2.7.1.

Install the Eclipse Hadoop plugin that matches your Hadoop version. Then set the path of that Hadoop directory under Window > Preferences > Hadoop Map/Reduce.

Problem 1: NullPointerException

    Exception in thread "main" java.lang.NullPointerException
        at java.lang.ProcessBuilder.start(ProcessBuilder.java:441)
        at org.apache.hadoop.util.Shell.runCommand(Shell.java:445)
        at org.apache.hadoop.util.Shell.run(Shell.java:418)
        at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:650)
        at org.apache.hadoop.util.Shell.execCommand(Shell.java:739)
        at org.apache.hadoop.util.Shell.execCommand(Shell.java:722)
        at org.apache.hadoop.fs.RawLocalFileSystem.setPermission(RawLocalFileSystem.java:633)
        at org.apache.hadoop.fs.RawLocalFileSystem.mkdirs(RawLocalFileSystem.java:421)
        at org.apache.hadoop.fs.FilterFileSystem.mkdirs(FilterFileSystem.java:281)
        at org.apache.hadoop.mapreduce.JobSubmissionFiles.getStagingDir(JobSubmissionFiles.java:125)
        at org.apache.hadoop.mapreduce.JobSubmitter.submitJobInternal(JobSubmitter.java:348)
        at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1285)
        at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1282)
        at java.security.AccessController.doPrivileged(Native Method)
        at javax.security.auth.Subject.doAs(Subject.java:396)
        at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1548)
        at org.apache.hadoop.mapreduce.Job.submit(Job.java:1282)
        at org.apache.hadoop.mapreduce.Job.waitForCompletion(Job.java:1303)
        at WordCount.main(WordCount.java:89)

Source: <http://bbs.csdn.net/topics/390876548>

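Cause: submitting the job sets permissions on files in the local Windows file system (note RawLocalFileSystem.setPermission in the trace). On Windows, Hadoop does this by running winutils.exe. If it cannot locate that program, the command it builds contains null, and ProcessBuilder.start throws the NullPointerException.

Fix:

- Put the winutils.exe and hadoop.dll built for your Hadoop version into hadoop\bin and into System32.
- Add an environment variable HADOOP_HOME with the value E:\Spring\Hadoop\hadoop\hadoop-2.7.1, and append %HADOOP_HOME%\bin to Path.
- Restart Eclipse, otherwise the change does not take effect.

After that, the exception no longer appears.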
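Before re-running the job, it can help to confirm that the JVM started by Eclipse actually sees these settings. The sketch below is a standalone diagnostic, not part of Hadoop, and the class name WinutilsCheck is hypothetical. It assumes, as I understand Hadoop 2.x's Shell class to do, that the hadoop.home.dir system property is consulted before the HADOOP_HOME environment variable.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

class WinutilsCheck {
    /** Where winutils.exe is expected for a given Hadoop home: <home>\bin\winutils.exe. */
    static Path expectedWinutils(String hadoopHome) {
        return Paths.get(hadoopHome, "bin", "winutils.exe");
    }

    public static void main(String[] args) {
        // Same lookup order as assumed above: system property first, then environment variable.
        String home = System.getProperty("hadoop.home.dir");
        if (home == null) {
            home = System.getenv("HADOOP_HOME");
        }
        if (home == null) {
            System.out.println("Neither hadoop.home.dir nor HADOOP_HOME is visible; restart Eclipse after setting HADOOP_HOME.");
            return;
        }
        Path winutils = expectedWinutils(home);
        Path dll = Paths.get(home, "bin", "hadoop.dll");
        System.out.println(winutils + (Files.isRegularFile(winutils) ? " found" : " MISSING"));
        System.out.println(dll + (Files.isRegularFile(dll) ? " found" : " MISSING"));
    }
}
```

Run it from the same Eclipse workspace as WordCount. If it reports that nothing is visible, Eclipse was most likely started before HADOOP_HOME was set.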

Problem 2: Permission denied

    Caused by: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.AccessControlException): Permission denied: user=cuiguangfan, access=WRITE, inode="/tmp/hadoop-yarn/staging/cuiguangfan/.staging":linux1:supergroup:drwxr-xr-x

The following link pointed me in the right direction:

http://www.huqiwen.com/2013/07/18/hdfs-permission-denied/

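The client identifies itself to HDFS by the Windows login name (cuiguangfan), but the staging directory belongs to the cluster user linux1. So set the user in the program at the start of main, before any Hadoop call: System.setProperty("HADOOP_USER_NAME", "linux1");

Note: in my setup, setting HADOOP_USER_NAME as a Windows system environment variable had no effect.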
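Why the write is refused can be reproduced with a simplified model of the POSIX-style check HDFS applies:

- the owner bits apply if the caller is the owner;
- otherwise the group bits apply if the caller belongs to the group;
- otherwise the "other" bits apply.

This is a hypothetical standalone sketch; the class HdfsPermissionSketch is made up for illustration. It deliberately ignores the superuser, the sticky bit and ACLs. The values come from the error message above.

```java
import java.util.Collections;
import java.util.Set;

class HdfsPermissionSketch {
    /**
     * Simplified owner/group/other check on a mode string such as "drwxr-xr-x".
     * Superuser, sticky bit and ACL handling are intentionally omitted.
     */
    static boolean canWrite(String user, Set<String> userGroups,
                            String owner, String group, String mode) {
        int offset;
        if (user.equals(owner)) {
            offset = 1;          // characters 1-3: owner permissions
        } else if (userGroups.contains(group)) {
            offset = 4;          // characters 4-6: group permissions
        } else {
            offset = 7;          // characters 7-9: other permissions
        }
        return mode.charAt(offset + 1) == 'w';  // second character of the triplet is 'w'
    }

    public static void main(String[] args) {
        String mode = "drwxr-xr-x";  // the .staging directory from the error message
        Set<String> noGroups = Collections.emptySet();
        // Default: the Windows login name is sent as the HDFS user.
        System.out.println(canWrite("cuiguangfan", noGroups, "linux1", "supergroup", mode));
        // With HADOOP_USER_NAME=linux1 the client acts as the directory owner.
        System.out.println(canWrite("linux1", noGroups, "linux1", "supergroup", mode));
    }
}
```

The first call prints false and the second prints true. Under its Windows name the client is either in the group class or the other class, and both are r-x, so it cannot create .staging. As linux1 it is the owner, with rwx.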

Problem 3: no job control

    2014-05-28 17:32:19,761 WARN org.apache.hadoop.yarn.server.nodemanager.DefaultContainerExecutor: Exception from container-launch with container ID: container_1401177251807_0034_01_000001 and exit code: 1
    org.apache.hadoop.util.Shell$ExitCodeException: /bin/bash: line 0: fg: no job control
        at org.apache.hadoop.util.Shell.runCommand(Shell.java:505)
        at org.apache.hadoop.util.Shell.run(Shell.java:418)
        at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:650)
        at org.apache.hadoop.yarn.server.nodemanager.DefaultContainerExecutor.launchContainer(DefaultContainerExecutor.java:195)
        at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:300)
        at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:81)
        at java.util.concurrent.FutureTask.run(FutureTask.java:262)
        at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1145)
        at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:615)
        at java.lang.Thread.run(Thread.java:744)

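Cause: the environment variables in the container launch command are not set up correctly for Linux. Because the job is submitted from Windows, the client writes that command in Windows syntax (%VAR% references and backslash paths). When bash on the Linux NodeManager runs it, a word starting with % is treated as a job-control command, hence "fg: no job control".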

Fix: override YARNRunner.

Reference: http://blog.csdn.net/fansy1990/article/details/27526167

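That is, replace every %XX% with $XX, and every backslash (\\ in Java source) with /. My own version handles this somewhat more thoroughly.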
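The two substitution rules can be sketched as a standalone Java method. This covers only the string conversion and assumes the launch command is available as a single string. The class name UnixLaunchCommand is hypothetical, and where exactly to call it inside a copied YARNRunner is not shown here.

```java
import java.util.regex.Pattern;

class UnixLaunchCommand {
    // A Windows-style variable reference such as %JAVA_HOME%
    private static final Pattern WINDOWS_VAR = Pattern.compile("%([A-Za-z_][A-Za-z0-9_]*)%");

    /** Applies the two rules from the text: %XX% becomes $XX, and each backslash becomes '/'. */
    static String toUnix(String command) {
        // "\\$" in the replacement is a literal '$'; "$1" is the captured variable name.
        String withUnixVars = WINDOWS_VAR.matcher(command).replaceAll("\\$$1");
        return withUnixVars.replace('\\', '/');
    }

    public static void main(String[] args) {
        System.out.println(toUnix("%JAVA_HOME%\\bin\\java -Dlog.dir=%LOG_DIRS%"));
    }
}
```

This prints $JAVA_HOME/bin/java -Dlog.dir=$LOG_DIRS. As far as I know, Hadoop 2.4 and later (including 2.7.1) also provide a client-side switch for exactly this case, the mapreduce.app-submission.cross-platform property. Calling conf.setBoolean("mapreduce.app-submission.cross-platform", true) before submitting may make the YARNRunner rewrite unnecessary. I have not verified that against this setup.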

Problem 4: Invalid host name: local host is: (unknown); destination host is

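Cause: not all of the service addresses (host and port) that should point at the remote master were configured on the client side.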

Fix: in the program, explicitly set the properties from hdfs-site.xml, mapred-site.xml and yarn-site.xml so that they point to the master node, using its IP address.
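A sketch of which client-side properties are typically involved, collected in a plain map so it runs without Hadoop on the classpath. The class name RemoteClusterConf is hypothetical.

- The ports are the Hadoop 2.x defaults, except the 9000 in fs.defaultFS, which is an assumption; copy the real value from your core-site.xml, where fs.defaultFS normally lives.
- If your cluster overrides any of the other addresses, use your own values.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class RemoteClusterConf {
    /** Client-side settings that point every service address at the given master host. */
    static Map<String, String> forMaster(String master) {
        Map<String, String> p = new LinkedHashMap<>();
        p.put("fs.defaultFS", "hdfs://" + master + ":9000");                // port assumed; see core-site.xml
        p.put("mapreduce.framework.name", "yarn");                          // submit to YARN, not the local runner
        p.put("yarn.resourcemanager.hostname", master);
        p.put("yarn.resourcemanager.address", master + ":8032");            // default RM client port
        p.put("yarn.resourcemanager.scheduler.address", master + ":8030");  // default scheduler port
        p.put("mapreduce.jobhistory.address", master + ":10020");           // default JobHistory port
        return p;
    }

    public static void main(String[] args) {
        // Print the settings as conf.set(...) lines to paste into the driver program.
        for (Map.Entry<String, String> e : forMaster("192.168.99.101").entrySet()) {
            System.out.println("conf.set(\"" + e.getKey() + "\", \"" + e.getValue() + "\");");
        }
    }
}
```

In the real driver, each entry would go through org.apache.hadoop.conf.Configuration.set(String, String) before the Job is created.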


Problem 5: In the web UI at 192.168.99.101:8088 (which lists all applications), clicking a datanode's link leads to a page that cannot be found

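Cause: the link points to linuxX-cloud, and Windows has no IP address for linuxX-cloud. To fix this, edit the Windows hosts file (C:\Windows\System32\drivers\etc\hosts) and copy in the hosts entries already configured on the master and slaves.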

That is:

    192.168.99.101 linux0-cloud
    192.168.99.100 linux1-cloud
    192.168.99.102 linux2-cloud
    192.168.99.103 linux3-cloud

With the hosts file updated, the remote addresses in the program's conf can also use the hostname linux0-cloud (the master node) instead of the IP.
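To confirm that the Windows hosts file really contains every cluster name, a small standalone checker can parse it. This is a hypothetical sketch: the class name HostsCheck is made up, and the hostnames are the ones listed above. It reads the file as ISO-8859-1 so that stray non-ASCII bytes in comments never abort the read.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class HostsCheck {
    /** Parses hosts-file lines ("IP name [alias...]") into a name-to-IP map, skipping comments. */
    static Map<String, String> parse(List<String> lines) {
        Map<String, String> byName = new HashMap<>();
        for (String line : lines) {
            int hash = line.indexOf('#');
            String content = (hash >= 0 ? line.substring(0, hash) : line).trim();
            if (content.isEmpty()) {
                continue;  // blank line or comment-only line
            }
            String[] fields = content.split("\\s+");
            for (int i = 1; i < fields.length; i++) {
                byName.putIfAbsent(fields[i], fields[0]);  // keep the first mapping seen for a name
            }
        }
        return byName;
    }

    public static void main(String[] args) throws IOException {
        List<String> lines = Files.readAllLines(
                Paths.get("C:\\Windows\\System32\\drivers\\etc\\hosts"), StandardCharsets.ISO_8859_1);
        Map<String, String> byName = parse(lines);
        for (String name : new String[] {"linux0-cloud", "linux1-cloud", "linux2-cloud", "linux3-cloud"}) {
            System.out.println(name + " -> " + byName.getOrDefault(name, "MISSING"));
        }
    }
}
```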

Addendum: once Problem 3 is fixed, this error should go away. If it does not, add the following to both mapred-site.xml and yarn-site.xml:

    <property>
      <name>mapreduce.application.classpath</name>
      <value>
        /home/linux1/hadoop/hadoop-2.7.1/etc/hadoop,
        /home/linux1/hadoop/hadoop-2.7.1/share/hadoop/common/*,
        /home/linux1/hadoop/hadoop-2.7.1/share/hadoop/common/lib/*,
        /home/linux1/hadoop/hadoop-2.7.1/share/hadoop/hdfs/*,
        /home/linux1/hadoop/hadoop-2.7.1/share/hadoop/hdfs/lib/*,
        /home/linux1/hadoop/hadoop-2.7.1/share/hadoop/mapreduce/*,
        /home/linux1/hadoop/hadoop-2.7.1/share/hadoop/mapreduce/lib/*,
        /home/linux1/hadoop/hadoop-2.7.1/share/hadoop/yarn/*,
        /home/linux1/hadoop/hadoop-2.7.1/share/hadoop/yarn/lib/*
      </value>
    </property>

Problem 6: java.lang.RuntimeException: java.lang.ClassNotFoundException

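Cause: this follows from how a MapReduce program runs on Hadoop. The framework must let every worker node execute tasks, that is, the map and reduce functions. So at submission time it copies the resources the job needs into an HDFS directory named after the job ID: the job jar, the configuration files, and the computed input splits. The job jar is stored with extra replicas so that the nodes running tasks can always reach a copy (TaskTrackers in MRv1, NodeManagers under YARN). A program launched from Eclipse does not run from a jar, so no jar is uploaded to HDFS. The other nodes then cannot find the map and reduce classes, and the tasks fail.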

Fix: build a temporary jar on the fly and set the job's jar path to it.

Reference: http://m.blog.csdn.net/blog/le119126/40983213
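A minimal sketch of the "build a temporary jar" step, using only the JDK's java.util.jar API. It assumes the compiled classes sit in Eclipse's output folder (bin by default, or the first argument here). The class name TempJar is hypothetical, and the referenced post may do this differently. The final call is shown as a comment because it needs Hadoop on the classpath: org.apache.hadoop.mapreduce.Job has a setJar(String) method that points the job at a jar file.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.List;
import java.util.jar.Attributes;
import java.util.jar.JarEntry;
import java.util.jar.JarOutputStream;
import java.util.jar.Manifest;
import java.util.stream.Collectors;
import java.util.stream.Stream;

class TempJar {
    /** Packs every regular file under classesDir into a new temporary jar and returns its path. */
    static Path createJar(Path classesDir) throws IOException {
        Path jar = Files.createTempFile("job-", ".jar");
        jar.toFile().deleteOnExit();  // clean up once the submitting JVM exits
        Manifest manifest = new Manifest();
        manifest.getMainAttributes().put(Attributes.Name.MANIFEST_VERSION, "1.0");
        List<Path> files;
        try (Stream<Path> walk = Files.walk(classesDir)) {
            files = walk.filter(Files::isRegularFile).collect(Collectors.toList());
        }
        try (OutputStream out = Files.newOutputStream(jar);
             JarOutputStream jos = new JarOutputStream(out, manifest)) {
            for (Path file : files) {
                // Jar entry names always use '/', even when built on Windows.
                String name = classesDir.relativize(file).toString().replace('\\', '/');
                jos.putNextEntry(new JarEntry(name));
                Files.copy(file, jos);
                jos.closeEntry();
            }
        }
        return jar;
    }

    public static void main(String[] args) throws IOException {
        Path jar = createJar(Paths.get(args.length > 0 ? args[0] : "bin"));
        System.out.println("Created " + jar);
        // With Hadoop on the classpath, before job.waitForCompletion(true):
        // job.setJar(jar.toString());
    }
}
```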

With all of the above fixed, the job runs successfully!

I have uploaded my WordCount code to Git@OSC; feel free to take a look: https://git.oschina.net/xingkong/HadoopExplorer
